The era of AI robots powered by physical AI has arrived. Physical AI models understand their environments and autonomously complete complex tasks in the physical world. Many of the complex tasks—like dexterous manipulation and humanoid locomotion across rough terrain—are too difficult to program and rely on generative physical AI models trained using reinforcement learning (RL) in simulation.

With NVIDIA Isaac Sim, a reference application built on NVIDIA Omniverse, developers can design, simulate, test, and train AI-based robots and autonomous machines in a virtual environment that obeys the laws of physics.

NVIDIA Isaac Sim enables teams to generate synthetic data, train robot policies, and run a multitude of what-if scenarios to validate their entire robotic stack before deployment.

This post covers the latest Isaac Sim 4.0 release, which includes NVIDIA PhysX 5.4 and Isaac Lab. Isaac Sim 4.0, available now to download, is built on NVIDIA Omniverse Kit 106, which brings developers greater ease and control over workflows.

New NVIDIA Isaac Sim 4.0 features

Isaac Sim 4.0 delivers powerful new features and enhancements to supercharge your robotics workflows, including:

- Faster installation with PIP

- Improved usability with wizard-based import and system compatibility checker

- New assets, environments, robots, and sensors

- New PhysX features such as mimic joints, TGS solver, residual visualizations, and more

- Multi-GPU and multi-node capabilities for reinforcement learning

Read on to learn more about these new features, plus new PhysX and sensor features used during the simulation phase.

Get started faster with PIP install

You can now install Isaac Sim on local or remote systems using a Python package manager such as PIP. This greatly accelerates and simplifies the installation process using the same development environment developers are familiar with. Easily deploy Isaac Sim within cloud-based IDEs such as Jupyter.
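As a rough sketch of what this can look like from a script or notebook, the snippet below only builds a pip invocation for the current interpreter so it can be reviewed before running. The package name `isaacsim` and the extra index URL `https://pypi.nvidia.com` are assumptions here, and `build_pip_command` is a hypothetical helper; check the Isaac Sim installation documentation for the exact package names, versions, and supported Python version.

```python
import sys


def build_pip_command(package="isaacsim", extra_index_url="https://pypi.nvidia.com"):
    """Return a pip install command as an argument list (nothing is executed).

    Both default values are assumptions; confirm them in the install docs.
    """
    return [
        sys.executable, "-m", "pip", "install", package,
        "--extra-index-url", extra_index_url,
    ]


if __name__ == "__main__":
    # Print the command for review; it could then be run with
    # subprocess.run(command, check=True) inside a virtual environment.
    print(" ".join(build_pip_command()))
```

Building the command from `sys.executable` keeps the install tied to the interpreter that is actually running, which matters in notebook environments where several Python installations coexist.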

Additionally, you can now use the Compatibility Checker app to view system requirements and compatibility. This provides instant feedback before starting the installation process.

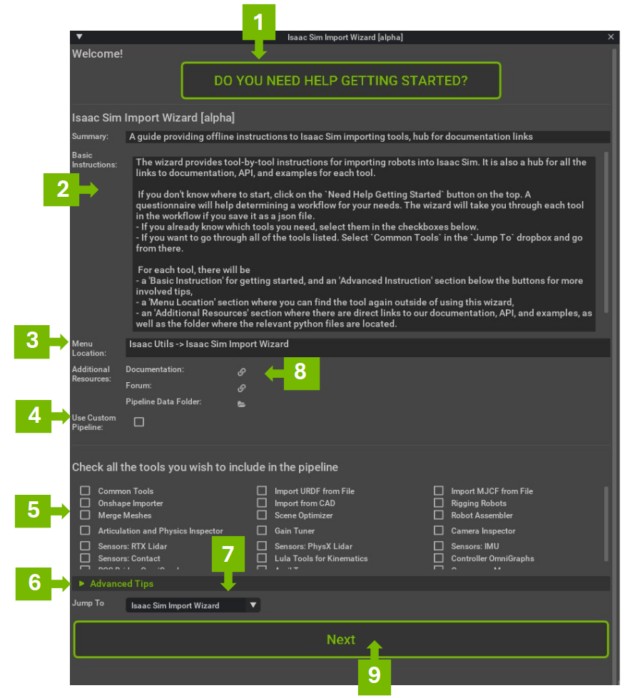

Improved usability with wizard-based import

Every simulation starts with building the virtual environment and the robots that occupy it. To speed up this process, Isaac Sim now includes a wizard that guides you through importing and tuning robots in the prescribed environment. The wizard also points to documentation for a host of other options, most commonly tools such as the CAD importer, sensors, robot rigging, and more.

Additional asset libraries

Use the following new assets for simulations:

- Prebuilt warehouse models

- Robot models

  - UR20 and UR30 robot arms from Universal Robots

  - Spot from Boston Dynamics

- Humanoids

  - 1X Neo

  - Unitree H1

  - Agility Digit

  - Fourier GR1

  - Sanctuary AI Phoenix

  - XiaoPeng PX5

- Sensors

  - Ouster

  - SICK

  - Velodyne

New PhysX 5.4 features for mimicking and inspecting joints

Once the scene is built and the robot is set up in the environment, you can use some of the new features from NVIDIA PhysX 5.4 on the robot model.

For example, the Mimic Joint feature enables you to model coupled joint positions in robots. You can now model four-bar linkages and the mechanically coupled elements of parallel grippers or robotic hands. The mimic joint can capture the URDF specification relationship:

jointPosition = multiplier * referenceJointPosition + offset.

The joint velocities are analogously constrained, and the interaction is two-way. Forces applied by the mimic joint to satisfy the constraint are fed back to the reference joint according to the multiplier (that is, gearing).
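The relationship above can be sketched in a few lines of plain Python. This is a toy model for intuition, not the PhysX API: the `MimicJoint` class and its method names are hypothetical, and the sign of the fed-back force is an assumption that follows from treating the relationship as a constraint on the two joint positions.

```python
from dataclasses import dataclass


@dataclass
class MimicJoint:
    """Toy model of a mimic joint (hypothetical, not the PhysX API)."""

    multiplier: float    # gearing between the reference and the mimic joint
    offset: float = 0.0  # constant position offset

    def target_position(self, reference_position: float) -> float:
        # jointPosition = multiplier * referenceJointPosition + offset
        return self.multiplier * reference_position + self.offset

    def target_velocity(self, reference_velocity: float) -> float:
        # Differentiating the position relationship drops the constant offset.
        return self.multiplier * reference_velocity

    def force_on_reference(self, constraint_force: float) -> float:
        # Two-way coupling: the force that holds the mimic joint on its
        # target is fed back to the reference joint, scaled by the gearing.
        # The negative sign is an assumed convention (equal and opposite
        # reaction through the constraint).
        return -self.multiplier * constraint_force


if __name__ == "__main__":
    # Second finger of a parallel gripper mirroring the first finger.
    finger = MimicJoint(multiplier=-1.0)
    print(finger.target_position(0.02), finger.target_velocity(0.1))
```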

The Physics Inspector feature now enables authoring single articulations and maximal joints by positioning a robotic arm into a specific pose for a given simulation scene. Teams can also visually check for collisions and degrees of freedom prior to actual simulation.

New simulation features

Some of the new features in the simulation phases are designed to help enhance physics and sensor simulation.

Enhanced physics simulation

Physics plays an integral part in the overall movement and performance of the robots. The new enhancements in Isaac Sim 4.0 provide better simulation, visualization, and debugging tools.

The latest update to the TGS solver features a new mode that helps improve solver convergence and collision fidelity. With a newly introduced solver option, TGS can account for gravity, other external forces, and articulation joint efforts in every TGS position iteration (substep) instead of once at the beginning of the time step. Enable the mode in the Physics Scene Advanced options, or through the USD PhysxSceneAPI EnableExternalForcesEveryIteration attribute.

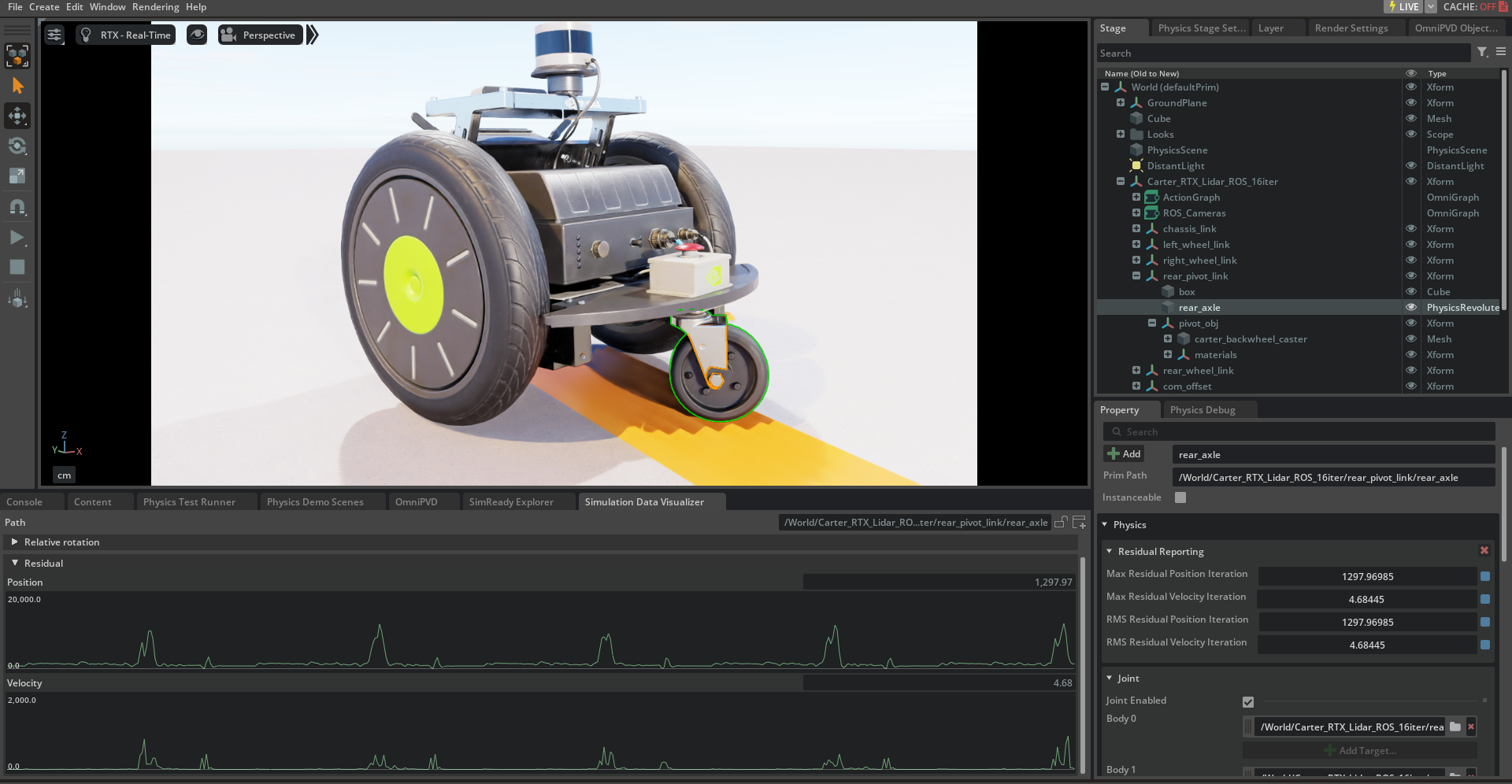
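To see why the point at which external forces are applied can matter, consider a deliberately simplified integrator. This is not the TGS solver; it is a toy sketch, with the hypothetical function `fall_distance`, that drops a particle from rest for one time step and compares applying gravity once at the start of the step with applying it in every substep.

```python
def fall_distance(gravity, dt, substeps, forces_every_iteration):
    """Distance a particle released from rest falls during one time step."""
    h = dt / substeps
    position, velocity = 0.0, 0.0
    if not forces_every_iteration:
        # Apply the external force once, at the beginning of the time step.
        velocity += gravity * dt
    for _ in range(substeps):
        if forces_every_iteration:
            # Apply the external force inside every position iteration.
            velocity += gravity * h
        position += velocity * h
    return position


if __name__ == "__main__":
    g, dt, n = 9.81, 1.0 / 60.0, 4
    exact = 0.5 * g * dt * dt  # closed-form free fall from rest
    once = fall_distance(g, dt, n, forces_every_iteration=False)
    every = fall_distance(g, dt, n, forces_every_iteration=True)
    print(f"exact={exact:.6f} once={once:.6f} every_iteration={every:.6f}")
```

In this toy setup, the per-substep variant lands closer to the closed-form result for the same number of substeps. The real solver handles contacts and joints as well, so treat this only as intuition for the option described above.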

For high-performance simulations, it’s important to find an appropriate tradeoff between the number of solver iterations and simulation fidelity. The new residual reporting feature helps with this simulation tuning task by exposing data corresponding to the convergence quality of the solver at the end of the timestep. Data can be queried in aggregate on the physics scene, or per articulation and maximal-coordinate joint.
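The tuning loop this enables can be illustrated with a small stand-in problem. The sketch below does not call PhysX; it runs a plain Gauss-Seidel iteration on a tiny linear system, reports the residual left after a given number of iterations, and picks the fewest iterations that meet a tolerance. The function names and the example system are hypothetical.

```python
def residual_after(A, b, iterations):
    """Run Gauss-Seidel on A x = b from x = 0 and return max |A x - b|."""
    n = len(b)
    x = [0.0] * n
    for _ in range(iterations):
        for i in range(n):
            off_diagonal = sum(A[i][j] * x[j] for j in range(n) if j != i)
            x[i] = (b[i] - off_diagonal) / A[i][i]
    return max(
        abs(sum(A[i][j] * x[j] for j in range(n)) - b[i]) for i in range(n)
    )


def fewest_iterations(A, b, tolerance, max_iterations=64):
    """Smallest iteration count whose residual meets the tolerance, or None."""
    for count in range(1, max_iterations + 1):
        if residual_after(A, b, count) <= tolerance:
            return count
    return None


if __name__ == "__main__":
    # Small diagonally dominant system standing in for a constraint solve.
    A = [[4.0, 1.0, 0.0], [1.0, 4.0, 1.0], [0.0, 1.0, 4.0]]
    b = [1.0, 2.0, 3.0]
    for count in (1, 2, 4, 8):
        print(count, residual_after(A, b, count))
    print("fewest iterations for 1e-6:", fewest_iterations(A, b, 1e-6))
```

The same pattern applies to simulation tuning: watch the reported residual while lowering the iteration count, and stop at the cheapest setting that still meets the accuracy the task needs.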

Not only can you see the solver residuals, but you can also monitor simulation data such as positions and orientations with the new Simulation Data Visualizer Window. Supported properties are body positions and orientations, solver residuals, and more. For more information, see the visualizer documentation.

You can also now query the linear and angular accelerations of both rigid bodies and articulation links using a unified API. The reported values can be used to compute the simulated output of an inertial measurement unit, for example.
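As a minimal sketch of that computation, an ideal accelerometer measures specific force: the body's acceleration minus gravity, expressed in the sensor frame. The helper below is hypothetical and independent of the Isaac Sim API. It assumes the queried linear acceleration and the gravity vector are both given in the world frame, and that the orientation is available as a 3x3 body-to-world rotation matrix.

```python
def accelerometer_reading(linear_acceleration, body_to_world,
                          gravity=(0.0, 0.0, -9.81)):
    """Ideal accelerometer output (specific force) in the body frame.

    linear_acceleration: world-frame acceleration of the body (assumed frame).
    body_to_world: 3x3 rotation matrix given as nested sequences.
    """
    # Specific force in the world frame: acceleration minus gravity.
    specific = [a - g for a, g in zip(linear_acceleration, gravity)]
    # Rotate into the body frame by multiplying with the transpose.
    return tuple(
        sum(body_to_world[row][col] * specific[row] for row in range(3))
        for col in range(3)
    )


if __name__ == "__main__":
    identity = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
    # A body at rest reads +g along its up axis; in free fall it reads zero.
    print(accelerometer_reading((0.0, 0.0, 0.0), identity))
    print(accelerometer_reading((0.0, 0.0, -9.81), identity))
```

A realistic IMU model would add noise, bias, and the gyroscope channel derived from angular velocity; those are left out here to keep the core relationship visible.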

Additional features include SDF collision improvements, contact friction force reporting, and more.

More support for sensor simulation

Sensors form the backbone of the robotics perception stack. Isaac Sim has a growing library of realistic sensor models that can simulate ground-truth perception and physics-based sensors.

The latest release includes RTX support for non-visual materials where material optical properties now extend to the non-visual spectrum—such as infrared, radio waves, and ultraviolet—enabling new and more advanced sensor modeling. Additionally, you can take advantage of RTX-based radars using the new RTX non-visual materials feature set to accurately model radar response to different material types and environments.

Further, the IMU sensor backend is now compatible with Tensor API. This helps unify the physics backend for the IMU sensor with other Isaac Sim sensors and nodes, enabling more consistent access to the latest physics data.

Performance improvements to OmniGraph-based sensor pipelines include reduced overhead of pipelines by only running them as needed instead of every frame.

Accelerating reinforcement learning with Isaac Lab

Isaac Lab, built on Isaac Sim, is a unified, modular, and open-source framework for robot learning that aims to simplify common workflows such as reinforcement, imitation, and demonstration learning, as well as motion planning. It incorporates features from Orbit, an open-source framework developed through a joint initiative by NVIDIA, the AI Institute, ETH Zurich, and the University of Toronto.

Isaac Lab helps teams scale, taking advantage of multi-GPU and multi-node training on Linux using the PyTorch distributed framework. These jobs can be easily scaled across heterogeneous environments with OSMO, a cloud-native orchestration platform for scheduling complex multi-stage and multi-container heterogeneous computing workflows.

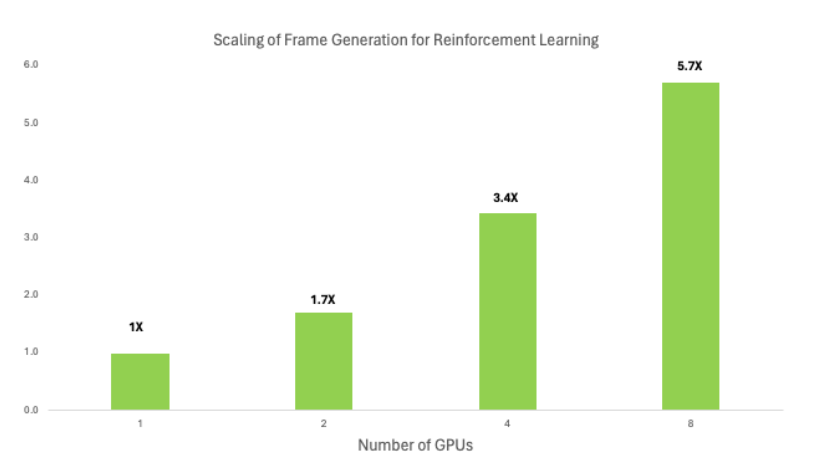

Running on multiple GPUs achieves a higher rollout FPS than running on a single GPU (as shown in Figure 5). The increased FPS means that more trajectories and experiences can be generated in the same amount of time, providing the model with a richer set of data to learn from. The model may then converge more quickly and achieve higher performance levels compared to training on a single GPU.

In reinforcement learning, robots must perform a multitude of scenarios that are independent of each other. It can be extremely tedious to follow the progress of these robots across multiple cameras, but tiled rendering helps by showing all these scenarios in a single view. Tiled rendering works by concatenating the outputs of multiple cameras and rendering one large image instead of the multiple smaller images produced by each individual camera.
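The layout idea can be sketched without any rendering library. The snippet below is an illustration only, using plain nested lists as images. The hypothetical `tile_images` helper concatenates equally sized camera outputs into one grid image and pads the last row with blank tiles; the actual tiled rendering in Isaac Lab happens in the renderer rather than in Python lists.

```python
def tile_images(images, columns):
    """Concatenate equally sized images (lists of pixel rows) into one grid."""
    height = len(images[0])
    width = len(images[0][0])
    blank = [[0] * width for _ in range(height)]
    rows = -(-len(images) // columns)  # ceiling division
    padded = images + [blank] * (rows * columns - len(images))
    tiled = []
    for r in range(rows):
        row_images = padded[r * columns:(r + 1) * columns]
        for y in range(height):
            line = []
            for image in row_images:
                # Place scanline y of each camera side by side.
                line.extend(image[y])
            tiled.append(line)
    return tiled


if __name__ == "__main__":
    # Four 2x2 "camera outputs", each filled with its own camera index.
    cameras = [[[i, i], [i, i]] for i in range(4)]
    for line in tile_images(cameras, columns=2):
        print(line)
```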

Additionally, for large RL scenes with many environments, new optimizations in PhysX 5.4 accelerate environment cloning by up to 3x compared to the previous release.

Ecosystem adoption

Leading robotic developers from 1X, Agility Robotics, The AI Institute, Boston Dynamics, Fourier, Galbot, LimX Dynamics, RobotEra, Sanctuary AI, and UBTECH are integrating Isaac Lab to develop their next-generation robots and humanoids. Many of these companies are already leveraging Isaac Sim to test their robots in realistic environments and to generate synthetic data for model training.

Learn more about Boston Dynamics’ collaboration with NVIDIA and The AI Institute to develop the Spot Reinforcement Learning Research Kit. The kit combines advanced simulation, NVIDIA Jetson AI technology, and precise robot controls to efficiently transition quadrupeds from virtual environments to real-world applications.

Isaac Lab is open-sourced under the BSD-3 license and is available through isaac-sim/IsaacLab on GitHub.

More support for ROS developers in Isaac Sim

The latest version of Isaac Sim provides ROS developers with rich new functionality, making it easier than ever to test and simulate robots in Isaac Sim. This starts with enhanced usability: URDF import now supports a ROS2 node as a source, so you can switch between a URDF file and a ROS2 node containing a robot description. Cyclone DDS is also supported on Linux in Isaac Sim for Isaac ROS or Nav2-related workflows.

Additional new features include:

- Simplified and improved end-to-end workflow with ROS2 launch support

- ROS2 Quality of Service for configuring any ROS subscriber or publisher with QoS settings

- Support for ROS2 publisher/subscriber and server/client for any available message type. This can be used to interface with any available message installed in the system, as well as custom messages.

Get started developing your robotics solutions

To receive updates on the following additional resources and reference architectures to support your development goals, sign up for the NVIDIA Developer Program.

- NVIDIA Isaac ROS, built on the open-source ROS 2 software framework, is a collection of accelerated computing packages and AI models, bringing NVIDIA-acceleration to ROS developers everywhere.

- NVIDIA Isaac Sim, built on NVIDIA Omniverse, is a reference application enabling developers to design, simulate, test, and train AI-based robots and autonomous machines in a physically based virtual environment. It includes NVIDIA Isaac Lab, a lightweight app for robot learning.

- NVIDIA Isaac Perceptor, a reference workflow for autonomous mobile robots (AMRs) and automated guided vehicles (AGVs).

- NVIDIA Isaac Manipulator offers new foundation models and a reference workflow for industrial robotic arms.

- NVIDIA Jetson is the leading platform for autonomous machines and embedded applications.

Stay up to date on LinkedIn, Instagram, X, and Facebook. Explore the NVIDIA documentation and YouTube channels, and join the NVIDIA Developer Robotics forum. Learn more with self-paced training and webinars on Isaac ROS and Isaac Sim.