The pace of development and deployment of AI-powered robots and other autonomous machines continues to accelerate. The next generation of applications requires large increases in AI compute performance to run multimodal AI workloads concurrently in real time.

Human-robot interactions are increasing in retail spaces, food delivery, hospitals, warehouses, factory floors, and other commercial applications. These autonomous robots must concurrently perform 3D perception, natural language understanding, path planning, obstacle avoidance, pose estimation, and many more actions that require both significant computational performance and highly accurate trained neural models for each application.

NVIDIA Jetson AGX Orin modules are the highest-performing and newest members of the NVIDIA Jetson family. These modules deliver tremendous performance with class-leading energy efficiency. They run the comprehensive NVIDIA AI software stack to power the next generation of demanding edge AI applications.

Jetson AGX Orin and Jetson Orin NX series

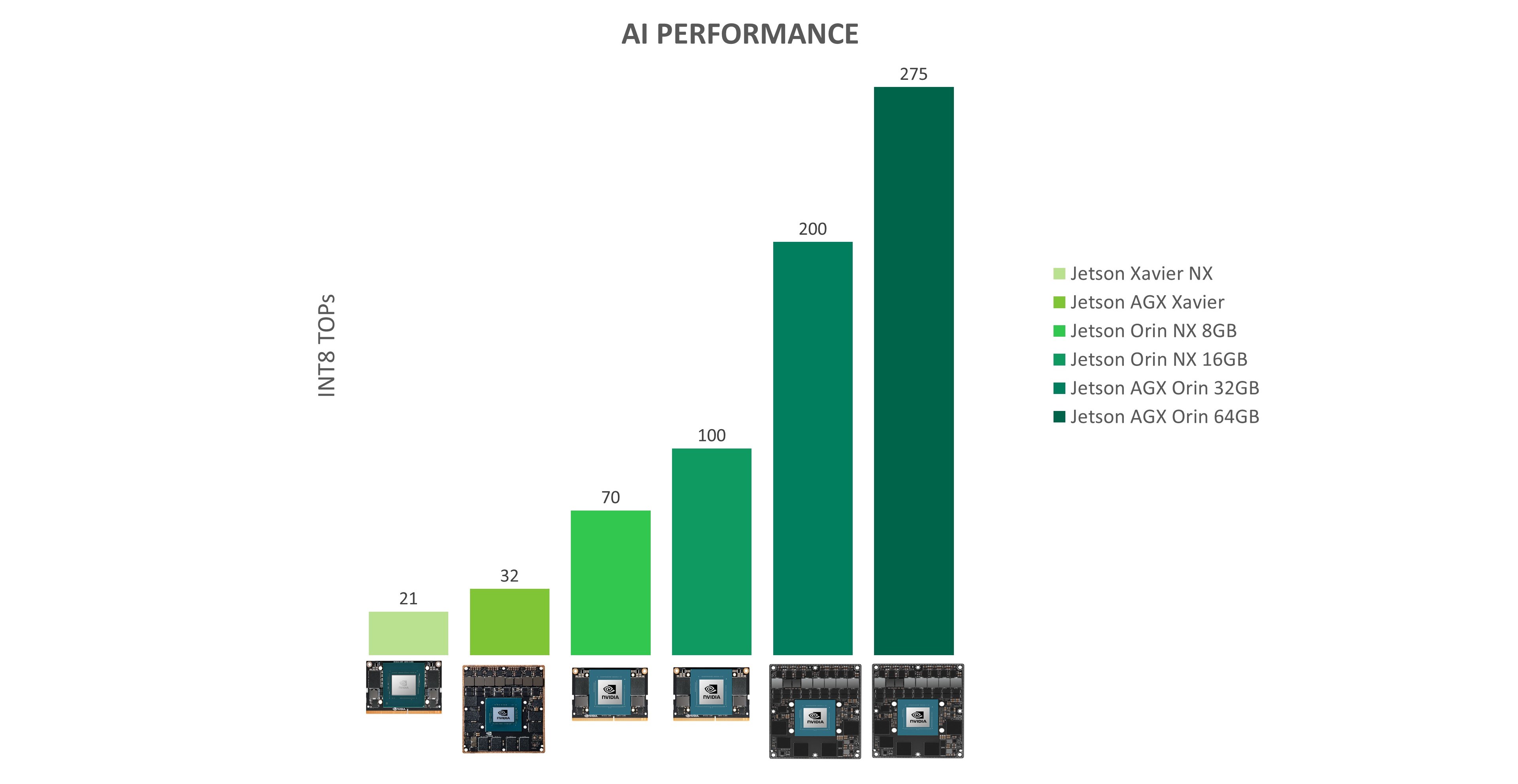

At GTC Spring 2022, we announced that four Jetson Orin modules will be available in 2022. With up to 275 tera operations per second (TOPS) of performance, Jetson Orin modules can run server-class AI models at the edge with end-to-end application pipeline acceleration. Compared to Jetson Xavier modules, Jetson Orin brings higher performance, better power efficiency, and stronger inference capabilities to modern AI applications.

| Module | AI performance (INT8 TOPS) | Power | Price (1KU+) |
| --- | --- | --- | --- |
| Jetson AGX Xavier 64GB | 32 dense | 10W to 30W | $1,299 |
| Jetson AGX Orin 64GB | 275 sparse / 138 dense | 15W to 60W | $1,599 |
| Jetson AGX Xavier 32GB | 32 dense | 10W to 30W | $899 |
| Jetson AGX Orin 32GB | 200 sparse / 100 dense | 15W to 40W | $899 |
| Jetson Xavier NX 16GB | 21 dense | 10W to 20W | $499 |
| Jetson Orin NX 16GB | 100 sparse / 50 dense | 10W to 25W | $599 |
| Jetson Xavier NX 8GB | 21 dense | 10W to 20W | $399 |
| Jetson Orin NX 8GB | 70 sparse / 35 dense | 10W to 20W | $399 |

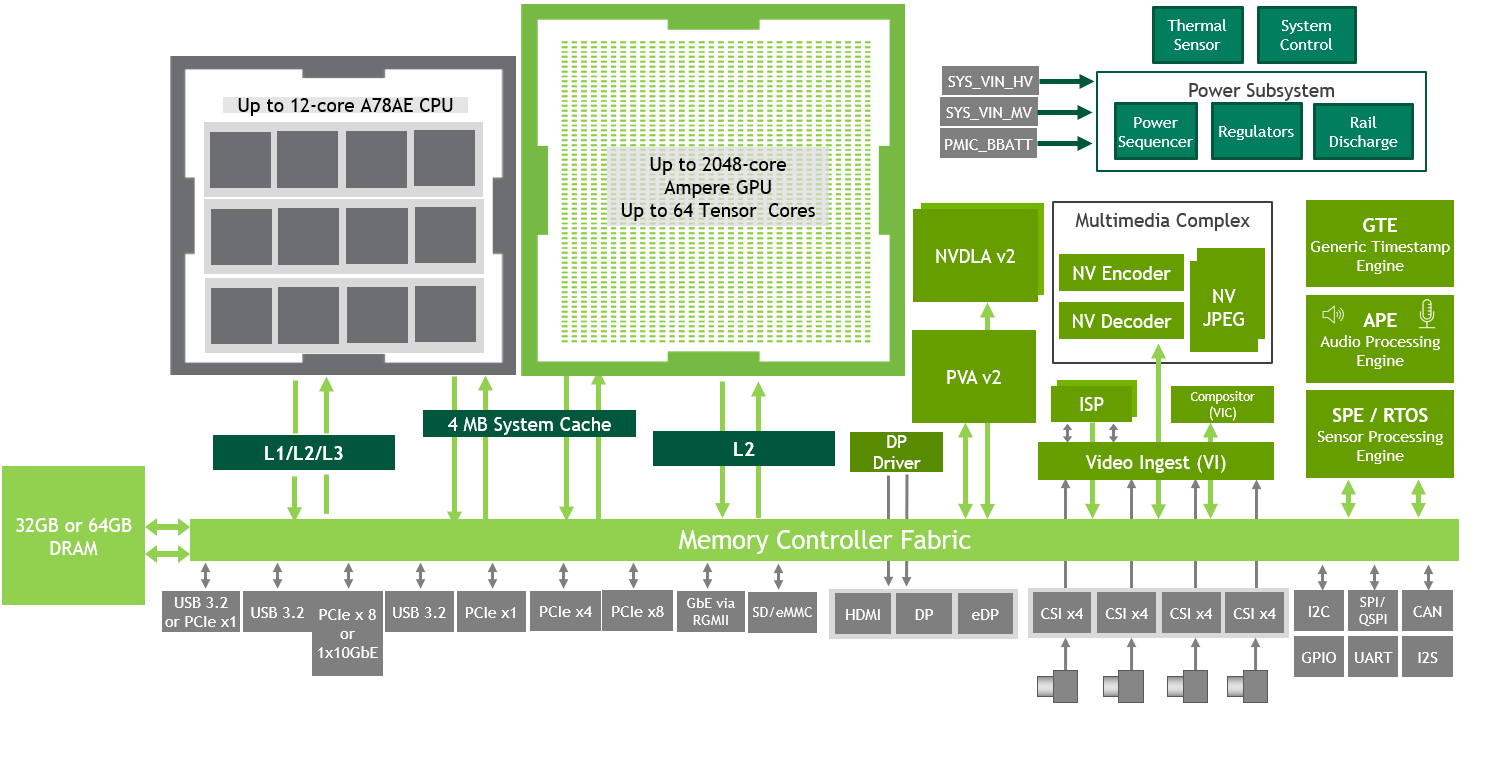

The Jetson AGX Orin series includes the Jetson AGX Orin 64GB and the Jetson AGX Orin 32GB modules.

- Jetson AGX Orin 64GB delivers up to 275 TOPS with power configurable between 15W and 60W.

- Jetson AGX Orin 32GB delivers up to 200 TOPS with power configurable between 15W and 40W.

These modules have the same compact form factor and are pin compatible with Jetson AGX Xavier series modules, offering you an 8x performance upgrade, or up to 6x the performance at the same price.
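The headline ratios can be checked against the comparison table. The sketch below copies the INT8 TOPS figures from that table and computes two ratios per module pair: Orin sparse against Xavier dense, and Orin dense against Xavier dense. The assumption that the quoted multipliers use the sparse Orin figure is ours; the table does not say which basis was used, and the dictionary keys are illustrative names.

```python
# INT8 TOPS copied from the Xavier/Orin comparison table.
# Xavier figures are dense only; Orin lists both sparse and dense.
MODULES = {
    "AGX 64GB": {"xavier_dense": 32, "orin_sparse": 275, "orin_dense": 138},
    "AGX 32GB": {"xavier_dense": 32, "orin_sparse": 200, "orin_dense": 100},
    "NX 16GB": {"xavier_dense": 21, "orin_sparse": 100, "orin_dense": 50},
    "NX 8GB": {"xavier_dense": 21, "orin_sparse": 70, "orin_dense": 35},
}


def speedups(spec):
    """Return (sparse-vs-dense, dense-vs-dense) TOPS ratios for one module pair."""
    base = spec["xavier_dense"]
    return spec["orin_sparse"] / base, spec["orin_dense"] / base


for name, spec in MODULES.items():
    sparse_ratio, dense_ratio = speedups(spec)
    print(f"{name}: {sparse_ratio:.2f}x sparse vs. dense, "
          f"{dense_ratio:.2f}x dense vs. dense")
```

Under that assumption, the 64GB and 32GB AGX pairs land close to the quoted 8x and 6x figures, while the dense-to-dense ratios are roughly half as large. These are peak TOPS ratios, not measured application speedups.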

Edge and embedded systems continue to be driven by the increasing number, performance, and bandwidth of sensors. The Jetson AGX Orin series brings not only additional compute for processing these sensors, but also additional I/O:

- Up to 22 lanes of PCIe Gen4

- 10Gb Ethernet

- Higher speed CSI lanes

- Double the storage with 64GB eMMC 5.1

- 1.5x the memory bandwidth

For more information, see the Jetson Orin product page and the Jetson AGX Orin Series Data Sheet.

USB 3.2, MGBE, and PCIe share UPHY lanes. For the supported UPHY configurations, see the Design Guide.

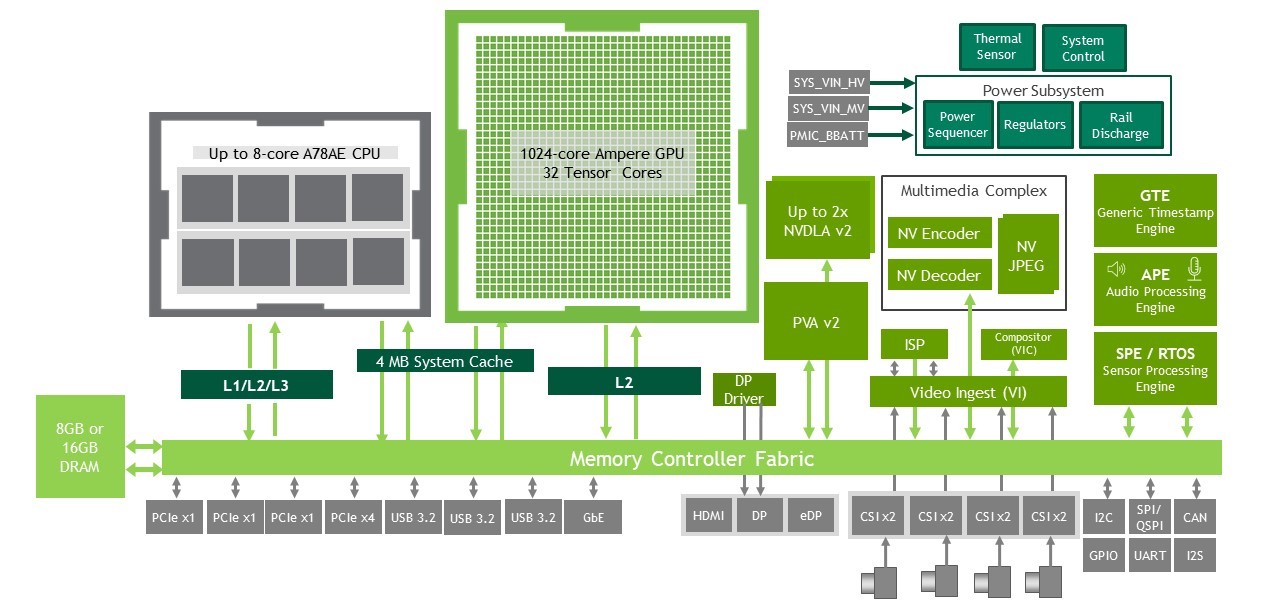

The NVIDIA Jetson Orin NX series includes Jetson Orin NX 16GB with up to 100 TOPS of AI performance and Jetson Orin NX 8GB with up to 70 TOPS. With this series, we followed the same design philosophy as with Jetson Xavier NX: we brought the NVIDIA Orin architecture to the smallest Jetson form factor, with a 260-pin SODIMM connector and lower power consumption.

You can bring this higher class of performance to your next-generation, small form factor products like drones and handheld devices. Jetson Orin NX 16GB comes with power configurable between 10W and 25W, and Jetson Orin NX 8GB comes with power configurable between 10W and 20W.
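On Jetson devices, power budgets like these are typically selected with the `nvpmodel` utility that ships with the system software. The sketch below assumes that tool is on the `PATH`. It queries the current mode with `nvpmodel -q` and only builds (does not run) the `nvpmodel -m <id>` command, because the mapping from mode IDs to wattage budgets is device-specific and is not given here. The helper names are illustrative.

```python
import shutil
import subprocess


def query_power_mode():
    """Return the text printed by `nvpmodel -q`, or None if nvpmodel is absent."""
    exe = shutil.which("nvpmodel")
    if exe is None:
        return None  # not running on a Jetson, or the tool is not installed
    result = subprocess.run([exe, "-q"], capture_output=True, text=True, check=False)
    return result.stdout.strip()


def set_power_mode_command(mode_id):
    """Build the command that selects a power mode by numeric ID (not executed)."""
    if mode_id < 0:
        raise ValueError("mode_id must be non-negative")
    return ["sudo", "nvpmodel", "-m", str(mode_id)]


if __name__ == "__main__":
    print("current mode:", query_power_mode())
    print("to switch:", " ".join(set_power_mode_command(0)))
```

Check the mode definitions on your own device before switching, since the available IDs differ between modules.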

The Jetson Orin NX series is form-factor compatible with the Jetson Xavier NX series, and delivers up to 5x the performance, or up to 3x the performance at the same price. The Orin NX series also brings additional high-speed I/O capabilities with up to seven PCIe lanes and three 10Gbps USB 3.2 interfaces. For storage, you can use the additional PCIe lanes to connect external NVMe drives. For more information, see the Jetson Orin product page.

Jetson AGX Xavier was designed around the NVIDIA Xavier SoC, our first architecture developed from the ground up for autonomous machines. The NVIDIA Orin SoC architecture takes this class of product to the next level. It continues to combine multiple on-chip processors, but brings greater capability, higher performance, and better power efficiency.

Jetson Orin modules contain the following:

- An NVIDIA Ampere Architecture GPU with up to 2048 CUDA cores and up to 64 Tensor Cores

- Up to 12 Arm A78AE CPU cores

- Two next-generation deep learning accelerators (DLA)

- A computer vision accelerator

- Various other processors to offload the GPU and CPU:

  - Video encoder

  - Video decoder

  - Video image compositor

  - Image signal processor

  - Sensor processing engine

  - Audio processing engine

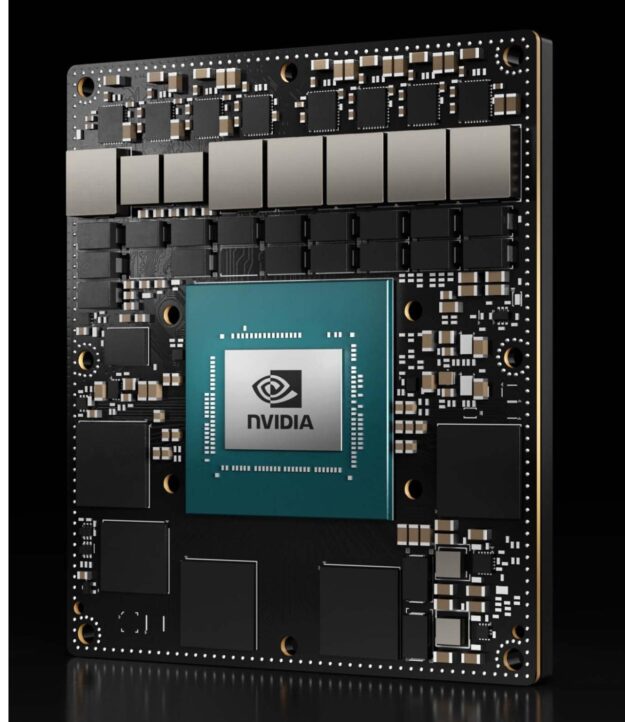

Like the other Jetson modules, Jetson Orin is built using a system-on-module (SOM) design. All the processing, memory, and power rails are contained in the module. All the high-speed I/O is available through a 699-pin connector (Jetson AGX Orin series) or a 260-pin SODIMM connector (Jetson Orin NX series). This SOM design makes it easy for you to integrate the modules into your system designs.

Jetson AGX Orin Developer Kit

At GTC 2022, NVIDIA also announced the availability of the Jetson AGX Orin Developer Kit. The developer kit contains everything you need to get up and running quickly. It includes the highest-performance Jetson AGX Orin module and runs the world’s most advanced deep learning software stack. This kit delivers the flexibility to create sophisticated AI solutions now and well into the future.

Compact size, high-speed interfaces, and lots of connectors make this developer kit perfect for prototyping advanced AI-powered robots and edge applications for manufacturing, logistics, retail, service, agriculture, smart cities, healthcare, life sciences, and more.

The Jetson AGX Orin Developer Kit features:

- An NVIDIA Ampere Architecture GPU and 12-core Arm Cortex-A78AE 64-bit CPU, together with next-generation deep learning and vision accelerators

- High-speed I/O, 204.8 GB/s of memory bandwidth, and 32 GB of DRAM capable of feeding multiple concurrent AI application pipelines

- A powerful NVIDIA AI software stack with support for SDKs and software platforms, including the following:

  - NVIDIA JetPack

  - NVIDIA Riva

  - NVIDIA DeepStream

  - NVIDIA Isaac

  - NVIDIA TAO

The developer kit runs the latest NVIDIA JetPack 5.0 software. NVIDIA JetPack 5.0 supports emulating the performance and clock frequencies of Jetson Orin NX series and Jetson AGX Orin series modules with a Jetson AGX Orin Developer Kit. You can kickstart your development for any of those modules today.

The Jetson AGX Orin Developer Kit is available for purchase through NVIDIA authorized distributors worldwide. Get started today by following the Getting Started guide.

| | Developer Kit | AGX Orin 64GB | AGX Orin 32GB |
| --- | --- | --- | --- |
| AI performance | 275 INT8 sparse TOPS | 275 INT8 sparse TOPS | 200 INT8 sparse TOPS |
| GPU | 2048-core NVIDIA Ampere Architecture GPU with 64 Tensor Cores | 2048-core NVIDIA Ampere Architecture GPU with 64 Tensor Cores | 1792-core NVIDIA Ampere Architecture GPU with 56 Tensor Cores |
| CPU | 12-core Arm Cortex-A78AE v8.2 64-bit CPU, 3MB L2 + 6MB L3 | 12-core Arm Cortex-A78AE v8.2 64-bit CPU, 3MB L2 + 6MB L3 | 8-core Arm Cortex-A78AE v8.2 64-bit CPU, 2MB L2 + 4MB L3 |
| Power | 15W-60W | 15W-60W | 15W-40W |
| Memory | 32GB | 64GB | 32GB |
| MSRP | $1,999 | $1,599 | $899 |

Table 2. Summary comparison of Jetson AGX Orin series modules and Developer Kit

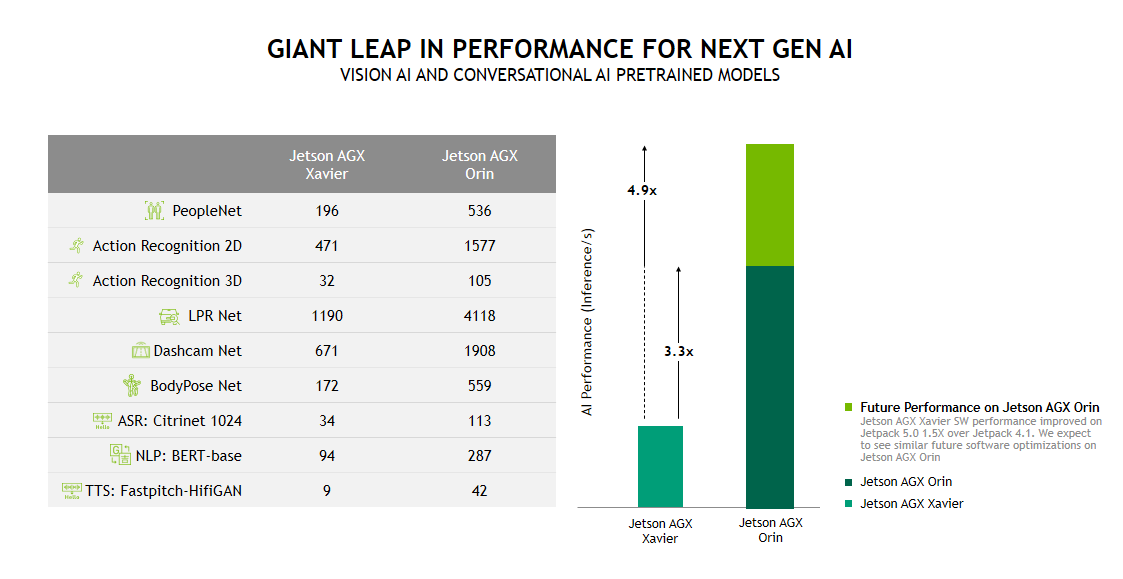

Best-in-class performance

Jetson Orin provides a giant leap forward for your next-generation applications. Using the Jetson AGX Orin Developer Kit, we have taken the geometric mean of measured performance for our highly accurate, production-ready, pretrained models for computer vision and conversational AI. Testing included the following benchmarks:

- NVIDIA PeopleNet for people detection

- NVIDIA ActionRecognitionNet 2D and 3D models

- NVIDIA LPRNet for license plate recognition

- NVIDIA DashcamNet

- NVIDIA BodyPoseNet for multiperson human pose estimation

- Citrinet-1024 for speech recognition

- BERT-base for natural language processing

- FastPitchHifiGanE2E for text to speech

With the NVIDIA JetPack 5.0 Developer Preview, Jetson AGX Orin shows a 3.3x performance increase compared to Jetson AGX Xavier. With future software improvements, we expect this to approach a 5x performance increase. Jetson AGX Xavier performance has increased 1.5x since NVIDIA JetPack 4.1.1 Developer Preview, the first software release to support it.
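As a rough illustration of how a geometric-mean summary like this can be computed, the sketch below takes per-model throughput on two devices and reduces the per-model speedups to a single number. The model names and throughput values are hypothetical placeholders, not measured results, and the function name is ours.

```python
from statistics import geometric_mean

# Hypothetical per-model throughput (inferences per second).
# These are placeholder values, NOT benchmark results.
baseline_fps = {"model_a": 100.0, "model_b": 40.0, "model_c": 250.0}
candidate_fps = {"model_a": 400.0, "model_b": 100.0, "model_c": 500.0}


def geomean_speedup(baseline, candidate):
    """Geometric mean of per-model speedups (candidate / baseline)."""
    ratios = [candidate[name] / baseline[name] for name in baseline]
    return geometric_mean(ratios)


print(f"geomean speedup: {geomean_speedup(baseline_fps, candidate_fps):.2f}x")
```

The geometric mean is a common choice for this kind of summary because one model with a very large speedup cannot dominate it the way it would dominate an arithmetic mean. It also gives the same answer whether you average the ratios or divide the two devices' geometric-mean throughputs.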

The benchmarks were run on the Jetson AGX Orin Developer Kit. PeopleNet and DashcamNet are examples of dense models that can run concurrently on the GPU and the two DLAs. Offloading some AI workloads from the GPU to the DLAs lets all three engines operate in parallel.
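One way to try GPU and DLA placement yourself is with the `trtexec` tool from TensorRT, which accepts `--useDLACore` and `--allowGPUFallback`. The sketch below only builds the three command lines (GPU, DLA core 0, DLA core 1) and prints them. The model path is a hypothetical placeholder, the helper name is ours, and this is not necessarily how the benchmarks above were run.

```python
import shlex


def trtexec_command(onnx_path, dla_core=None):
    """Build a trtexec INT8 command targeting the GPU (default) or one DLA core."""
    cmd = ["trtexec", f"--onnx={onnx_path}", "--int8"]
    if dla_core is not None:
        # Run on the given DLA core; unsupported layers fall back to the GPU.
        cmd += [f"--useDLACore={dla_core}", "--allowGPUFallback"]
    return cmd


MODEL = "model.onnx"  # hypothetical path; substitute your own exported model

commands = [
    trtexec_command(MODEL),              # GPU
    trtexec_command(MODEL, dla_core=0),  # first DLA
    trtexec_command(MODEL, dla_core=1),  # second DLA
]

for cmd in commands:
    print(shlex.join(cmd))
```

Running the three commands at the same time, for example from separate shells, exercises all three engines together. Aggregate throughput depends on the model and on how many layers fall back to the GPU.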

PeopleNet, LPRNet, DashcamNet, and BodyPoseNet provide examples of dense INT8 benchmarks run on Jetson. ActionRecognitionNet 2D and 3D and the conversational AI benchmarks provide examples of dense FP16 performance. All these models can be found on NVIDIA NGC.

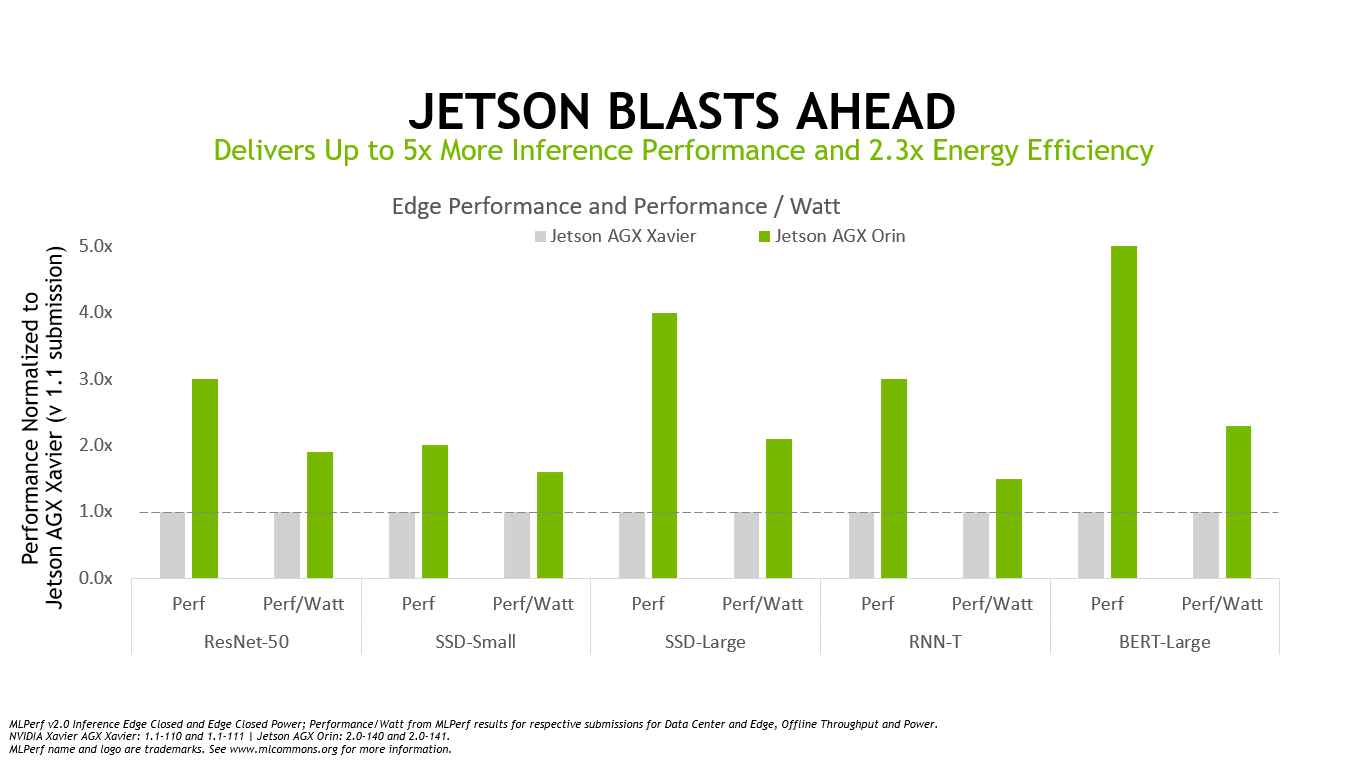

Jetson Orin also continues to raise the bar for AI at the edge, adding to NVIDIA's overall top rankings in the latest MLPerf industry inference benchmarks. Jetson AGX Orin provides up to a 5x performance increase on these MLPerf benchmarks compared to previous results on Jetson AGX Xavier, while delivering an average of 2x better energy efficiency.

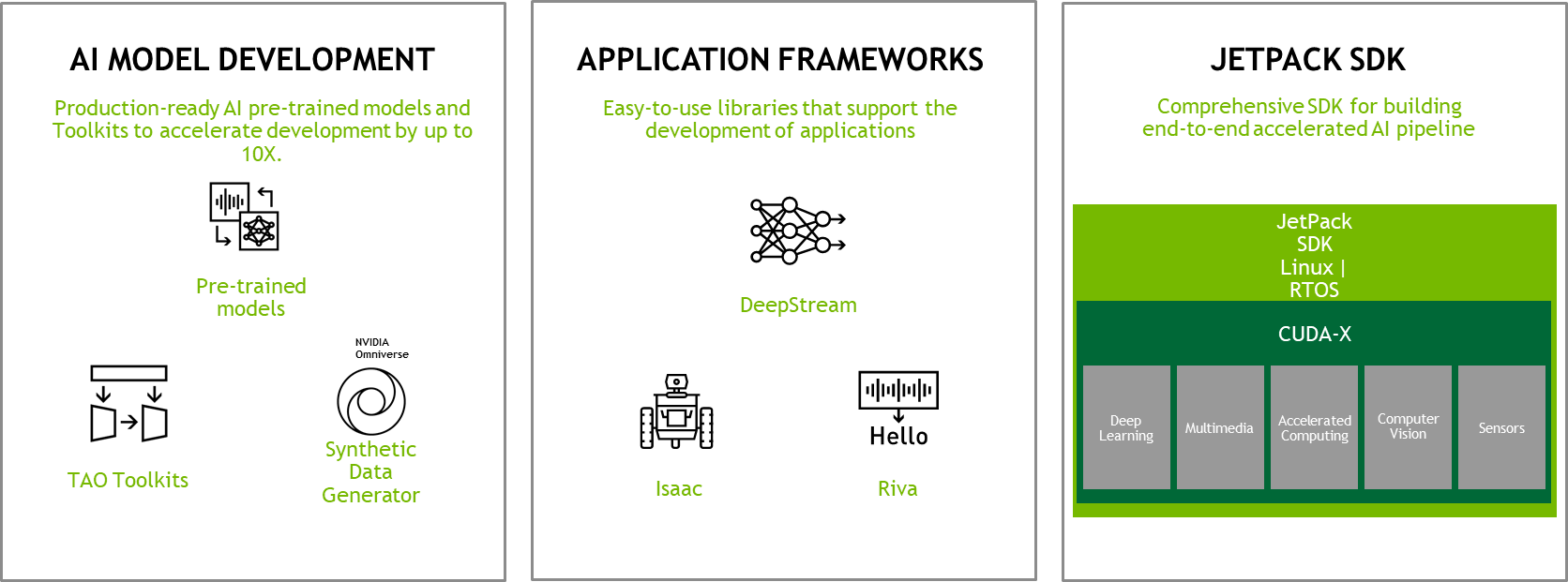

Accelerate time-to-market with Jetson software

The class-leading performance and energy efficiency of Jetson Orin are backed by the same powerful NVIDIA AI software that is deployed in GPU-accelerated data centers, hyperscale servers, and powerful AI workstations.

NVIDIA JetPack is the foundational SDK for the Jetson platform. NVIDIA JetPack provides a full development environment for hardware-accelerated AI-at-the-edge development. Jetson Orin is supported by NVIDIA JetPack 5.0, which includes the following:

- LTS Kernel 5.10

- A root file system based on Ubuntu 20.04

- A UEFI-based bootloader

- The latest compute stack with CUDA 11.4, TensorRT 8.4, and cuDNN 8.3

NVIDIA JetPack 5.0 also supports Jetson Xavier modules.

To help you develop fully accelerated applications quickly on the Jetson platform, NVIDIA provides application frameworks for various use cases:

- With DeepStream, rapidly develop and deploy vision AI applications and services. DeepStream extends hardware acceleration beyond inference, with hardware-accelerated plug-ins for end-to-end AI pipeline acceleration.

- NVIDIA Isaac provides hardware-accelerated ROS packages that make it easier for ROS developers to build high-performance robotics solutions.

- NVIDIA Isaac Sim, powered by Omniverse, provides photorealistic, physically accurate virtual environments in which to develop, test, and manage AI-based robots.

- NVIDIA Riva provides state-of-the-art, pretrained models for automatic speech recognition (ASR) and text-to-speech (TTS), which can be easily customized. The models enable you to quickly develop GPU-accelerated conversational AI applications.

To accelerate the time to develop production-ready and highly accurate AI models, NVIDIA provides various tools to generate training data, train and optimize models, and quickly create ready-to-deploy AI models.

NVIDIA Omniverse Replicator for synthetic data generation helps create high-quality datasets to boost model training. With Omniverse Replicator, you can create large and diverse synthetic datasets that are hard, and sometimes impossible, to collect in the real world. Training on synthetic data alongside real data can significantly improve model accuracy.

NVIDIA pretrained models from NGC start you off with highly accurate and optimized models and model architectures for various use cases. Pretrained models are production-ready. You can further customize these models by training with your own real or synthetic data, using the NVIDIA TAO (Train-Adapt-Optimize) workflow to quickly build an accurate and ready-to-deploy model.

For more information about all the NVIDIA technologies that we bring to NVIDIA Jetson Orin modules, watch a webinar on Jetson software.

Usher in the new era of autonomous machines and robotics

Get started developing for the four new Jetson Orin modules by placing an order for the Jetson AGX Orin Developer Kit and installing the latest JetPack. Find additional Jetson Orin documentation at the download center. For information and support, visit the NVIDIA Embedded Developer page and forums for help from community experts.