At GDC 2023, NVIDIA released new tools that make real-time path tracing more accessible to developers while accelerating the creation of ultra-realistic game worlds.

Generate frames with the latest breakthrough in AI rendering

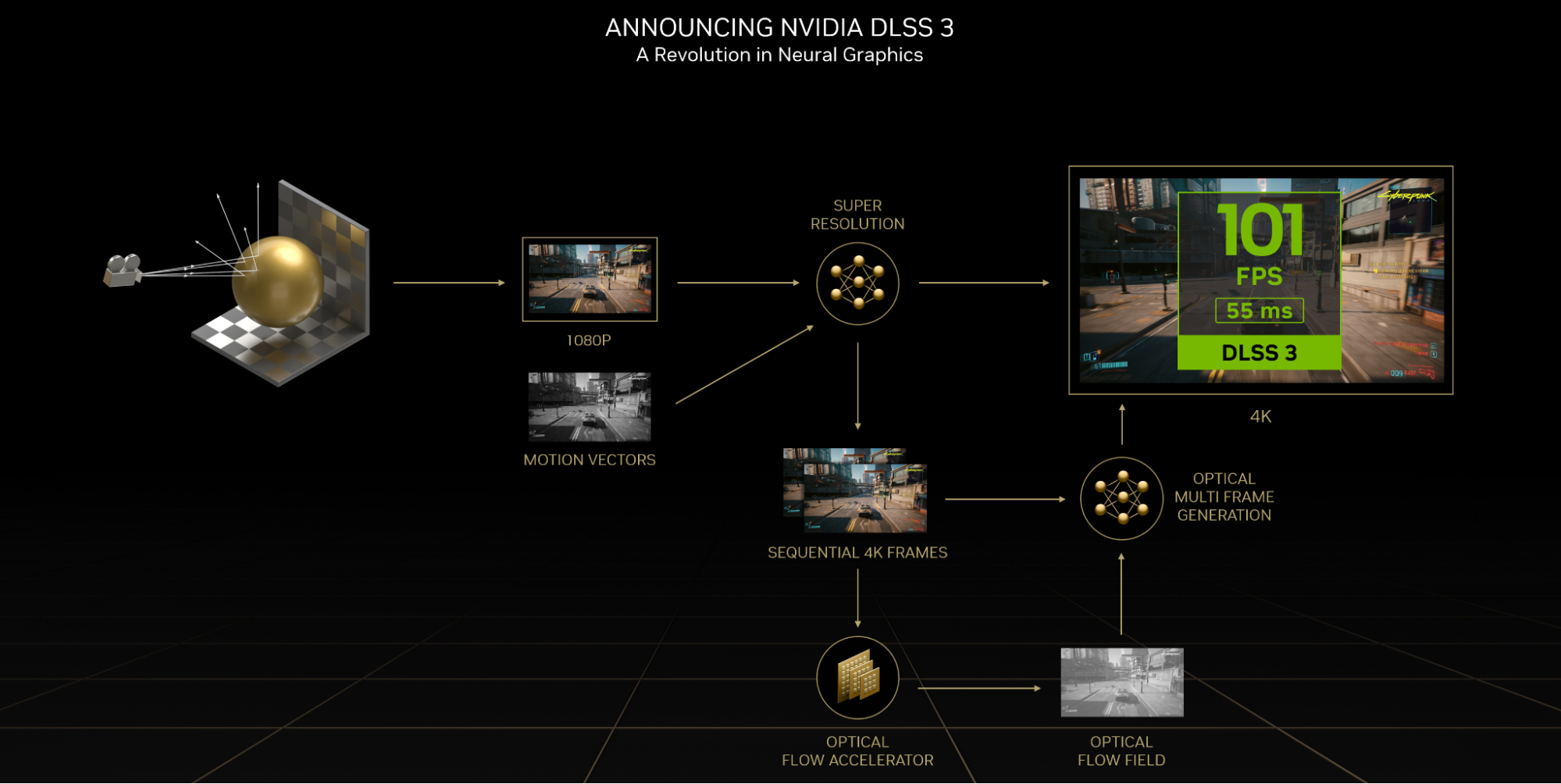

Announced with the NVIDIA Ada Lovelace architecture, DLSS 3 raised the bar not just for visuals but also for performance and responsiveness. Since its introduction in 2018 with the NVIDIA Turing architecture, DLSS has continuously set new standards for AI in game rendering.

Frame generation is the latest evolution.

DLSS Frame Generation is the new performance multiplier in DLSS 3 that uses AI to create entirely new frames. It runs on NVIDIA GeForce RTX 40 Series GPUs and is powered by their Optical Flow Accelerator. This breakthrough has made real-time path tracing, the next frontier in video game graphics, possible.

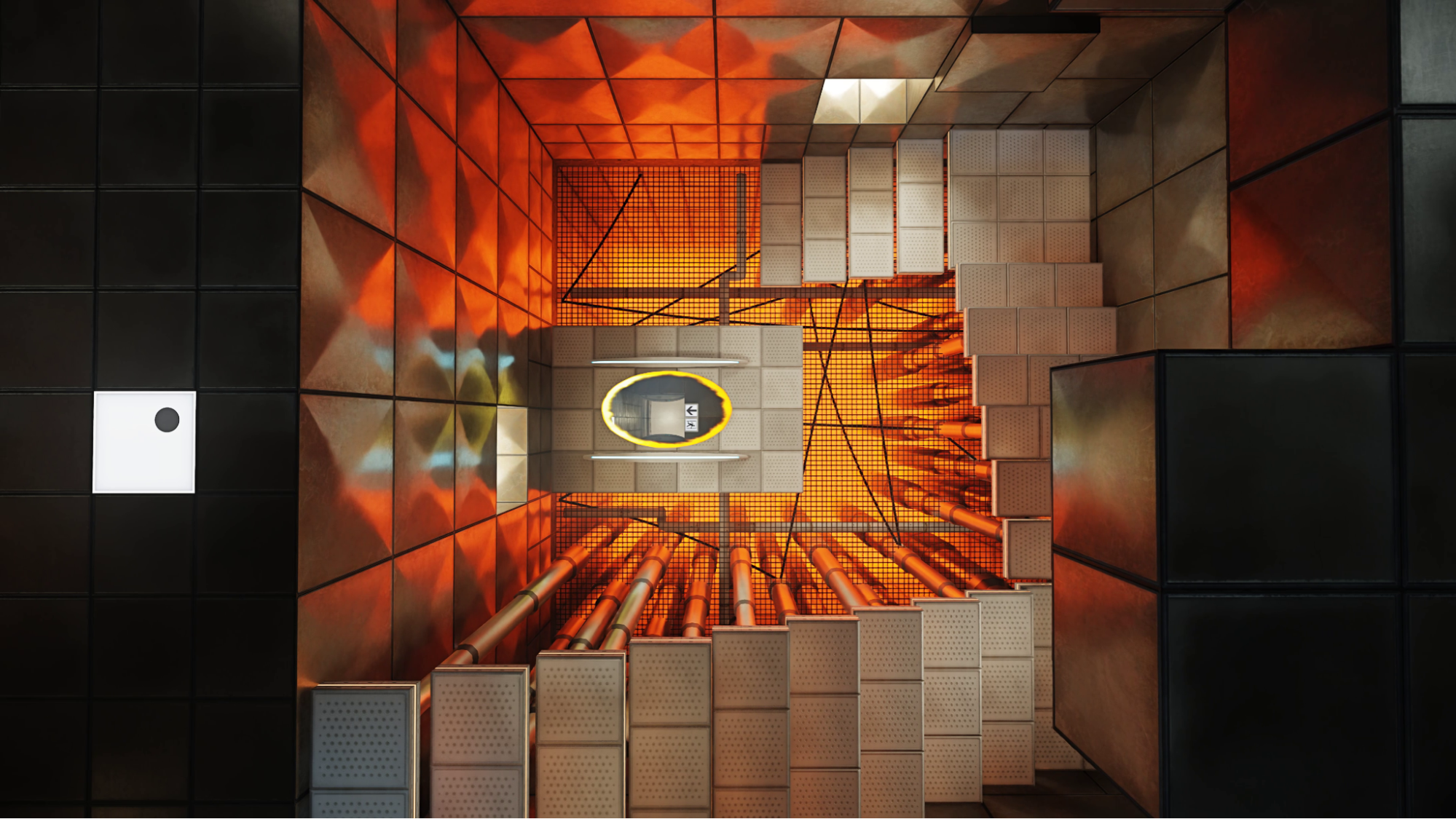

Since that announcement, 28 top games and applications have adopted DLSS 3 to deliver realistic graphics with incredible performance, including A Plague Tale: Requiem, Portal with RTX, and Cyberpunk 2077. In some cases, frame rates have almost tripled.
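
As a rough illustration of how such a multiplier can arise, the following sketch models the presented frame rate when super resolution speeds up rendering and frame generation adds one AI-generated frame for every rendered frame. The function name, the speedup value, and the overhead parameter are illustrative assumptions, not measurements from any game.

```python
def presented_fps(native_fps, super_res_speedup, frame_gen=True, frame_gen_overhead=0.0):
    """Estimate presented frame rate under a simple, assumed model.

    native_fps: frame rate when rendering at full resolution without DLSS.
    super_res_speedup: assumed render-rate multiplier from rendering fewer pixels.
    frame_gen_overhead: assumed fraction of render rate spent generating frames.
    """
    if native_fps <= 0 or super_res_speedup <= 0:
        raise ValueError("frame rate and speedup must be positive")
    if not 0.0 <= frame_gen_overhead < 1.0:
        raise ValueError("overhead must be in [0, 1)")

    rendered = native_fps * super_res_speedup
    if not frame_gen:
        return rendered

    # Generating frames costs some GPU time, which lowers the rendered rate.
    rendered *= 1.0 - frame_gen_overhead
    # One generated frame is presented for each rendered frame.
    return rendered * 2.0


# Hypothetical numbers: 40 FPS native, 1.5x from super resolution.
print(presented_fps(40, 1.5))                   # 120.0
print(presented_fps(40, 1.5, frame_gen=False))  # 60.0
```

With these assumed inputs, the combined effect is three times the native frame rate. Real results depend on the game, resolution, and GPU.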

DLSS Frame Generation is coming to GDC as a plug-in for developers through the Streamline 2.0 SDK. Streamline is NVIDIA's open-source, cross-IHV framework that simplifies the integration of features like DLSS 3. Instead of manually integrating the DLSS Frame Generation SDK, you identify which resources (motion vectors, depth, and so on) the desired plug-in requires and then set where the plug-ins run in your rendering pipeline.
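
The integration pattern described here (declare which resources a plug-in needs, tag them, then run the plug-in at a chosen point in the frame) can be sketched with a toy model. Everything in this sketch, including `PluginHost`, `tag_resource`, `evaluate`, and the buffer names, is hypothetical and is not the Streamline API; see the Streamline SDK documentation for the real entry points.

```python
from dataclasses import dataclass, field


@dataclass
class Plugin:
    """A hypothetical feature plug-in that declares the resources it needs."""
    name: str
    required_resources: frozenset


@dataclass
class PluginHost:
    """Toy stand-in for an integration layer; not the Streamline API."""
    plugins: dict = field(default_factory=dict)
    tagged: dict = field(default_factory=dict)

    def register(self, plugin):
        self.plugins[plugin.name] = plugin

    def tag_resource(self, kind, resource):
        # The application tells the host which buffer plays which role.
        self.tagged[kind] = resource

    def missing_resources(self, plugin_name):
        plugin = self.plugins[plugin_name]
        return sorted(plugin.required_resources - set(self.tagged))

    def evaluate(self, plugin_name):
        # Called at the point in the frame where the plug-in should run.
        missing = self.missing_resources(plugin_name)
        if missing:
            raise RuntimeError(f"{plugin_name} is missing resources: {missing}")
        plugin = self.plugins[plugin_name]
        # A real plug-in would do GPU work; here we only return its inputs.
        return {kind: self.tagged[kind] for kind in sorted(plugin.required_resources)}


host = PluginHost()
host.register(Plugin("frame_generation", frozenset({"motion_vectors", "depth"})))
host.tag_resource("motion_vectors", "mv_buffer")
print(host.missing_resources("frame_generation"))  # ['depth']
host.tag_resource("depth", "depth_buffer")
print(host.evaluate("frame_generation"))
```

The point of the design is that the application only describes its buffers once; each plug-in picks up what it needs, so adding another feature does not require a separate manual integration.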

In addition, Epic Games announced that the DLSS Frame Generation plug-in is coming to Unreal Engine in its next release. Coupled with NVIDIA Reflex low-latency technology, available through UE5, you have all the tools to boost the performance of your games while providing a highly responsive experience for players.

Together, Epic and NVIDIA are pushing the bounds of AI in game development, bringing real-time path tracing a step closer to reality.

“NVIDIA DLSS 3 introduces truly impressive frame generation technology and the Unreal Engine 5.2 plugin will offer developers a great choice for increased quality and performance of their games,” said Nick Penwarden, vice president of engineering at Epic Games.

Integration of real-time path tracing technology simplified

Real-time ray tracing in games was made possible with the introduction of RT cores in the NVIDIA Turing architecture. Since then, NVIDIA has been hard at work on the next challenge: real-time path tracing.

Released at GDC 2023, the NVIDIA RTX Path Tracing SDK is available to developers everywhere.

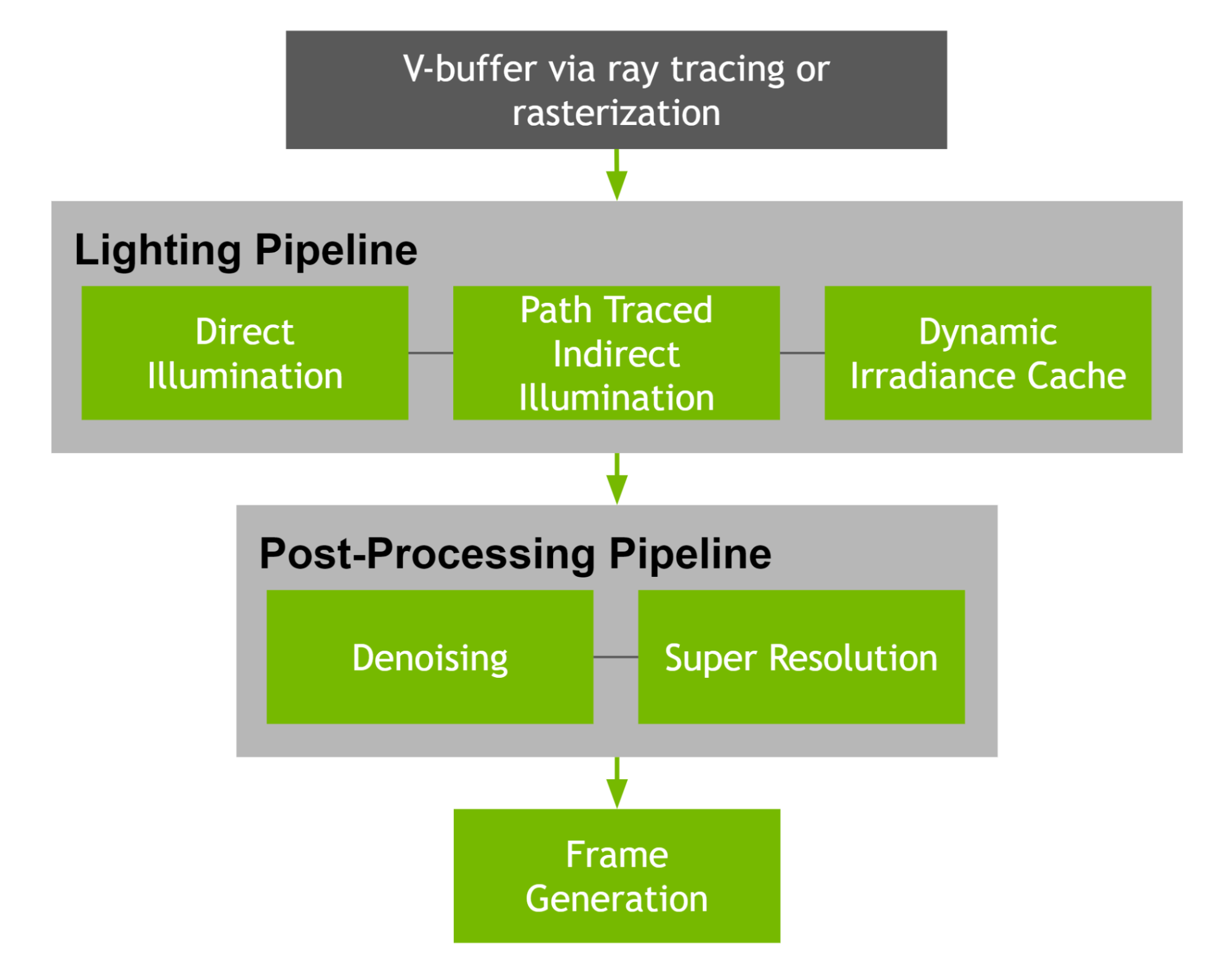

The RTX Path Tracing SDK accurately re-creates the physics of all light sources in a scene to reproduce what the eye sees in real life. This new SDK gives you the flexibility and customizability to take advantage of proven NVIDIA technologies to suit the following use cases:

- Building a reference path tracer to ensure that your lighting during production is true to life, accelerating the iteration process.

- Building high-quality photo modes for RT-capable GPUs or real-time, ultra-quality modes that take advantage of the Ada Lovelace architecture.

The RTX Path Tracing SDK is the culmination of decades of NVIDIA research. This SDK demonstrates best practices for building a path tracer using the latest versions of the following tools and features:

- DLSS 3 for super-resolution and frame generation, to multiply performance.

- RTX Direct Illumination (RTXDI) for efficient sampling of a high number of shadow-casting and dynamic lights.

- NVIDIA Real-Time Denoisers (NRD) for high-performance denoising of all light sources.

- Opacity Micro-Map (OMM) for improving RT performance in scenes with heavy alpha effects.

- Shader Execution Reordering (SER) for improving shader scheduling, thus increasing performance.

This SDK has all the necessary NVIDIA RTX components, documentation, and a sample application for you to get started today.

Improving path tracing performance and increasing accessibility

NVIDIA continuously looks for more opportunities to improve the performance of real-time path tracing, which has led to the development of Opacity Micro-Map (OMM), announced at GTC 2022.

OMM SDK 1.0 is available to all developers. OMM allows you to efficiently map complex geometries, such as dense vegetation and foliage, onto triangles and micro-meshes, providing high-level performance in detailed scenes.
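
To make the idea concrete, here is a minimal sketch of how opacity can be pre-classified per micro-triangle so that only ambiguous regions fall back to shader-based alpha testing. It assumes a simplified three-state model (opaque, transparent, unknown) and takes pre-sampled alpha values as input. The function names and the cutoff are illustrative, and this is not the OMM SDK API.

```python
from enum import Enum


class Opacity(Enum):
    TRANSPARENT = 0
    OPAQUE = 1
    UNKNOWN = 2  # mixed coverage: needs a per-ray alpha test


def classify_micro_triangle(alpha_samples, cutoff=0.5):
    """Classify one micro-triangle from alpha values sampled inside it."""
    if not alpha_samples:
        raise ValueError("at least one alpha sample is required")
    if all(a >= cutoff for a in alpha_samples):
        return Opacity.OPAQUE
    if all(a < cutoff for a in alpha_samples):
        return Opacity.TRANSPARENT
    return Opacity.UNKNOWN


def bake_opacity_map(micro_triangles, cutoff=0.5):
    """Classify every micro-triangle of a subdivided triangle."""
    return [classify_micro_triangle(samples, cutoff) for samples in micro_triangles]


def fraction_needing_alpha_test(opacity_map):
    """Share of micro-triangles that still require shader-based alpha testing."""
    if not opacity_map:
        return 0.0
    unknown = sum(1 for state in opacity_map if state is Opacity.UNKNOWN)
    return unknown / len(opacity_map)


# Hypothetical leaf quad split into four micro-triangles, three samples each.
leaf = [
    [1.0, 0.9, 1.0],  # fully inside the leaf
    [0.0, 0.1, 0.0],  # fully outside
    [0.8, 0.2, 0.6],  # straddles the leaf edge
    [0.0, 0.0, 0.0],  # fully outside
]
omm = bake_opacity_map(leaf)
print([state.name for state in omm])
print(fraction_needing_alpha_test(omm))  # 0.25
```

In this toy case, three of the four micro-triangles are resolved from the baked map alone, which is where the performance benefit in alpha-heavy scenes comes from.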

To help you optimize all this new path tracing and AI rendering technology, an update to Nsight Systems is available now. This release brings support for profiling OMM in Vulkan applications, enabling you to intercept malfunctioning OMM functions. An upcoming Nsight Graphics update will give you the ability to inspect OMM usage and verify its performance gain.

NVIDIA Nsight Developer Tools have for years provided industry-leading performance insights and debugging guidance that help ensure optimal path-tracing integration. Nsight Graphics dissects GPU activity: for example, it exposes ray-generation shader metrics that root out stalls, and it lets you inspect acceleration structures to optimize for ground-truth lighting.

Recently, Nsight Graphics was used to improve path tracing in the upcoming Cyberpunk 2077 Ray Tracing: Overdrive mode by resolving a shader inefficiency. Watch the demo video to learn more.

Caustics

Lastly, NVIDIA is making it easier for all Unreal Engine developers to start their path-tracing journey with a set of new ray-tracing features in the NVIDIA Caustics branch of Unreal Engine 5.

Caustics are an optical phenomenon that exists all around us. Light is invisible to the naked eye until it is reflected or refracted by a curved surface or object, such as glass or water, which concentrates it into a bright, curved region of light.

This technology is available now through the UE 5.1 Caustics branch and makes it easier to leverage caustic effects around metallic and translucent meshes and water surfaces. For more information about how to get access, see Accessing Unreal Engine source code on GitHub.

Ray-traced depth of field is a standout feature of this branch. In traditional rasterization workflows, it is challenging to calculate camera depth of field accurately for translucent objects. With the Caustics branch, such camera effects are achievable.

Figure 4 shows a traditional rasterization pipeline camera depth of field with translucency challenges.

Figure 5 shows a ray-traced camera depth of field addressing translucency challenges.

In Figures 4 and 5, you can see the difference in detail between traditional rasterized and ray-traced depth of field.

Next steps

For more information about other features introduced in this Caustics branch, see the Unreal Engine overview page.

For more information about free resources to re-create fully path-traced and AI-driven virtual worlds, see the NVIDIA Game Development resources page.

Check out the NVIDIA GTC 2023 game development sessions.