Imagine a future where ultra-high-fidelity simulation and training applications are deployed over any network topology from a centralized, secure cloud or on-premises infrastructure. Imagine that you can stream graphical training content from the datacenter to remote end devices, ranging from a single flat screen or multiple synchronized displays to AR/VR/MR head-mounted displays. This datacenter architecture standardizes cloud orchestration, scales on demand, and keeps infrastructure investments productive around the clock.

Centralizing content and its distribution in the cloud also brings within reach the massive compute capacity that next-generation, AI-based, high-fidelity training content requires. ISVs and content creators can tap into virtually limitless computation power to accelerate innovation and build a truly physically accurate digital twin collaboration platform.

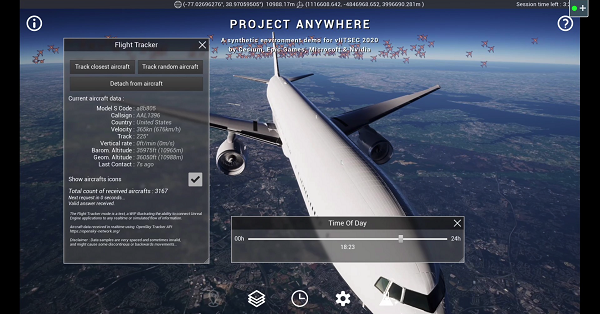

Project Anywhere is a demonstration of these ambitions. It is a real-time, cloud-based, streaming simulation and training demo:

- Created by Epic Games using the Unreal Engine authoring platform and high-resolution terrain geometry and texture tiles from Cesium.

- Hosted on Microsoft Azure and running on powerful NVIDIA V100 GPUs.

- Deployed with NVIDIA RTX Virtual Workstation (vWS) software, with NVENC handling hardware video encoding.

- Accessible through any web browser and through Microsoft HoloLens 2, streamed wirelessly from Azure with NVIDIA CloudXR streaming software.

Unreal Engine with Cesium for simulation and training

Cesium, the platform for 3D geospatial, makes it possible to stream a full-scale, high-fidelity WGS84 globe with space-to-ground accuracy into Unreal Engine as 3D Tiles, unlocking the rich potential of real-world 3D location data for simulated environments. The combination of Unreal Engine’s stunning visual quality and high performance with Cesium’s ability to stream real-world data captured by satellites and drones will drive new innovations in simulation and training.
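Cesium for Unreal handles georeferencing internally, but it helps to see what streaming a "WGS84 globe" means in practice. 3D Tiles content is commonly positioned in Earth-centered, Earth-fixed (ECEF) Cartesian coordinates, so geographic positions must be mapped onto the WGS84 ellipsoid. The following is a minimal sketch of the standard geodetic-to-ECEF conversion, not Cesium's API; the function name `geodetic_to_ecef` is hypothetical, and the height is assumed to be ellipsoidal height rather than height above mean sea level.

```python
import math

# WGS84 ellipsoid constants (as defined by the WGS84 standard)
WGS84_A = 6378137.0                   # semi-major axis, metres
WGS84_F = 1.0 / 298.257223563         # flattening
WGS84_E2 = WGS84_F * (2.0 - WGS84_F)  # first eccentricity squared


def geodetic_to_ecef(lat_deg: float, lon_deg: float, height_m: float) -> tuple[float, float, float]:
    """Convert WGS84 latitude/longitude (degrees) and ellipsoidal height (metres) to ECEF metres.

    Hypothetical helper for illustration; assumes height is measured above the ellipsoid.
    """
    lat = math.radians(lat_deg)
    lon = math.radians(lon_deg)
    sin_lat = math.sin(lat)
    # Prime-vertical radius of curvature at this latitude
    n = WGS84_A / math.sqrt(1.0 - WGS84_E2 * sin_lat * sin_lat)
    x = (n + height_m) * math.cos(lat) * math.cos(lon)
    y = (n + height_m) * math.cos(lat) * math.sin(lon)
    z = (n * (1.0 - WGS84_E2) + height_m) * sin_lat
    return x, y, z


if __name__ == "__main__":
    # Equator / prime meridian at zero height: one semi-major axis along +X.
    print(geodetic_to_ecef(0.0, 0.0, 0.0))
    # North Pole at zero height: Z equals the polar (semi-minor) radius.
    print(geodetic_to_ecef(90.0, 0.0, 0.0))
```

At latitude 0, longitude 0, and zero height, the result lies 6,378,137 m along the +X axis; at the North Pole, Z equals the polar radius of about 6,356,752 m, reflecting the ellipsoid's flattening.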

IT challenges in simulation and training

Traditionally, simulation and training solutions were dependent on consumer-grade computers physically attached to every trainer. From the IT and ROI perspectives, this 1:1 trainer-to-computer approach was highly inefficient and problematic. The challenges with individual computers attached to every trainer include the following issues:

- Multiple points of failure and a point of vulnerability at every seat: Problems with individual hardware components, operating system software, application software, or training content files can bring the whole system down.

- IT management chaos: It is extremely challenging for IT to manage, maintain, and update individual computers, especially when they are not always connected to the network.

- Considerable security risks: Because training content is stored locally, every hard drive must be collected or destroyed in the case of emergencies in the field.

- Lack of future-readiness: Consumer-grade computers typically do not have the computational capacity required for next-generation, AI-driven software solutions.

- Low ROI: Consumer-grade computers are generally outdated as soon as they are deployed, and the units are single-purpose, meaning that they cannot be used for anything else when they are idle.

Hosted solution: Azure and CloudXR

By deploying Unreal Engine’s Pixel Streaming capability in Azure, you can stream 3D interactive experiences to any device with a web browser. The cloud’s hyperscale capacity and range of GPU server configurations can power complex applications within a virtual network.

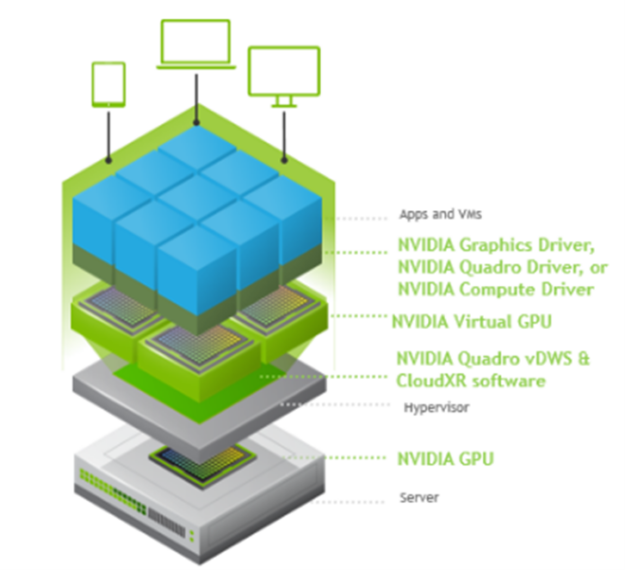

On-premises solution: EGX Server and CloudXR

NVIDIA EGX Server is a highly flexible reference design that combines NVIDIA datacenter GPUs with NVIDIA vWS software running on OEM server hardware. CloudXR is low-latency streaming software that can deliver graphical training content over LAN, WAN, or 5G networks to any use context, from a single flat screen, to multiple specialized displays, to VR/AR.

The EGX Server and CloudXR solution works by computing graphics-rich training content in the datacenter and dispatching the resulting pixels to display devices anywhere in the world, over any network. The streamed pixels carry only rendered imagery, not the underlying models, terrain, or training data, so confidential information remains secure in the datacenter. Low-end, low-cost computing devices connected to monitors and head-mounted displays (HMDs) receive the pixel streams, store no data locally, and can easily be replaced if they fail.
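A quick calculation shows why hardware video encoding is central to streaming pixels instead of data. The sketch below computes the uncompressed bitrate of a 1080p stream at 60 frames per second with 24 bits per pixel; these stream parameters and the 20 Mbit/s link rate are illustrative assumptions, not figures from Project Anywhere, and `raw_bitrate_bps` is a hypothetical helper.

```python
def raw_bitrate_bps(width: int, height: int, fps: int, bits_per_pixel: int = 24) -> int:
    """Uncompressed video bitrate in bits per second (hypothetical helper)."""
    return width * height * bits_per_pixel * fps


if __name__ == "__main__":
    raw = raw_bitrate_bps(1920, 1080, 60)
    print(f"1080p60 raw: {raw / 1e9:.2f} Gbit/s")
    # Assumed, illustrative bitrate for an encoded stream over a WAN or 5G link.
    target_bps = 20_000_000
    print(f"Compression ratio needed for 20 Mbit/s: {raw / target_bps:.0f}:1")
```

Running it prints about 2.99 Gbit/s for the raw stream and a required compression ratio of about 149:1, which is the kind of reduction a hardware encoder must deliver in real time for every frame.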

All critical hardware and software updates occur in the datacenter, not at the endpoints, and both the datacenter hardware and the endpoint hardware can serve multiple software solutions simultaneously. Moving compute from consumer PCs at the endpoints into the datacenter improves IT efficiency, security, redundancy, and ROI.

By investing in EGX Server enterprise-class hardware, your organization gains the flexibility to expand datacenter capacity and host a multitude of training applications on a standardized datacenter platform, rather than on single-purpose PCs. Workloads such as HPC, deep learning (DL), and virtual workstations can all share this centralized compute capacity.

The NVIDIA EGX Server and CloudXR solution can stream graphical training content to remote participants anywhere, in multiple use contexts, including:

- Single screen (access from a web browser)

- Multiple synchronized displays

- Hybrid reality: a VR headset physically mapped to room/trainer controls and props

- XR: AR, VR, and mixed reality use cases

NVIDIA simulation and training SDKs

Perhaps one of the most exciting developments in computer graphics today is the impact of deep learning. In the simulation and training industry, content creation is often one of the most expensive and time-consuming activities. Deep learning can increase the value of existing assets through AI-based techniques such as upscaling, material generation, and texture multiplication. NVIDIA offers a range of SDKs that accelerate the major deep learning frameworks, making these results practical.

With NVIDIA RTX GPU ray tracing coupled with AI, you can now generate ultra-high-fidelity imagery in real time. NVIDIA RTX technology and the OptiX SDK enable ISVs to perform ray tracing on the GPU and use AI-based de-noising to cut computation time by orders of magnitude. NVIDIA CUDA, CUDA-X libraries, and SDKs can also bring GPU acceleration to non-graphics tasks, such as computer-generated forces/semi-automated forces (CGF/SAF), mission functions, vehicle dynamics, and audio and sensor processing.
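The payoff from de-noising follows from how ray-traced images converge. Each pixel is a Monte Carlo estimate whose noise (standard deviation) falls only as σ/√N with N samples per pixel, so halving the noise costs four times as many rays. The toy model below averages uniform random "radiance" samples instead of tracing real rays and does not use OptiX; it simply measures that scaling empirically, and the helper names are hypothetical.

```python
import random
import statistics


def estimate_pixel(samples: int, rng: random.Random) -> float:
    """Toy Monte Carlo pixel: average `samples` uniform(0, 1) radiance values (true mean 0.5)."""
    return sum(rng.random() for _ in range(samples)) / samples


def noise_at(samples: int, trials: int = 2000, seed: int = 1) -> float:
    """Standard deviation of the pixel estimate across many independent 'renders'."""
    rng = random.Random(seed)
    estimates = [estimate_pixel(samples, rng) for _ in range(trials)]
    return statistics.pstdev(estimates)


if __name__ == "__main__":
    for n in (1, 4, 16, 64):
        # Theory for uniform(0, 1): sigma = 1/sqrt(12), so noise is about 0.2887 / sqrt(n).
        print(f"{n:3d} samples per pixel -> noise {noise_at(n):.4f}")
```

Each fourfold increase in samples should roughly halve the measured noise. A denoiser sidesteps this slow curve by reconstructing a clean image from only a few samples per pixel.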

NVIDIA modeling, simulation, and training SDKs include software libraries that accelerate AI and graphics content creation for simulation and training use cases, spanning compute, image generation, display, and immersive interaction. Here are some examples of how NVIDIA SDKs can help create simulation and training solutions:

- Style transfer: Synthesizes imagery, like generating realistic terrain imagery from vectorized map data.

- Super-resolution: As display resolution and refresh rates increase, content fidelity must keep pace. NVIDIA super-resolution can remaster existing content at higher resolution.

- Feature extraction: Vectorizing features from imagery has traditionally been a manual process. DL can segment these features automatically.

- In-painting: DL can segment objects, such as cars, and create a mask. In-painting then fills the masked areas with appropriate content. DL can also remove time-dependent artifacts.

- Material synthesis: Realistic rendering depends on physically based materials. With material synthesis, a material can be generated from two images of a surface.

- De-noising: Ray tracing makes it possible to generate far more realistic scenes. To make it practical in real time, de-noising can reduce the required computation by orders of magnitude.

- Warp and blend: Warp and Blend are interfaces for warping (image geometry corrections) and blending (intensity and black level adjustment) a single display output or multiple display outputs.

- NVENC: NVIDIA GPUs contain dedicated hardware video encoders (NVENC) and decoders (NVDEC) that fully accelerate encoding and decoding for several popular codecs, freeing the CPU for other operations.
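The blending half of warp and blend can be illustrated with two side-by-side projectors whose images overlap. Each projector's pixel values are scaled across the overlap so that the emitted light sums to a constant. This sketch assumes a simple power-law display gamma of 2.2 and a linear ramp in light space; it ignores warping and black-level compensation, does not use NVIDIA's Warp and Blend interfaces, and `edge_blend_masks` is a hypothetical name.

```python
def edge_blend_masks(overlap_px: int, gamma: float = 2.2) -> tuple[list[float], list[float]]:
    """Return (left, right) pixel-value multipliers for each column of a projector overlap.

    Hypothetical helper: the weights form a linear ramp in linear light and are then
    pre-compensated for an assumed power-law display gamma.
    """
    left, right = [], []
    for x in range(overlap_px):
        # Fraction of the way across the overlap, sampled at pixel centres.
        t = (x + 0.5) / overlap_px
        w_left = 1.0 - t  # linear-light weight for the left projector
        w_right = t       # linear-light weight for the right projector
        # Invert the display gamma so the weights hold in emitted light, not pixel values.
        left.append(w_left ** (1.0 / gamma))
        right.append(w_right ** (1.0 / gamma))
    return left, right


if __name__ == "__main__":
    left, right = edge_blend_masks(8)
    for l, r in zip(left, right):
        print(f"left {l:.3f}  right {r:.3f}")
```

Raising each multiplier to the gamma converts it back to linear light, where the left and right contributions add to 1.0 at every column. Applying the same ramp directly to pixel values, without gamma compensation, would leave a visibly darker band in the overlap.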

Conclusion

The future of simulation and training, with AI-generated, high-fidelity training solutions distributed over WAN and 5G networks, depends on moving compute from the training endpoints into centralized infrastructure. It also depends on enriching ISV solutions with the power of GPU compute, GPU ray tracing, and AI.

NVIDIA offers EGX Server with CloudXR and simulation and training SDKs to help customers and partners achieve their goals. Project Anywhere is an excellent implementation of these technologies – the first of many to come. For more information, see Project Anywhere.