Get Started With Public Sector Use Cases

Blueprint

Autonomous Orchestration of Agentic Workflows

Federal agencies are automating the extraction and synthesis of insights from multimodal enterprise data to deliver real-time situational awareness and faster, more informed decision-making. Autonomous workflows boost productivity, provide critical information advantages, and reduce cognitive load.

Blueprint

Customized and Streamlined Public Service Delivery

Organizations are using AI-powered chatbots, virtual assistants, and digital humans to deliver personalized government information and support services to citizens. Automated digital workflows improve efficiency and provide more responsive, tailored engagement with constituents.

Blueprint

Agentic AI Automation in Public Services

Generative AI is being used to automate administrative tasks, summarize documents, and improve internal communications, accelerating decision-making and streamlining service delivery. AI tools enable agencies to personalize content and citizen interactions, resulting in more engaging and responsive public services.

Blueprint

Vulnerability Analysis for Container Security

Generative AI can strengthen vulnerability defense while reducing the load on security teams. Using NVIDIA NIM™ microservices and the Morpheus cybersecurity AI SDK, the NIM Agent Blueprint accelerates CVE analysis at enterprise scale, cutting assessment time from days to seconds.

Blueprint

Safety for Agentic AI

As the number of connected users and devices grows, organizations are producing more data than they can manage, which increases cybersecurity risk. Learn how to improve the safety, security, and privacy of AI systems at the build, deploy, and run stages.

Training

Building AI-Based Cybersecurity Pipelines

In this course, you’ll learn to build Morpheus pipelines that process and perform AI-based inference on massive amounts of data for real-time cybersecurity use cases. Explore how to use several AI models with a variety of data input types for tasks such as sensitive information detection, anomalous behavior profiling, and digital fingerprinting.

Blueprint

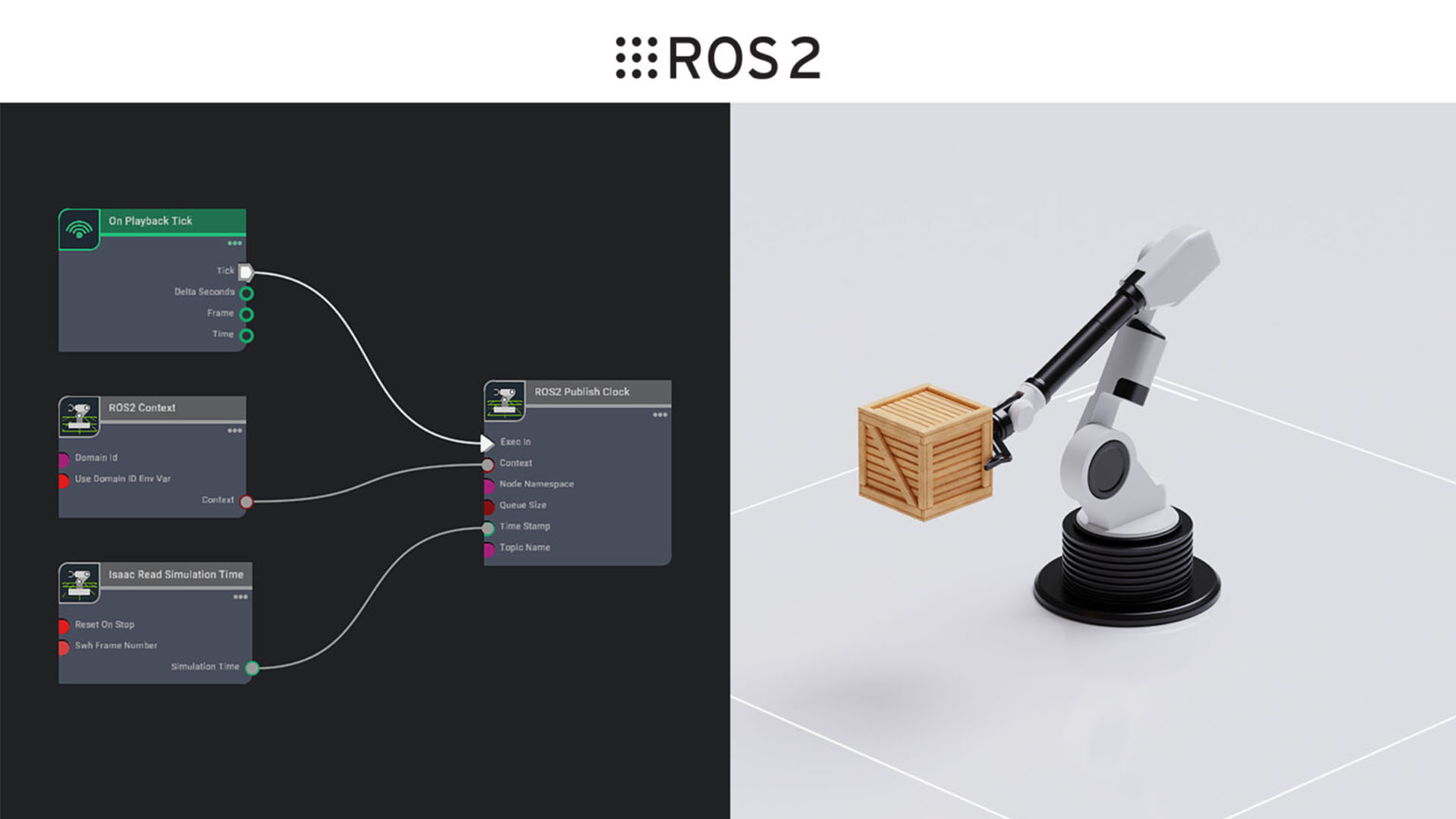

Synthetic Manipulation Motion Generation for Robotics

Autonomous vehicles and robotics are enhancing operations, improving safety, and increasing efficiency in complex and changing environments. By using simulation-driven development and edge AI processing, agencies can streamline logistics, manufacturing, and service delivery with minimal risk and greater adaptability.

Blueprint

Test Multi-Robot Fleets for Industrial Automation

Humanoid robots are streamlining logistics and manufacturing by automating tasks like material transport, inventory management, and assembly. These robots deliver real-time data, adapt to changing environments, and improve safety and efficiency, while reducing costs and workforce strain.

Training

Synthetic Data Generation for Perception Model Training in Isaac Sim

In this course, you’ll learn how to train and deploy a perception model using synthetic data generation (SDG) for dynamic robotic tasks. Learn how to analyze the role of perception models, use simulation for SDG, apply domain randomization techniques, and evaluate the effectiveness of a trained model.

Blueprint

Build Digital Twins for AI Factory Design and Operations

Virtual factory integration enables the development of OpenUSD-based tools and data pipelines that speed up operations and create new digital manufacturing opportunities. This includes layout planning, process simulation, robotics, and monitoring, all enhanced by AI for tasks such as multi-camera tracking.

Blueprint

Enhance Predictive Maintenance

Accelerated data processing and efficient ETL workflows enable predictive maintenance, leading to faster, smarter decisions and lower costs. Optical inspection of public infrastructure is critical to accelerating time to insight and improving the assessment of maintenance needs.

Training

Extend Omniverse Kit Applications for Building Digital Twins

In this hands-on course, you’ll learn how to develop OpenUSD-based digital twin applications on the Omniverse™ platform, focusing on data aggregation, interactivity, and physics simulation.

Blueprint

Visualization and Simulation of Geospatial Environments

GPU-accelerated pipelines in the cloud rapidly process massive satellite and sensor data, enabling faster, more informed decisions for geospatial intelligence. These advanced AI and computer vision workflows enhance visualization and simulation for digital twins, autonomous systems, sensor processing, and more.

Blueprint

Air Traffic Management Systems Accelerated Signal Processing

Accelerated radar and signal processing enables real-time analysis in air traffic management. These enhanced signal processing frameworks give controllers the timely, detailed insights they need to manage complex and ever-increasing traffic volumes.

Training

Disaster Risk Monitoring Using Satellite Imagery

Learn how to build and deploy a deep learning model to automate the detection of flood events using satellite imagery. This workflow can be applied to lower the cost, improve the efficiency, and significantly enhance the effectiveness of various natural disaster management use cases.