An AI model card is a document that details how a machine learning (ML) model works. Model cards provide detailed information about the ML model’s metadata, including the datasets it is based on, the performance measures it was evaluated on, and the deep learning training methodology itself. This post walks you through the current practice for AI model cards and how NVIDIA is advancing Model Card++, the enhanced AI model card.

In their 2019 paper, Model Cards for Model Reporting, a group of data scientists including Margaret Mitchell, Timnit Gebru, Parker Barnes, and Lucy Vasserman sought to create a documentation standard for AI models. Their primary motivation was to promote transparency and accountability in the AI model development process by disclosing essential information about an AI model.

This information includes who developed the model, intended use cases and out-of-scope applications, expected users, how the model performs with different demographic groups, information about the data used to train and verify the model, limitations, and ethical considerations.

Until the development of the first AI model card, little information was shared about a particular AI model to help determine whether the model was suitable for a particular organization’s purpose.

This becomes problematic if the output of a model could have an adverse impact on a particular group of people. For example, the 2019 university-led study, Discrimination through Optimization: How Facebook’s Ad Delivery Can Lead to Skewed Outcomes, revealed that algorithms for delivering ads on social media resulted in discriminatory ad delivery despite the use of neutral parameters for targeting the ads.

Why are AI model cards important?

AI model cards play a critical role in AI transparency. They give developers, downstream users, and beneficiaries a clear understanding of an AI model’s capabilities in a concise format.

Model cards are a best practice, encouraging developers to engage with the people who will ultimately be impacted by the model’s output. They are built to communicate to nontechnical stakeholders, customers using the software, and even students. In addition, model cards educate policymakers who are drafting regulations and legislation governing AI models and systems.

While model cards are designed to encourage model transparency and trustworthiness, they are also used by stakeholders to improve developer understanding, evaluate model fitness for use, and standardize decision making processes.

Model cards are organized according to a schema and can communicate a common software quality expectation. They concisely report information on different factors like demographics, environmental conditions, quantitative evaluation metrics, and, where provided, ethical considerations.

Model cards can also record model version, type, date, license restrictions, information about the publishing organization, and other qualitative information. Model cards are designed to educate and allow an informed comparison of measures and benchmarks, enabling developers to compare their results to those of similar models, and even pave the way for innovation. Figure 1 shows the NVIDIA Nemotron Nano 9b v2 model card.
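To make the idea of a schema concrete, here is a minimal sketch of a model card record in Python. The class and field names are hypothetical, chosen to mirror the categories listed above; they are not the NVIDIA template or any published standard, and the values are placeholders.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict


@dataclass
class ModelCard:
    """Hypothetical minimal model card; field names are illustrative only."""

    name: str
    version: str
    model_type: str
    release_date: str  # ISO 8601 date string
    license: str
    publisher: str
    intended_use: str
    out_of_scope_use: str = ""
    metrics: Dict[str, float] = field(default_factory=dict)  # metric name -> value
    ethical_considerations: str = ""

    def to_json(self) -> str:
        """Serialize the card so it can be stored next to the model."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Placeholder values, not a real model.
card = ModelCard(
    name="example-classifier",
    version="1.0",
    model_type="image classification",
    release_date="2025-01-01",
    license="example-license",
    publisher="Example Org",
    intended_use="Demonstration only",
    metrics={"accuracy": 0.9},
)
print(card.to_json())
```

Keeping every card in one fixed structure like this is what makes side-by-side comparison of models possible.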

Model cards are like open-source fact sheets. Unless you are the developer of the model, you would likely not know much about it without a model card. Model cards provide the most comprehensive understanding of a model’s details and the considerations individuals should take into account for its application.

For instance, a smartphone might have a face detection system that allows the user to unlock it based on recognition. Without model cards, model developers might not realize how a model will behave until it is deployed. This is what happened when Dr. Joy Buolamwini tried to use a face detection system as part of her graduate work at MIT: the system did not detect her face until she put on a white mask.

Why is AI model card accessibility important?

AI model cards should not just be built for developers. Companies should also build model cards that are accessible to nontechnical individuals and technical experts alike.

Model cards are not restricted to a given industry or domain. They can be used for computer vision, speech, recommender systems, and other AI workflows. In addition to their active use in higher education, research, and high-performance computing, model cards have utility across multiple industries, including automotive, healthcare, and robotics. Model cards can:

- Teach students and help them understand real-world use cases

- Inform policymakers and clarify intended use for people who are not model developers

- Educate those interested in seeking the benefits of AI

Mobilizing AI through model cards is a decisive and transparent step that companies can take toward the advancement of trustworthy AI.

Improving and enhancing model cards

We conducted market research to inform the improvements to existing model cards. While 90% of respondents in the developer sample agreed that model cards are important and 70% would recommend them as is, there is room for improvement to drive their adoption, use, and impact.

Based on our research, existing model cards should be enhanced in two primary areas: accessibility and content quality. Model card users need model cards to be easily accessible and understandable.

Accessibility

Discovery was noted as one area of improvement. This pertains to the idea that AI developers should be able to find and then promote model cards alongside their work. This is true of models introduced in research papers as well as models deployed for commercial use.

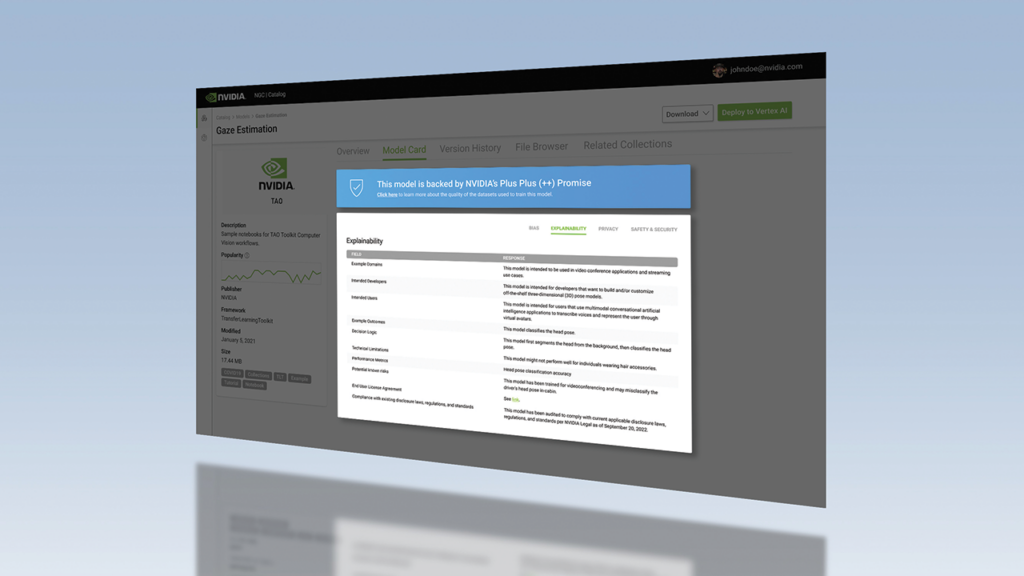

Model cards also need to be located where interested individuals can reference them. One of the ways NVIDIA promotes model cards is through NVIDIA and partner platforms, where models and model cards are located side by side in the same repository.

Content quality

After a model card has been located, the next challenge for the user is understanding the information contained in it. This is particularly critical in the model evaluation stage before selection. Not understanding the information contained in the model card leads to the same outcome as not knowing the information exists: model card users cannot make informed decisions.

To address this, NVIDIA encourages using a consistent organizational structure, simple format, and clear language for model cards. Adding filterable and searchable fields is also recommended. When individuals can find the information contained in model cards, they are more likely to understand the software. According to our research, respondents relied on the information contained in the model card when it was easily accessible and understandable.
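As a rough illustration of what filterable and searchable fields allow, the sketch below filters and searches a small in-memory catalog of model card entries. The catalog, field names, and helper functions are all hypothetical placeholders; a real platform would expose this through its own interface.

```python
# Hypothetical catalog entries; names and values are placeholders, not real models.
CARDS = [
    {"name": "model-a", "license": "apache-2.0", "task": "speech", "accuracy": 0.91},
    {"name": "model-b", "license": "proprietary", "task": "vision", "accuracy": 0.88},
    {"name": "model-c", "license": "apache-2.0", "task": "vision", "accuracy": 0.84},
]


def filter_cards(cards, **criteria):
    """Return the cards whose fields equal every given criterion."""
    return [
        card
        for card in cards
        if all(card.get(key) == value for key, value in criteria.items())
    ]


def search_cards(cards, text):
    """Case-insensitive substring search across all string-valued fields."""
    needle = text.lower()
    return [
        card
        for card in cards
        if any(isinstance(v, str) and needle in v.lower() for v in card.values())
    ]


matches = filter_cards(CARDS, license="apache-2.0", task="vision")
print([card["name"] for card in matches])
```

This only works when every card uses the same field names, which is one practical reason for a consistent organizational structure.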

In fact, performance and licensing information were the two most important areas respondents wanted to see in model cards. Figure 2 shows how the Nemotron Nano v2 model card devotes separate sections to performance and licensing.

After performance and licensing information, respondents felt that the section on ethical considerations was the most important category of information to include in model selection criteria. Respondents shared that they wanted more information about the datasets used to train and validate the model—particularly details regarding unwanted bias—as well as information about safety and security.

What is Model Card++?

Model Card++ is the improved model card that NVIDIA developed in 2020. In addition to the typical information provided in the Overview section of a model card, Model Card++ incorporates:

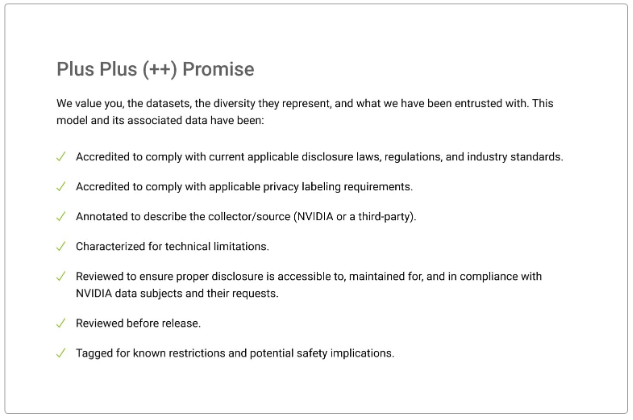

- The ++ Promise, which describes the NVIDIA software development approach to model development.

- Four subsections detailing model-specific information concerning Bias, Explainability, Privacy, and Safety and Security.

Figure 3 shows the ++ Promise, which is embedded in every Model Card++.

The ++ Promise describes the steps NVIDIA takes to build trustworthiness into its work by design. Together, the four subcards outline:

- Steps taken to mitigate unwanted bias

- Provenance of training datasets, including what type of data was collected and how it was collected

- Key disclosures related to privacy and personal data use

- Technical limitations, known risks, and potential safety impacts

- Key performance indicators

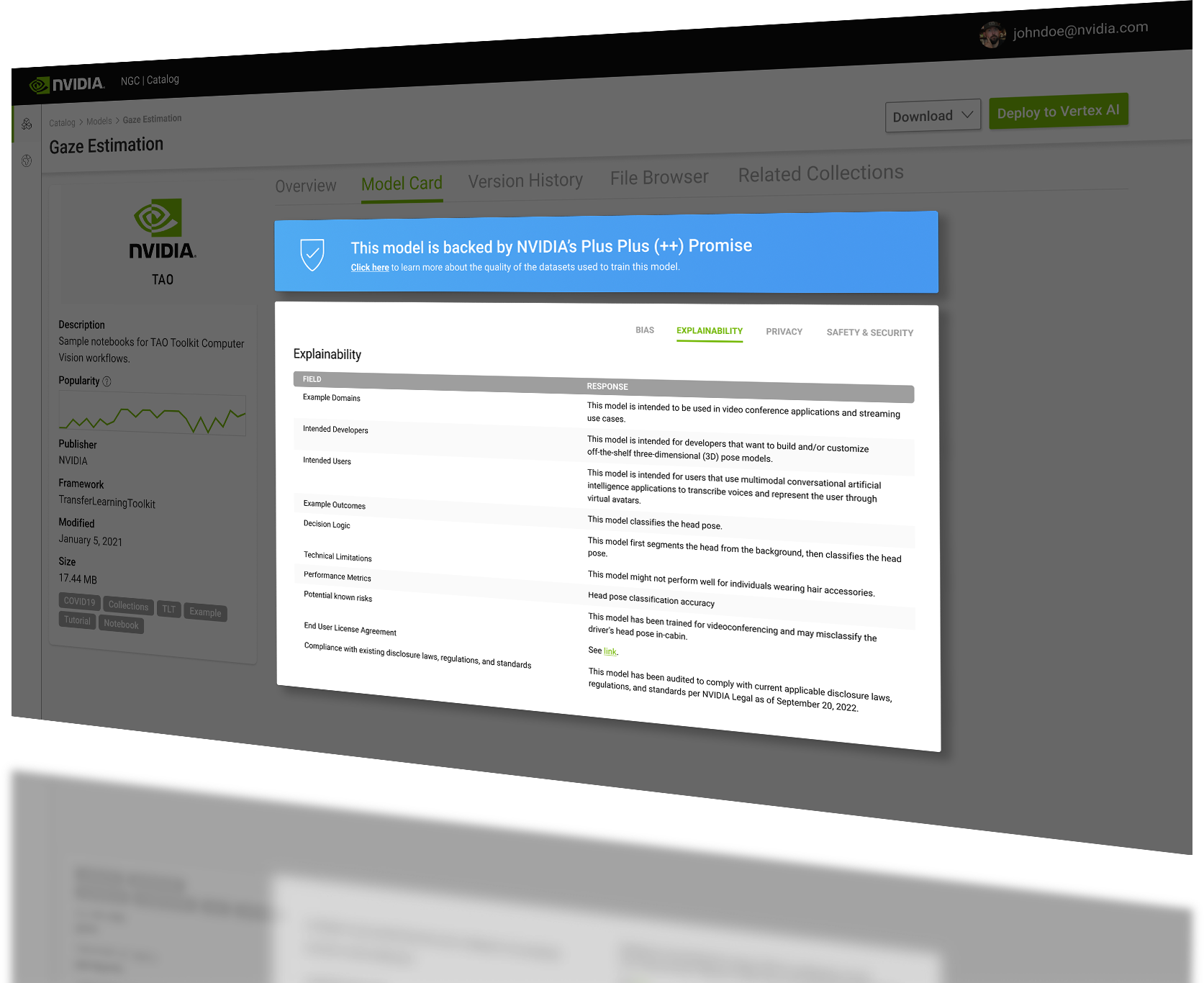

While this is not an exhaustive list, it demonstrates intent by design and a commitment to standards and protections that value individuals, the data, and NVIDIA’s contribution to AI. This applies to every model, across domains and use cases.

Figure 4 shows an example of the Explainability subcard. Each Model Card++ will include a dedicated section of fields and responses for each of the subsections. What is shown in the response section for each field is not meant to represent a real-world model, but to illustrate what will be provided based on current understanding and the latest research.

The Explainability subcard provides information about example domains for an AI model, intended users, decision logic, and compliance review. NVIDIA model cards aim to present AI models using clear, consistent, and concise language.
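One way to keep a subcard consistent is to check that every expected field has a response before publishing. The following is a minimal sketch under that assumption: the four key names are derived from the Explainability description above, but the exact keys and the helper function are hypothetical and not part of the NVIDIA template.

```python
# Keys derived from the subcard description above; the exact names are hypothetical.
REQUIRED_EXPLAINABILITY_FIELDS = (
    "example_domains",
    "intended_users",
    "decision_logic",
    "compliance_review",
)


def missing_fields(subcard, required=REQUIRED_EXPLAINABILITY_FIELDS):
    """Return required fields that are absent, None, or blank strings."""
    missing = []
    for name in required:
        value = subcard.get(name)
        if value is None or (isinstance(value, str) and not value.strip()):
            missing.append(name)
    return missing


# Placeholder draft with one blank response and one field left out entirely.
draft = {
    "example_domains": "Placeholder domain",
    "intended_users": "Placeholder audience",
    "decision_logic": "",
}
print(missing_fields(draft))
```

A check like this could run before a card is published so that blank responses are caught early.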

How was Model Card++ built?

Model Card++ is the result of a disciplined, cross-functional approach in partnership with engineering, product, research, product security, and legal teams. Building on existing NGC model cards, we reviewed model cards and templates from other organizations, including GitHub and Hugging Face, to find out what other information could be provided consistently.

We worked with engineering to pilot what could be consistently provided in addition to information that is currently provided. We discovered that although our model cards have a section for ethical considerations, more could be provided, including measures taken to mitigate unwanted bias. We also found we could describe dataset provenance and traceability, dataset storage, and quality validation.

This highlighted the importance of disclosing key details regarding dataset demographic make-up, performance metrics for different demographic groups, and specific mitigation efforts we have taken to address unwanted bias. We also worked with an algorithmic bias consultant to develop a process for assessing unwanted bias that is compliant with data privacy laws and coupled that with our latest market research.
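Reporting performance for different demographic groups amounts to computing the same metric separately per group and comparing the results. Here is a minimal sketch using accuracy; the function name, group labels, and data are synthetic placeholders, and this is not the assessment process described above.

```python
from collections import defaultdict


def accuracy_by_group(records):
    """Compute accuracy per group from (group, label, prediction) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, label, prediction in records:
        total[group] += 1
        correct[group] += int(label == prediction)
    return {group: correct[group] / total[group] for group in total}


# Synthetic placeholder data, not results from any real model.
records = [
    ("group_a", 1, 1),
    ("group_a", 0, 0),
    ("group_a", 1, 0),
    ("group_a", 1, 1),
    ("group_b", 1, 0),
    ("group_b", 0, 0),
]

scores = accuracy_by_group(records)
# The gap between the best and worst group is one simple disparity signal.
gap = max(scores.values()) - min(scores.values())
print(scores, gap)
```

A single aggregate accuracy would hide the difference between the two groups here, which is why disaggregated metrics belong in the card.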

As we built Model Card++, we also corroborated our work with market research by surveying developers who used our models and those from across the industry. We validated the desired information and structured it with our user design experience team to present it in a clear and organized format. We are excited to introduce Model Card++ and hope to continue leading efforts that encourage inclusive AI for all.

What’s new in Model Card++?

NVIDIA supports the iterative development of model cards, with dedicated processes and tools such as the Model Card Generator to automate and standardize documentation. Whether you’re a developer, data scientist, or someone with minimal technical knowledge, this tool simplifies the process of generating model cards by automating the extraction of relevant information from code.

In addition, NVIDIA iteratively updates its model card documentation in alignment with evolving regulations, including global frameworks such as the EU AI Act. These updates help ensure that NVIDIA documentation meets compliance requirements for transparency, safety, and accountability.

Get started with trustworthy AI

NVIDIA has broadened its AI transparency framework by introducing documentation for additional program classes, including blueprints, systems, datasets, and containers. To check out the free, open-source templates NVIDIA provides to support the development of transparent AI documentation, visit the NVIDIA/Trustworthy-AI GitHub repo. With these expanded resources, NVIDIA is making it easier for customers and partners to develop AI responsibly and advance transparency and accountability across the AI supply chain.

This post was updated in October 2025.