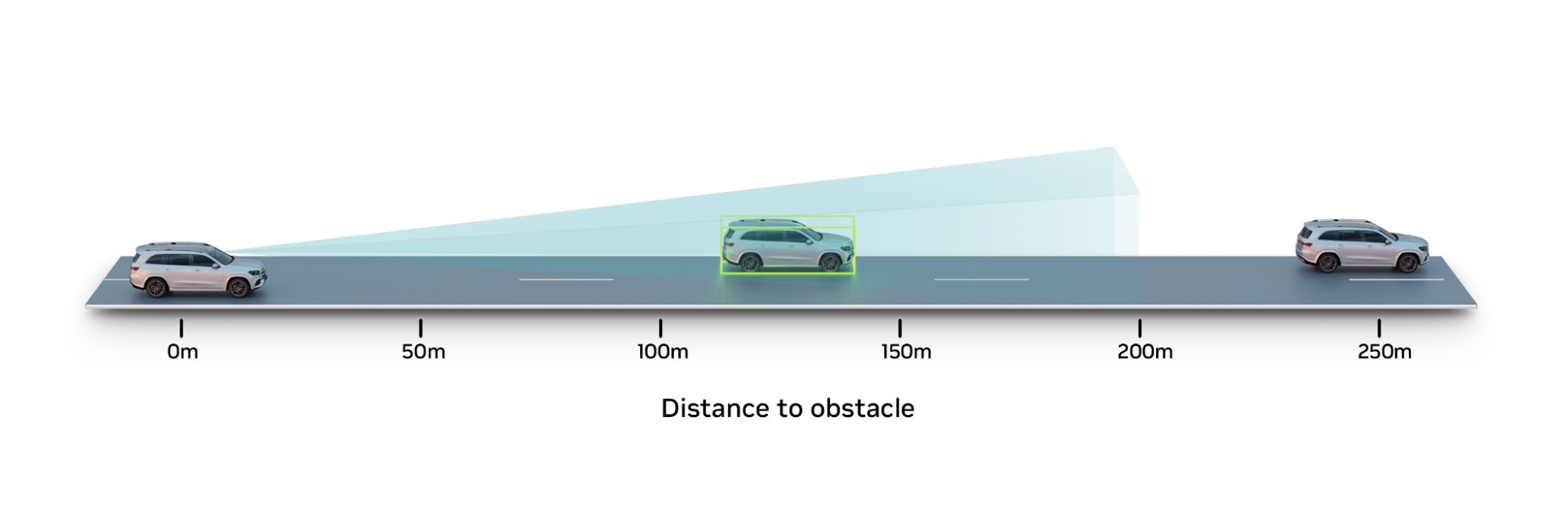

Detecting far-field objects, such as vehicles that are more than 100 m away, is fundamental for automated driving systems to maneuver safely while operating on highways.

In such high-speed environments, every second counts. If the perception range of an autonomous vehicle (AV) can be increased from 100 m to 200 m while traveling at 70 mph, the time available to react to an obstacle at the edge of that range roughly doubles.
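
The reaction-time argument can be checked with a few lines of arithmetic. The sketch below assumes a constant ego speed and a stationary obstacle, and it ignores braking distance; the function name `seconds_to_cover` is illustrative only.

```python
# One mile is exactly 1609.344 m, so this converts mph to m/s.
MPH_TO_MPS = 1609.344 / 3600.0


def seconds_to_cover(distance_m: float, speed_mph: float) -> float:
    """Time in seconds to travel distance_m at a constant speed_mph."""
    if speed_mph <= 0:
        raise ValueError("speed_mph must be positive")
    return distance_m / (speed_mph * MPH_TO_MPS)


if __name__ == "__main__":
    for perception_range_m in (100, 200):
        t = seconds_to_cover(perception_range_m, 70)
        print(f"{perception_range_m} m at 70 mph: {t:.1f} s")
```

At 70 mph (about 31.3 m/s), this gives about 3.2 s for 100 m and about 6.4 s for 200 m.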

However, extending this range is particularly challenging for camera-based perception systems typically deployed in mass-production passenger vehicles. Training camera perception systems for far-field object detection requires collecting large amounts of camera data, as well as ground truth (GT) labels, such as 3D bounding boxes and distance.

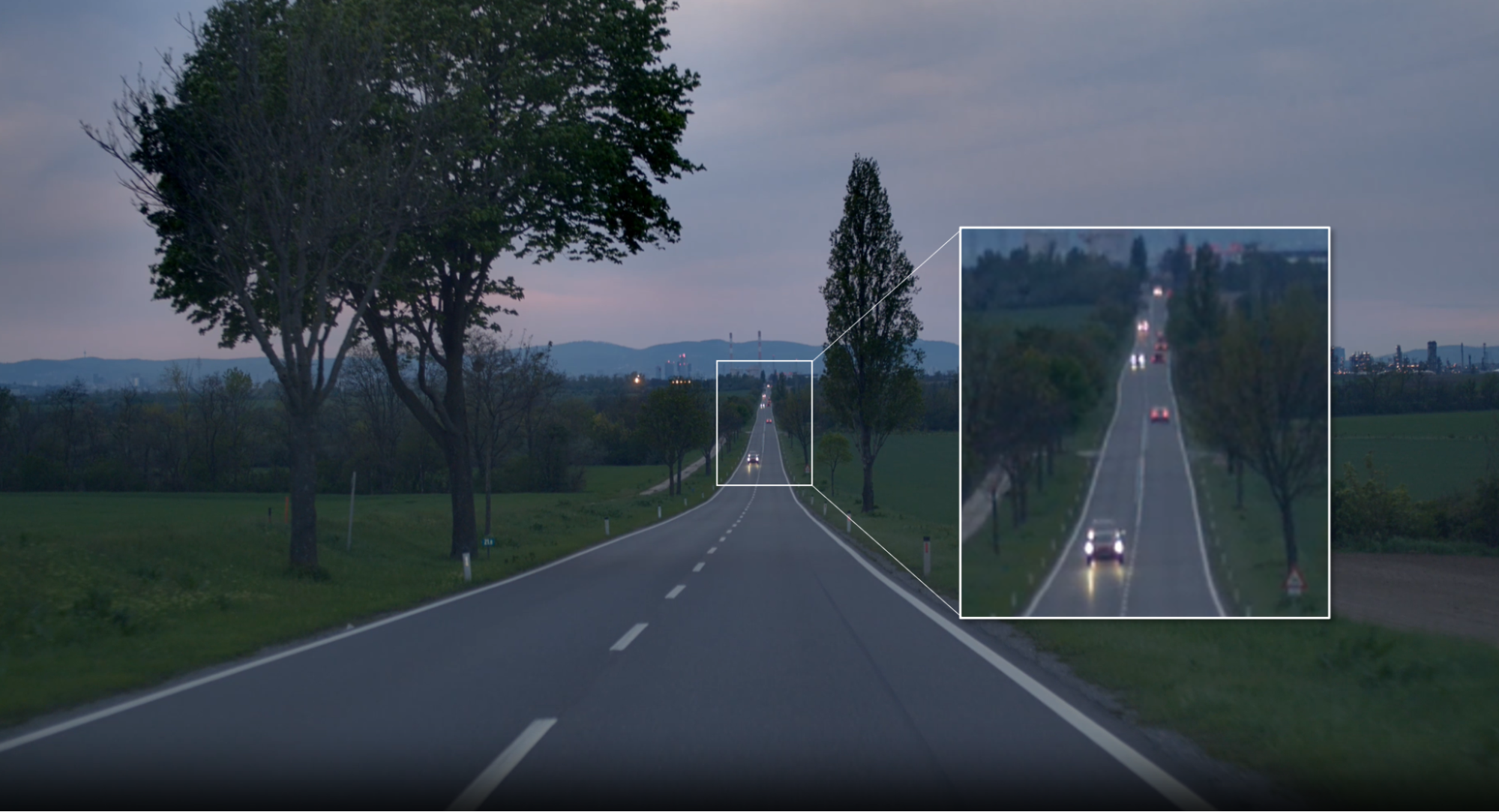

Extracting this GT data becomes more difficult for objects that are beyond 200 m. The farther away an object is, the smaller it appears in the image, eventually spanning just a few pixels. Typically, sensors like lidar are used with aggregation and auto-labeling techniques to extract 3D and distance information, but this data becomes sparse and noisy beyond the lidar’s operational range.
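
To get a feel for how quickly an object shrinks with distance, here is a minimal sketch using an ideal pinhole camera model. The camera parameters (1920 px image width, 120° horizontal field of view) and the 1.8 m car width are assumptions chosen for illustration, not the specifications of any DRIVE camera, and lens distortion is ignored.

```python
import math


def focal_length_px(image_width_px: int, horizontal_fov_deg: float) -> float:
    """Focal length in pixels of an ideal pinhole camera."""
    half_fov_rad = math.radians(horizontal_fov_deg) / 2.0
    return (image_width_px / 2.0) / math.tan(half_fov_rad)


def projected_width_px(object_width_m: float, distance_m: float,
                       image_width_px: int, horizontal_fov_deg: float) -> float:
    """Approximate on-image width of a fronto-parallel object at distance_m."""
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    f_px = focal_length_px(image_width_px, horizontal_fov_deg)
    return f_px * object_width_m / distance_m


if __name__ == "__main__":
    # Hypothetical wide-angle camera and a 1.8 m wide car.
    for distance_m in (50, 100, 200, 400):
        width = projected_width_px(1.8, distance_m, 1920, 120.0)
        print(f"{distance_m:>3} m: {width:.1f} px wide")
```

Under these assumptions, the car is about 5 px wide at 200 m and about 2.5 px wide at 400 m. Projected width is inversely proportional to distance, so doubling the range halves the number of pixels available to the detector.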

The NVIDIA DRIVE AV team needed to address this exact challenge during the course of development. To do so, we generated synthetic GT data for far-field objects in NVIDIA DRIVE Sim, leveraging the capabilities of NVIDIA Omniverse Replicator.

NVIDIA DRIVE Sim is an AV simulator built on Omniverse and includes physically based sensor models that have been thoroughly validated for high-fidelity sensor simulation. For more details, see Validating NVIDIA DRIVE Sim Camera Models.

NVIDIA DRIVE Sim enables querying the location of every object in the simulated scene, including objects placed 400 m or 500 m away from the ego vehicle at any camera resolution, with pixel-level accuracy.

When the vehicle position information is combined with physically based synthetic camera data, it is possible to generate the necessary 3D and distance GT labels for perception.
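
As a rough illustration of how known object positions turn into labels, the sketch below projects an axis-aligned 3D box, given in the camera frame, through an ideal pinhole camera to obtain a distance value and a 2D bounding box. This is not the DRIVE Sim or Omniverse Replicator API. The function names, the camera-frame convention (x right, y down, z forward), the definition of distance as the Euclidean range to the box center, and the intrinsics are all assumptions.

```python
import math
from itertools import product


def box_corners(center, size):
    """Eight corners of an axis-aligned box; size is (x, y, z) extents in meters."""
    cx, cy, cz = center
    sx, sy, sz = size
    return [(cx + dx * sx / 2, cy + dy * sy / 2, cz + dz * sz / 2)
            for dx, dy, dz in product((-1, 1), repeat=3)]


def project(point, f_px, cx_px, cy_px):
    """Pinhole projection of a camera-frame point (x right, y down, z forward)."""
    x, y, z = point
    return (f_px * x / z + cx_px, f_px * y / z + cy_px)


def make_label(center, size, f_px, cx_px, cy_px):
    """Build a distance and 2D-box label from a 3D box in the camera frame."""
    corners = box_corners(center, size)
    if any(z <= 0 for _, _, z in corners):
        raise ValueError("box must lie entirely in front of the camera")
    pixels = [project(c, f_px, cx_px, cy_px) for c in corners]
    us = [u for u, _ in pixels]
    vs = [v for _, v in pixels]
    return {
        "distance_m": math.dist((0.0, 0.0, 0.0), center),
        "bbox_2d": (min(us), min(vs), max(us), max(vs)),
    }


if __name__ == "__main__":
    # Hypothetical car 300 m straight ahead: 1.8 m wide, 1.5 m tall, 4.5 m long.
    label = make_label((0.0, 0.0, 300.0), (1.8, 1.5, 4.5), 1000.0, 960.0, 540.0)
    print(label)
```

Because the simulator knows the box exactly, the label stays precise even when the object covers only a handful of pixels, which is where lidar-derived labels become sparse.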

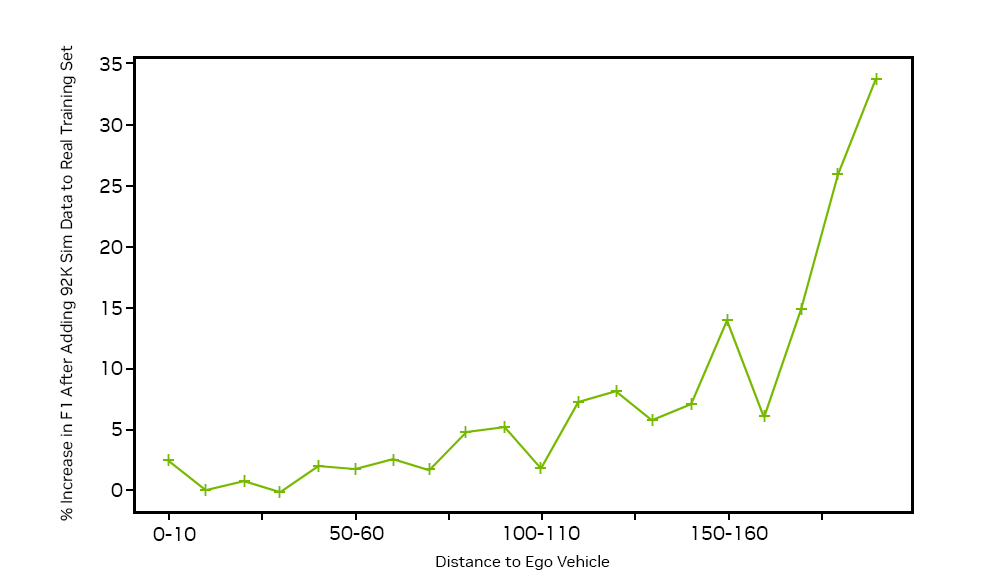

By adding this synthetic GT data to our existing real dataset, we were able to train networks to detect cars at long range, and achieve an F1 score improvement of 33% for cars at 190 m to 200 m.

Synthetic GT data generation for far-field objects

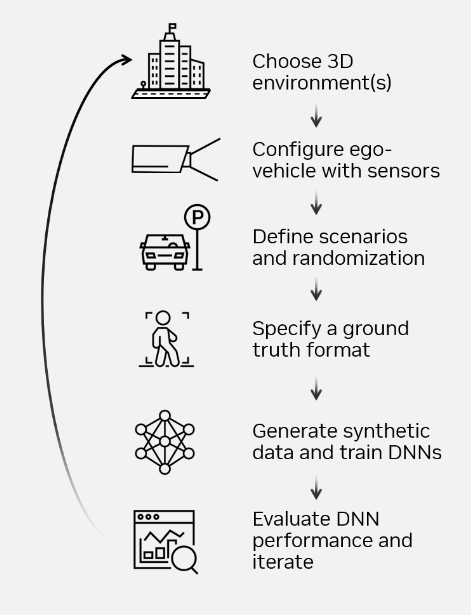

To address the scarcity of accurately labeled far-field data, we aimed to generate a synthetic dataset of nearly 100 K images of objects at long distances, to augment the existing real dataset. Figure 3 presents the process of generating these datasets in NVIDIA DRIVE Sim using Omniverse Replicator, from choosing 3D environments to evaluating deep neural network (DNN) performance.

After selecting 3D environments that address highway use cases, we configured an ego vehicle with the requisite camera sensors.

NVIDIA DRIVE Sim leverages the domain randomization APIs built on the Omniverse Replicator framework to programmatically change the appearance, placement, and motion of 3D assets. By using the ASAM OpenDRIVE map API, we placed vehicles and obstacles at far-field distances of 100 m to 350 m and beyond, in a context-aware manner.
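
The idea of randomized but context-aware placement can be sketched in plain Python. The example below is a stand-in, not the Replicator or OpenDRIVE API: it assumes a straight road with a fixed number of lanes, samples a longitudinal distance in the far-field range, snaps each vehicle to a lane center, and rejects samples that would crowd another vehicle in the same lane. All names and default values (lane width, minimum gap) are hypothetical.

```python
import random
from dataclasses import dataclass


@dataclass
class Placement:
    longitudinal_m: float  # distance ahead of the ego vehicle along the road
    lane_index: int        # 0 is the leftmost lane
    lateral_m: float       # offset of the lane center from the road center


def sample_placements(n, min_dist_m=100.0, max_dist_m=350.0, num_lanes=3,
                      lane_width_m=3.7, min_gap_m=10.0, seed=0, max_tries=10000):
    """Rejection-sample up to n vehicle placements on a straight multi-lane road."""
    rng = random.Random(seed)  # seeded so a dataset can be regenerated
    placements = []
    tries = 0
    while len(placements) < n and tries < max_tries:
        tries += 1
        s = rng.uniform(min_dist_m, max_dist_m)
        lane = rng.randrange(num_lanes)
        # Context check: keep a minimum gap to vehicles already in this lane.
        if any(p.lane_index == lane and abs(p.longitudinal_m - s) < min_gap_m
               for p in placements):
            continue
        lateral = (lane - (num_lanes - 1) / 2) * lane_width_m
        placements.append(Placement(s, lane, lateral))
    return placements


if __name__ == "__main__":
    for p in sample_placements(5, seed=42):
        print(p)
```

In a real map, the lane geometry would come from the OpenDRIVE road network rather than from a straight-road assumption, but the sample-then-validate loop is the same idea.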

The NVIDIA DRIVE Sim action system enables simulation of a variety of challenging cases that introduce occlusions, such as lane changes or close cut-ins. This provides critical data for scenarios that are difficult to encounter in the real world.

In the final step before data generation, we leveraged the GT writers from Omniverse Replicator to generate the requisite labels, including 3D bounding boxes, velocities, semantic labels, and object IDs.

Improving camera perception performance with synthetic camera data

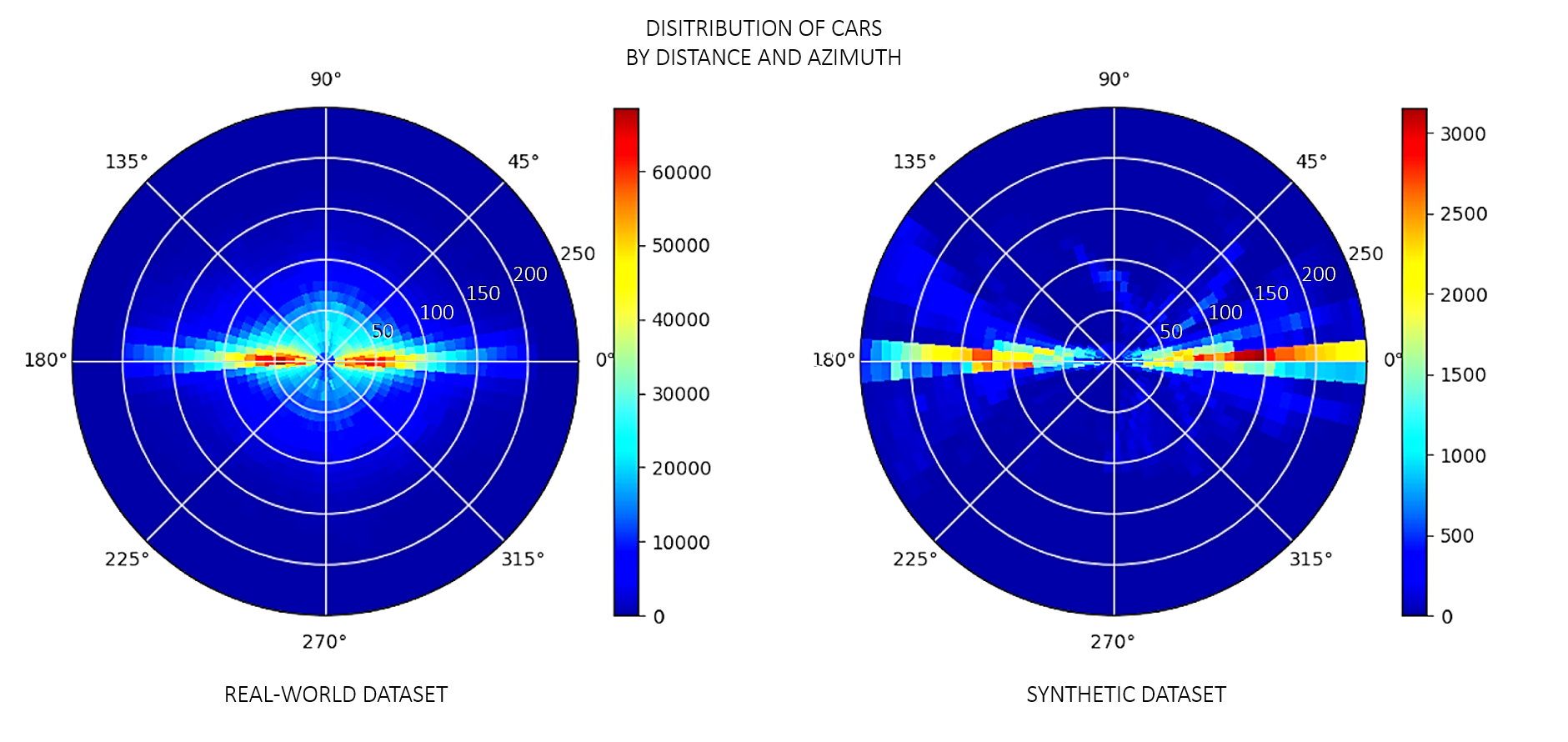

For this use case, the real training dataset consists of more than 1 million images containing GT labels for vehicles in highway scenarios closer than 200 m. The distribution of cars in these real images peaks at less than 100 m from the data collection vehicle, as shown on the left side of Figure 4. For objects at greater distances, GT labels are too sparse to improve perception performance.

In this case, we generated ~92 K synthetic images with ~371 K instances of cars and GT labels focused on a distribution of long-range vehicles placed up to 350 m. The distribution of cars in the synthetic dataset is skewed more toward farther ranges of 150 m and beyond. By adding the ~92 K synthetic images to this real dataset, we introduced the required labeled far-field objects to the training distribution.
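
One simple way to inspect how a synthetic set changes the training distribution is to histogram labeled instances by distance before and after merging. The sketch below is a minimal illustration; the distance lists are made-up toy values, not the actual dataset, and the 50 m bin width is an arbitrary choice.

```python
from collections import Counter


def distance_histogram(distances_m, bin_width_m=50.0):
    """Count instances per distance bin; keys are (bin_start_m, bin_end_m)."""
    counts = Counter()
    for d in distances_m:
        if d < 0:
            raise ValueError("distances must be non-negative")
        counts[int(d // bin_width_m)] += 1
    return {(i * bin_width_m, (i + 1) * bin_width_m): counts[i]
            for i in sorted(counts)}


if __name__ == "__main__":
    # Toy values for illustration only.
    real = [20, 35, 60, 80, 95, 120, 180]
    synthetic = [160, 210, 240, 290, 330]
    print("real only:", distance_histogram(real))
    print("combined: ", distance_histogram(real + synthetic))
```

Bins that are empty or nearly empty in the real-only histogram show where the synthetic instances contribute most.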

After training the perception algorithm on the combined dataset, we tested the network against a real dataset with a distribution of cars up to 200 m. The KPI for perception performance improvement by distance shows up to a 33% improvement in the F1 score (the harmonic mean of precision and recall) for cars between 190 m and 200 m.
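
For reference, the F1 score combines precision and recall, and it can be computed per distance bin from counts of true positives, false positives, and false negatives. The sketch below uses the standard definition. The bin layout and the counts are hypothetical placeholders, not the evaluation data behind the result above.

```python
def f1_score(tp: int, fp: int, fn: int) -> float:
    """F1 = 2 * precision * recall / (precision + recall)."""
    if tp == 0:
        return 0.0  # no correct detections, so F1 is defined as zero here
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def f1_by_distance_bin(counts_by_bin):
    """Map {(start_m, end_m): (tp, fp, fn)} to {(start_m, end_m): F1}."""
    return {bin_range: f1_score(*counts)
            for bin_range, counts in counts_by_bin.items()}


if __name__ == "__main__":
    # Hypothetical detection counts for two distance bins.
    counts = {(180, 190): (8, 2, 2), (190, 200): (6, 2, 4)}
    for bin_range, score in f1_by_distance_bin(counts).items():
        print(bin_range, round(score, 3))
```

Reporting the metric per bin, rather than as a single number over the whole test set, makes it clear where in the range a change in training data has an effect.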

Summary

Synthetic data is driving a major paradigm shift in AV development, unlocking new use cases that were not previously possible. With NVIDIA DRIVE Sim and NVIDIA Omniverse Replicator, you can prototype new sensors, evaluate new ground truth types and AV perception algorithms, and simulate rare and adverse events, all in a virtual proving ground at a fraction of the time and cost it would take in the real world.

The rich set of possibilities synthetic datasets enable for AV perception continues to evolve. To see our workflow in action and learn more, watch the NVIDIA GTC DRIVE Developer Day session, How to Generate Synthetic Data with NVIDIA DRIVE Replicator.