From credit card transactions, social networks, and recommendation systems to transportation networks and protein-protein interactions in biology, graphs are the go-to data structure for modeling and analyzing intricate connections. Graph neural networks (GNNs), with their ability to learn and reason over graph-structured data, have emerged as a game-changer across various domains.

However, uncovering the hidden patterns and valuable insights within these graphs can be challenging, especially in data sampling and end-to-end training of GNNs.

To address this gap, NVIDIA has released accelerated GNN framework containers for DGL and PyG with features such as:

- GPU acceleration for data sampling

- GNN Training and Deployment Tool (GNN Tool)

This post provides an overview of the benefits of NVIDIA accelerated DGL and PyG containers, showcases how customers are using them in production, and provides metrics on the performance.

Introducing the DGL container in the NGC catalog

Deep Graph Library (DGL) is one of the popular open-source libraries available for implementing and training GNNs on top of existing DL frameworks such as PyTorch.

We are excited to announce that DGL is now accelerated with other NVIDIA libraries and is publicly available as a container through the NGC Catalog, the hub for GPU-accelerated AI/ML, HPC applications, SDKs, and tools. The catalog provides faster access to performance-optimized software and simplifies building and deploying AI solutions, helping you bring them to market faster. For more information, see 100s of Pretrained Models for AI, Digital Twins, and HPC in the NGC Catalog (video).

The 23.09 release of the DGL container improves data sampling and training performance for DGL users. The following are the top features of this release.

GPU acceleration for data loader sampling

RAPIDS cuGraph’s sampler can process hundreds of billions of edges in a matter of seconds, and compute samples for thousands of batches at one time for even the world’s largest GNN datasets. The DGL container comes with cuGraph-DGL, an accelerated extension to DGL, which enables users to take advantage of this incredible performance.

Even on midsize datasets (~1B edges), cuGraph data loading performance is at least 2–3x faster than native DGL, based on benchmarks run with eight V100 GPUs. cuGraph-DGL sampling offers better than linear scaling for up to 100B edges, by distributing the graph across multiple nodes and multiple GPUs, also saving memory in the process.

cuGraph can sample 100B edges in only 16 seconds! cuGraph-ops, a proprietary NVIDIA library, provides accelerated GNN operators and models, such as cuGraphSAGE, cuGraphGAT, and cuGraphRGCN, cutting model forward time in half.

GNN Training and Deployment Tool

GNN Tool is a flexible platform for training and deploying GNN models with minimal effort. This tool, built on top of the popular Deep Graph Library (DGL) and PyTorch Geometric (PyG) frameworks, enables you to build end-to-end workflows for rapid GNN experimentation.

It provides a fully modular and configurable workflow that enables fast iteration and experimentation for custom GNN use cases. NVIDIA includes example notebooks in our containers for easy experimentation.

Multi-arch support

The DGL containers published in NGC have both x86 and ARM64 versions to support the new NVIDIA Grace Hopper Superchip. Both versions use the same container tag. When you pull the container from an Arm-based Linux system, you get the ARM64 container.
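Because both images share one tag, the variant you receive depends on the host architecture. The following is a minimal sketch, not part of the container tooling, of how a script might check which variant to expect. The helper name `image_platform` and the lookup table are illustrative assumptions; the platform strings follow the usual multi-arch image naming.

```python
import platform

# Machine strings commonly reported by Linux hosts, mapped to the platform
# names used for multi-arch container images. Illustrative, not exhaustive.
_PLATFORMS = {
    "x86_64": "linux/amd64",
    "amd64": "linux/amd64",
    "aarch64": "linux/arm64",
    "arm64": "linux/arm64",
}


def image_platform(machine=None):
    """Return the image platform expected for a host (hypothetical helper).

    machine: a machine string such as "x86_64"; defaults to the current host.
    """
    machine = (machine or platform.machine()).lower()
    if machine not in _PLATFORMS:
        raise ValueError(f"unrecognized machine type: {machine!r}")
    return _PLATFORMS[machine]


# An Arm-based host such as Grace Hopper reports "aarch64".
print(image_platform("aarch64"))
print(image_platform("x86_64"))
```

Calling `image_platform()` with no argument inspects the machine the script runs on.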

Example of training GNN on Grace Hopper with an ARM64-based DGL container

The Unified Virtual Addressing (UVA) mode in GNN training benefits tremendously from the connection between the NVIDIA Grace CPU and NVIDIA Hopper GPU in Grace Hopper. On Grace Hopper, training the same GraphSAGE model with the ogbn-papers100M dataset takes 1.9 seconds/epoch, which is about 9x faster compared to training on the H100 + Intel CPUs with PCIe connections (Table 1).

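| System | GH200 | H100 + Intel CPU | A100 + AMD (DGX A100) | AMD Genoa (CPU only) |
| --- | --- | --- | --- | --- |
| Training time (seconds/epoch) | 1.9 | 16.92 | 24.8 | 107.11 |

Table 1. Per-epoch training time for GraphSAGE on the ogbn-papers100M dataset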
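The relative speedups implied by Table 1 can be checked with a few lines of arithmetic. This sketch only divides the published seconds-per-epoch figures; the function name `speedup_over` is chosen here for illustration.

```python
# Seconds per epoch, copied from Table 1.
epoch_seconds = {
    "GH200": 1.9,
    "H100 + Intel CPU": 16.92,
    "A100 + AMD (DGX A100)": 24.8,
    "AMD Genoa (CPU only)": 107.11,
}


def speedup_over(baseline, fast="GH200", table=epoch_seconds):
    """Return how many times faster `fast` finishes an epoch than `baseline`."""
    return table[baseline] / table[fast]


for system in epoch_seconds:
    print(f"{system:<24} {speedup_over(system):5.1f}x")
```

The H100 + Intel CPU row works out to roughly 8.9x, which matches the "about 9x" figure quoted above.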

These numbers take advantage of the huge pages on Grace Hopper for the graph and its features. They were benchmarked on a 512-GB Grace Hopper node. The model was run with a batch size of 4096 and a (30,30) fanout (looking at up to 30 neighbors of each node in a two-layer GraphSAGE model). The benchmark ran on DGL version 1.1 with CUDA 12.1.
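To make the fanout setting concrete, here is a toy neighbor sampler in plain Python. It is a simplified sketch for illustration only, not the DGL or cuGraph implementation: the graph is a small dictionary, the function names are made up here, and real samplers add details (such as keeping destination nodes in the next frontier) that are omitted.

```python
import random


def sample_hops(adj, seeds, fanouts, rng=random):
    """Sample up to `fanout` neighbors per frontier node, one hop per layer.

    adj     : dict mapping node -> list of neighbor nodes
    seeds   : nodes in the mini-batch
    fanouts : neighbors to keep at each hop, e.g. (30, 30)
    Returns one list of sampled (src, dst) edges per hop.
    """
    frontier = list(dict.fromkeys(seeds))  # de-duplicate, keep order
    hops = []
    for fanout in fanouts:
        edges = []
        for dst in frontier:
            neighbors = adj.get(dst, [])
            k = min(fanout, len(neighbors))  # "up to" fanout neighbors
            for src in rng.sample(neighbors, k):
                edges.append((src, dst))
        hops.append(edges)
        # The next hop expands from the nodes that were just sampled.
        frontier = list(dict.fromkeys(src for src, _ in edges))
    return hops


def max_sampled_edges(batch_size, fanouts):
    """Upper bound on sampled edges per hop, ignoring duplicate nodes."""
    bounds, frontier = [], batch_size
    for fanout in fanouts:
        frontier *= fanout
        bounds.append(frontier)
    return bounds


# Toy graph with four nodes; a fixed seed keeps the run repeatable.
adj = {0: [1, 2, 3], 1: [0, 2], 2: [0, 1, 3], 3: [0, 2]}
print(sample_hops(adj, seeds=[0], fanouts=(2, 2), rng=random.Random(0)))

# Benchmark setting from the text: batch size 4096, fanout (30, 30).
print(max_sampled_edges(4096, (30, 30)))  # [122880, 3686400]
```

With a batch size of 4096 and a (30,30) fanout, a single mini-batch can touch up to about 3.7 million edges at the second hop, which gives a sense of why sampling throughput matters.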

DGL training performance

One of the challenges in training GNNs is the data loading process. In some cases, such as node classification using GraphSAGE on the ogbn-papers100M dataset, data loading takes more than 90% of the end-to-end training time. DGL v0.8 introduced UVA mode for efficient GPU loading of graph features, and data loading performance has improved since then.
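A quick Amdahl's-law estimate shows why the data loading share matters so much. The sketch below assumes, for illustration, that exactly 90% of an epoch is data loading and that only that part is accelerated; the speedup factors in the loop are hypothetical inputs, not measurements.

```python
def end_to_end_speedup(load_fraction, load_speedup):
    """Estimate overall speedup when only data loading gets faster.

    load_fraction : share of epoch time spent loading data (0.0 to 1.0)
    load_speedup  : factor by which data loading is accelerated (> 0)
    """
    if not 0.0 <= load_fraction <= 1.0:
        raise ValueError("load_fraction must be between 0 and 1")
    if load_speedup <= 0:
        raise ValueError("load_speedup must be positive")
    remaining = (1.0 - load_fraction) + load_fraction / load_speedup
    return 1.0 / remaining


# Hypothetical loader speedups applied to a 90% data loading share.
for factor in (2, 5, 10, 100):
    print(f"loader {factor:>3}x faster -> epoch {end_to_end_speedup(0.9, factor):.2f}x faster")
```

Under these assumptions the epoch time improves steadily with loader speed, but the untouched 10% caps the gain at 10x, so the rest of the training step eventually needs acceleration as well.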

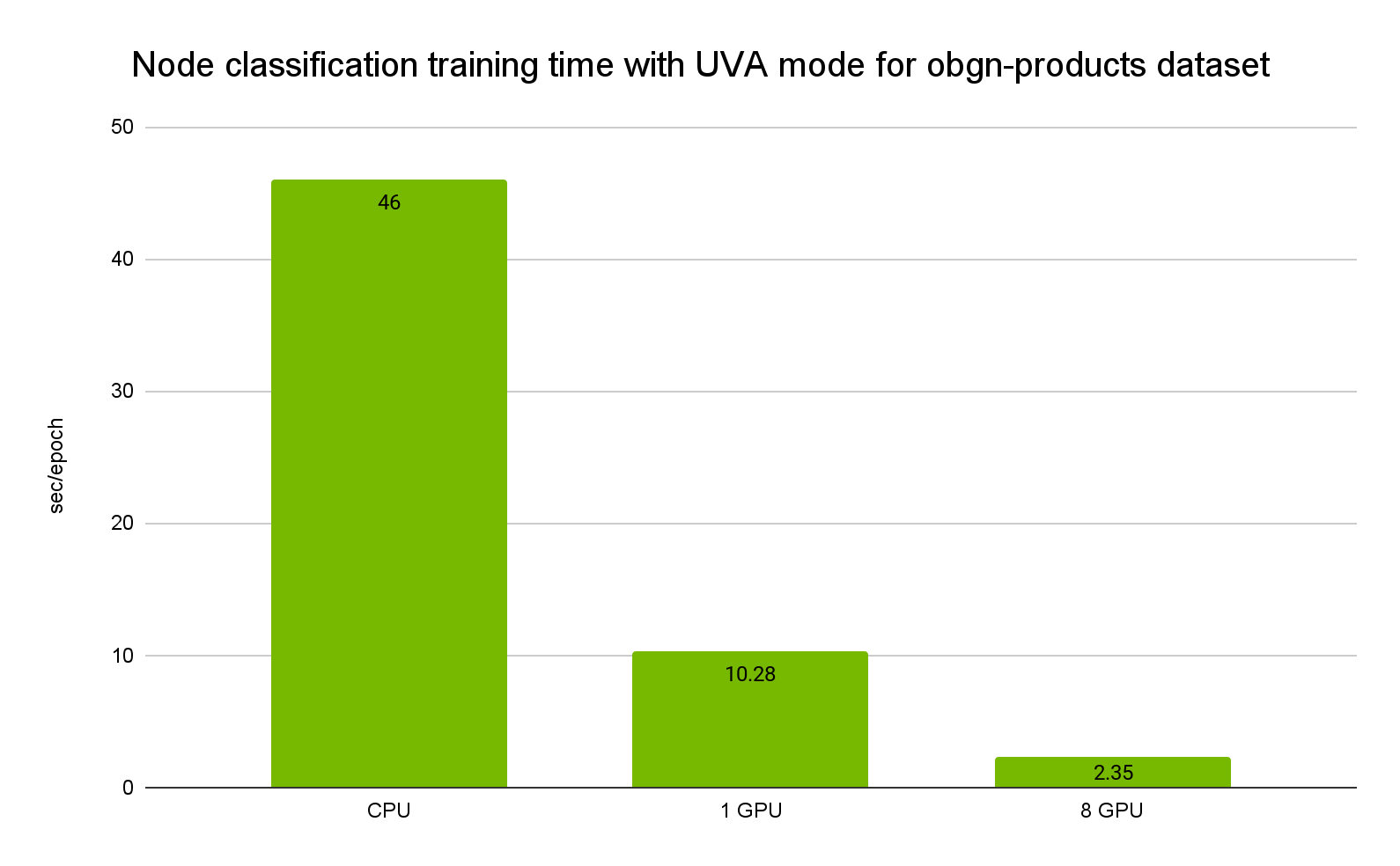

Consider the GraphSAGE model with the ogbn-products dataset for a node classification task. The dataset has 2.4M nodes and 61.9M edges. On a DGX-1 with V100 GPUs, UVA mode gives up to a 20x speedup compared to CPU-only training (Figure 1).

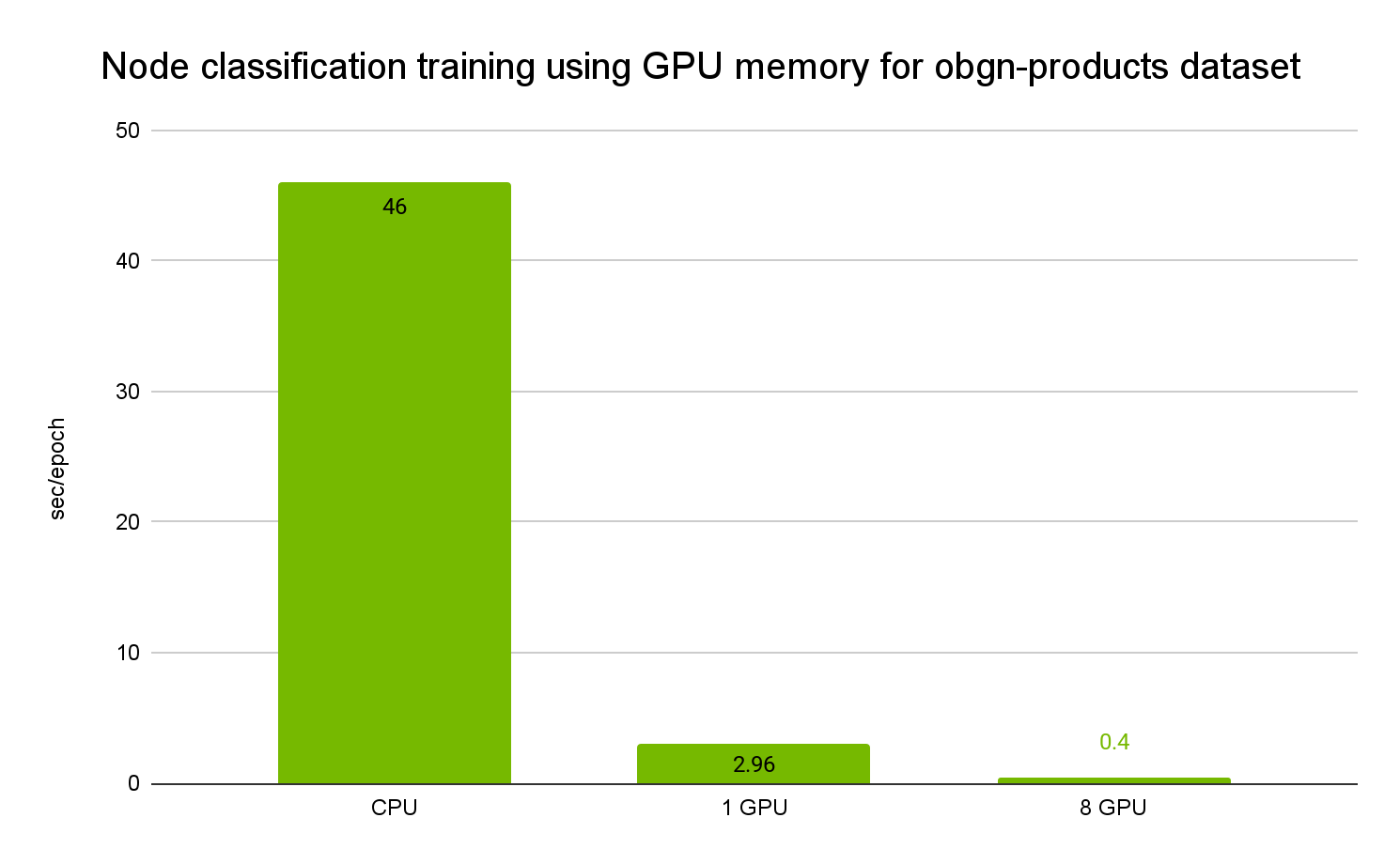

Because the ogbn-products dataset fits in GPU memory, training can be even faster, with up to a 115x speedup (Figure 2).

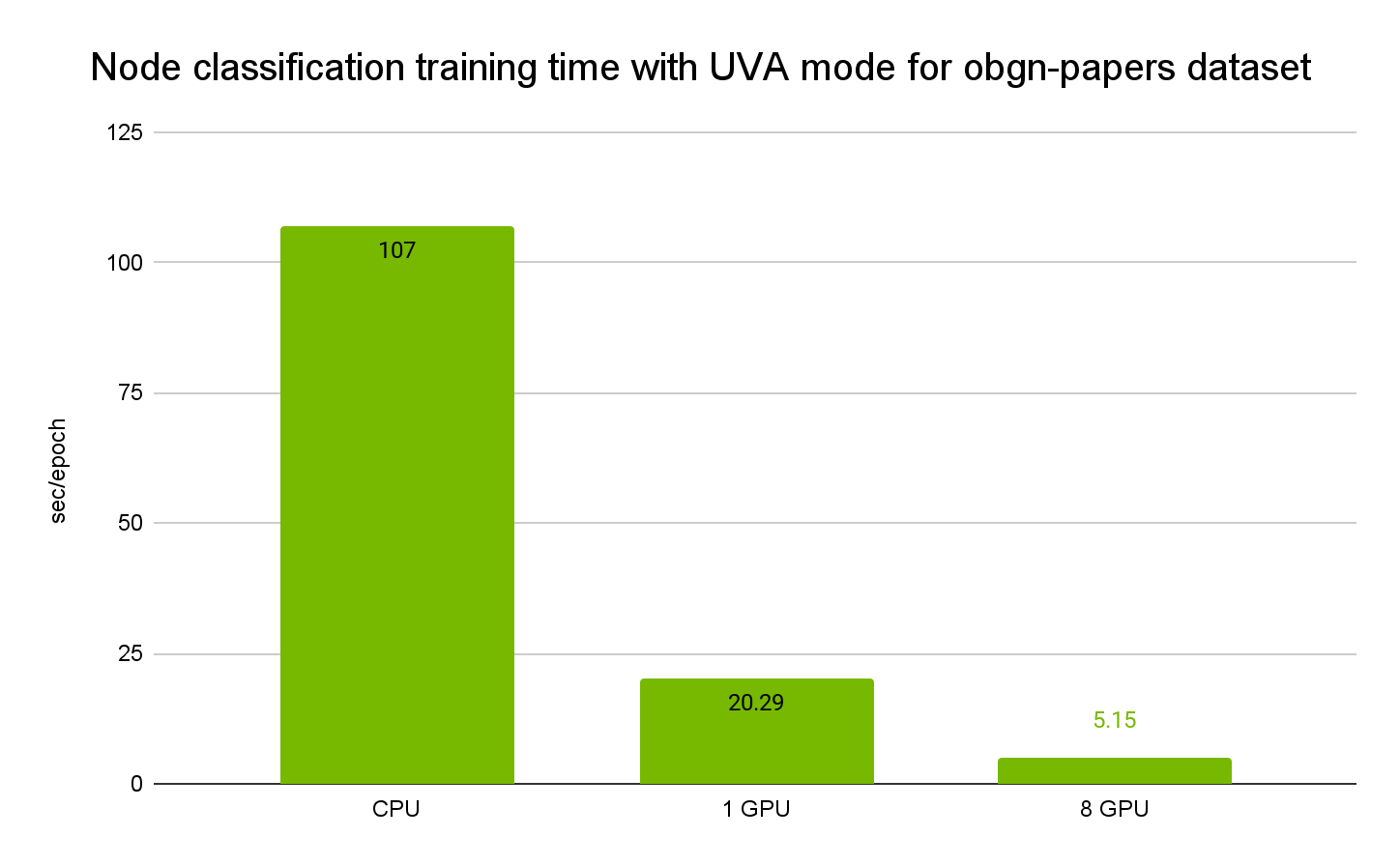

For large datasets, such as ogbn-papers100M, UVA mode must be turned on.

Figure 3 shows the per-epoch training time in seconds for ogbn-papers100M for the node classification task. The dataset has 111M nodes and 3.2B edges.
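A rough size estimate suggests why the larger dataset needs UVA mode while ogbn-products can sit in GPU memory. The node counts below come from this post; the feature widths (100 for ogbn-products, 128 for ogbn-papers100M) and float32 storage are assumptions based on the public OGB dataset descriptions, and the estimate covers node features only, not the graph structure.

```python
BYTES_PER_FLOAT32 = 4
GIB = 1024 ** 3


def feature_gib(num_nodes, feature_dim, bytes_per_value=BYTES_PER_FLOAT32):
    """Approximate size of a dense node feature matrix in GiB."""
    return num_nodes * feature_dim * bytes_per_value / GIB


# Node counts from the text; feature widths are assumed (see lead-in).
products_gib = feature_gib(2_400_000, 100)
papers_gib = feature_gib(111_000_000, 128)

print(f"ogbn-products features:   {products_gib:6.2f} GiB")
print(f"ogbn-papers100M features: {papers_gib:6.2f} GiB")
```

Under these assumptions the ogbn-products features take under 1 GiB, while the ogbn-papers100M features alone exceed 50 GiB, more than the memory of a 16-GB or 32-GB V100.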

You can find the node classification script in the NGC DGL 23.09 container under the /workspace/examples/multigpu directory.

PyTorch Geometric container

PyTorch Geometric (PyG) is another popular open-source library for writing and training GNNs for a wide range of applications. We are launching the PyG container accelerated with NVIDIA libraries such as cuGraph.

On midsize datasets (~1B edges), cuGraph data loading performance is at least 4x faster than native PyG, based on benchmarks run with eight A100 GPUs.

Several customers are already benefiting from the PyG container, and we plan to use PyG acceleration with NVIDIA BioNeMo models as well.

Customer success stories

Here’s how different companies have been using the NVIDIA Accelerated DGL and PyG containers to accelerate their workflows.

GNNs for physics-based ML

NVIDIA PhysicsNeMo is an open-source framework for building, training, and fine-tuning physics-based machine learning (ML) models in Python.

With the growing interest in and application of GNNs across disciplines that include computational fluid dynamics, molecular dynamics simulations, and material science, NVIDIA PhysicsNeMo started supporting GNNs by leveraging the DGL and cuGraph-ops libraries.

NVIDIA PhysicsNeMo currently supports GNNs that include the MeshGraphNet, AeroGraphNet, and GraphCast models for mesh-based simulations and global weather forecasting. In addition to the network architectures, PhysicsNeMo includes training recipes for developing models for weather forecasting, aerodynamic simulation, and vortex shedding.

GNNs for fraud detection

Drawing upon decades of experience, American Express has a significant track record of using AI-powered tools and models to monitor and mitigate fraud risks. They also effectively identify individuals engaged in fraudulent activities within the credit card industry.

At American Express AI Labs, research is continuously being conducted to gain a deeper understanding of fraudster networks through the implementation of graph-based machine learning solutions.

The DGL containers published on NGC enabled AmEx to experiment with a variety of GNN architectures and exploit node and edge information at scale. Using NVIDIA libraries in a multi-node, multi-GPU environment made it computationally efficient to work with millions of nodes and billions of edges. Moreover, the user-friendly and adaptable libraries enabled them to easily customize components such as the loss functions and sampling techniques.

GNNs for drug discovery

Astellas, a leading pharmaceutical company known for its forward-thinking drug development approaches, is harnessing the capabilities of GNNs for a range of pivotal tasks in drug discovery. These tasks include applying generative probabilistic deep learning models, such as diffusion-based models, to 3D molecular conformation generation, as well as feature extraction and predictive ML models, particularly in de novo protein design and engineering.

As such, Astellas taps into the computational efficiency of the NVIDIA PyG and DGL containers to facilitate and amplify GNN-based AI/ML solutions in drug discovery research activities. The integration of AI and ML-based pipelines powered by PyG and DGL enabled Astellas to boost its internal capabilities, giving researchers and AI practitioners the expertise needed to develop and implement cutting-edge, AI-powered technologies.

As one use case, an Astellas scientist can achieve acceleration rates of at least 50x compared to traditional simulation-based methods for 3D molecular conformations.

Genentech, a biotechnology company and member of the Roche Group, is using GNNs with the NVIDIA PyG container to accelerate their small molecule prediction training. The PyG container provided a reliable base for the PyG framework, which enabled the development team to focus more on development rather than setting up the dev environments.

Next steps

With NVIDIA accelerated DGL and PyG containers, you can significantly improve the data sampling and training performance of GNNs.

To get started, download the following resources:

- DGL container: The overview section on the catalog page provides detailed steps for pulling and running the DGL container.

- PyG container