NVIDIA PhysicsNeMo

NVIDIA PhysicsNeMo is an open-source Python framework for building, training, and fine-tuning physics AI models at scale.

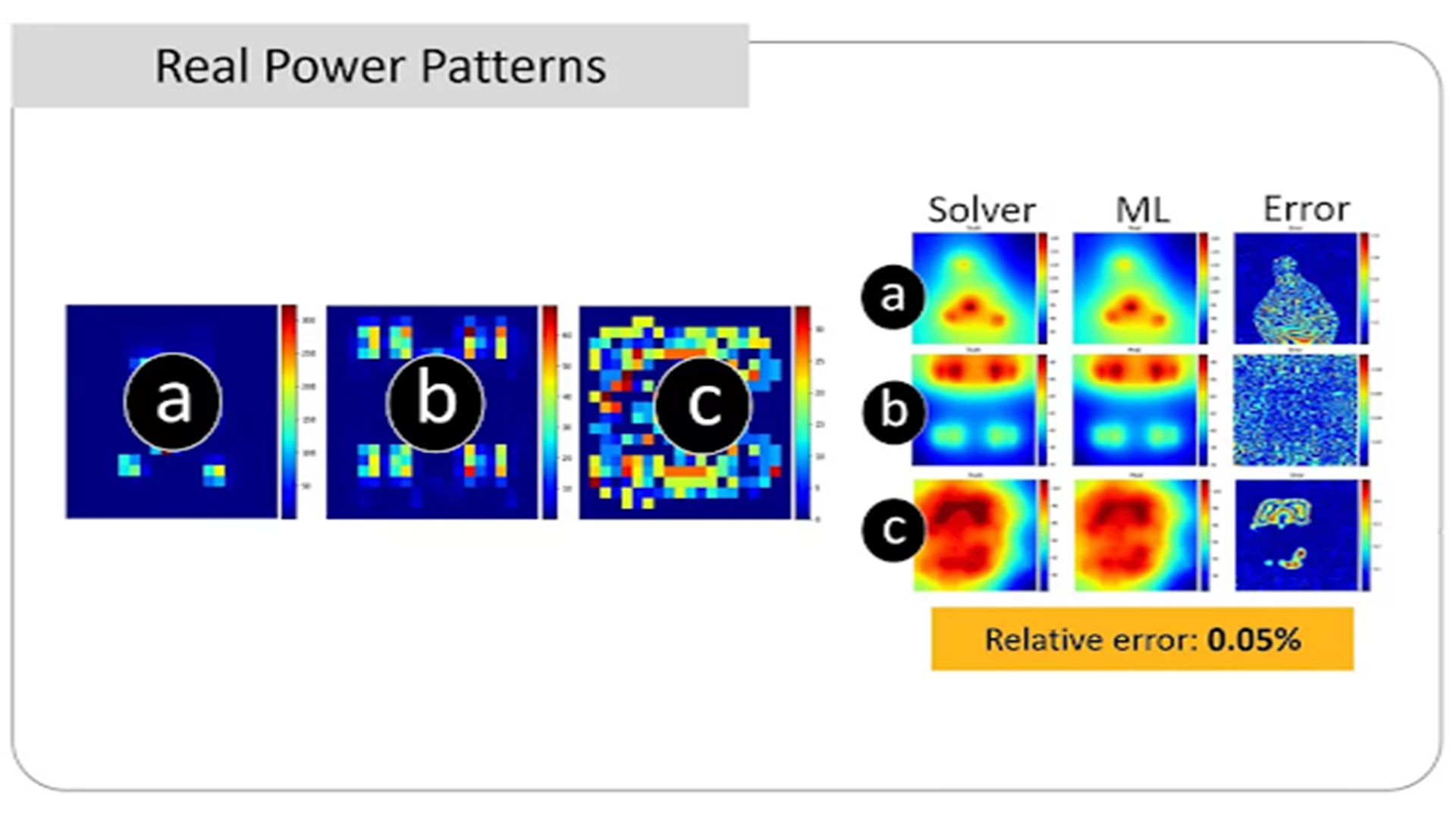

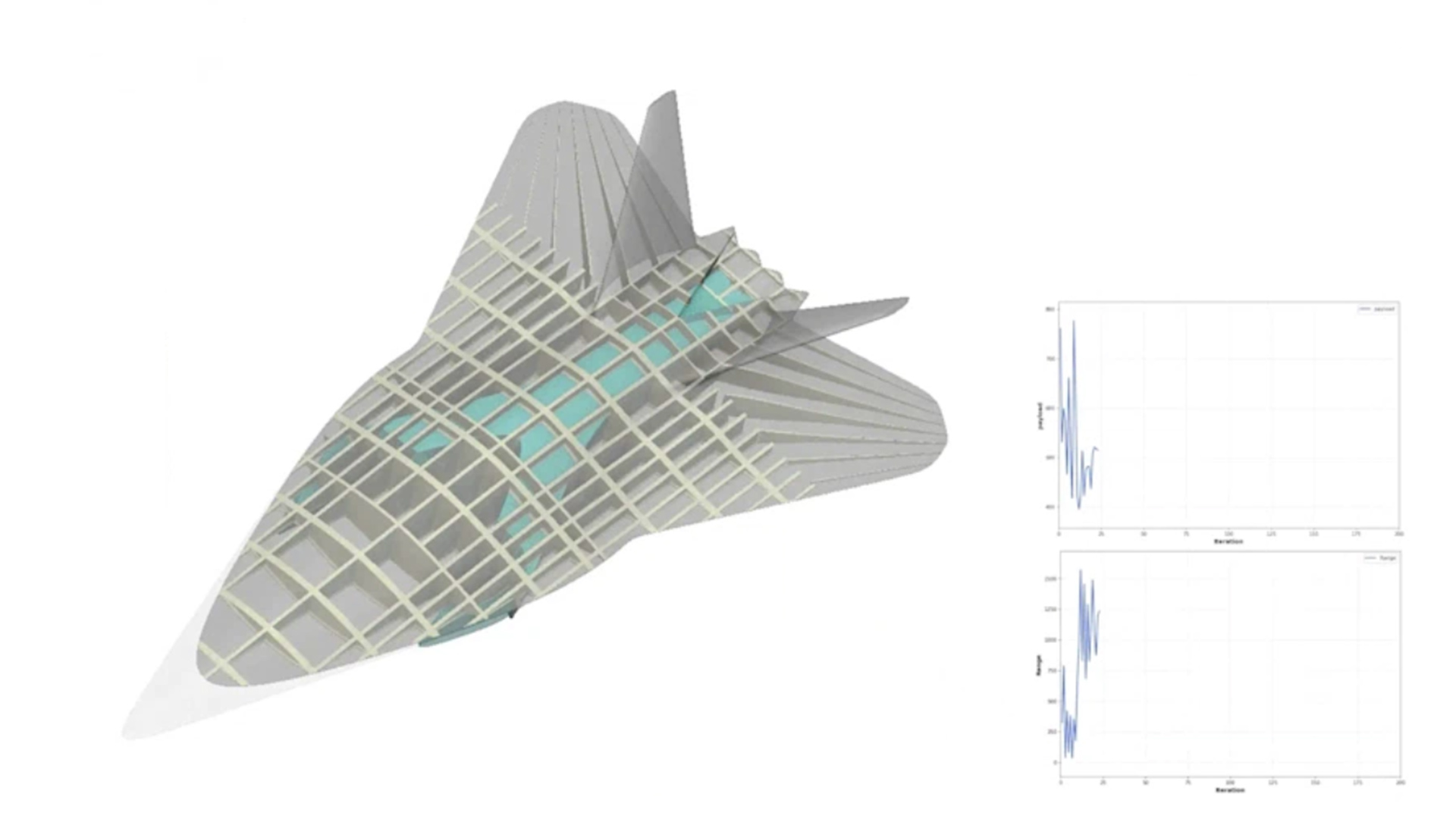

NVIDIA PhysicsNeMo provides utilities for building AI surrogate models that combine physics-driven causality with simulation and observed data to deliver real-time predictions. From neural operators and GNNs to generative AI models, developers can build proprietary AI models to enhance engineering simulations and generate higher-fidelity data for scalable, responsive designs. NVIDIA PhysicsNeMo supports the creation and validation of large-scale digital twin models across various physics domains, from computational fluid dynamics and structural mechanics to electromagnetics.

Use NVIDIA PhysicsNeMo to bolster your engineering simulations with AI. You can build and validate models for enterprise-scale digital twin applications across multiple physics domains, from computational fluid dynamics and structural mechanics to electromagnetics.

Physics-Informed Machine Learning for Surrogate Models

What’s New In NVIDIA PhysicsNeMo

NVIDIA AI Physics Transforms Aerospace and Automotive Design — Accelerating Engineering by 500x

NVIDIA, industry leaders and software tool providers reinvent computational engineering with AI-powered simulation and modeling.

Luminary Cloud Releases SHIFT-Wing, a Physics AI Model for Transonic Wing Design, in Collaboration With Otto Aviation Leveraging NVIDIA Technologies

SHIFT-Wing unlocks radically faster wing design, reducing risk and cost while accelerating innovation in next-gen aircraft.

NVIDIA Blackwell Accelerates Computer-Aided Engineering Software Ecosystem

Leading Software Providers Including Ansys, Altair, Cadence, Siemens and Synopsys Adopt NVIDIA Blackwell

Features

Physics AI Model Architectures

NVIDIA PhysicsNeMo offers a variety of approaches tuned for training physics AI models, from purely physics-driven models like physics-informed neural networks (PINNs) to physics-based, data-driven architectures, such as neural operators, graph neural networks (GNNs), and generative AI-based diffusion models.
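To make the physics-driven end of that spectrum concrete, here is a minimal sketch of a physics-informed loss in plain Python. It is not PhysicsNeMo code: the toy problem (du/dx = -u with u(0) = 1), the two-parameter trial function, and the names `candidate` and `physics_informed_loss` are all assumptions chosen for illustration, and a finite difference stands in for the automatic differentiation a real PINN would use.

```python
import math

def candidate(x, c, a):
    """Hypothetical trial solution u(x) = c * exp(a * x) with parameters c and a."""
    return c * math.exp(a * x)

def physics_informed_loss(c, a, points, h=1e-4):
    """Mean squared ODE residual plus an initial-condition penalty.

    Illustrative target problem: du/dx = -u with u(0) = 1.
    """
    residual_sq = 0.0
    for x in points:
        # Central difference stands in for the autograd derivative a PINN would use.
        du_dx = (candidate(x + h, c, a) - candidate(x - h, c, a)) / (2 * h)
        # The residual of du/dx + u = 0 is zero wherever the physics is satisfied.
        residual_sq += (du_dx + candidate(x, c, a)) ** 2
    pde_term = residual_sq / len(points)
    # Penalize any mismatch with the initial condition u(0) = 1.
    ic_term = (candidate(0.0, c, a) - 1.0) ** 2
    return pde_term + ic_term

collocation_points = [i / 10 for i in range(11)]  # x sampled on [0, 1]
loss_exact = physics_informed_loss(1.0, -1.0, collocation_points)     # true solution
loss_bad_ic = physics_informed_loss(2.0, -1.0, collocation_points)    # obeys ODE, wrong u(0)
loss_bad_pde = physics_informed_loss(1.0, 0.5, collocation_points)    # right u(0), violates ODE
```

The loss is close to zero only when both the equation and the initial condition are satisfied. A training loop would minimize this quantity over the parameters, with no labeled solution data required.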

NVIDIA PhysicsNeMo includes curated physics-ML model architectures, including Fourier feature networks, Fourier neural operators, GNNs, point cloud models, and diffusion models. These are trained on NVIDIA DGX™ using free, open-source datasets listed in the documentation.
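As a rough illustration of the idea behind Fourier feature networks, the sketch below encodes an input coordinate with sines and cosines of random projections before it would be passed to a network. This is one common formulation written in plain Python, not the PhysicsNeMo implementation; the function names, the Gaussian frequency sampling, and the `scale` value are assumptions.

```python
import math
import random

def make_frequencies(num_features, input_dim, scale=1.0, seed=0):
    """Sample a (num_features x input_dim) frequency matrix from a Gaussian."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, scale) for _ in range(input_dim)]
            for _ in range(num_features)]

def fourier_features(x, frequencies):
    """Encode x as [sin(2*pi*b.x) for each row b] followed by [cos(2*pi*b.x)]."""
    projections = [
        2 * math.pi * sum(b_i * x_i for b_i, x_i in zip(b, x))
        for b in frequencies
    ]
    return [math.sin(p) for p in projections] + [math.cos(p) for p in projections]

# A 2D coordinate becomes an 8-dimensional encoding (4 sines, then 4 cosines).
B = make_frequencies(num_features=4, input_dim=2, scale=10.0)
encoded = fourier_features([0.25, 0.75], B)
origin = fourier_features([0.0, 0.0], B)
```

Larger `scale` values produce higher-frequency features, which is the usual lever for helping a network represent fine spatial detail.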

Training State-of-the-Art Physics AI Models

NVIDIA PhysicsNeMo provides an end-to-end pipeline and utilities for training physics-ML models—from ingesting geometry to adding partial differential equations. NVIDIA PhysicsNeMo also includes training recipes in the form of reference applications. View documentation.

Training at Scale

NVIDIA PhysicsNeMo provides a GPU-accelerated distributed framework for building foundation-scale models. It offers data-parallel and model-parallel training pipelines that scale to multi-node training to meet the needs of industrial-scale problems. For instance, developers can train a GNN or a point cloud model on a 50-million-node mesh. View documentation.
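The core idea of data-parallel training can be sketched without any GPU or framework: each worker computes a gradient on its own shard of the batch, and the gradients are averaged before the shared weights are updated. The example below simulates this sequentially in plain Python on a one-parameter model. It does not use PhysicsNeMo or PyTorch APIs, and the names `gradient` and `data_parallel_step` are hypothetical.

```python
def gradient(w, shard):
    """Gradient of the mean squared error for the model y = w * x on one shard."""
    return sum(2 * (w * x - y) * x for x, y in shard) / len(shard)

def data_parallel_step(w, shards, lr):
    """One update where each shard plays the role of a separate worker."""
    # Each worker computes a gradient on its own shard (sequentially here).
    local_grads = [gradient(w, shard) for shard in shards]
    # Averaging the local gradients plays the role of an all-reduce.
    avg_grad = sum(local_grads) / len(local_grads)
    return w - lr * avg_grad

data = [(float(x), 3.0 * x) for x in range(1, 9)]  # samples of y = 3x
shards = [data[0:4], data[4:8]]  # two equally sized shards, one per "worker"

# With equally sized shards, the averaged gradient matches the full-batch gradient.
sharded_grad = sum(gradient(0.0, s) for s in shards) / len(shards)
full_grad = gradient(0.0, data)

w = 0.0
for _ in range(200):
    w = data_parallel_step(w, shards, lr=0.01)
```

Because the averaged gradient equals the full-batch gradient when shards are the same size, adding workers changes throughput rather than the optimization result. Model-parallel training, which splits the model itself across devices, is a separate technique not shown here.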

Physics AI Reference Pipelines

NVIDIA PhysicsNeMo offers a diverse set of reference pipelines as a starting point for developers to customize and build their own solutions. These reference samples show how to model a specific problem with AI and showcase relevant architectures and datasets. They span use cases from computational fluid dynamics (CFD) and thermal analysis to climate and weather. View the GitHub repo for the complete list.

Benefits

NVIDIA PhysicsNeMo is an open-source, freely available AI framework for developing physics-ML models and novel AI architectures for engineering systems.

AI Toolkit for Physics

Quickly configure, build, and train AI models for physical systems in any domain, from engineering simulations to life sciences, with simple Python APIs.

Customize Models

Download, build on, and customize state-of-the-art pretrained models from the NVIDIA NGC™ catalog.

Near-Real-Time Inference

Deploy AI surrogate models as digital twins of your physical systems to simulate in near real time.

Scale With NVIDIA AI

Leverage NVIDIA AI to scale training performance from a single GPU to multi-node implementations.

Open-Source Design

Experience the benefits of open source. PhysicsNeMo is built on top of PyTorch and is released under the Apache 2.0 license.

Standardized

Work with the best practices of AI development for physics-ML models, with an immediate focus on engineering applications.

User Friendly

Boost productivity with clear error messages and easy-to-program Pythonic APIs.

High Quality

Use high-quality software with enterprise-grade development, tutorials for getting started, and robust validation and documentation.

Contribute to NVIDIA PhysicsNeMo Development

NVIDIA PhysicsNeMo provides a unique platform for collaboration within the scientific community. Domain experts are invited to contribute and accelerate physics-ML across a variety of use cases and applications.

Customer Adoption Stories

Ansys to Drive Major Advances in AI-Powered Semiconductor Design Using NVIDIA AI

Ansys is integrating NVIDIA PhysicsNeMo into its SeaScape platform to significantly speed up semiconductor design optimization, enabling faster thermal simulations and improved designer productivity for a wide range of applications, including GPUs, HPC chips, and smartphone processors.

Luminary Cloud and nTop Streamline AI Physics and Cut Engineering Design Time From Months to Hours With NVIDIA Technology

Luminary Cloud and nTop have integrated NVIDIA PhysicsNeMo into their platforms, revolutionizing engineering design by automating physics-based AI optimization and reducing the time to analyze hundreds of design variations from weeks to mere hours.

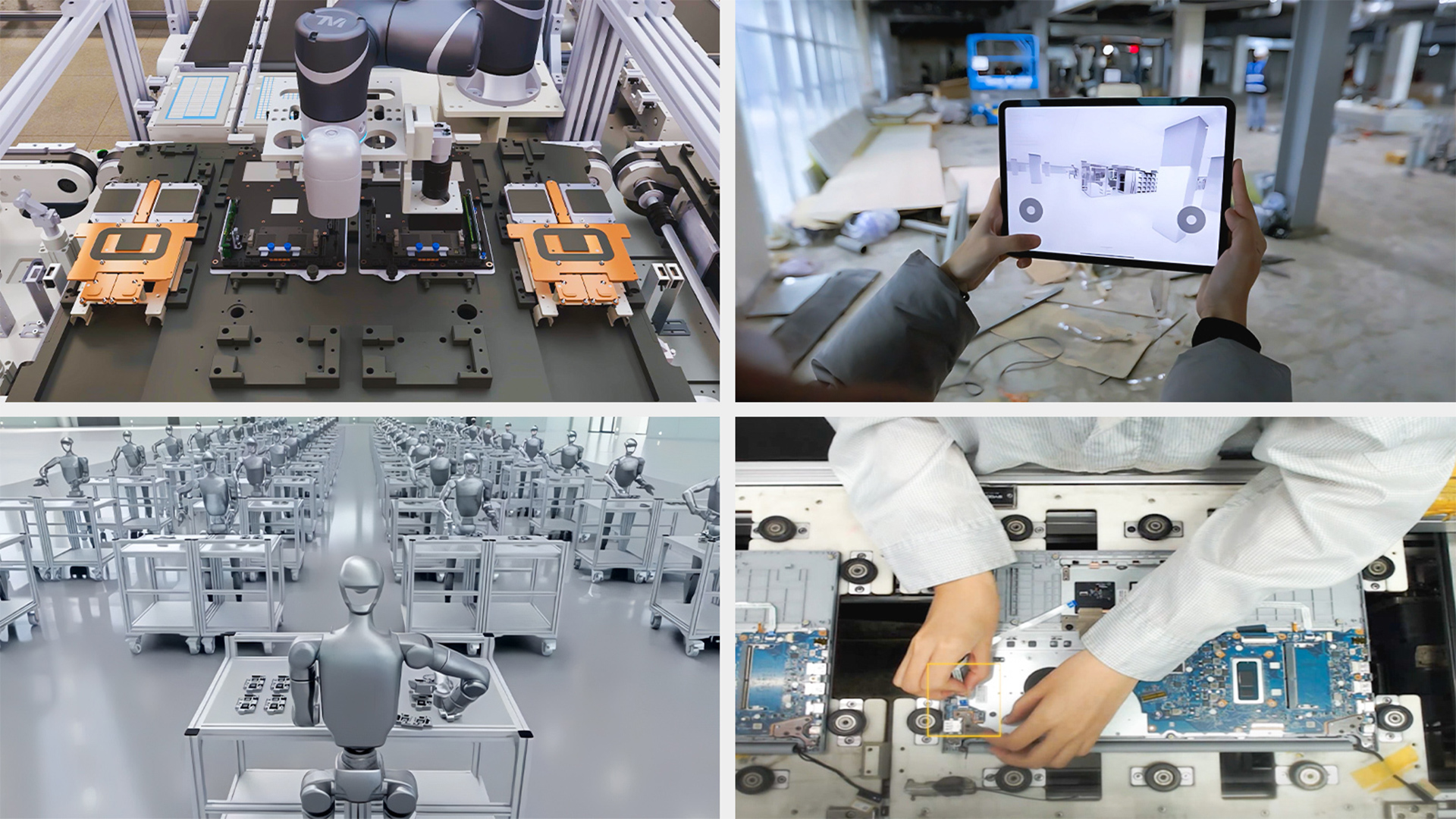

NVIDIA Omniverse Digital Twins Help Taiwan Manufacturers Drive Golden Age of Industrial AI

NVIDIA Omniverse and PhysicsNeMo are enabling Taiwan’s leading manufacturers, including Foxconn, TSMC, and Wistron, to optimize factory planning, accelerate robotics development, and enhance operational efficiencies through physically accurate digital twins and AI-driven simulations.

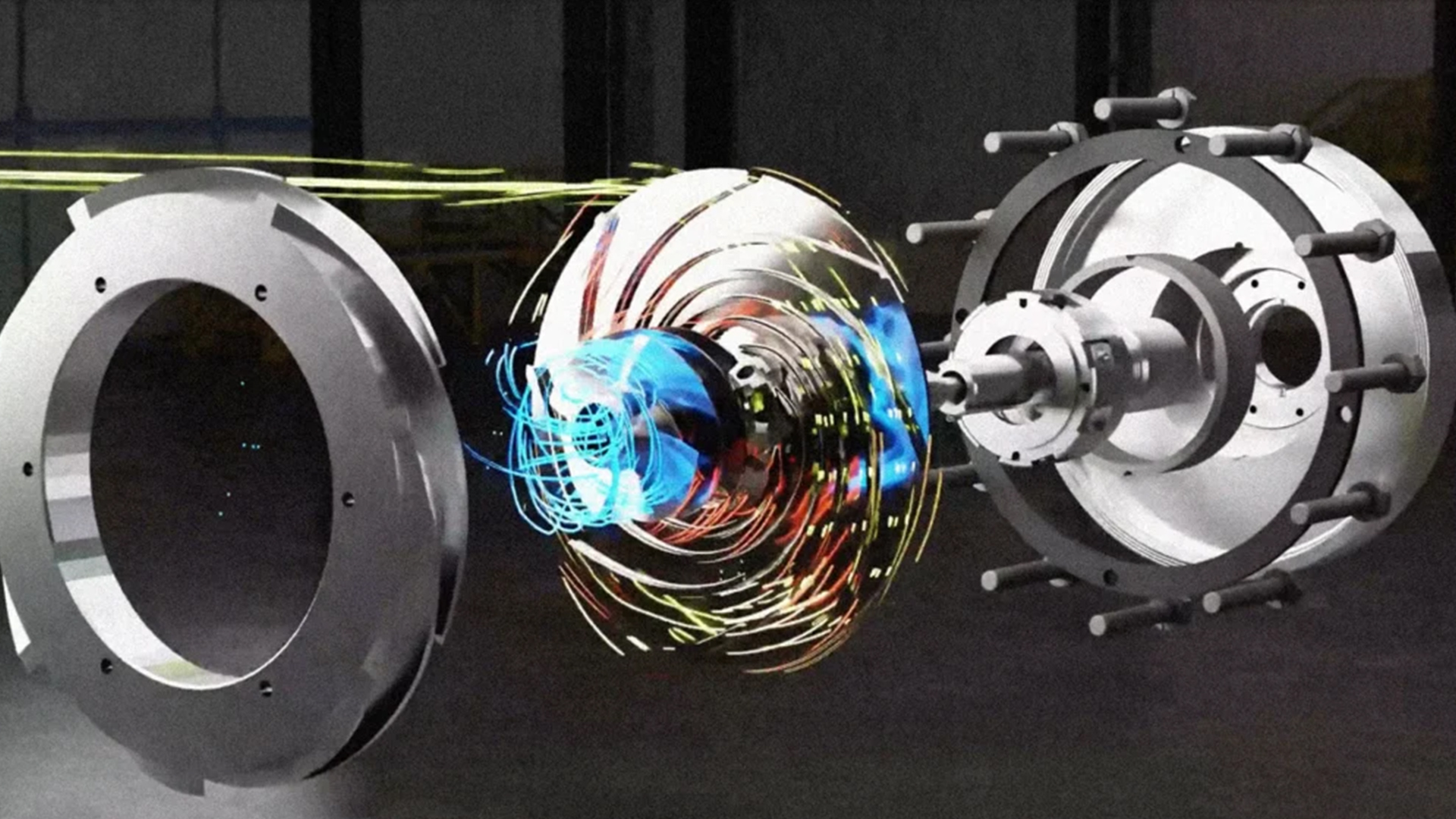

SimScale Unveils the World’s First Foundation AI Model for Centrifugal Pump Simulation Built With NVIDIA PhysicsNeMo

SimScale, the leader in cloud-native engineering simulation, has unveiled the world’s first foundation AI model for centrifugal pump simulation built with NVIDIA PhysicsNeMo, revolutionizing simulation-driven engineering by enabling instant design optimization, reducing computational costs, and enhancing accuracy and efficiency.

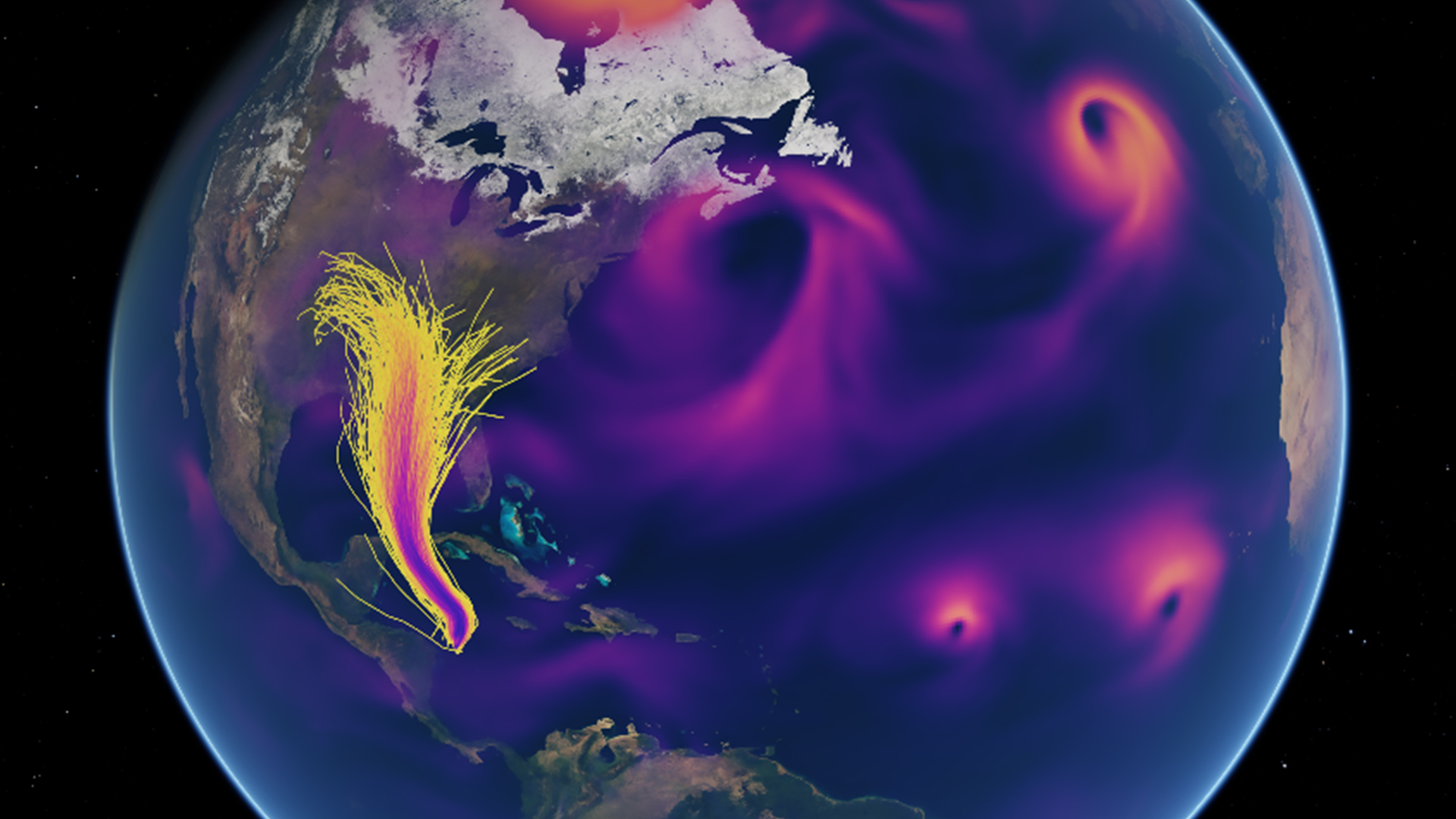

Spotlight: AXA Explores AI-Driven Hurricane Risk Assessment

AXA is leveraging NVIDIA PhysicsNeMo and the NVIDIA Earth-2 platform to conduct AI-driven simulations of hurricanes, generating large ensembles of hypothetical scenarios that enhance the accuracy and efficiency of risk assessment for rare, high-impact events.

NVIDIA Earth-2 Powers Regional AI Weather Forecasting in the United Arab Emirates

G42, a leading AI and cloud computing company in the UAE, has developed a high-resolution regional weather forecasting system using NVIDIA Earth-2 and PhysicsNeMo, enabling precise predictions of extreme weather events at resolutions as fine as 200 meters and reducing forecast times from hours to minutes.

Spotlight: Siemens Energy Accelerates Power Grid Asset Simulation 10,000x Using NVIDIA PhysicsNeMo

Siemens Energy is accelerating power grid asset simulation by 10,000x using NVIDIA PhysicsNeMo, developing AI surrogate models for transformer bushings and gas-insulated switchgears to predict thermal behavior and optimize grid operations in near real time.

NVIDIA AI Turbocharges Industrial Research, Scientific Discovery in the Cloud on Rescale HPC-As-A-Service Platform

NVIDIA and Rescale are partnering to bring NVIDIA AI, including the NVIDIA PhysicsNeMo framework for physics-ML, to Rescale’s HPC-as-a-service platform, enabling industrial giants to turbocharge their research and scientific discovery in the cloud with faster, more efficient simulations and AI workflows.

Spotlight: BRLi and Toulouse INP Develop AI-Based Flood Models Using NVIDIA PhysicsNeMo

BRLi and Toulouse INP have developed an AI-based flood forecasting system using NVIDIA PhysicsNeMo, which can predict flooding up to 6 hours ahead in just 19 milliseconds on a single GPU, revolutionizing real-time disaster preparedness and risk mitigation.

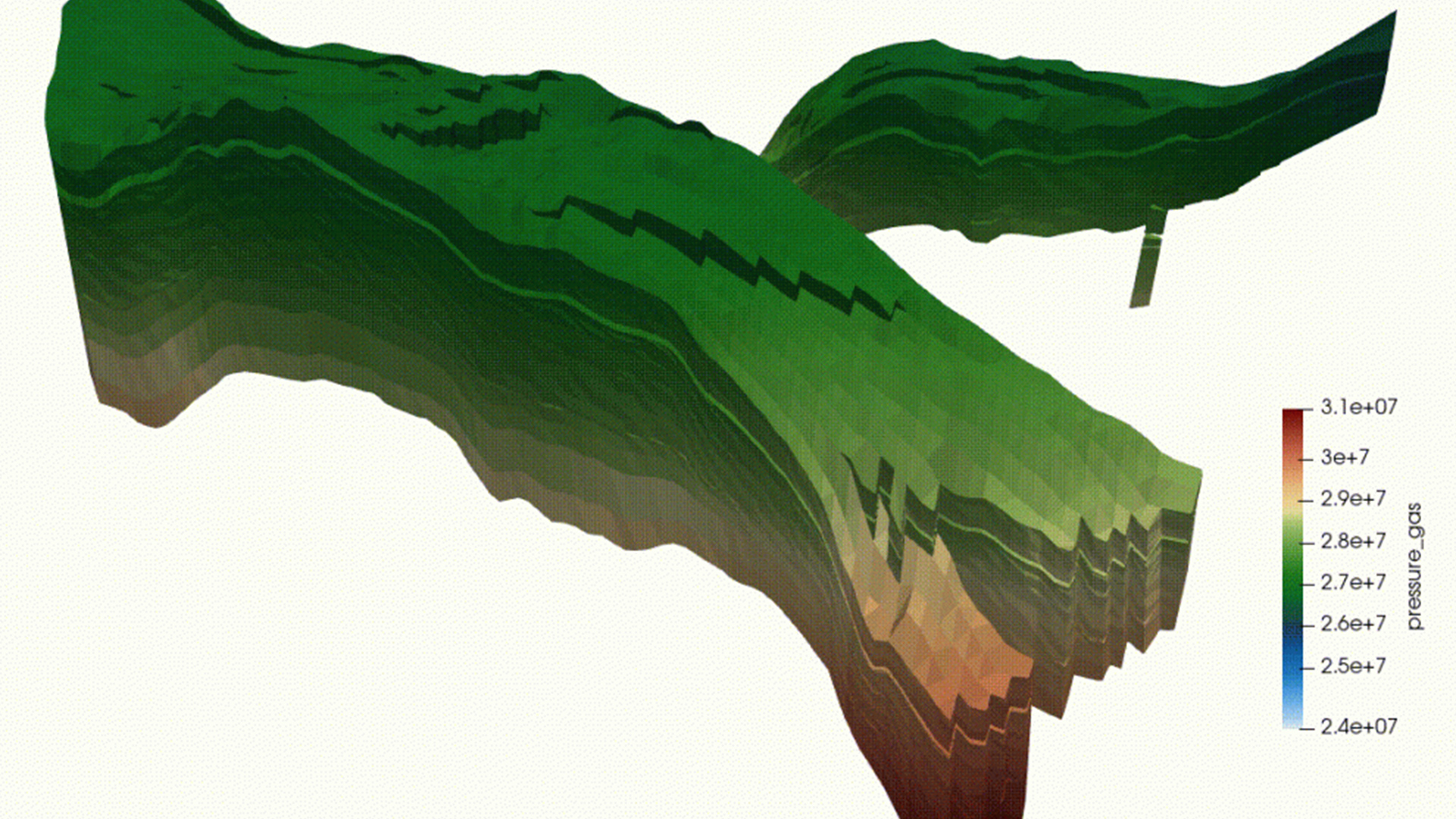

Spotlight: Stone Ridge Technology Accelerates Reservoir Simulation Workflows With NVIDIA PhysicsNeMo on AWS

Stone Ridge Technology has accelerated reservoir simulation workflows by integrating its ECHELON simulator with NVIDIA PhysicsNeMo on AWS, generating full-field proxy models that are 10 to 100 times faster than traditional simulations while maintaining high accuracy, enabling rapid uncertainty quantification and field optimization.

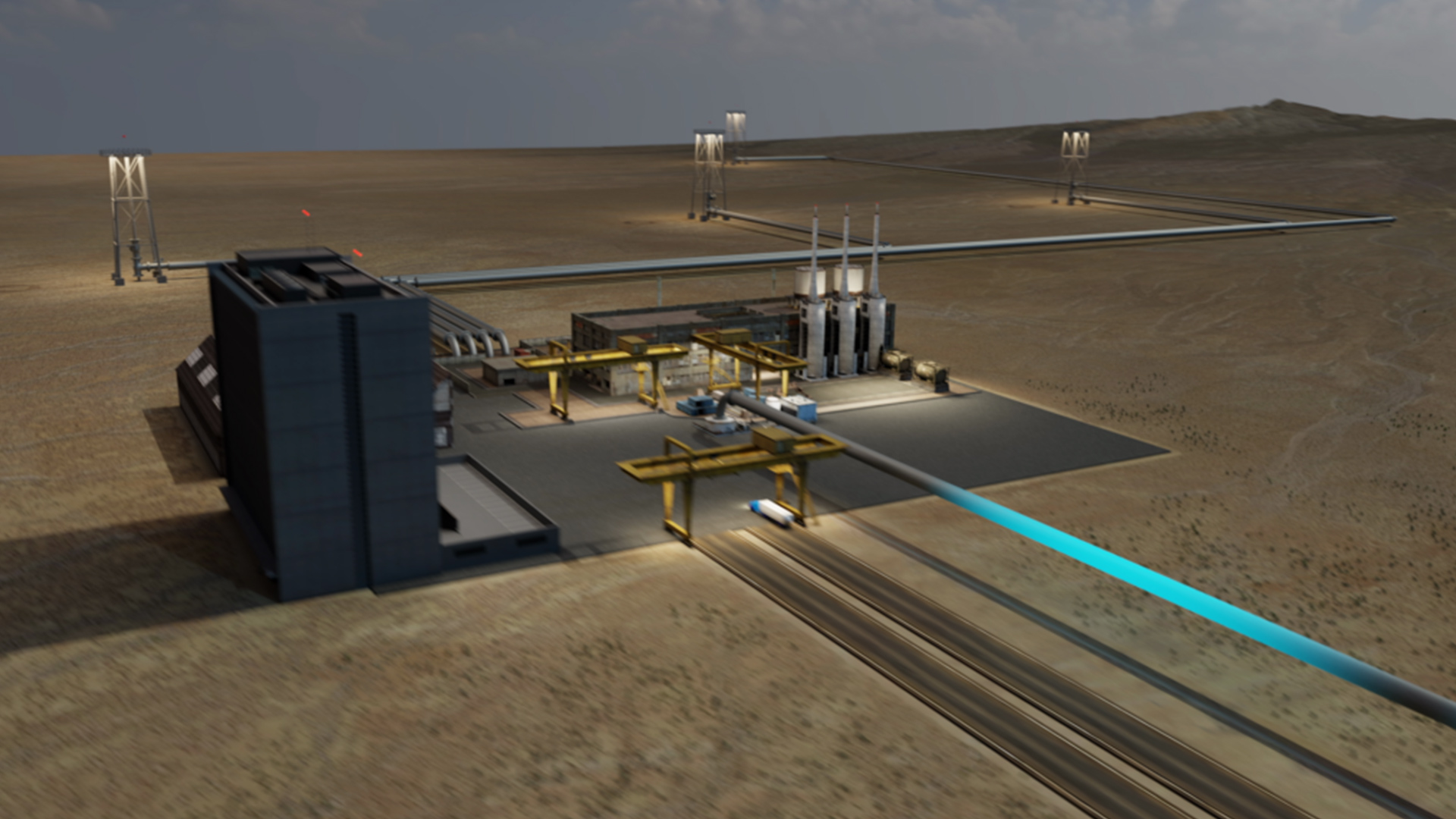

Spotlight: Shell Accelerates CO2 Storage Modeling 100,000x Using NVIDIA PhysicsNeMo

Shell and NVIDIA have developed an AI framework using NVIDIA PhysicsNeMo that accelerates CO2 storage modeling by 100,000 times, enabling rapid and accurate screening of potential storage sites while maintaining high prediction accuracy for CO2 plume migration and pressure buildup.

See NVIDIA PhysicsNeMo in Action

Earth-2 Goes Down to Street Level

Speed and Accuracy of Gen AI Helps Combat Climate Change

Accelerating Extreme Weather Prediction with FourCastNet

Maximizing Wind Energy Production Using Wake Optimization

Accelerating Carbon Capture and Storage With Fourier Neural Operator and NVIDIA PhysicsNeMo

Predicting Extreme Weather Events Three Weeks in Advance with FourCastNet

Ways to Get Started With NVIDIA PhysicsNeMo

PhysicsNeMo Framework

NVIDIA PhysicsNeMo provides utilities to build physics AI solutions that combine physics with data at enterprise scale.

Computer-Aided Engineering (CAE)

Explore how NVIDIA is enabling computer-aided engineering industry developers to accelerate physics-based CAE simulations and embrace real-time interactive design using AI-accelerated digital twins.

External Aerodynamics NIM

This pretrained model, based on the DoMINO architecture and trained on a large and diverse automotive dataset, is packaged as an NVIDIA NIM™ microservice for performant and scalable cloud deployment. Developers can customize the model further using the training recipe in PhysicsNeMo.

Weather and Climate (Earth-2)

Explore how NVIDIA is enabling the weather and climate ecosystem to improve predictions and disaster mitigation using microservices.

Containers and Models for Development

Develop physics-ML models using the NVIDIA PhysicsNeMo container and pretrained models, available for free on NVIDIA NGC.

Enterprise-Scale Workflows

NVIDIA PhysicsNeMo is available with NVIDIA AI Enterprise, an end-to-end AI software platform optimized to accelerate an enterprise’s time to production. The platform provides certifications to deploy AI everywhere, along with enterprise-grade support, security, and API stability, while mitigating the potential risks of open-source software.

Higher Education and Research Developer Resources

Open Hackathons and Bootcamps

Accelerate and optimize research applications with mentors by your side.

Teaching Kit for Educators

A DLI Teaching Kit is available to qualified university educators interested in physics-ML. Comprehensive and modular, the kit can help you integrate lecture materials, hands-on exercises, GPU cloud resources, and more into your curriculum.

Self-Paced Online Course

Take a hands-on introductory course from the NVIDIA DLI to explore physics-informed machine learning with NVIDIA PhysicsNeMo.

Explore More Resources

Using NVIDIA PhysicsNeMo and Omniverse Wind Farm Digital Twin for Siemens Gamesa

Siemens Energy Taps NVIDIA to Develop Industrial Digital Twin of Power Plant in Omniverse and PhysicsNeMo

Developing Digital Twins for Weather, Climate, and Energy

Ethical AI

NVIDIA’s platforms and application frameworks enable developers to build a wide array of AI applications. Consider potential algorithmic bias when choosing or creating the models being deployed. Work with the model’s developer to ensure that it meets the requirements for the relevant industry and use case; that the necessary instruction and documentation are provided to understand error rates, confidence intervals, and results; and that the model is being used under the conditions and in the manner intended.