MLPerf

Mar 16, 2026

How NVIDIA Dynamo 1.0 Powers Multi-Node Inference at Production Scale

Reasoning models are growing rapidly in size and are increasingly being integrated into agentic AI workflows that interact with other models and external...

14 MIN READ

Nov 12, 2025

NVIDIA Blackwell Architecture Sweeps MLPerf Training v5.1 Benchmarks

The NVIDIA Blackwell architecture powered the fastest time to train across every MLPerf Training v5.1 benchmark, marking a clean sweep in the latest round of...

10 MIN READ

Jun 04, 2025

Reproducing NVIDIA MLPerf v5.0 Training Scores for LLM Benchmarks

The previous post, NVIDIA Blackwell Delivers up to 2.6x Higher Performance in MLPerf Training v5.0, explains how the NVIDIA platform delivered the fastest time...

11 MIN READ

Apr 02, 2025

NVIDIA Blackwell Delivers Massive Performance Leaps in MLPerf Inference v5.0

The compute demands for large language model (LLM) inference are growing rapidly, fueled by the combination of growing model sizes, real-time latency...

10 MIN READ

Nov 13, 2024

NVIDIA Blackwell Doubles LLM Training Performance in MLPerf Training v4.1

As models grow larger and are trained on more data, they become more capable, making them more useful. To train these models quickly, more performance,...

8 MIN READ

Sep 24, 2024

NVIDIA GH200 Grace Hopper Superchip Delivers Outstanding Performance in MLPerf Inference v4.1

In the latest round of MLPerf Inference – a suite of standardized, peer-reviewed inference benchmarks – the NVIDIA platform delivered outstanding performance...

7 MIN READ

Aug 28, 2024

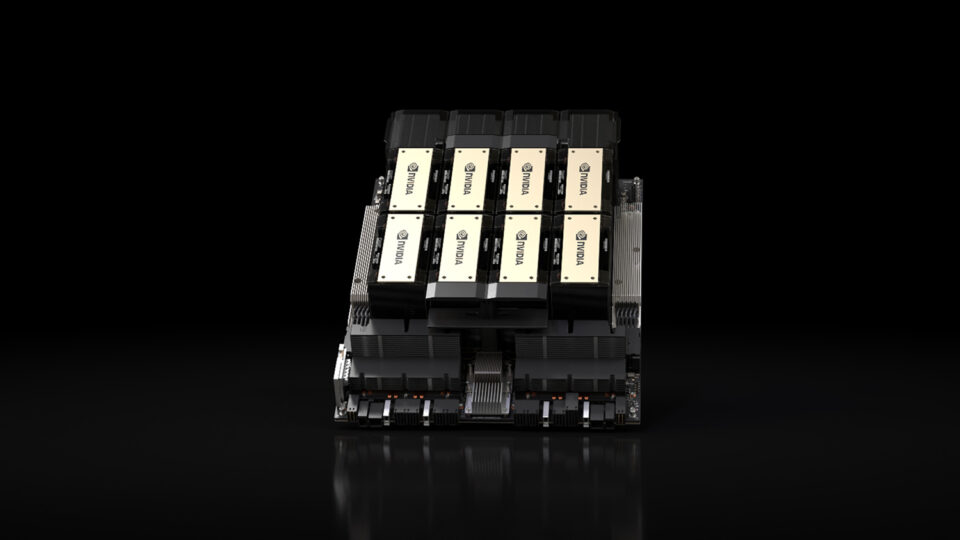

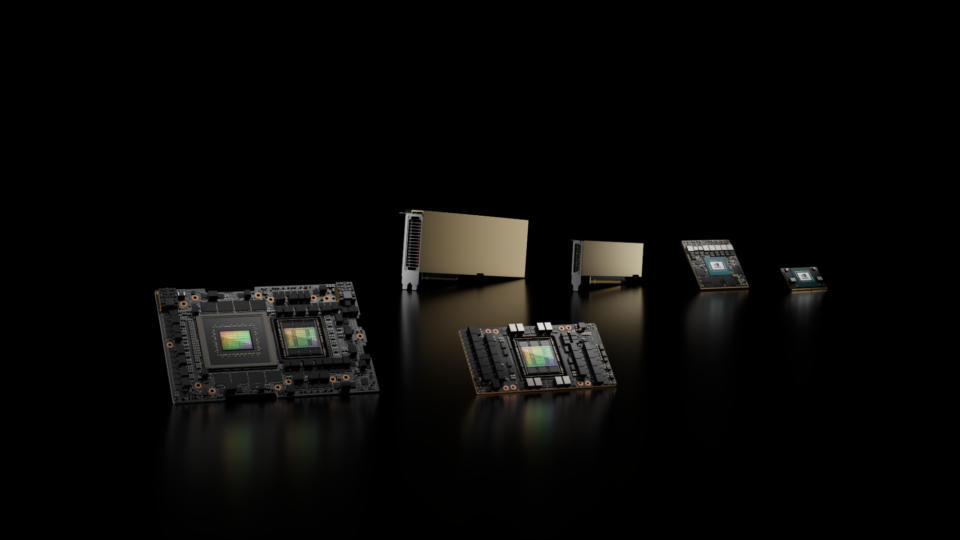

NVIDIA Blackwell Platform Sets New LLM Inference Records in MLPerf Inference v4.1

Large language model (LLM) inference is a full-stack challenge. Powerful GPUs, high-bandwidth GPU-to-GPU interconnects, efficient acceleration libraries, and a...

13 MIN READ

Jun 12, 2024

NVIDIA Sets New Generative AI Performance and Scale Records in MLPerf Training v4.0

Generative AI models have a variety of uses, such as helping write computer code, crafting stories, composing music, generating images, producing videos, and...

11 MIN READ

Mar 27, 2024

NVIDIA H200 Tensor Core GPUs and NVIDIA TensorRT-LLM Set MLPerf LLM Inference Records

Generative AI is unlocking new computing applications that greatly augment human capability, enabled by continued model innovation. Generative AI...

11 MIN READ

Feb 28, 2024

Optimizing OpenFold Training for Drug Discovery

Predicting 3D protein structures from amino acid sequences has been an important long-standing question in bioinformatics. In recent years, deep learning–based...

7 MIN READ

Nov 08, 2023

Setting New Records at Data Center Scale Using NVIDIA H100 GPUs and NVIDIA Quantum-2 InfiniBand

Generative AI is rapidly transforming computing, unlocking new use cases and turbocharging existing ones. Large language models (LLMs), such as OpenAI’s GPT...

19 MIN READ

Sep 09, 2023

Leading MLPerf Inference v3.1 Results with NVIDIA GH200 Grace Hopper Superchip Debut

AI is transforming computing, and inference is how the capabilities of AI are deployed in the world’s applications. Intelligent chatbots, image and video...

13 MIN READ

Jul 06, 2023

New MLPerf Inference Network Division Showcases NVIDIA InfiniBand and GPUDirect RDMA Capabilities

In MLPerf Inference v3.0, NVIDIA made its first submissions to the newly introduced Network division, which is now part of the MLPerf Inference Datacenter...

9 MIN READ

Jun 27, 2023

Breaking MLPerf Training Records with NVIDIA H100 GPUs

At the heart of the rapidly expanding set of AI-powered applications are powerful AI models. Before these models can be deployed, they must be trained through...

15 MIN READ

Apr 05, 2023

Setting New Records in MLPerf Inference v3.0 with Full-Stack Optimizations for AI

The most exciting computing applications currently rely on training and running inference on complex AI models, often in demanding, real-time deployment...

15 MIN READ

Nov 09, 2022

Tuning AI Infrastructure Performance with MLPerf HPC v2.0 Benchmarks

As the fusion of AI and simulation accelerates scientific discovery, the need has arisen for a means to measure and rank the speed and throughput for building...

14 MIN READ