For over a decade, NVIDIA GPUs have been built with dedicated encoders and decoders called NVENC and NVDEC. They have a highly parallelized architecture, support popular codec formats, and provide direct access to GPU memory for optimized encode and decode operations.

GPU-accelerated video means offloading video processing to NVENC and NVDEC, which frees CPU cycles and moves the work onto hardware units built for it. To enable GPU acceleration, NVIDIA offers the Video Codec SDK: a rich API that enables high-performance encoding and decoding.

Last year, NVIDIA introduced the Ada Lovelace architecture, with the new eighth-generation NVENC. The Video Codec SDK was updated to support AV1 encoding on NVIDIA Ada Lovelace, as well as split encoding, which harnesses the power of multiple NVENCs simultaneously.

Video Codec SDK 12.1 is the newest release, available now. This post highlights new features supported in version 12.1, and how they’re being used to enhance video processing use cases like content creation and streaming on NVIDIA GPUs.

Explicit split-frame encoding

With split encoding, an input frame is split and each part is processed in parallel by multiple NVENCs on the chip, which results in a significant speedup compared to sequential encoding. Split encoding was initially enabled by default for predetermined high-quality (HQ) and low-latency (LL) presets at 4K and 8K resolutions for HEVC and AV1.

In Video Codec SDK 12.1, you control the implementation of split encoding with a flag:

- Disabling the flag results in the original default functionality, enabled on predetermined presets on 4K and 8K resolutions.

- Enabling the flag implements split encoding across all resolutions and presets.

You can also control the split across two or three NVENCs depending on the number of NVENCs present on the GPU. For more information, see the GPU matrix.

| | Default split encoding for 4K, 8K | Split encoding on all presets and resolutions |
| --- | --- | --- |
| Split encoding API flag = enabled | YES, for some presets | YES |
| Split encoding API flag = disabled | YES, for some presets | NO |
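
The table can be read as a small decision function. The following Python sketch is a hypothetical model of that logic, not SDK code: `EncodeJob`, `uses_split_encoding`, and the `preset_in_default_set` field are illustrative names, and the 3840×2160 threshold and the HEVC/AV1 restriction are assumptions made for the example.

```python
from dataclasses import dataclass

# Hypothetical model of the table above. None of these names come from the
# Video Codec SDK; they only illustrate the decision logic.
SPLIT_CAPABLE_CODECS = {"hevc", "av1"}  # assumption: codecs eligible for split encoding


@dataclass(frozen=True)
class EncodeJob:
    codec: str                   # "h264", "hevc", or "av1"
    width: int
    height: int
    preset_in_default_set: bool  # True if the preset is one of the predetermined HQ/LL presets


def is_4k_or_larger(job: EncodeJob) -> bool:
    # Assumption: "4K and 8K" means at least 3840x2160.
    return job.width >= 3840 and job.height >= 2160


def uses_split_encoding(job: EncodeJob, flag_enabled: bool, nvenc_count: int) -> bool:
    """Return True if the frame would be split across NVENCs in this model."""
    # Splitting needs at least two encoders and an eligible codec.
    if nvenc_count < 2 or job.codec not in SPLIT_CAPABLE_CODECS:
        return False
    # Flag enabled: split on every resolution and preset.
    if flag_enabled:
        return True
    # Flag disabled: original default behavior only.
    return is_4k_or_larger(job) and job.preset_in_default_set


hd = EncodeJob("hevc", 1920, 1080, preset_in_default_set=True)
uhd = EncodeJob("av1", 3840, 2160, preset_in_default_set=True)

for job in (hd, uhd):
    for flag in (False, True):
        print(job.codec, job.width, flag, uses_split_encoding(job, flag, nvenc_count=2))
```

In this model, a 1080p job is split only when the flag is enabled, while a 4K job on a default preset is split either way.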

The features and performance gains using split encoding were highlighted in the Video Encoding 8K60 and Beyond with Ada Lovelace GTC session.

NVENC low-level APIs for H.264, HEVC, and AV1

Video Codec SDK 12.1 exposes a few more low-level APIs for H.264, HEVC, and AV1 encoders to control the hardware encoder’s internal state. These APIs are useful for applications that want fine control over encoding quality.

- Iterative encoding: Iterative encoding enables you to freeze the encoder’s automatic state advancement. You can then re-encode the same frame, each time with a different delta-QP. NVENC stores the candidate state from every iteration, so you can stop iterating and manually advance the encoder from the state of any one of them.

- ReCon: You can access the higher-quality, reconstructed frame in the hardware encoder’s recon loop. NVENC also provides QP and bit-count statistics at the row and block levels.

- External look ahead: This API offers enhanced control for lookahead functionality. The API enables you to decide the scaling for the lookahead pass. The default is 1/16th resolution.
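
The iterative encoding flow in the list above (freeze the state, try several delta-QP values on the same frame, then commit one) can be sketched as follows. This is a toy model under stated assumptions, not the NVENC API: `ToyEncoder`, `encode_trial`, `commit`, and the rate and quality formulas are all hypothetical stand-ins chosen only to make the control flow runnable.

```python
from dataclasses import dataclass, field


@dataclass
class ToyEncoder:
    """Hypothetical stand-in for an encoder whose state advance can be frozen."""
    frames_committed: int = 0
    _trials: dict = field(default_factory=dict)

    def encode_trial(self, complexity: float, base_qp: int, delta_qp: int) -> dict:
        """Encode the current frame without advancing the encoder state."""
        qp = base_qp + delta_qp
        result = {
            "delta_qp": delta_qp,
            "bits": complexity * 2 ** (-qp / 6),  # toy rate model, not measured data
            "quality": 100.0 - 1.5 * qp,          # toy quality score, not a real metric
        }
        self._trials[delta_qp] = result  # keep every candidate state
        return result

    def commit(self, delta_qp: int) -> dict:
        """Advance the encoder state from the chosen trial and drop the rest."""
        chosen = self._trials[delta_qp]
        self._trials.clear()
        self.frames_committed += 1
        return chosen


def encode_frame_iteratively(enc, complexity, base_qp, delta_qps, min_quality):
    """Pick the largest delta-QP whose toy quality score stays acceptable."""
    acceptable = []
    for delta_qp in delta_qps:
        trial = enc.encode_trial(complexity, base_qp, delta_qp)
        if trial["quality"] >= min_quality:
            acceptable.append(delta_qp)
    # Fall back to the least aggressive setting if nothing passes.
    chosen = max(acceptable) if acceptable else min(delta_qps)
    return enc.commit(chosen)


encoder = ToyEncoder()
best = encode_frame_iteratively(encoder, 1e6, 24, [0, 2, 4, 6], min_quality=58.0)
print(best["delta_qp"], encoder.frames_committed)
```

The key design point is that trials never change `frames_committed`; only the explicit `commit` call advances the encoder, mirroring the manual state advancement described above.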

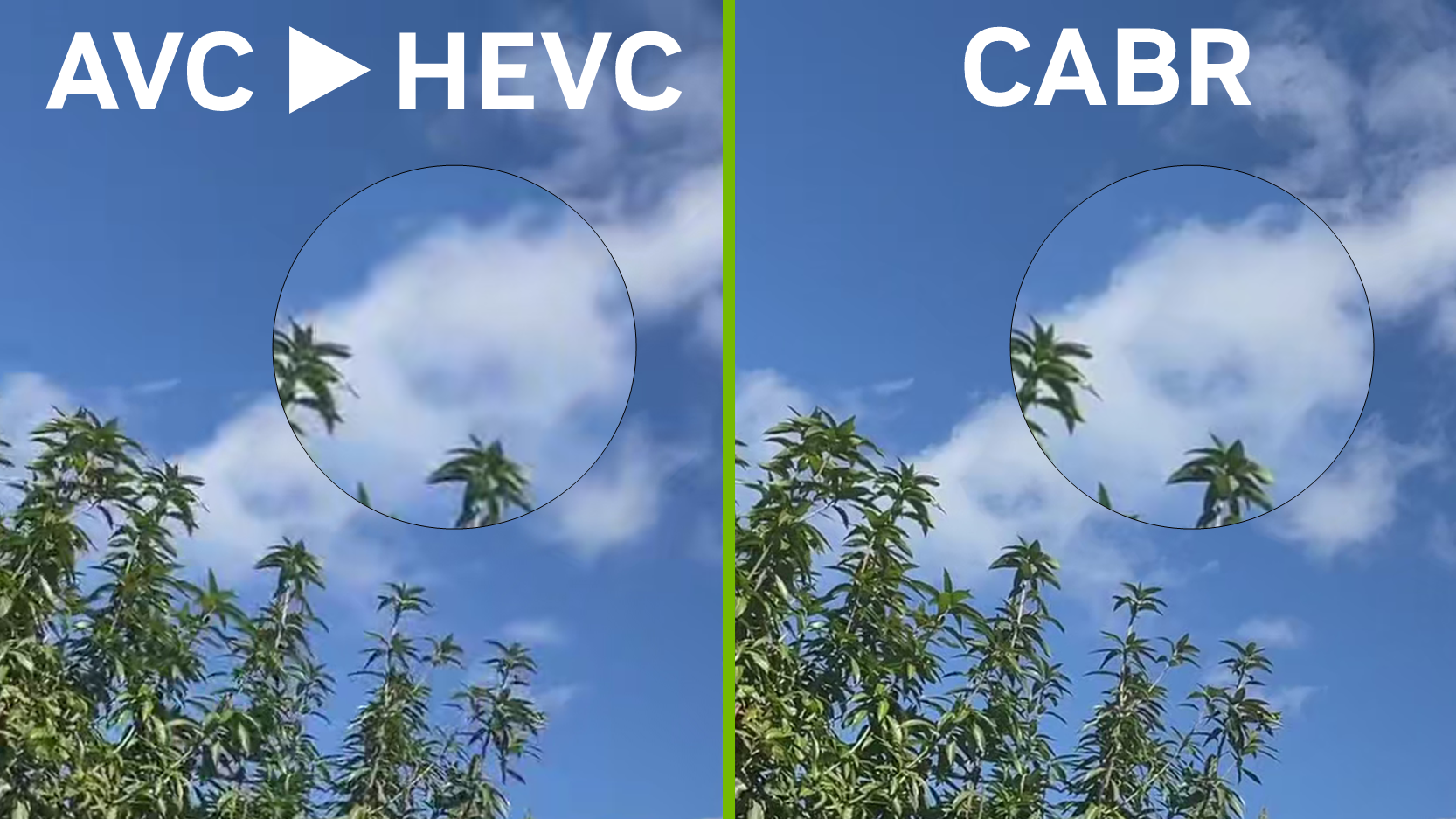

Iterative encoding, recon, and external look ahead are used by Beamr’s Content Adaptive Bit Rate (CABR) library, which works jointly with NVENC to create fully standard-compliant bitstreams. These bitstreams have the lowest possible bit rate for a given perceptual quality, achieved by finding the maximum compression level per frame without any visible quality degradation.

Using the CABR library with the Video Codec SDK enables various use cases including the following:

- Encoder optimization: For existing NVENC users, CABR can be used with the same configuration and bit rate settings to generate compressed videos of the same perceptual quality as the original NVENC encode. The CABR-optimized video can provide 10-30% in savings as compared to the original NVENC compression.

- Encoder modernization: The CABR-NVENC optimizations can be used without specifying any bit rate. In this case, the algorithm produces a compressed video of perceptually identical quality to the original with up to 50% compression gain. This flow enables transcoding from one codec to another while preserving the same quality between bitstreams.

Bitrate savings can vary greatly per file and scene, but typically average 30-40%. These savings from CABR video lessen storage needs, significantly reducing costs where millions of streams must be stored in high quality.

In situations where the underlying encode is already aggressive relative to the native resolution, it may be impossible to reduce bitrate further without degrading quality. On the other hand, in cases like user-generated content (UGC) on smartphones, a conservative bitrate is used to guarantee the quality, and CABR can achieve up to 60-70% savings. These optimizations also reduce content delivery network (CDN) costs by minimizing bandwidth requirements.
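
To see how a percentage bitrate saving translates into storage, the arithmetic can be sketched as below. The workload numbers (one million videos, 120 seconds each, 8 Mbit/s) are hypothetical inputs chosen for illustration, not figures from any customer; only the 30% and 40% savings rates come from the typical range quoted above.

```python
def stored_terabytes(num_videos: int, seconds_per_video: float,
                     bitrate_mbps: float, savings: float = 0.0) -> float:
    """Storage in decimal terabytes for a library encoded at a constant average bitrate."""
    if not 0.0 <= savings < 1.0:
        raise ValueError("savings must be a fraction in [0, 1)")
    # Mbit/s -> bytes/s, then multiply by duration.
    bytes_per_video = bitrate_mbps * 1_000_000 / 8 * seconds_per_video
    return num_videos * bytes_per_video * (1.0 - savings) / 1e12


# Hypothetical library: 1,000,000 videos, 120 s each, 8 Mbit/s average.
baseline = stored_terabytes(1_000_000, 120, 8.0)
for rate in (0.30, 0.40):
    reduced = stored_terabytes(1_000_000, 120, 8.0, savings=rate)
    print(f"{rate:.0%} savings: {baseline:.0f} TB -> {reduced:.0f} TB")
```

Because delivered bytes scale the same way as stored bytes, the same fraction applies to CDN egress for a fixed number of views.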

Before integration with NVENC, CABR was only possible with offline processing. Now, powered by NVIDIA GPUs, CABR-NVENC runs in real time, transforming its use to optimize live video creation services.

Empowered video creation with CABR-NVENC

Wochit is a cloud-based solution for video content creation, offering streamlined tools that organizations use to make professional videos at scale. They recently launched the Wochit Wizard, an AI-powered, prompt-based video creation tool. Beamr’s CABR on NVIDIA GPUs helped Wochit build its new AI service with significant storage and delivery savings.

“We have been encoding, decoding, and transcoding video on GPUs for over 10 years; offering a powerful platform for content creation and handling a million videos annually. Beamr’s CABR technology enables us to significantly reduce file sizes while preserving quality. With CABR’s port to NVIDIA GPUs, we can now leverage it in real time instead of lengthy and costly offline processing. We are excited to use Beamr’s solutions on NVIDIA GPUs to explore new frontiers of AI video creation and enhance our customer’s experience!” – Dror Ginzberg, co-founder and CEO at Wochit.

Optimized cloud streaming for NVIDIA GPUs

V-Nova has been collaborating with NVIDIA to optimize Low Complexity Enhancement Video Coding (LCEVC), a new video standard by MPEG, on NVIDIA GPUs.

LCEVC specifies an enhancement layer that combines with a base-encoded stream to produce an enhanced video stream. LCEVC is particularly amenable to cloud gaming and VR/XR applications. In these use cases, content is rendered by a gaming engine in the cloud, encoded as video, and sent through a standard IP connection to a light, power-efficient client device.

LCEVC lowers the required bitrate beyond what is possible with AVC, HEVC, or AV1 alone, while maintaining minimum latency, reducing latency jitter, and keeping the computational load low.

V-Nova worked with NVIDIA to optimize LCEVC on NVIDIA GPUs, enabling support for lower-end devices on the decoding side (1080p60 low-latency decoding on mobile phones and 4Kp90 on XR headsets). To limit contention between the LCEVC GPU processing and rendering operations, LCEVC harnesses Tensor Cores when available.

This is particularly relevant to cloud-rendered, ultra-low-latency, XR streaming use cases, where wireless distribution to XR headsets requires transmission below 25 Mbit/s. Figure 3 shows HEVC compared to LCEVC-enhanced HEVC at 25 Mbit/s.

In terms of latency, the aim was to avoid any type of buffering and to keep the encoding time for 3680×1920 stereoscopic video within one frame period. Most of the encoding stages were moved onto the GPU, linking closely to the rendering engine and to NVENC.

LCEVC also leveraged ReCon, the new Video Codec SDK 12.1 feature that enables access to the reconstructed frame from NVENC. This eliminates the NVDEC stage and brings the encoding time per frame to ~5 ms. Overall, LCEVC on NVIDIA GPUs enables a 10x speedup compared to CPU implementations.
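
As a rough check on what "within a frame" means for the ~5 ms figure, the frame period and per-frame bit budget can be computed as below. The frame rates used (72 and 90 fps) are assumptions for illustration; the post does not state the streaming frame rate. The 25 Mbit/s and 5 ms values come from the text above.

```python
def frame_budget_ms(fps: float) -> float:
    """Time available per frame, in milliseconds."""
    return 1000.0 / fps


def fits_in_frame(encode_ms: float, fps: float) -> bool:
    """True if a per-frame encode time fits inside one frame period."""
    return encode_ms <= frame_budget_ms(fps)


def bits_per_frame(bitrate_mbps: float, fps: float) -> float:
    """Average number of bits available per frame at a given bitrate."""
    return bitrate_mbps * 1_000_000 / fps


ENCODE_MS = 5.0      # approximate per-frame encode time quoted above
BITRATE_MBPS = 25.0  # wireless budget quoted above

for fps in (72, 90):  # assumed frame rates, not stated in the post
    print(
        f"{fps} fps: budget {frame_budget_ms(fps):.2f} ms, "
        f"fits={fits_in_frame(ENCODE_MS, fps)}, "
        f"{bits_per_frame(BITRATE_MBPS, fps) / 8 / 1000:.1f} kB per frame"
    )
```

Under these assumed frame rates, a 5 ms encode leaves more than half of each frame period for rendering, transmission, and decoding.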

Get started with Video Codec SDK 12.1

Download Video Codec SDK 12.1 now.

For more information about the new video capabilities, see Improving Video Quality and Performance with AV1 and NVIDIA Ada Lovelace Architecture.