CUDA is the software development platform for building GPU-accelerated applications, providing all the components needed to develop applications targeting every NVIDIA GPU platform for general purpose compute acceleration. The latest CUDA release, CUDA 11.2, is focused on improving the user experience and application performance for CUDA developers.

CUDA 11.2 has several important features including programming model updates, new compiler features, and enhanced compatibility across CUDA releases. This post offers an overview of the key CUDA 11.2 software features and highlights:

- Stream-ordered CUDA memory suballocator:

cudaMallocAsyncandcudaFreeAsync - Updates to CUDA graphs and cooperative groups

- Compiler upgrade to LLVM 7 and CUDA kernel link-time optimization

- Enhanced CUDA compatibility support

CUDA 11.2 is available to download now.

CUDA programming model enhancements

With every CUDA release, we continue to enhance the CUDA programming model to enable you to get the most out of NVIDIA GPUs, while maintaining the programming flexibility of the higher-level APIs. In this release, we added an exciting new feature for stream-ordered memory allocation and extended some of the APIs for improving the functionality of cooperative groups and CUDA graphs.

Stream-ordered memory allocator

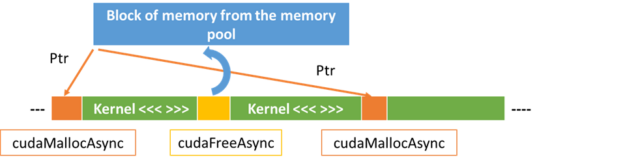

One of the highlights of CUDA 11.2 is the new stream-ordered CUDA memory allocator. This feature enables applications to order memory allocation and deallocation with other work launched into a CUDA stream such as kernel launches and asynchronous copies. This improves application performance by taking advantage of stream-ordering semantics to reuse memory allocations, using and managing memory pools to avoid expensive calls into the OS. The new asynchronous memory allocation and free API actions allow you to manage memory use as part of your application’s CUDA workflow. For many applications, this reduces the need for custom memory management abstractions, and makes it easier to create high-performance custom memory management for applications that need it. Moreover, this feature makes it easier to share memory pools across entities within an application.

cudaMallocAsync(&ptr, size, stream); // Allocates physical memory kernel<<<...,stream>>>(ptr); cudaFreeAsync(ptr, stream); // releases memory back into a pool cudaMallocAsync(&ptr, size, stream); // Reuses previously freed pointer kernel<<<...,stream>>>(ptr); cudaFreeAsync(ptr, stream); // releases memory back into a pool .... // Executes other work in the stream

As shown in this example, CUDA 11.2 introduces new stream-ordered versions of cudaMalloc and cudaFree—called cudaMallocAsync and cudaFreeAsync—which take a stream as an additional argument. The first call to cudaMallocAsync in the example allocates memory from the OS, but the subsequent call to cudaFreeAsync does not free it back to the OS. Instead, the memory is stored in a pool maintained by the CUDA driver, which allows the second call to cudaMallocAsync to reuse the memory previously freed, if it is of sufficient size.

For more information, see cudaMallocAsync in the C++ API Routines topic in the CUDA Toolkit documentation. For more information about how stream-ordered allocation works, the performance benefits, and how to port your application to use the new APIs, see Using the NVIDIA CUDA Stream-Ordered Memory Allocator posts.

Cooperative groups

Cooperative groups, introduced in CUDA 9, provides device code API actions to define groups of communicating threads and to express the granularity at which threads synchronize for more efficient parallel decompositions. For more information, see Cooperative Groups: Flexible CUDA Thread Programming.

When you are using cooperative groups to launch kernels into separate streams with cuLaunchCooperativeKernel, these kernels can now execute concurrently on a GPU. Prior to CUDA 11.2, cooperative kernels were always serialized as if launched into the same stream. Kernels A and B launched into separate streams would execute sequentially on the GPU, with B waiting for A to finish before it could start. With CUDA 11.2, cooperative kernels now run concurrently if they can fit together within the GPU resources.

You can take advantage of this functionality with the existing cuLaunchCooperativeKernel API action. If you were already using multiple streams in your application, you may not even need to modify your application code to benefit from this feature.

CUDA graphs

CUDA graphs were introduced in CUDA 10.0 and have seen a steady progression of new features with every CUDA release. For more information about the performance enhancement, see Getting Started with CUDA Graphs.

CUDA 11.2 introduces a new mechanism for synchronization between graph workloads and non-graph workloads. CUDA graphs now support two pairs of graph node types for external synchronization: signal and wait for CUDA events (available since CUDA 11.1), and external semaphore signal and wait (new in CUDA 11.2). These enhance existing graph functionality allowing internal graph operations to depend upon external work. Allowing graphs to inter-operate with the existing external semaphore infrastructure in CUDA enables new types of synchronization between graph workloads and non-CUDA workloads.

cudaGraphCreate(&graph, 0); // Create the graph

cudaGraphAddKernelNode(&a, graph, NULL, 0, &nodeParams); // create the nodes

cudaGraphAddKernelNode(&b, graph, NULL, 0, &nodeParams);

..

cudaGraphAddExternalSemaphoresSignalNode( &ext_sem, graph, NULL, 0, &nodeParams); // New node for external semaphore signal

..

// Now set up dependencies on each node

cudaGraphAddDependencies(graph, &a, &b, 1); // A->B

..

CUDA 11.2 now also allows graph update to change the kernel function launched by a kernel node, using either explicit node update with cudaGraphExecKernelNodeSetParams or whole graph update with cudaGraphExecUpdate. This is an enhancement when compared to prior releases, where the kernel function could not be modified and had to match the original value.

CUDA compiler

In CUDA 11.2, the compiler tool chain gets multiple feature and performance upgrades that are aimed at accelerating the GPU performance of applications and enhancing your overall productivity.

The compiler toolchain has an LLVM upgrade to 7.0, which enables new features and can help improve compiler code generation for NVIDIA GPUs. The CUDA C++ compiler, libNVVM, and NVRTC shared library have all been upgraded to the LLVM 7.0 code base. The libNVVM library provides GPU extensions to LLVM in support of the wider community of developers of compilers, DSL translators, and parallel applications targeting computational workloads on NVIDIA GPUs. The NVRTC shared library helps compile dynamically generated CUDA source code at runtime.

Link-time optimization for device kernel code (Device LTO), introduced as a preview feature in the CUDA 11.0 toolkit release, is now available as a full-featured optimization capability in CUDA 11.2. Device LTO enables you to enjoy the productivity benefits of separate compilation of device code without incurring an undue runtime performance overhead relative to whole-program device compilation.

The 11.2 CUDA C++ compiler can optionally generate a diagnostic report on inline functions which can provide insights into the compiler’s function inlining decisions. These diagnostic reports can aid in advanced application performance analysis and tuning efforts.

The CUDA C++ compiler aggressively inlines device functions into call sites by default. This can make assembly-level debugging of optimized device code a difficult task. For source code compiled using the 11.2 CUDA C++ compiler toolchain, the cuda-gdb and NVIDIA Nsight Compute debugger can display names of inlined device functions in call-stack backtraces, thereby improving the debugging experience. You can single step through inline functions just like any other device function.

Nsight Developer Tools

NVIDIA Developer Tools are a collection of applications, spanning desktop and mobile targets, which enable you to build, debug, profile, and develop CUDA applications that use the latest visual computing hardware. The NVIDIA Nsight tools have introduced some new functionality as well in CUDA 11.2.

Nsight Systems is a system-wide performance analysis tool, designed to help developers tune and scale software across CPUs and GPUs. The new 2020.5 update enhances Vulkan ray tracing, and profile tracing for NVIDIA Collectives Communication Library (NCCL) and CUDA memory allocation. It also delivers performance and UX improvements.

NVIDIA Nsight Systems 2020.5 is now available for download.

The 2020.3 release of NVIDIA Nsight Compute included in the 11.2 CUDA Toolkit introduces several new features that simplify the process of CUDA kernel profiling and optimization. The update for Nsight Compute introduces a new Profile Series feature enabling you to configure ranges for multiple kernel parameters, and a source file import functionality.

NVIDIA Nsight Compute 2020.3 is now available for download.

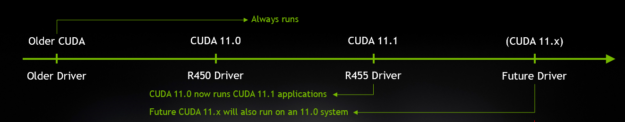

CUDA enhanced compatibility

Here’s a review of the enhanced CUDA compatibility support that was enabled in CUDA 11.1 and what it means for CUDA developers. By leveraging semantic versioning across components in the CUDA Toolkit, these components remain binary-compatible across all minor versions of a toolkit release. This means that CUDA has relaxed the minimum driver version check for the CUDA Toolkit and no longer requires a driver upgrade with minor releases. This is especially important for users who don’t have root privileges on their system.

For enterprise users, upgrading to the newer version of the CUDA driver was particularly cumbersome as it required quite a bit of planning and execution to ensure that all components in the production stack dependent on the driver were accounted for and validated. With enhanced compatibility, you can upgrade to a newer version of the CUDA Toolkit while still using an older version of the CUDA driver.

Enhanced CUDA compatibility also gives you the general flexibility to move to newer toolkits and features, only excepting the ones that have new APIs or which depend on the kernel mode driver. You get the compatibility of the CUDA Toolkit with the CUDA driver across all minor versions. An application can be built for one CUDA minor release (for example, 11.1) and work across all future minor releases within the major family (for example, 11.x), as shown in Figure 2.

For more information about the enhanced compatibility feature and the overall CUDA compatibility model in the toolkit documentation, see the CUDA Compatibility guide.

Summary

To learn more about the CUDA 11 generation toolkit capabilities and introductions, see CUDA 11 Features Revealed and follow future CUDA posts. For more