RTX Global Illumination (RTX GI) produces dynamic, realistic lighting for games by computing diffuse global illumination with ray tracing. It allows developers to extend their existing light probe tools, knowledge, and experience with ray tracing to eliminate bake times and avoid light leaking.

Hardware-accelerated programmable ray tracing is now accessible through DXR, VulkanRT, OptiX, Unreal Engine, and Unity. This enables many ways to compute global illumination. RTX GI is a new strategy for lighting that expands developers’ options.

VFX Breakdown

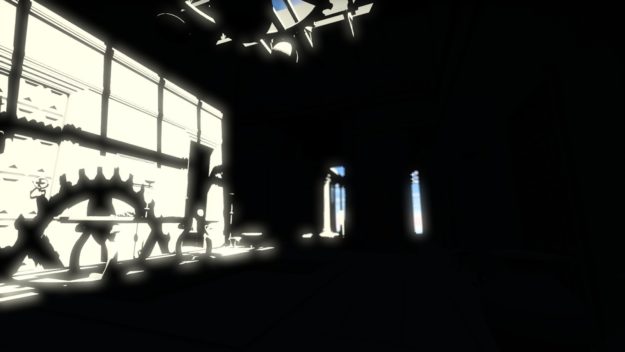

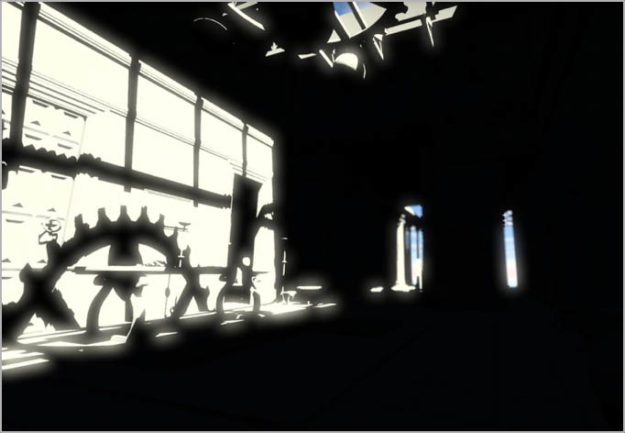

Let’s look at an example of the image quality that RTX GI provides. These images were rendered at 1080p on GeForce RTX 2080 Ti at 90 fps. RTX GI contributed 1 ms/frame to the render cost. That includes the amortized bounding volume hierarchy (BVH) update, ray cast, ray shade, and gathering in screen space during deferred shading.

DDGI (Dynamic Diffuse Global Illumination), the technique underlying RTX GI, is about diffuse lighting, but it also accelerates very rough and second-bounce glossy global illumination. Moreover, this article explains how to combine the ray trace operations for smooth glossy illumination with RTX GI to improve the ray tracing throughput of each. The glossy GI in the sample images cost an additional 2 ms/frame in this implementation, which is configured for the highest image quality.

Figures 1 through 4 below highlight how different aspects of RTX GI come together to create a visually stunning whole while maintaining acceptable performance.

This video gives you a feel for what it looks like in real time.

Advantages

RTX GI is a good fit for:

- Indoor-outdoor and indoor: RTX GI avoids light leaks

- Game runtimes: RTX GI guarantees performance, scales across GPUs and resolutions up to 4K, and has no noise

- Game production: RTX GI avoids baking time and per-probe tuning and accelerates your existing light probe workflow and expertise

- Any light type: RTX GI automatically works with point, line, and area lights, skybox lighting, and emissive objects

- Engines: RTX GI upgrades existing light probe engine data paths and tools

- Content creation: RTX GI works with fully dynamic scenes and does not require manual intervention

- Scalability: One code path gives a smooth spectrum from baked light probes that avoid leaks on legacy platforms, through slowly updating runtime GI on budget GPUs, all of the way up to instant dynamic GI on enthusiast GPUs. Unlock the power of high end PCs while still supporting a broad base.

Costs

The major costs and limitations of RTX GI are:

- 5 MB GPU RAM per cascade, peak 20 MB for all cascades and intermediates

- 1-2 ms per frame for best performance in fixed-time mode, or 1-2 Mrays per frame for ultra, fixed-quality mode (on 2080 Ti those are the same)

- Overhead is minimized when also using ray tracing for other effects, such as glossy and shadow rays

- RTX GI should be introduced early in look development and production. GI changes lighting substantially, requires more physically plausible geometry and tuning than direct light alone, and offers new gameplay opportunities.

- Lighting flows slower on low-end GPUs (world-space lag, no screen-space ghosting)

- Shadowmap-like bias parameter must be tuned to scene scale

- Cannot prevent leaks from zero-thickness/single-sided walls

- Must be paired with a separate glossy global illumination solution, such as screen-space ray tracing, denoised geometric ray tracing, or environment probes

Details

This article is the first of two parts, containing an introduction to RTX GI and a review of real-time global illumination. It sets the stage for part II, which will be a detailed overview of RTX GI.

In addition to this article, several previous presentations describe the basic ideas and give targeted experimental results. We first presented RTX GI as Dynamic Diffuse Global Illumination (DDGI) at GTC’19 and GDC’19 in the NVIDIA-sponsored sessions. You can see the video online.

Global Illumination Highlights

Let’s dive into a simplified introduction of the core concepts of global illumination for real-time application developers. I’ll focus on introducing the terms needed to understand RTX GI and the underlying technology, Dynamic Diffuse Global Illumination. I’m skipping a lot of technical detail and using contemporary game artist jargon. I mention the academic terms in passing to connect to research publications. The Graphics Codex has a deeper explanation that is still friendly.

Direct Illumination

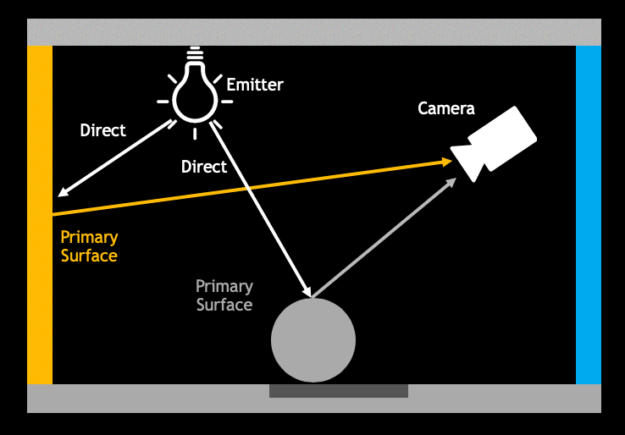

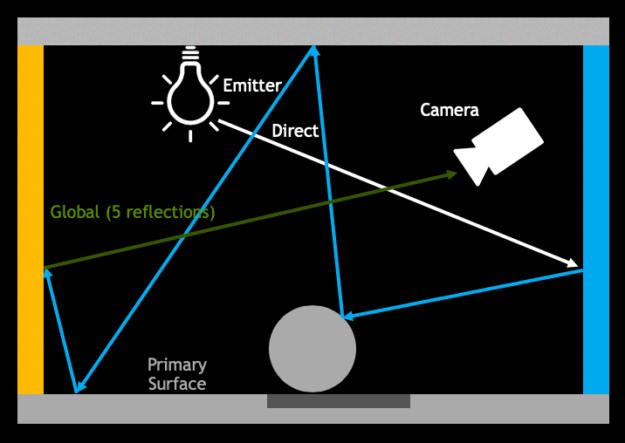

Figure 5 shows a 2D schematic of a gray box. The left wall is painted yellow and the right wall is painted cyan (blue-green). At the top of the box is a light bulb, which I’ll call an emitter to distinguish it clearly from the “light” energy in rays. On the top right of the box is the camera, and resting on the bottom is a gray sphere. The dark rectangle on the floor represents the shadow of the sphere.

Direct illumination is the light arriving at a surface straight from the emitter. I’ve drawn the path of two direct illumination light rays as bright white arrows. There is an infinite number of other direct illumination rays.

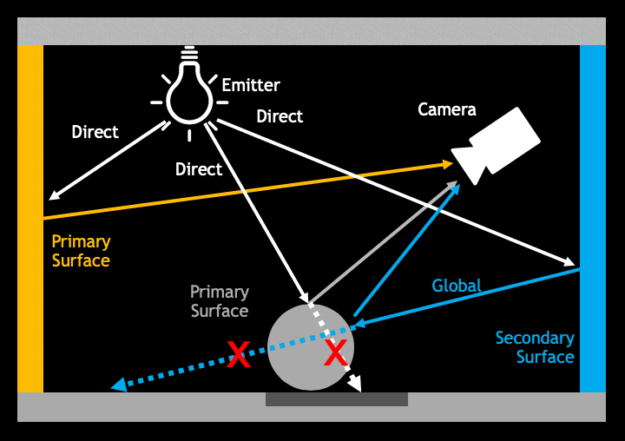

When the direct illumination scatters off surfaces into the camera, the camera can see those surfaces. The color that we see for a surface is the color of light that the surface reflected. This is represented by the gray arrow for the gray sphere and the yellow arrow for the yellow wall. We call these surfaces that the camera sees from its viewpoint the “[camera] primary surfaces”. Note that any surface that receives direct illumination is a primary surface from the light’s viewpoint.

An example of direct illumination in a 3D scene is shown in figure 7. The sun shines through a hole in the roof onto the left wall. Shadows are completely black because no direct line can be traced to those locations from the sun. The emissive skybox is also visible in the distance. The image is very dark because most of the scene is not lit by direct illumination.

Direct illumination is what is computed with pixel shaders and shadow maps in real-time for most games today.
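For reference, here is a minimal sketch of the shadow-map visibility test used for direct illumination, reduced to one dimension for brevity. The function names, the texel indexing, and the `bias` default are hypothetical; a real shadow map is a 2D depth texture rendered from the emitter’s viewpoint and sampled in a pixel shader. The `bias` plays the same role as the shadowmap-like bias listed among RTX GI’s costs above.

```python
import math
from typing import Iterable, List, Tuple


def build_shadow_map(occluders: Iterable[Tuple[int, float]], resolution: int) -> List[float]:
    """Rasterize (texel, depth-from-emitter) samples, keeping the nearest depth per texel."""
    depth = [math.inf] * resolution  # empty texels record no occluder, so everything there is lit
    for texel, d in occluders:
        depth[texel] = min(depth[texel], d)
    return depth


def is_lit(shadow_map: List[float], texel: int, depth: float, bias: float = 0.01) -> bool:
    """A point receives direct light if nothing in the map is closer to the emitter.

    `bias` keeps a surface from shadowing itself because of limited depth precision.
    """
    return depth - bias <= shadow_map[texel]


if __name__ == "__main__":
    # Hypothetical occluder (e.g. the sphere) covering texels 1-2 at distance 3 from the emitter.
    sm = build_shadow_map([(1, 3.0), (2, 3.0)], resolution=4)
    print(is_lit(sm, 2, 5.0))  # floor behind the occluder: False (in shadow)
    print(is_lit(sm, 2, 3.0))  # the occluder's own lit surface: True
    print(is_lit(sm, 0, 5.0))  # floor with nothing above it: True
```

This only answers "can the emitter see this point?", which is why shadow maps alone give the completely black shadows of direct illumination.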

Global Illumination

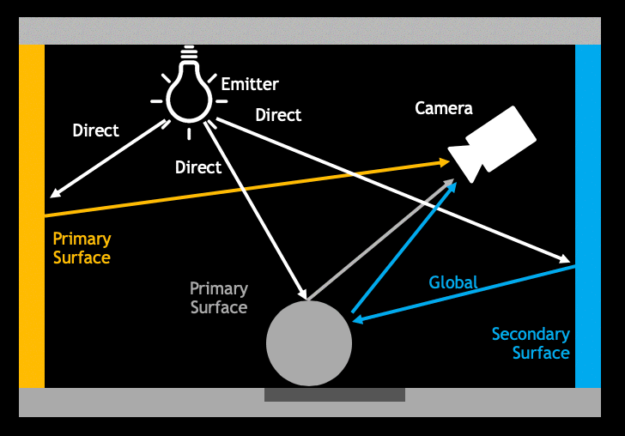

There are also secondary surfaces that the camera can’t see directly, but that affect the image. Figure 7 shows some direct illumination striking the cyan wall behind the camera. The cyan wall reflects cyan light onto the sphere in the center. (Light reflection is also called “scattering” or “bouncing”, and it is the same process as “shading”.)

While the camera can’t see the cyan wall, it can see the side of the sphere that is lit by cyan reflected light. That light is indirect, or global, illumination, abbreviated as “GI”.

In this case, the cyan light on the side of the sphere has reflected twice before reaching the camera.

Since the light keeps reflecting off surfaces until it is completely absorbed, there is also global illumination of three, four, five, and more reflections. The image on the right shows a path with five reflections. It is dark green by the end because the cyan wall absorbed all of the red and the yellow wall absorbed all of the blue.
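The color filtering along such a path can be sketched as a per-channel product of RGB reflectances. The wall reflectance values and the order of the five reflections below are hypothetical, chosen only so that the cyan wall absorbs all red and the yellow wall absorbs all blue, as in the schematic.

```python
from typing import Iterable, Tuple

RGB = Tuple[float, float, float]


def path_color(emitted: RGB, reflectances: Iterable[RGB]) -> RGB:
    """Filter emitted light through each surface reflectance along a light path.

    Each reflection scales the red, green, and blue channels independently.
    """
    r, g, b = emitted
    for kr, kg, kb in reflectances:
        r, g, b = r * kr, g * kg, b * kb
    return (r, g, b)


# Hypothetical reflectances: each colored wall absorbs one channel fully and 20% of the others.
WHITE = (1.0, 1.0, 1.0)
CYAN_WALL = (0.0, 0.8, 0.8)
YELLOW_WALL = (0.8, 0.8, 0.0)
GRAY = (0.8, 0.8, 0.8)

if __name__ == "__main__":
    # Five reflections in an arbitrary illustrative order.
    print(path_color(WHITE, [CYAN_WALL, GRAY, YELLOW_WALL, GRAY, GRAY]))
```

Red and blue reach zero, and green drops to about 0.33 of the emitted light: a dark green, as in the figure.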

In the real world, global illumination can reflect infinitely. However, some light is absorbed at each surface. After a few reflections there is so little that it is not worth simulating any further. Offline rendering for movies typically uses between five and ten reflections.

Global illumination in games has previously been baked (precomputed) offline during production and stored in light maps or irradiance probes. There are some clever tricks for dynamic GI, such as screen-space ray tracing, but most global illumination in previous games can’t change with the scene. With GPU-accelerated geometric ray tracing, upcoming games can now compute high-quality global illumination in real time.

In academic terminology, “global” illumination includes the direct light. In game terminology, “global” usually refers to only the indirect light and direct light is computed separately.

The 3D scene in figure 9 contains both direct and full global illumination, taken as a screenshot from our real-time implementation. I’m showing just the light, without materials (textures). The global illumination has a red tint because the unseen wall materials are red. Most of the light in this scene is from global illumination.

The renderer used thousands of reflections for each light path. But some light is lost at each reflection, and there is finite precision in the texture map, so only the first twenty or so reflections make a noticeable difference in image quality. For example, with 90% reflective walls, only $0.9^{20} \approx 0.12$ of the light remains after 20 reflections, so $1 - 0.9^{20} \approx 88\%$ of the light has been absorbed.
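The absorption arithmetic can be checked with a few lines of Python, assuming every surface reflects the same fraction of light in every color channel (a simplification; real materials differ per surface and per channel). The function names are illustrative.

```python
def fraction_remaining(reflectance: float, bounces: int) -> float:
    """Fraction of the original light still travelling after `bounces` reflections."""
    return reflectance ** bounces


def fraction_absorbed(reflectance: float, bounces: int) -> float:
    """Fraction of the original light absorbed after `bounces` reflections."""
    return 1.0 - fraction_remaining(reflectance, bounces)


if __name__ == "__main__":
    for n in (1, 5, 10, 20, 40):
        print(f"{n:2d} reflections: {fraction_absorbed(0.9, n):.1%} absorbed")
```

For 20 reflections off 90% reflective walls this prints 87.8%.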

Visibility

In discussing rendering, visibility generalizes from “what the camera sees” to whether there is an unobstructed straight path between any two points. Primary surfaces have visibility to the camera. Points that receive direct illumination have visibility to the emitter. Shadows are locations that do not have visibility to the emitter, as shown in figure 10.

It’s important that objects can cast indirect light shadows for correct global illumination. This means computing visibility for all points and only allowing light to reflect between ones that have visibility. It seems obvious that objects should block indirect light as well as direct light, since they do in the real world. Computing visibility for global illumination can be tricky, however. Just as 3D games before the year 2000 often did not have correct shadows, many games today either intentionally omit visibility for global illumination or struggle to hide errors in that visibility computation. We call these light leaks or shadow leaks depending on whether the visual error is bright or dark.
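Visibility between two points can be sketched as a segment-versus-geometry test, here against spheres only. The same query serves direct light (point to emitter) and indirect light (point to point). This illustrates only the definition of visibility; it is not how RTX GI computes visibility for global illumination, which is the subject of part II. The names and the `eps` trim (a bias-like offset so the endpoints do not block themselves) are assumptions of this sketch.

```python
import math
from typing import Sequence, Tuple

Vec3 = Tuple[float, float, float]
Sphere = Tuple[Vec3, float]  # (center, radius)


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def segment_blocked(p: Vec3, q: Vec3, sphere: Sphere, eps: float = 1e-4) -> bool:
    """True if `sphere` intersects the segment from p to q (ends trimmed by eps)."""
    center, radius = sphere
    d = sub(q, p)
    length = math.sqrt(dot(d, d))
    d = (d[0] / length, d[1] / length, d[2] / length)
    # Solve |p + t*d - center|^2 = radius^2 for t along the unit direction d.
    oc = sub(p, center)
    b = dot(oc, d)
    c = dot(oc, oc) - radius * radius
    disc = b * b - c
    if disc < 0.0:
        return False  # the infinite line misses the sphere entirely
    s = math.sqrt(disc)
    return any(eps < t < length - eps for t in (-b - s, -b + s))


def visible(p: Vec3, q: Vec3, scene: Sequence[Sphere]) -> bool:
    """Light may travel between p and q only if no object blocks the segment."""
    return not any(segment_blocked(p, q, s) for s in scene)


if __name__ == "__main__":
    ball = [((0.0, 0.0, 0.0), 1.0)]  # a sphere of radius 1 at the origin
    print(visible((-3.0, 0.0, 0.0), (3.0, 0.0, 0.0), ball))  # False: the sphere blocks the path
    print(visible((-3.0, 2.0, 0.0), (3.0, 2.0, 0.0), ball))  # True: the path passes above it
```

A GI method that skips this test lets light pass through the sphere, which is exactly the light leak described above.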

Glossy Reflection

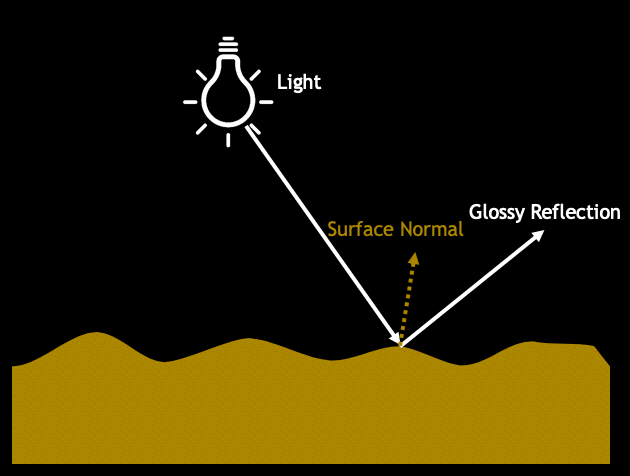

The diagram in figure 11 shows a schematic of a “flat” surface viewed under a magnifying glass. Everything is usually a little bit rough at a microscopic level, so the surface acts like a broken mirror, even for materials that you wouldn’t think of as mirror-like, such as rocks, apples, or leather. These many tiny mirror fragments are called microfacets.

When light hits the surface and reflects right off a microfacet, that creates a glossy reflection. (The terms specular and shiny are also used for this, and GGX and Blinn-Phong are two microfacet models that you might be familiar with.)

Glossy reflection goes by many names and models, including specular, shiny, microfacet, roughness/smoothness, GGX, Blinn-Phong, highlights, and so on, as seen in figure 12. They share some characteristics:

- Light scatters at the surface

- Appearance changes with angle

- Reflection color is often unchanged by the material

The reflection direction follows the mirror direction of the microfacet: it bounces like a billiard ball. If the surface is microscopically smooth, you see a perfect mirror reflection. Rough surfaces generate blurry or dull reflections because different facets send light in different directions.

If you look directly at something really bright in the reflection, such as an emitter, then you see a highlight. But really everything is being reflected; sometimes it’s just too dark to notice unless you look carefully.
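The billiard-ball rule is the standard mirror formula $r = d - 2(d \cdot n)\,n$ for an incoming direction $d$ and unit normal $n$. The sketch below also adds a toy rough surface that tilts the facet normal at random before mirroring, to show how differently oriented facets spread the reflection. The `rough_reflect` function is a hypothetical illustration only; it is not GGX, Blinn-Phong, or any production microfacet distribution.

```python
import math
import random
from typing import Tuple

Vec3 = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def normalize(v: Vec3) -> Vec3:
    length = math.sqrt(dot(v, v))
    return (v[0] / length, v[1] / length, v[2] / length)


def reflect(d: Vec3, n: Vec3) -> Vec3:
    """Mirror the incoming direction d about the unit normal n: r = d - 2 (d . n) n."""
    k = 2.0 * dot(d, n)
    return (d[0] - k * n[0], d[1] - k * n[1], d[2] - k * n[2])


def rough_reflect(d: Vec3, n: Vec3, roughness: float, rng: random.Random) -> Vec3:
    """Toy microfacet: tilt the normal randomly, then mirror about that facet.

    roughness = 0 gives a perfect mirror. Keep roughness <= 0.5 so the tilted
    normal can never collapse to zero length.
    """
    jitter = (rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0))
    m = normalize((n[0] + roughness * jitter[0],
                   n[1] + roughness * jitter[1],
                   n[2] + roughness * jitter[2]))
    return reflect(d, m)


if __name__ == "__main__":
    floor = (0.0, 1.0, 0.0)
    print(reflect((1.0, -1.0, 0.0), floor))  # (1.0, 1.0, 0.0): down-right becomes up-right
    rng = random.Random(1)
    for _ in range(3):
        print(rough_reflect((1.0, -1.0, 0.0), floor, 0.3, rng))  # scattered around (1, 1, 0)
```

Raising `roughness` widens the spread of reflected directions, which is the blurrier reflection described above.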

RTX GI will light the surfaces seen in glossy reflections and can incorporate second-order glossy reflections into the GI paths that lead to the final diffuse reflections. But it does not render glossy reflections for the camera’s view. This article briefly describes a technique for glossy ray tracing but does not discuss how to optimize it in production as the focus here is on diffuse GI.

Diffuse Reflection

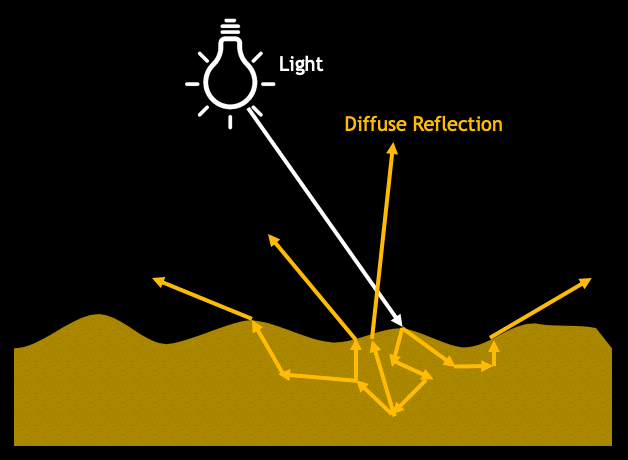

You don’t see much glossy reflection on some surfaces, such as office walls. Most of the light that shines on those surfaces penetrates a little under the surface instead of scattering off the surface. The light scatters around and then comes back out in a random direction. This is diffuse reflection, diagrammed in figure 13.

Since the light interacts heavily with the material, the color of light that emerges has been filtered by the material. This works a little differently in the real world than in real-time rendering because most rendering only tracks the three color contributions of red, green, and blue. However, that approximation is good enough most of the time, at least for entertainment applications.

Examples of diffuse reflection techniques include Matte, Lambertian, Oren-Nayar, Henyey-Greenstein, Diffuse BRDF, and more. The common characteristics include:

- Light scatters just below the surface

- Visible from all directions

- Color is the product of the light and material colors
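The simplest of these models, Lambertian reflection, can be sketched as the per-channel product of light and material color, scaled by the cosine between the surface normal and the direction to the light. Note that no view direction appears, which is why a diffuse surface looks the same from all directions. Physically based renderers usually also divide by π; this sketch assumes that constant is folded into the light color. The function name is illustrative.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]
RGB = Tuple[float, float, float]


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def lambert(light: RGB, albedo: RGB, n: Vec3, to_light: Vec3) -> RGB:
    """Diffuse (Lambertian) reflection of one light.

    n and to_light are unit vectors. Light arriving from behind the surface
    contributes nothing, hence the clamp to zero.
    """
    cos_theta = max(0.0, dot(n, to_light))
    return (light[0] * albedo[0] * cos_theta,
            light[1] * albedo[1] * cos_theta,
            light[2] * albedo[2] * cos_theta)


if __name__ == "__main__":
    up = (0.0, 0.0, 1.0)
    # Yellow light on a cyan material: only green survives the product.
    print(lambert((1.0, 1.0, 0.0), (0.0, 1.0, 1.0), up, up))
```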

The same physical process gives rise to deep subsurface scattering and transmission, which we don’t need for describing RTX GI.

The picture in figure 14 shows the diffuse portion of global illumination in our 3D scene. This includes some diffuse light scattered off tiny dust and fog particles in the air, creating volumetric effects.

In physics terminology, the diffuse global illumination I’ve described is the irradiance field. It consists of all kinds of reflection, where the last reflection before the camera is diffuse. Modern light maps/radiosity normal maps, voxels, and irradiance probes store this kind of lighting, although often with some problems handling visibility. The diffuse GI/irradiance field is different from radiosity, which requires all reflection everywhere to be diffuse. That’s what Quake III and older light maps stored.
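What an irradiance probe stores for one normal direction is the cosine-weighted integral of incoming light, $E(n) = \int_{\Omega} L(\omega) \max(0, n \cdot \omega)\, d\omega$. The sketch below estimates that integral by Monte Carlo at a single point whose normal is fixed to +z, using cosine-weighted sampling so the estimator reduces to π times the mean radiance. It does not show how RTX GI traces, stores, or updates its probes (that is part II), and the radiance functions in the usage are hypothetical stand-ins for traced rays.

```python
import math
import random
from typing import Callable, Tuple

Vec3 = Tuple[float, float, float]


def cosine_sample_hemisphere(rng: random.Random) -> Vec3:
    """Random direction about +z with probability density cos(theta) / pi (Malley's method)."""
    u1, u2 = rng.random(), rng.random()
    r = math.sqrt(u1)  # uniform point on the unit disk...
    phi = 2.0 * math.pi * u2
    return (r * math.cos(phi), r * math.sin(phi), math.sqrt(1.0 - u1))  # ...lifted to the hemisphere


def estimate_irradiance(radiance: Callable[[Vec3], float], samples: int, seed: int = 0) -> float:
    """Monte Carlo estimate of irradiance for a surface facing +z.

    With cosine-weighted samples the cosine term and the pdf cancel,
    leaving E ~= pi * mean(L).
    """
    rng = random.Random(seed)
    total = 0.0
    for _ in range(samples):
        total += radiance(cosine_sample_hemisphere(rng))
    return math.pi * total / samples


if __name__ == "__main__":
    print(estimate_irradiance(lambda w: 1.0, 1000))     # uniform sky: pi
    print(estimate_irradiance(lambda w: w[2], 100000))  # brighter overhead: close to 2*pi/3
```

Because only the cosine-weighted sum is kept, the probe no longer knows which direction the light came from, which is why this representation suits diffuse reflection but not glossy reflection.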

Summary

We’ve now covered all of the terms needed to understand what the RTX GI technique provides:

- Dynamic – Everything can change at runtime: camera position, emitters, geometry, and even materials.

- Diffuse – The last reflection into the camera is from the diffuse part of the material. The GI paths up to the last reflection can be both glossy and diffuse. The glossy part of the last reflection is handled separately with glossy ray tracing or screen-space ray tracing.

- Global Illumination – Light that has reflected off at least two surfaces before reaching the camera, up to infinitely many reflections within texture map precision.

as well as the key idea of visibility for global illumination, which RTX GI computes in a new way to prevent light leaks and speed workflow.

Part II will dive into the details of RTX GI with ray tracing.

I wrote this blog article in collaboration with my colleagues Zander Majercik and Adam Marrs at NVIDIA.

The team at NVIDIA continues to work with partners on the first engine and game integrations of DDGI. During that process I’m updating this article with new information on best practices and additional implementation detail. Game and engine developers can contact Morgan McGuire or on Twitter for integration support from NVIDIA.

If you are working on ray-traced games, we also recommend looking at our newly released Nsight Graphics 2019.3, a debugging and GPU profiling tool which has been updated to include support for DXR and NVIDIA VKRay.