As vision AI complexity increases, streamlined deployment solutions are crucial to optimizing spaces and processes. NVIDIA accelerates development, turning ideas into reality in weeks rather than months with NVIDIA Metropolis AI workflows and microservices.

In this post, we explore the following topics:

- Cloud-native AI application development and deployment with NVIDIA Metropolis microservices

- Simulation and synthetic data generation with NVIDIA Isaac Sim

- AI model training and fine-tuning with NVIDIA TAO Toolkit

- Automated accuracy tuning with PipeTuner

Cloud-native AI application development and deployment with Metropolis microservices and workflows

Managing and automating infrastructure with AI is challenging, especially for large and complex spaces like supermarkets, warehouses, airports, ports, and cities. It’s not just about scaling the number of cameras, but building vision AI applications that can intelligently monitor, extract insights, and highlight anomalies across hundreds or thousands of cameras in spaces of tens or hundreds of thousands of square feet.

A microservices architecture enables scalability, flexibility, and resilience for complex multi-camera AI applications by breaking them down into smaller, self-contained units that interact through well-defined APIs. This approach enables the independent development, deployment, and scaling of each microservice, making the overall application more modular and easier to maintain.

Key components of real-time, scalable multi-camera tracking and analytics applications include the following:

- A multi-camera tracking module to aggregate local information from each camera and maintain global IDs for objects across the entire scene

- Different modules for behavior analytics and anomaly detection

- Software infrastructure such as a real-time, scalable message broker (for example, Kafka) and a database (for example, Elasticsearch)

- Standard interfaces to connect with downstream services needing on-demand metadata and video streams

- Each module must be a cloud-native microservice to enable your application to be scalable, distributed, and resilient

Metropolis microservices give you powerful, customizable, cloud-native building blocks for developing vision AI applications and solutions. They make it far easier and faster to prototype, build, test, and scale deployments from edge to cloud with enhanced resilience and security. Accelerate your path to unlock business insights for a wide range of spaces, ranging from warehouses and supermarkets to airports and roadways.

For more information and a comprehensive list of microservices, see the NVIDIA Metropolis microservices documentation.

The next sections cover some key microservices in more detail:

- Media Management

- Perception

- Multi-Camera Fusion

Media Management microservice

The Media Management microservice is based on the NVIDIA Video Storage Toolkit (VST) and provides an efficient way to manage cameras and videos. VST features hardware-accelerated video decoding, streaming, and storage.

It supports ONVIF Profile S devices, with ONVIF discovery, control, and dataflow. You can also manage devices manually by IP address or RTSP URL. Both H.264 and H.265 video formats are supported. VST is designed for security, industry-standard protocols, and multiplatform support.

Perception microservice

The Perception microservice takes input data from the Media Management microservice and generates perception metadata (bounding boxes, single-camera trajectories, Re-ID embedding vectors) within individual streams. It then sends these data to downstream analytics microservices for further reasoning and insight.
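To make the shape of this metadata concrete, here is a minimal Python sketch of one per-frame record. The class and field names (`Detection`, `FrameMetadata`, `sensor_id`, `track_id`, `embedding`) are hypothetical and chosen only for illustration; the actual message schema is defined in the Metropolis microservices documentation.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class Detection:
    """One object seen in one frame of one camera (hypothetical layout)."""
    track_id: int           # single-camera tracker ID, unique only within a stream
    bbox: List[float]       # [left, top, right, bottom] in pixels
    embedding: List[float]  # Re-ID feature vector for later cross-camera matching


@dataclass
class FrameMetadata:
    """All detections from one frame of one stream (hypothetical layout)."""
    sensor_id: str
    timestamp_ms: int
    detections: List[Detection] = field(default_factory=list)

    def to_json(self) -> str:
        # Serialize to a JSON string, the kind of payload a message broker could carry.
        return json.dumps(asdict(self))


frame = FrameMetadata(
    sensor_id="camera-01",
    timestamp_ms=1_700_000_000_000,
    detections=[
        Detection(track_id=7, bbox=[100.0, 50.0, 180.0, 260.0], embedding=[0.1, 0.9])
    ],
)
payload = frame.to_json()
restored = json.loads(payload)  # what a downstream consumer would parse
```

Keeping the record self-describing (sensor, timestamp, per-object IDs) is what lets downstream microservices consume it without knowing anything about the inference pipeline that produced it.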

The microservice is built with the NVIDIA DeepStream SDK. It offers a low-code or no-code approach to real-time video AI inference by providing pre-built modules and APIs that abstract away low-level programming tasks. With DeepStream, you can configure complex video analytics pipelines through a simple configuration file, specifying tasks such as object detection, classification, tracking, and more.

Multi-Camera Fusion microservice

The Multi-Camera Fusion microservice aggregates and processes information across multiple camera views, taking perception metadata from the Perception microservice through Kafka (or any custom source with a similar message schema) and extrinsic calibration information from the Camera Calibration Toolkit as inputs.

- Inside the microservice, the data first goes to the Behavior State Management module, which maintains the behaviors from previous batches and concatenates them with data from incoming micro-batches to create trajectories.

- Next, the microservice performs two-step hierarchical clustering, re-assigning behaviors that co-exist and suppressing overlapping behaviors.

- Finally, the ID Merging module consolidates individual object IDs into global IDs, maintaining a correlation of objects observed across multiple sensors.
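The ID merging idea can be sketched in a few lines of Python. This is a deliberately simplified stand-in, not the microservice's algorithm: it links single-camera tracklets from different cameras whose Re-ID embeddings have a cosine similarity above a threshold, then uses union-find to give each linked group one global ID. The function names, the `0.8` threshold, and the sample embeddings are all assumptions for illustration.

```python
import math
from typing import Dict, List, Tuple

Tracklet = Tuple[str, int]  # (camera ID, single-camera track ID)


def cosine_similarity(a: List[float], b: List[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def assign_global_ids(
    embeddings: Dict[Tracklet, List[float]], threshold: float = 0.8
) -> Dict[Tracklet, int]:
    """Group similar tracklets across cameras and number the groups from 0."""
    keys = sorted(embeddings)
    parent = {k: k for k in keys}

    def find(k: Tracklet) -> Tracklet:
        # Follow parent links to the group representative, compressing the path.
        while parent[k] != k:
            parent[k] = parent[parent[k]]
            k = parent[k]
        return k

    for i, a in enumerate(keys):
        for b in keys[i + 1:]:
            # Only compare tracklets that come from different cameras.
            if a[0] != b[0] and cosine_similarity(embeddings[a], embeddings[b]) >= threshold:
                parent[find(b)] = find(a)

    group_ids: Dict[Tracklet, int] = {}
    return {k: group_ids.setdefault(find(k), len(group_ids)) for k in keys}


# Hypothetical embeddings: the first two tracklets look like the same person.
sample = {
    ("cam1", 3): [1.0, 0.0],
    ("cam2", 9): [0.95, 0.1],
    ("cam2", 4): [0.0, 1.0],
}
ids = assign_global_ids(sample)
unique_objects = len(set(ids.values()))
```

Counting the distinct global IDs, as in the last line, is the kind of aggregate that a multi-camera tracking application can report as the number of unique objects seen.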

Metropolis AI workflows

Reference workflows and applications are provided to help you evaluate and integrate advanced capabilities.

For example, the Multi-Camera Tracking (MTMC) workflow is a reference workflow for video analytics that performs multi-target, multi-camera tracking and provides a count of the unique objects seen over time.

- The application workflow takes live camera feeds as input from the Media Management microservice.

- It performs object detection and tracking through the Perception microservice.

- The metadata from the Perception microservice goes to the Multi-Camera Fusion microservice to track the objects in multiple cameras.

- A parallel thread goes to the extended Behavior Analytics microservice to first preprocess the metadata, transform image coordinates to world coordinates, and then run a state management service.

- The data then goes to the Behavior Analytics microservice, which, together with the MTMC microservice, provides various analytics functions as API endpoints.

- The Web UI microservice visualizes the results.

To learn how to use the reference workflow to build and deploy a multi-camera tracking system, read the blog and the quick start guide.

Interface camera calibration

In most Metropolis workflows, analytics are performed in real-world coordinate systems. To convert camera coordinates to real-world coordinates, a user-friendly, web-based Camera Calibration Toolkit is provided, with features such as the following:

- Easy camera import from VMS

- Interface for reference point selection between camera image and floorplan

- On-the-fly reprojection error for self-checking

- Add-ons for ROIs and tripwires

- File upload for images or building map

- Export to web or API

This intuitive toolkit simplifies the process of setting up and calibrating cameras for seamless integration with Metropolis workflows and microservices.
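As an illustration of what such a calibration enables, the sketch below maps a pixel to floorplan coordinates with a 3x3 planar homography and computes a mean reprojection error over reference points. A homography is a common way to model image-to-ground-plane mapping and is assumed here for illustration; the matrix values, reference points, and function names are hypothetical, and the toolkit's actual export format may differ.

```python
import math
from typing import Sequence, Tuple

Matrix3 = Sequence[Sequence[float]]
Point = Tuple[float, float]


def image_to_world(h: Matrix3, pixel: Point) -> Point:
    """Apply a 3x3 homography to a pixel and normalize the homogeneous result."""
    u, v = pixel
    x = h[0][0] * u + h[0][1] * v + h[0][2]
    y = h[1][0] * u + h[1][1] * v + h[1][2]
    w = h[2][0] * u + h[2][1] * v + h[2][2]
    return (x / w, y / w)


def mean_reprojection_error(
    h: Matrix3, pixels: Sequence[Point], world_points: Sequence[Point]
) -> float:
    """Mean distance between mapped pixels and their marked floorplan locations."""
    errors = [math.dist(image_to_world(h, p), w) for p, w in zip(pixels, world_points)]
    return sum(errors) / len(errors)


# Hypothetical homography: 1 pixel = 0.01 m, with a shifted origin.
H = [
    [0.01, 0.0, -2.0],
    [0.0, 0.01, -1.0],
    [0.0, 0.0, 1.0],
]

world = image_to_world(H, (640.0, 360.0))
error = mean_reprojection_error(H, [(200.0, 100.0)], [(0.0, 0.0)])
```

A small error on the selected reference points suggests the mapping is consistent with the floorplan; a large one is a cue to re-pick points.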

2024 AI City Challenge

The NVIDIA Multi-Camera Tracking workflow was evaluated using the Multi-Camera People Tracking Dataset from the 8th AI City Challenge Workshop, held in conjunction with CVPR 2024. This dataset is the largest in this field, including 953 cameras, 2,491 people, and over 100M bounding boxes, divided into 90 subsets. The total duration of the dataset’s videos is 212 minutes, captured in high definition (1080p) at a frame rate of 30 frames per second.

The NVIDIA approach achieved an impressive HOTA score of 68.7%, ranking second among 19 international teams (Figure 9).
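For readers unfamiliar with the metric, HOTA (Higher Order Tracking Accuracy) combines detection and association quality: at each localization threshold it is the geometric mean of a detection accuracy (DetA) and an association accuracy (AssA), and the final score averages over the thresholds. The sketch below shows only this combination step. The DetA and AssA values are made-up placeholders, not results from the challenge.

```python
import math
from typing import Sequence


def hota(det_a: Sequence[float], ass_a: Sequence[float]) -> float:
    """Average, over localization thresholds, of sqrt(DetA * AssA)."""
    if len(det_a) != len(ass_a) or not det_a:
        raise ValueError("det_a and ass_a must be non-empty and of equal length")
    per_threshold = [math.sqrt(d * a) for d, a in zip(det_a, ass_a)]
    return sum(per_threshold) / len(per_threshold)


# Placeholder values for three thresholds (the standard metric uses 19).
score = hota([0.81, 0.64, 0.49], [0.64, 0.64, 0.36])
```

Because of the geometric mean, a tracker cannot reach a high score by detecting well while associating identities poorly, or the reverse.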

This benchmark focuses only on accuracy in batch mode, where the application has access to entire videos. In online or streaming operating conditions, the application can access only data up to its current frame, not future frames. This may make some submitted approaches impractical or require significant re-architecting for real deployment. Factors that are not considered in the benchmark include the following:

- Latency from input to prediction

- Runtime throughput (how many streams can run given a compute platform or budget)

- Deployability

- Scalability

As a result, most teams do not have to optimize for these factors.

In contrast, Multi-Camera Tracking, as part of Metropolis microservices, must consider and optimize for all these factors in addition to accuracy for real-time, scalable, multi-camera tracking to be deployed in production use cases.

One-click microservices deployment

Metropolis microservices support one-click deployment on AWS, Azure, and GCP. The deployment artifacts and instructions are downloadable on NGC, so you can quickly bring up an end-to-end MTMC application on your own cloud account by providing a few prerequisite parameters. Each workflow is packaged with a Compose file, enabling deployment with Docker Compose as well.

For edge-to-cloud camera streaming, cameras at the edge can be connected, using a Media Management client (VST Proxy) running at the edge, to a Metropolis application running in any of the CSPs for analytics.

This streamlined deployment process empowers you to rapidly prototype, test, and scale your vision AI applications across various cloud platforms, reducing the time and effort required to bring solutions to production.

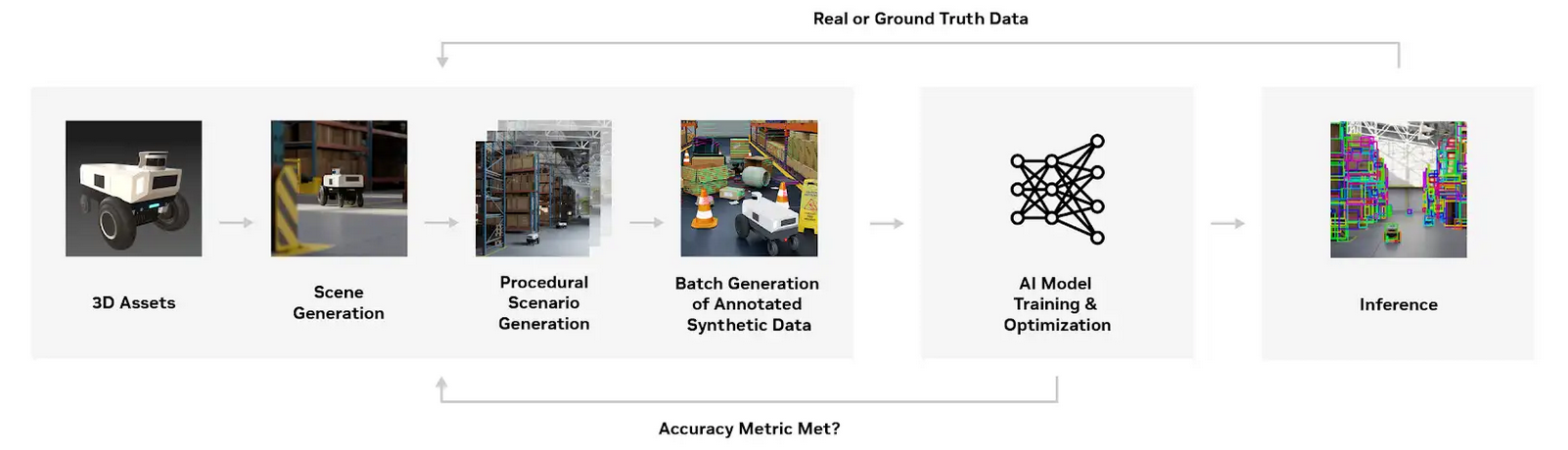

Simulation and synthetic data generation with Isaac Sim

Training AI models for specific use cases demands diverse, labeled datasets, often costly and time-intensive to collect. Synthetic data, generated through computer simulations, offers a cost-effective alternative that reduces training time and expenses.

Simulation and synthetic data play a crucial role in the modern vision AI development cycle:

- Generating synthetic data and combining it with real data to improve model accuracy and generalizability

- Helping develop and validate applications with multi-camera tracking and analytics

- Adjusting deployment environments like proposing optimized camera angles or coverage
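As a small illustration of the first point, the sketch below builds a training set that keeps every real sample and adds synthetic samples until they make up a chosen fraction of the result. The function name, the `0.2` fraction, and the sample names are hypothetical; the right mix depends on the use case and should be validated on held-out real data.

```python
import random
from typing import List, Sequence


def mix_datasets(
    real: Sequence[str],
    synthetic: Sequence[str],
    synthetic_fraction: float,
    seed: int = 0,
) -> List[str]:
    """Return all real samples plus enough synthetic ones to hit the target fraction."""
    if not 0.0 <= synthetic_fraction < 1.0:
        raise ValueError("synthetic_fraction must be in [0, 1)")
    rng = random.Random(seed)
    # Solve n_synth / (n_real + n_synth) = synthetic_fraction for n_synth.
    n_synth = round(len(real) * synthetic_fraction / (1.0 - synthetic_fraction))
    n_synth = min(n_synth, len(synthetic))  # cannot use more than is available
    mixed = list(real) + rng.sample(list(synthetic), n_synth)
    rng.shuffle(mixed)
    return mixed


real = [f"real_{i}" for i in range(8)]
synthetic = [f"synth_{i}" for i in range(20)]
mixed = mix_datasets(real, synthetic, synthetic_fraction=0.2)
```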

NVIDIA Isaac Sim integrates seamlessly into the synthetic data generation (SDG) pipeline, providing a sophisticated companion to enhance AI model training and end-to-end application design and validation. You can generate synthetic data across a wide range of applications, from robotics and industrial automation to smart cities and retail analytics.

The Omni.Replicator.Agent (ORA) extension in Isaac Sim streamlines the simulation of agents like people and autonomous mobile robots (AMRs) and the generation of synthetic data from scenes containing them.

ORA offers GPU-accelerated solutions with default environments, assets, and animations, supporting custom integration. It includes an automated camera calibration feature, producing calibration information compatible with the workflows in Metropolis microservices, such as the Multi-Camera Tracking (MTMC) workflow described earlier in this post.

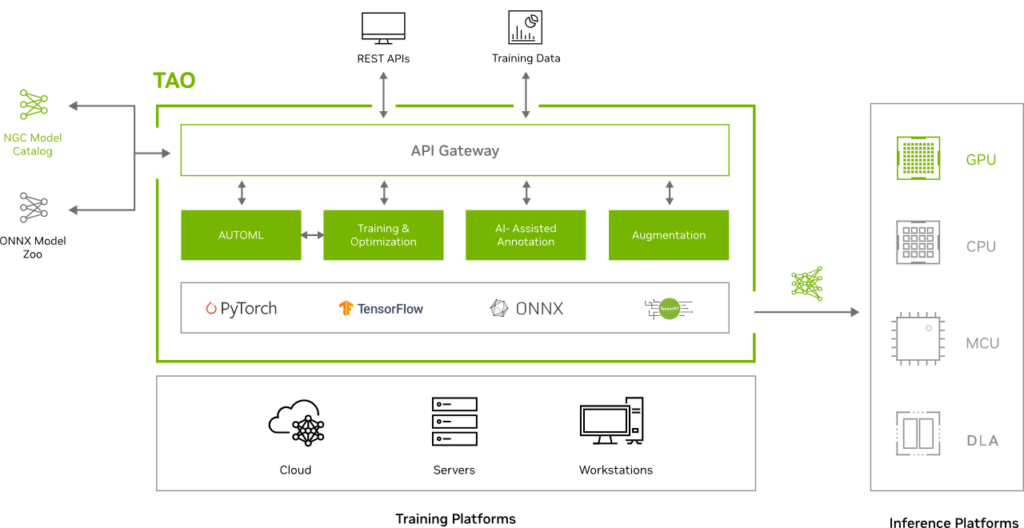

AI model training and fine-tuning with TAO Toolkit

Metropolis microservices employ some CNN-based and Transformer-based models, which are initially pretrained on a real dataset and augmented with synthetic data for more robust generalization and handling of rare cases.

- CNN-based models:

  - PeopleNet: Based on the NVIDIA DetectNet_v2 architecture. Pretrained on over 7.6M images with more than 71M person objects.

  - ReidentificationNet: Uses a ResNet-50 backbone. Trained on a combination of real and synthetic datasets, including 751 unique IDs from the Market-1501 dataset and 156 unique IDs from the MTMC people tracking dataset.

- Transformer-based models:

  - PeopleNet-Transformer: Uses the DINO object detector with a FAN-Small feature extractor. Pretrained on the OpenImages dataset and fine-tuned on a proprietary dataset with over 1.5M images and 27M person objects.

  - ReID Transformer model: Employs a Swin backbone and incorporates self-supervised learning techniques such as SOLIDER to generate robust human representations for person re-identification. The pretraining dataset includes a combination of proprietary and open datasets such as Open Images V5, with a total of 14,392 synthetic images with 156 unique IDs and 67,563 real images with 4,470 IDs.

In addition to directly using these models, you can use the NVIDIA TAO Toolkit to fine-tune them on custom datasets for improved accuracy and to optimize newly trained models for inference throughput on practically any platform. The TAO Toolkit is built on TensorFlow and PyTorch. Read this blog to learn how to fine-tune AI models with NVIDIA TAO, NVIDIA Isaac Sim, and synthetic data.

Automated accuracy tuning with PipeTuner

PipeTuner is a new developer tool designed to simplify the tuning of AI pipelines.

AI services typically incorporate a wide array of parameters for inference and tracking, and finding the optimal settings to maximize accuracy for specific use cases can be challenging. Manual tuning requires deep knowledge of each pipeline module and becomes impractical with extensive, high-dimensional parameter spaces.

PipeTuner addresses these challenges by automating the process of identifying the best parameters to achieve the highest possible key performance indicators (KPIs) based on the dataset provided. By efficiently exploring the parameter space, PipeTuner simplifies the optimization process, making it accessible without requiring technical knowledge of the pipeline and its parameters.
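The idea of automated parameter search can be illustrated with a generic random search. This is not PipeTuner's algorithm or API, neither of which is described in this post; the parameter names, ranges, and the toy `evaluate_kpi` function are hypothetical stand-ins for running a real pipeline on a labeled dataset and scoring the result.

```python
import random
from typing import Callable, Dict, Tuple

Params = Dict[str, float]
ParamSpace = Dict[str, Tuple[float, float]]  # name -> (low, high)


def random_search(
    space: ParamSpace,
    evaluate: Callable[[Params], float],
    trials: int = 200,
    seed: int = 0,
) -> Tuple[Params, float]:
    """Sample parameter sets uniformly and keep the one with the highest KPI."""
    rng = random.Random(seed)
    best_params: Params = {}
    best_score = float("-inf")
    for _ in range(trials):
        candidate = {name: rng.uniform(lo, hi) for name, (lo, hi) in space.items()}
        score = evaluate(candidate)
        if score > best_score:
            best_params, best_score = candidate, score
    return best_params, best_score


def evaluate_kpi(params: Params) -> float:
    # Toy KPI that peaks at detection_threshold=0.5 and match_threshold=0.7.
    # A real evaluation would run the pipeline and compute an accuracy metric.
    return (
        1.0
        - (params["detection_threshold"] - 0.5) ** 2
        - (params["match_threshold"] - 0.7) ** 2
    )


space = {"detection_threshold": (0.0, 1.0), "match_threshold": (0.0, 1.0)}
best_params, best_score = random_search(space, evaluate_kpi)
```

The search loop needs only a parameter space and a scoring function, which is why this style of tuning does not require knowledge of how each pipeline module uses its parameters.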

Summary

Metropolis microservices simplify and accelerate the process of prototyping, building, testing, and scaling deployments from edge to cloud, offering improved resilience and security. The microservices are flexible, easy to configure with zero coding, and packaged with efficient CNN-based and Transformer-based models to fit your requirements. Deploy entire end-to-end workflows with a few clicks to the public cloud or in production.

You can create powerful, real-time multi-camera AI solutions with ease using NVIDIA Isaac Sim, NVIDIA TAO Toolkit, PipeTuner, and NVIDIA Metropolis microservices. This comprehensive platform empowers your business to unlock valuable insights and optimize your spaces and processes across a wide range of industries.

For more information, see the following resources:

- Download NVIDIA Metropolis microservices

- More ‘Get Started’ resources

- NVIDIA Metropolis Microservices Developer Guide

- Learn more about the AI-powered multi-camera tracking use case

- Access the forum for technical questions. Please note that you must first apply for software access using this form. Forum access is granted only after software access is approved.

- Tech Blog: Optimize Processes for Large Spaces with the Multi-Camera Tracking Workflow

- Tech Blog: Enhance Multi-Camera Tracking Accuracy by Fine-Tuning AI Models with Synthetic Data