Collision-free motion generation in unknown environments is a core building block for robotic applications. Generating such motions is challenging: the motion generator must be fast enough for real-time performance and reliable enough for practical deployment.

Many methods have been proposed to address these challenges, ranging from local controllers to global planners. However, these traditional motion planning solutions struggle when the environment is unknown and dynamic. They also require complex visual processing procedures, such as SLAM, to build obstacle representations by aggregating camera observations from multiple viewpoints. These representations then require costly updates whenever objects move and the environment changes.

Motion Policy Networks (MπNets), pronounced “M Pi Nets,” is a new end-to-end neural policy developed by the NVIDIA Robotics research team. MπNets generates smooth, collision-free motion in real time from a continuous stream of data coming from a single static camera. This lets it circumvent the challenges of traditional motion planning, and it is flexible enough to be applied in unknown environments.

We will be presenting this work on December 18 at the Conference on Robot Learning (CoRL) 2022 in New Zealand.

Large-scale synthetic data generation

To train the MπNets neural policy, we first needed to create a large-scale dataset for learning and benchmarking. We turned to simulation for synthetically generating vast amounts of robot trajectories and camera point cloud data.

The expert trajectories are generated using a motion planner that creates consistent motion around complex obstacles while accounting for a robot’s physical and geometric constraints. The planner is a pipeline that combines geometric fabrics from NVIDIA Omniverse, an AIT* global planner, and spline-based temporal resampling.
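The post does not describe how the final resampling stage works. As a rough illustration only, the sketch below assumes a uniform Catmull-Rom spline fitted through joint-space waypoints (such as those a global planner might return) and resamples it to a fixed number of configurations evenly spaced in spline parameter. The helper names `resample_path` and `_catmull_rom` are hypothetical, and the choice of Catmull-Rom is an assumption, not the authors' implementation.

```python
from typing import List, Sequence

Config = List[float]  # one joint-space configuration, e.g. 7 joint angles


def _catmull_rom(p0: Sequence[float], p1: Sequence[float],
                 p2: Sequence[float], p3: Sequence[float], t: float) -> Config:
    """Evaluate a uniform Catmull-Rom segment between p1 and p2 at t in [0, 1]."""
    t2, t3 = t * t, t * t * t
    return [
        0.5 * (2 * b + (-a + c) * t + (2 * a - 5 * b + 4 * c - d) * t2
               + (-a + 3 * b - 3 * c + d) * t3)
        for a, b, c, d in zip(p0, p1, p2, p3)
    ]


def resample_path(waypoints: Sequence[Config], num_samples: int) -> List[Config]:
    """Hypothetical helper: fit a spline through joint-space waypoints and
    return `num_samples` configurations evenly spaced in spline parameter."""
    if len(waypoints) < 2 or num_samples < 2:
        raise ValueError("need at least 2 waypoints and 2 samples")
    # Duplicate the end points so the first and last segments have neighbors.
    pts = [list(waypoints[0])] + [list(w) for w in waypoints] + [list(waypoints[-1])]
    num_segments = len(waypoints) - 1
    samples = []
    for i in range(num_samples):
        s = i * num_segments / (num_samples - 1)  # global parameter in [0, num_segments]
        seg = min(int(s), num_segments - 1)       # index of the active segment
        t = s - seg                               # local parameter in [0, 1]
        samples.append(_catmull_rom(pts[seg], pts[seg + 1], pts[seg + 2], pts[seg + 3], t))
    return samples
```

The spline passes through every waypoint, so the resampled path starts and ends exactly where the planner's path does. A real pipeline would also assign timestamps that respect joint velocity and acceleration limits; this sketch covers only the geometric interpolation.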

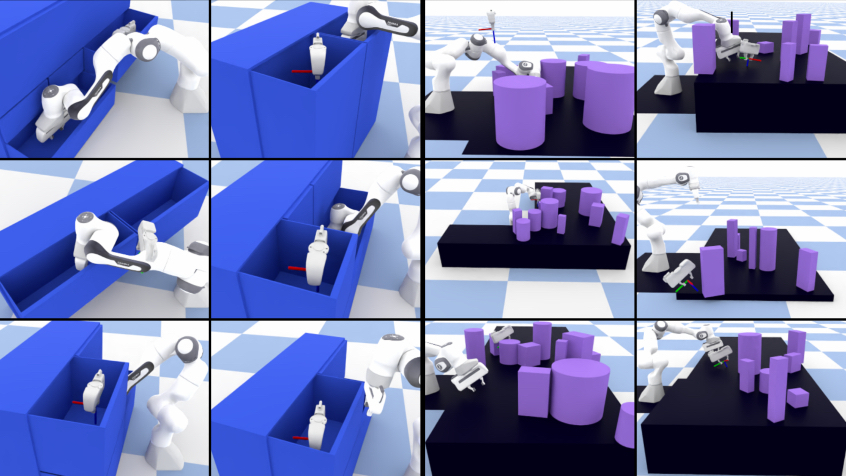

MπNets was trained with more than 3 million expert trajectories and 700 million point clouds rendered in 500,000 simulated environments. Training the neural policy on large-scale data was crucial for generalizing to unknown environments in the real world.
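To give a feel for the dataset's shape, the quick calculation below divides the quoted totals. These are averages only: the post does not say how trajectories or point clouds are distributed across environments, and because the trajectory count is given as "more than 3 million," the per-trajectory figure is an upper bound.

```python
# Dataset totals quoted in the post
trajectories = 3_000_000      # "more than 3 million" expert trajectories
point_clouds = 700_000_000    # rendered point clouds
environments = 500_000        # simulated environments

# Average expert trajectories per simulated environment
traj_per_env = trajectories / environments
# Average point clouds per trajectory (upper bound, since trajectories > 3M)
clouds_per_traj = point_clouds / trajectories
# Average point clouds rendered per environment
clouds_per_env = point_clouds / environments
```

On average that is about 6 trajectories per environment and roughly 233 point clouds per trajectory.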

End-to-end architecture for motion planning

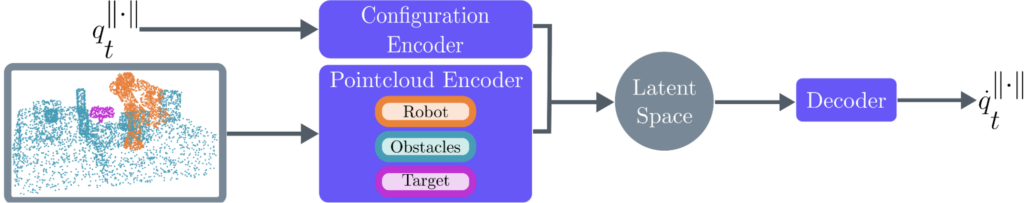

An end-to-end neural network policy, MπNets maps directly from camera point cloud observations to robot joint positions. The policy jointly encodes three inputs: a single-view point cloud observation of the scene from the camera, the robot’s current joint configuration, and the desired target pose that the user commands the robot to reach.

It outputs joint positions for reaching the specified target pose, which are then executed by the robot’s low-level controller.

The input point cloud is automatically labeled with three classes: the robot, the obstacles, and the specified target pose of the robot. The target pose is represented as a point cloud of the robot’s gripper.
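The sketch below illustrates the labeled input described above: three point sets merged into a single cloud, with each point tagged by its class. The integer class IDs, the `(x, y, z, label)` tuple layout, and the `label_scene` helper are assumptions for illustration; the post names the three classes but not how they are encoded. How each subset is obtained (for example, separating robot points from obstacle points) is outside this sketch.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float, float]
LabeledPoint = Tuple[float, float, float, int]

# Hypothetical class IDs; the actual encoding is not specified in the post.
ROBOT, OBSTACLE, TARGET = 0, 1, 2


def label_scene(robot_pts: Sequence[Point],
                obstacle_pts: Sequence[Point],
                target_gripper_pts: Sequence[Point]) -> List[LabeledPoint]:
    """Merge three point sets into one cloud, tagging each point with its class.

    `target_gripper_pts` represents the goal: points on a gripper model
    placed at the desired target pose.
    """
    cloud: List[LabeledPoint] = []
    for label, pts in ((ROBOT, robot_pts), (OBSTACLE, obstacle_pts),
                       (TARGET, target_gripper_pts)):
        cloud.extend((x, y, z, label) for x, y, z in pts)
    return cloud
```

Representing the goal as gripper points lets the target share the same input format as the robot and obstacles, so a single point cloud encoder can consume all three.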

Sim2Real transfer to the real world

MπNets generalizes well to a real robot system with a single, static depth camera. The policy transfers directly to the real world without any real training data, because the domain gap between simulated and real point clouds is small compared with the gap for RGB images.

As shown in Figure 3, it reaches into tightly confined spaces, which are commonplace in human environments, without colliding with obstacles such as plates and mugs. Because its policy architecture is end-to-end, MπNets can also run in a closed-loop real robot system at 9 Hz and react immediately to dynamic scenes, as Figure 3 also shows.
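A closed loop like the one described above can be sketched as follows: every cycle reads a fresh observation and joint state, queries the policy, and sends the result to the low-level controller, so changes in the scene are picked up on the next cycle. All callables (`observe`, `read_joint_state`, `policy`, `send_joint_command`, `at_goal`) are hypothetical placeholders, not the MπNets code or any ROS API; only the 9 Hz default comes from the post.

```python
import time
from typing import Any, Callable, List

Config = List[float]


def run_closed_loop(observe: Callable[[], Any],
                    read_joint_state: Callable[[], Config],
                    policy: Callable[[Any, Config, Any], Config],
                    send_joint_command: Callable[[Config], None],
                    at_goal: Callable[[Config], bool],
                    target: Any,
                    rate_hz: float = 9.0,
                    max_steps: int = 200) -> bool:
    """Query the policy once per cycle; return True if the goal is reached."""
    period = 1.0 / rate_hz
    for _ in range(max_steps):
        t0 = time.monotonic()
        cloud = observe()                # latest point cloud from the static depth camera
        q = read_joint_state()           # measured joint configuration
        if at_goal(q):
            return True
        q_next = policy(cloud, q, target)  # next joint positions from the policy
        send_joint_command(q_next)         # hand off to the low-level controller
        # Sleep for the rest of the cycle to hold the target loop rate.
        time.sleep(max(0.0, period - (time.monotonic() - t0)))
    return False
```

Because nothing is cached between cycles, a moved obstacle only needs to appear in the next point cloud to affect the next command; there is no separate map to update.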

Fast, global, and avoids local optima

MπNets finds solutions much faster than a state-of-the-art sampling-based planner. It is also 46% more likely to find a solution than MPNets, despite not requiring a collision checker. Because it learns from long-term global planning information, MπNets is less likely to get stuck in challenging situations, such as tightly confined spaces.

In Figure 4, both STORM and geometric fabrics get stuck in the first drawer because they cannot work out how to retract and move into the second drawer. Neither reaches the final target pose.

Getting started with MπNets

When trained on a large dataset of simulated scenes, MπNets is faster than traditional planners, more successful than other local controllers, and transfers well to a real robot system even in dynamic and partially observed scenes.

To help you get started with MπNets, our paper is available on arXiv and the source code is available on the Motion Policy Networks GitHub. You can also load our pretrained weights and experiment with the policy using our ROS RViz user interface.

Learn more about neural motion planning, in the context of robot benchmarking, at the Benchmarking workshop during CoRL on December 15.