Predictive maintenance is used for early fault detection, diagnosis, and prediction of when maintenance is needed in various industries, including oil and gas, manufacturing, and transportation. Equipment is continuously monitored for signals such as sound, vibration, and temperature so that potential issues can be reported and alerted on. For computer systems, the first step is to determine the root cause of any failure or error. The current industry-standard practice uses complex rulesets to continuously monitor specific components, but such systems typically alert only on previously observed faults. In addition, these regular expression (regex) rulesets do not scale. As data becomes more voluminous and heterogeneous, maintaining these rulesets becomes a never-ending game of catch-up. Because they alert only on what has been seen in the past, they cannot detect new root causes whose patterns were previously unknown to analysts.
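To make the limitation of regex rulesets concrete, here is a minimal sketch of a rule-based matcher. The rule names, patterns, and log lines are made up for illustration and are not the rules NVEX actually uses. Each rule encodes a fault someone has already seen, so a line describing a different failure matches nothing and goes unreported.

```python
import re

# Hypothetical ruleset: each pattern encodes a fault that analysts have already seen.
# These patterns are illustrative only, not production rules.
KNOWN_FAULT_RULES = {
    "gpu_xid_79": re.compile(r"NVRM: Xid .*: 79"),
    "ecc": re.compile(r"uncorrectable ECC error"),
}


def match_rules(line: str) -> list[str]:
    """Return the names of all rules whose pattern appears in the log line."""
    return [name for name, pattern in KNOWN_FAULT_RULES.items() if pattern.search(line)]


# Made-up log lines: the first matches a known rule, the second is a failure
# that no rule anticipates, so the ruleset stays silent about it.
log_lines = [
    "NVRM: Xid (PCI:0000:07:00): 79, GPU has fallen off the bus.",
    "kernel: nvme nvme0: I/O 123 QID 4 timeout, aborting",
]

for line in log_lines:
    print(match_rules(line), line)
```

Covering the second failure would require an analyst to notice it, write a new pattern, and test it, which is the catch-up cycle described above.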

The approach

To make predictive maintenance more proactive, we’ve implemented a solution that uses natural language processing (NLP) to monitor and interpret kernel logs. The RAPIDS CLX team collaborated with the NVIDIA Enterprise Experience (NVEX) team to run a proof of concept (POC) evaluating this NLP-based solution. The project seeks to:

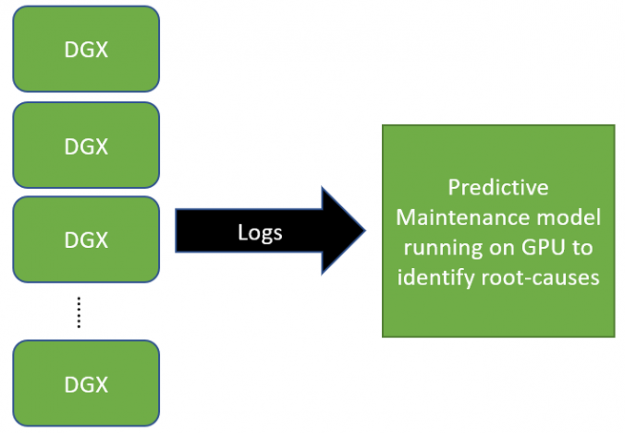

- Drastically reduce the time spent manually analyzing kernel logs of NVIDIA DGX systems by pinpointing the important lines in the vast volume of logs.

- Classify sequences probabilistically, giving the team a tunable threshold for deciding whether a line in the log is a root cause.
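The threshold idea in the second goal can be sketched as follows, assuming the classifier outputs, for each log line, a probability that the line is a root cause (class 1). The function name and the probabilities are hypothetical; this is not the CLX API.

```python
def flag_root_causes(lines, probabilities, threshold=0.5):
    """Return (line, probability) pairs whose root-cause probability meets the threshold.

    probabilities[i] is assumed to be the model's probability that lines[i]
    is a root cause (class 1).
    """
    if len(lines) != len(probabilities):
        raise ValueError("lines and probabilities must have the same length")
    return [(line, p) for line, p in zip(lines, probabilities) if p >= threshold]


lines = [
    "kernel: nvme nvme0: I/O 123 QID 4 timeout, aborting",
    "kernel: mce: [Hardware Error]: Machine check events logged",
    "systemd[1]: Started Session 1 of user root.",
]
probs = [0.97, 0.62, 0.08]  # made-up model outputs

print(flag_root_causes(lines, probs, threshold=0.5))  # flags the first two lines
print(flag_root_causes(lines, probs, threshold=0.9))  # flags only the first line
```

Raising the threshold produces fewer, higher-confidence alerts; lowering it surfaces more candidate root causes at the cost of more lines for analysts to review.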

A complete example of a root cause workflow can be found in the RAPIDS CLX GitHub repository. For deployment beyond the POC, the team is using NVIDIA Morpheus, an open AI framework for developers to implement cybersecurity-specific inference pipelines. Morpheus provides a simple interface for security developers and data scientists to create and deploy end-to-end pipelines for cybersecurity, information security, and general log-based use cases. It is built on a number of other technologies, including RAPIDS, Triton, TensorRT, Streamz, CLX, and more.

The POC is outlined as follows:

- The first step identifies the root causes of past failures. NVEX provided a dataset of kernel log lines that have been marked as root causes to date.

- Next, the problem is framed as a binary classification problem by sorting the log lines into two groups: ordinary and root cause. Ordinary lines are labeled 0 and root cause lines are labeled 1.
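The labeling and splitting steps above can be sketched in plain Python. This assumes the log lines have already been normalized (for example, timestamps and host names stripped) so that exact string matching against the known root-cause lines works; the function names and sample lines are hypothetical.

```python
import random


def label_lines(log_lines, root_cause_lines):
    """Label each line 1 if it is a known root cause, otherwise 0 (ordinary)."""
    known = set(root_cause_lines)
    return [1 if line in known else 0 for line in log_lines]


def split_dataset(lines, labels, test_fraction=0.2, seed=42):
    """Shuffle (line, label) pairs reproducibly and split them into train and test lists."""
    pairs = list(zip(lines, labels))
    random.Random(seed).shuffle(pairs)
    n_test = int(len(pairs) * test_fraction)
    return pairs[n_test:], pairs[:n_test]  # (train, test)


# Made-up log lines for illustration only.
logs = [
    "systemd[1]: Started Session 1 of user root.",
    "NVRM: Xid (PCI:0000:07:00): 79, GPU has fallen off the bus.",
    "kernel: EXT4-fs (sda1): mounted filesystem with ordered data mode.",
    "kernel: e1000e: eth0 NIC Link is Up 1000 Mbps Full Duplex",
    "sshd[812]: Server listening on 0.0.0.0 port 22.",
]
known_root_causes = ["NVRM: Xid (PCI:0000:07:00): 79, GPU has fallen off the bus."]

labels = label_lines(logs, known_root_causes)
train, test = split_dataset(logs, labels, test_fraction=0.4)
print(labels)  # [0, 1, 0, 0, 0]
```

Because root-cause lines are rare, a real split would likely be stratified so that both sets contain some of them; this sketch uses a plain random shuffle.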

We fine-tuned a pre-trained BERT model from Hugging Face to perform the classification. More information about the BERT model can be found in the original paper. The code below loads the pre-trained model “bert-base-uncased” for sequence classification.

from clx.analytics.sequence_classifier import SequenceClassifier
seq_classifier = SequenceClassifier()
seq_classifier.init_model("bert-base-uncased")

We then fine-tuned this model by training it on our own dataset.

seq_classifier.train_model(X_train["log"], y_train, epochs=1)

Epoch: 100%|██████████| 1/1 [25:29<00:00, 1529.92s/it]

Validation Accuracy: 0.9988754734848485

High validation accuracy (close to one) implies most of the predictions are aligned with the original labels.
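Accuracy is simply the fraction of predictions that match the labels. A minimal sketch with toy numbers (not the data from this POC) shows why accuracy alone is not the whole story when root-cause lines are rare, which is why the confusion matrix is examined in the evaluation below.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the original labels."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("inputs must be non-empty and of equal length")
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


# Toy labels: 98 ordinary lines and 2 root-cause lines.
y_true = [0] * 98 + [1] * 2
all_ordinary = [0] * 100  # a trivial "model" that never flags anything

print(accuracy(y_true, all_ordinary))  # 0.98
```

Even a classifier that never flags a root cause scores 0.98 on this toy set, so a high score should be read together with the counts of false negatives and flagged lines.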

Evaluation

Once the training was completed, we ran inference on a separate set of logs (the test set).

seq_classifier.evaluate_model(X_test["log"], y_test)

0.9992423076467732

Like validation accuracy, test set accuracy is close to one, which means most of the predicted classes match the original labels. We ran inference on the test set with two goals:

- Check the number of false positives. In our context, this means the number of lines in the kernel logs that are predicted to be a root cause but are not of interest.

- Check the number of false negatives. In our context, this refers to the lines that are root causes but predicted to be ordinary.
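The two checks above can be sketched as a small counting function, assuming labels and predictions use the 0 (ordinary) / 1 (root cause) encoding described earlier. The function name and toy data are hypothetical.

```python
def count_errors(y_true, y_pred):
    """Count false positives and false negatives for binary labels (1 = root cause)."""
    # False positive: labeled ordinary (0) but predicted root cause (1).
    false_positives = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    # False negative: labeled root cause (1) but predicted ordinary (0).
    false_negatives = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    return false_positives, false_negatives


y_true = [0, 0, 1, 1, 0, 1]
y_pred = [0, 1, 1, 0, 0, 1]
print(count_errors(y_true, y_pred))  # (1, 1)
```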

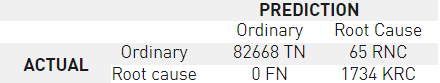

Unlike in conventional classification tasks, accuracy on a labeled test set does not tell the whole story here, because one of our main goals is to find previously unseen root causes, and those lines are labeled as ordinary in the test set. The best way to understand how the model performs is to examine the resulting confusion matrix.

In our use case, the confusion matrix gives the following outputs:

- TN (True Negatives): These are lines labeled as ordinary that the model also predicts as ordinary. The model correctly classifies 82,668 of them.

- FN (False Negatives): Zero false negatives mean the model does not mark any of the known root causes as ordinary.

- NRC (New Root Causes): 65 lines that were labeled as ordinary are predicted to be root causes. These are lines that existing methods would have missed.

- KRC (Known Root Causes): This is the number of lines correctly marked as root cause.
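The four cells above can be tallied with a short sketch that maps each (label, prediction) pair to the names used in this post and collects the NRC lines for analyst review. Note that NRC corresponds to what a standard confusion matrix calls false positives; the post names it differently because these are the lines worth investigating. Function names and sample data are hypothetical.

```python
from collections import Counter

# Map (label, prediction) pairs to the cell names used in this post.
CELL_NAMES = {
    (0, 0): "TN",   # ordinary, predicted ordinary
    (1, 0): "FN",   # known root cause, predicted ordinary
    (0, 1): "NRC",  # labeled ordinary, predicted root cause: candidate new root cause
    (1, 1): "KRC",  # known root cause, predicted root cause
}


def confusion_cells(y_true, y_pred):
    """Count how many lines fall into each named confusion-matrix cell."""
    counts = Counter(CELL_NAMES[(t, p)] for t, p in zip(y_true, y_pred))
    return {name: counts[name] for name in ("TN", "FN", "NRC", "KRC")}


def new_root_cause_candidates(lines, y_true, y_pred):
    """Return lines labeled ordinary but predicted as root causes, for analyst review."""
    return [line for line, t, p in zip(lines, y_true, y_pred) if t == 0 and p == 1]


lines = ["line a", "line b", "line c", "line d"]
y_true = [0, 0, 1, 0]
y_pred = [0, 1, 1, 0]
print(confusion_cells(y_true, y_pred))  # {'TN': 2, 'FN': 0, 'NRC': 1, 'KRC': 1}
print(new_root_cause_candidates(lines, y_true, y_pred))  # ['line b']
```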

NVEX analysts reviewed our predictions and found several interesting log lines that conventional methods had not marked as root causes of issues. With regex-based methods, each such new issue could have taken a significant number of person-hours to triage, and then to develop and harden a rule for.

Applying our solution to more use cases

In the next phase, we plan to deploy similar solutions on NVIDIA platforms to alert users of potential problems or execute corrective actions with Morpheus. Building on the success of this root cause analysis, we aim to extend it into a predictive maintenance task through continuous monitoring of the logs. This use case is certainly not limited to DGX systems. For example, telecommunication infrastructure equipment, including radio, core, and transmission devices, generates a diverse set of logs. Outages of such equipment may result in loss of service and severe fines. Identifying the root cause of outages imposes a significant cost in both dollars and person-hours. We believe that all systems that generate text-based logs, especially those running mission-critical applications, would benefit greatly from such NLP-based predictive maintenance solutions, because they would reduce the mean time to resolution.
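Continuous monitoring can be pictured as scoring each log line as it arrives and raising an alert when the score crosses a threshold. The sketch below is a generic Python generator, not a Morpheus pipeline and not Morpheus APIs; `toy_score` is a hypothetical stand-in for a trained classifier, and in practice `lines` might come from a file being tailed or from standard input.

```python
from typing import Callable, Iterable, Iterator, Tuple


def monitor(
    lines: Iterable[str],
    score: Callable[[str], float],
    threshold: float = 0.9,
) -> Iterator[Tuple[str, float]]:
    """Yield (line, score) for each incoming log line whose score meets the threshold.

    `score` stands in for a trained classifier that returns the probability
    that a line is a root cause.
    """
    for line in lines:
        line = line.rstrip("\n")
        if not line:
            continue  # skip blank lines
        p = score(line)
        if p >= threshold:
            yield line, p


def toy_score(line: str) -> float:
    # Hypothetical stand-in for a model: NOT a real classifier.
    return 0.95 if "error" in line.lower() else 0.05


stream = ["boot ok\n", "\n", "PCIe bus Error detected\n", "fan speed nominal\n"]
for line, p in monitor(stream, toy_score):
    print(f"ALERT ({p:.2f}): {line}")  # ALERT (0.95): PCIe bus Error detected
```

Using a generator keeps memory use constant regardless of log volume, and the alerting step could be replaced by a corrective action without changing the scoring loop.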