NVIDIA Technical Blog

- Agentic AI / Generative AI: Run Step 3.7 Flash on NVIDIA GPUs with Enterprise-Ready Multimodal AI
- Top Stories: NVIDIA Dynamo Snapshot: Fast Startup for Inference Workloads on Kubernetes
- Agentic AI / Generative AI: NVIDIA Blackwell Sets STAC-AI Record for LLM Inference in Finance
- Developer Tools & Techniques: Develop High-Performance GPU Kernels in C++ with NVIDIA CUDA Tile
- Developer Tools & Techniques: NVIDIA CUDA 13.3 Enhances GPU Development with Tile Programming in C++, Compiler Autotuning, and Python Updates

Recent

May 29, 2026

How to Automate AI Model Documentation with the NVIDIA MCG Toolkit

As AI models grow in complexity and regulatory scrutiny intensifies under frameworks including California’s AB-2013 and the EU AI Act, software teams...

7 MIN READ

May 28, 2026

Run Step 3.7 Flash on NVIDIA GPUs with Enterprise-Ready Multimodal AI

AI applications are moving beyond text generation to multimodal systems that can perceive, search, and reason across images, documents, video, and language in...

3 MIN READ

May 27, 2026

NVIDIA Dynamo Snapshot: Fast Startup for Inference Workloads on Kubernetes

The cold-start problem: In production inference deployments, demand fluctuates over time, requiring inference replicas to scale elastically. However,...

15 MIN READ

May 27, 2026

NVIDIA Blackwell Sets STAC-AI Record for LLM Inference in Finance

Large language models (LLMs) are revolutionizing the financial trading landscape by enabling sophisticated analysis of vast amounts of unstructured data to...

10 MIN READ

May 27, 2026

What's New for Game Developers in NVIDIA RTX: DLSS 4.5 for UE5 and Multilingual AI Characters

NVIDIA RTX provides game developers with direct paths to AI-driven characters, frame generation, and ray-traced rendering. This post walks through a meaningful...

5 MIN READ

May 26, 2026

Extract More Kernel Performance with NVIDIA CompileIQ Auto-Tuning

NVIDIA CompileIQ tackles one of the hardest problems in performance engineering: finding the compiler options that unlock the best performance for a specific...

12 MIN READ

May 26, 2026

Develop High-Performance GPU Kernels in C++ with NVIDIA CUDA Tile

Developers can now use NVIDIA CUDA Tile programming within large existing C++ GPU codebases to develop highly optimized GPU kernels using tile-based...

14 MIN READ

May 26, 2026

NVIDIA CUDA 13.3 Enhances GPU Development with Tile Programming in C++, Compiler Autotuning, and Python Updates

NVIDIA CUDA 13.3 brings new capabilities and performance optimizations to developers across the CUDA ecosystem. The launch of NVIDIA CUDA Tile programming in...

13 MIN READ

Inference Performance

May 07, 2026

Model Quantization: Post-Training Quantization Using NVIDIA Model Optimizer

Model quantization is an effective method to reduce VRAM usage and improve inference performance on consumer devices such as NVIDIA GeForce RTX GPUs. By...

8 MIN READ

Apr 17, 2026

Full-Stack Optimizations for Agentic Inference with NVIDIA Dynamo

Coding agents are starting to write production code at scale. Stripe’s agents generate 1,300+ PRs per week. Ramp attributes 30% of merged PRs to agents....

17 MIN READ

Mar 23, 2026

Deploying Disaggregated LLM Inference Workloads on Kubernetes

As large language model (LLM) inference workloads grow in complexity, a single monolithic serving process starts to hit its limits. Prefill and decode stages...

14 MIN READ

Mar 09, 2026

Enhancing Distributed Inference Performance with the NVIDIA Inference Transfer Library

Deploying large language models (LLMs) requires large-scale distributed inference, which spreads model computation and request handling across many GPUs and...

13 MIN READ

Feb 27, 2026

Maximizing GPU Utilization with NVIDIA Run:ai and NVIDIA NIM

Organizations deploying LLMs are challenged by inference workloads with different resource requirements. A small embedding model might use only a few gigabytes...

11 MIN READ

Feb 18, 2026

Unlock Massive Token Throughput with GPU Fractioning in NVIDIA Run:ai

As AI workloads scale, achieving high throughput, efficient resource usage, and predictable latency becomes essential. NVIDIA Run:ai addresses these challenges...

13 MIN READ

Feb 09, 2026

Automating Inference Optimizations with NVIDIA TensorRT LLM AutoDeploy

NVIDIA TensorRT LLM enables developers to build high-performance inference engines for large language models (LLMs), but deploying a new architecture...

9 MIN READ

Jan 26, 2026

Adaptive Inference in NVIDIA TensorRT for RTX Enables Automatic Optimization

Deploying AI applications across diverse consumer hardware has traditionally forced a trade-off. You can optimize for specific GPU configurations and achieve...

9 MIN READ

Build AI Agents

May 19, 2026

NVIDIA-Verified Agent Skills Provide Capability Governance for AI Agents

Autonomous AI agents are becoming more capable. Open models, Model Context Protocol (MCP)-connected tools, and portable skills are also making agents easier to...

8 MIN READ

Apr 17, 2026

Build a More Secure, Always-On Local AI Agent with OpenClaw and NVIDIA NemoClaw

Agents are evolving from question-and-answer systems into long-running autonomous assistants that read files, call APIs, and drive multi-step workflows....

10 MIN READ

Feb 04, 2026

How to Build a Document Processing Pipeline for RAG with Nemotron

What if your AI agent could instantly parse complex PDFs, extract nested tables, and "see" data within charts as easily as reading a text file? With NVIDIA...

9 MIN READ

Jan 15, 2026

How to Train an AI Agent for Command-Line Tasks with Synthetic Data and Reinforcement Learning

What if your computer-use agent could learn a new Command Line Interface (CLI)—and operate it safely without ever writing files or free-typing shell commands?...

11 MIN READ

Jan 05, 2026

How to Build a Voice Agent with RAG and Safety Guardrails

Building an agent is more than just “call an API”—it requires stitching together retrieval, speech, safety, and reasoning components so they behave like one...

9 MIN READ

Dec 12, 2025

How to Build Privacy-Preserving Evaluation Benchmarks with Synthetic Data

Validating AI systems requires benchmarks—datasets and evaluation workflows that mimic real-world conditions—to measure accuracy, reliability, and safety...

11 MIN READ

Nov 07, 2025

Building an Interactive AI Agent for Lightning-Fast Machine Learning Tasks

Data scientists spend a lot of time cleaning and preparing large, unstructured datasets before analysis can begin, often requiring strong programming and...

8 MIN READ

Oct 22, 2025

Create Your Own Bash Computer Use Agent with NVIDIA Nemotron in One Hour

What if you could talk to your computer and have it perform tasks through the Bash terminal, without you writing a single command? With the NVIDIA Nemotron...

14 MIN READ

Agentic AI / Generative AI

May 22, 2026

Synthesize Realistic 3D Medical Images at Scale to Ship Pre‑Trained Models

High‑quality 3D medical imaging data is the foundation of modern radiology AI, but access to it is often constrained by data scarcity, privacy restrictions,...

10 MIN READ

May 21, 2026

Automating and Optimizing Financial Signal Discovery with Multi-Agent Systems

In quantitative finance, researchers build algorithms to trade assets, derivatives, and other financial instruments. A key part of that work is finding...

12 MIN READ

May 21, 2026

Unlock Exascale Performance on NVIDIA GB200 NVL72 with Slurm Topology-Aware Job Scheduling

As AI models grow in scale and complexity, realizing the full performance of modern accelerated infrastructure depends as much on how workloads are placed as...

10 MIN READ

May 21, 2026

Building Token‑Metered AI Services on Telco AI Factories

Telcos around the world are building sovereign AI factories based on the NVIDIA Cloud Partner (NCP) reference architecture, giving governments, enterprises,...

10 MIN READ

May 20, 2026

Mastering Agentic Techniques: AI Agent Customization

Autonomous AI agents are taking on all types of work for businesses: routing logistics fleets, triaging support tickets, generating code, and orchestrating...

16 MIN READ

May 20, 2026

Add a Specialized Deep Research Skill to Agent Harnesses

Agent harnesses like Claude Code, Codex, and LangChain Deep Agents are excellent orchestrators. They manage sessions, chain tools, execute code, and respond to...

8 MIN READ

May 19, 2026

Mastering Agentic Techniques: AI Agent Evaluation

Evaluating an AI model and evaluating an AI agent are related—but they answer fundamentally different questions. A model benchmark tests the capability of a...

6 MIN READ

May 14, 2026

How the NVIDIA Vera Rubin Platform is Solving Agentic AI’s Scale-Up Problem

Agentic inference has fundamentally changed the runtime dynamics of inference workloads by introducing non-deterministic trajectories—actions, observations,...

8 MIN READ

Robotics

Apr 20, 2026

Maximizing Memory Efficiency to Run Bigger Models on NVIDIA Jetson

The boom in open source generative AI models is pushing beyond data centers into machines operating in the physical world. Developers are eager to deploy these...

14 MIN READ

Apr 08, 2026

Integrate Physical AI Capabilities into Existing Apps with NVIDIA Omniverse Libraries

Physical AI—AI systems that perceive, reason, and act in physically grounded simulated environments—is changing how teams design and validate robots and...

13 MIN READ

Mar 31, 2026

Build and Stream Browser-Based XR Experiences with NVIDIA CloudXR.js

Delivering high-fidelity VR and AR experiences to enterprise users has typically required native application development, custom device management, and complex...

8 MIN READ

Mar 25, 2026

How Centralized Radar Processing on NVIDIA DRIVE Enables Safer, Smarter Level 4 Autonomy

In the current state of automotive radar, machine learning engineers can't work with camera-equivalent raw RGB images. Instead, they work with the output of...

11 MIN READ

Mar 23, 2026

NVIDIA IGX Thor Powers Industrial, Medical, and Robotics Edge AI Applications

Industrial and medical systems are rapidly increasing the use of high-performance AI to improve worker productivity, human-machine interaction, and downtime...

11 MIN READ

Mar 16, 2026

Using Simulation to Build Robotic Systems for Hospital Automation

Healthcare faces a structural demand–capacity crisis: a projected global shortfall of ~10 million clinicians by 2030, billions of diagnostic exams annually...

9 MIN READ

Mar 16, 2026

Newton Adds Contact-Rich Manipulation and Locomotion Capabilities for Industrial Robotics

Physics forms the foundation of robotic simulation, enabling realistic modeling of motion and interaction. For tasks like locomotion and manipulation,...

14 MIN READ

Mar 13, 2026

Scale Synthetic Data and Physical AI Reasoning with NVIDIA Cosmos World Foundation Models

The next generation of AI-driven robots like humanoids and autonomous vehicles depends on high-fidelity, physics-aware training data. Without diverse and...

8 MIN READ

Data Science

May 07, 2026

Real-Time Performance Monitoring and Faster Debugging with NCCL Inspector and Prometheus

Distributed deep learning depends on fast, reliable GPU-to-GPU communication using the NVIDIA Collective Communication Library (NCCL). When training slows...

7 MIN READ

May 04, 2026

Optimize Supply Chain Decision Systems Using NVIDIA cuOpt Agent Skills

Modern supply chains operate under the constant pressures of fluctuating demand, volatile costs, constrained capacity, and interdependent decision-making....

6 MIN READ

Apr 30, 2026

Automating GPU Kernel Translation with AI Agents: cuTile Python to cuTile.jl

NVIDIA CUDA Tile (cuTile) is a tile-based programming model that enables developers to write GPU kernels in terms of tile-level operations—loads, stores, and...

9 MIN READ

Apr 28, 2026

Scaling Biomolecular Modeling Using Context Parallelism in NVIDIA BioNeMo

For decades, computational biology has operated under a reductionist compromise. To fit complex biological systems into the limited memory of a single GPU,...

9 MIN READ

Apr 28, 2026

24/7 Simulation Loops: How Agentic AI Keeps Subsurface Engineering Moving

The subsurface industry is at a critical point in its digital evolution. For decades, unlocking reservoir potential has relied on experts performing essential...

8 MIN READ

Apr 24, 2026

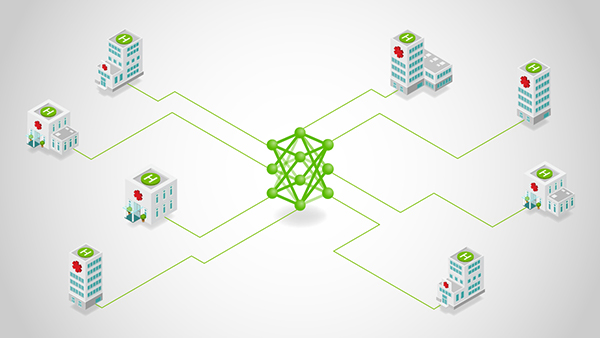

Federated Learning Without the Refactoring Overhead Using NVIDIA FLARE

Federated learning (FL) is no longer a research curiosity—it’s a practical response to a hard constraint: the most valuable data is often the least movable....

8 MIN READ

Apr 23, 2026

Winning a Kaggle Competition with Generative AI–Assisted Coding

In March 2026, three LLM agents generated over 600,000 lines of code, ran 850 experiments, and helped secure a first-place finish in a Kaggle playground...

8 MIN READ

Apr 20, 2026

Run High-Throughput Reinforcement Learning Training with End-to-End FP8 Precision

As LLMs transition from simple text generation to complex reasoning, reinforcement learning (RL) plays a central role. Algorithms like Group Relative Policy...

9 MIN READ

Simulation / Modeling / Design

Apr 17, 2026

Accelerate Clean, Modular, Nuclear Reactor Design with AI Physics

The development of socially acceptable nuclear reactors requires that they are safe, clean, efficient, economical, and sustainable. Meeting these requirements...

12 MIN READ

Apr 14, 2026

Building Custom Atomistic Simulation Workflows for Chemistry and Materials Science with NVIDIA ALCHEMI Toolkit

For decades, computational chemistry has faced a tug-of-war between accuracy and speed. Ab initio methods like density functional theory (DFT) provide high...

14 MIN READ

Mar 31, 2026

Stream High-Fidelity Spatial Computing Content to Any Device with NVIDIA CloudXR 6.0

Spatial computing is moving from visualization to active collaboration, placing ever greater GPU demands on XR hardware to render photorealistic,...

8 MIN READ

Mar 25, 2026

Designing Protein Binders Using the Generative Model Proteina-Complexa

Developing new protein-based therapies and catalysts involves the challenging task of designing protein binders, or proteins that bind to a target protein or...

10 MIN READ

Mar 16, 2026

Design, Simulate, and Scale AI Factory Infrastructure with NVIDIA DSX Air

Building AI factories is complex and requires efficient integration across compute, networking, security, and storage systems. To achieve rapid Time to AI and...

5 MIN READ

Mar 12, 2026

Build Accelerated, Differentiable Computational Physics Code for AI with NVIDIA Warp

Computer-aided engineering (CAE) is shifting from human-driven workflows toward AI-driven ones, including physics foundation models that generalize across...

18 MIN READ

Mar 09, 2026

CUDA 13.2 Introduces Enhanced CUDA Tile Support and New Python Features

CUDA 13.2 arrives with a major update: NVIDIA CUDA Tile is now supported on devices of compute capability 8.X architectures (NVIDIA Ampere and NVIDIA Ada), as...

15 MIN READ

Mar 05, 2026

Controlling Floating-Point Determinism in NVIDIA CCCL

A computation is considered deterministic if multiple runs with the same input data produce the same bitwise result. While this may seem like a simple property...

7 MIN READ

Computer Vision / Video Analytics

May 13, 2026

Transform Video Into Instantly Searchable, Actionable Intelligence with AI Agents and Skills

In today’s data-driven world, organizations increasingly rely on video to capture critical information, yet extracting meaningful, real-time insights from...

12 MIN READ

Apr 16, 2026

How to Build Vision AI Pipelines Using NVIDIA DeepStream Coding Agents

Developing real-time vision AI applications presents a significant challenge for developers, often demanding intricate data pipelines, countless lines of code,...

9 MIN READ

Jan 07, 2026

Build and Orchestrate End-to-End SDG Workflows with NVIDIA Isaac Sim and NVIDIA OSMO

As robots take on increasingly dynamic mobility tasks, developers need physics-accurate simulations that translate across environments and workloads. Training...

12 MIN READ

Dec 16, 2025

Optimizing Semiconductor Defect Classification with Generative AI and Vision Foundation Models

In the heart of every modern electronic device lies a silicon chip, built through a manufacturing process so precise that even a microscopic defect can...

12 MIN READ

Dec 11, 2025

Getting Started with Edge AI on NVIDIA Jetson: LLMs, VLMs, and Foundation Models for Robotics

Running advanced AI and computer vision workloads on small, power-efficient devices at the edge is a growing challenge. Robots, smart cameras, and autonomous...

9 MIN READ

Dec 02, 2025

NVIDIA-Accelerated Mistral 3 Open Models Deliver Efficiency, Accuracy at Any Scale

The new Mistral 3 open model family delivers industry-leading accuracy, efficiency, and customization capabilities for developers and enterprises. Optimized...

6 MIN READ

Nov 25, 2025

Making Robot Perception More Efficient on NVIDIA Jetson Thor

Building autonomous robots requires robust, low-latency visual perception for depth, obstacle recognition, localization, and navigation in dynamic...

15 MIN READ

Nov 10, 2025

Upcoming Livestream: Build Visual AI Agents with NVIDIA Cosmos Reason and Metropolis

On November 18, learn how to fine-tune the NVIDIA Cosmos Reason VLM with your own data to create visual AI agents.

1 MIN READ

Content Creation / Rendering

Apr 30, 2026

Speed Up Unreal Engine NNE Inference with NVIDIA TensorRT for RTX Runtime

Neural network techniques are increasingly used in computer graphics to boost image quality, improve performance, and streamline content creation. Approaches...

7 MIN READ

Apr 30, 2026

Build AI-Powered Games with NVIDIA DLSS 4.5, RTX, and Unreal Engine 5

Today, game developers can begin integrating NVIDIA DLSS 4.5 with Dynamic Multi Frame Generation, Multi Frame Generation 6X, and the second-generation...

7 MIN READ

Apr 30, 2026

How to Build, Run, and Scale High-Quality Creator Workflows in ComfyUI

Creative and visualization teams today produce more assets, in more formats, with leaner teams. Generative AI can accelerate that work – compressing tasks that...

11 MIN READ

Mar 24, 2026

Building NVIDIA Nemotron 3 Agents for Reasoning, Multimodal RAG, Voice, and Safety

Agentic AI is an ecosystem where specialized models work together to handle planning, reasoning, retrieval, and safety guardrailing. As these systems scale,...

10 MIN READ

Mar 10, 2026

NVIDIA RTX Innovations Are Powering the Next Era of Game Development

NVIDIA RTX ray tracing and AI-powered neural rendering technologies are redefining how games are made, enabling a new standard for visuals and performance. At...

13 MIN READ

Mar 10, 2026

Reliable AI Coding for Unreal Engine: Improving Accuracy and Reducing Token Costs

Agentic code assistants are moving into daily game development as studios build larger worlds, ship more DLCs, and support distributed teams. These assistants...

6 MIN READ

Feb 05, 2026

How Painkiller RTX Uses Generative AI to Modernize Game Assets at Scale

Painkiller RTX sets a new standard for how small teams can balance massive visual ambition with limited resources by integrating generative AI. By upscaling...

14 MIN READ

Jan 22, 2026

Scaling NVFP4 Inference for FLUX.2 on NVIDIA Blackwell Data Center GPUs

In 2025, NVIDIA partnered with Black Forest Labs (BFL) to optimize the FLUX.1 text-to-image model series, unlocking FP4 image generation performance on NVIDIA...

9 MIN READ

Edge Computing

May 13, 2026

Accelerated X-Ray Analysis for Nanoscale Imaging (XANI) of Novel Materials

A massive-scale X-ray free-electron laser (XFEL) enables tracking structural and electron dynamics in novel systems, including fusion materials,...

11 MIN READ

May 05, 2026

How to Build In-Vehicle AI Agents with NVIDIA: From Cloud to Car

The automotive cockpit is undergoing a fundamental shift from rule-based interfaces to agentic, multimodal AI systems capable of reasoning, planning, and...

15 MIN READ

Apr 02, 2026

Bringing AI Closer to the Edge and On-Device with Gemma 4

The Gemmaverse expands with the launch of the latest Gemma 4 multimodal and multilingual models, designed to scale across the full spectrum of deployments,...

6 MIN READ

Mar 17, 2026

Building the AI Grid with NVIDIA: Orchestrating Intelligence Everywhere

AI-native services are exposing a new bottleneck in AI infrastructure: As millions of users, agents, and devices demand access to intelligence, the challenge...

11 MIN READ

Mar 16, 2026

Scaling Autonomous AI Agents and Workloads with NVIDIA DGX Spark

Autonomous AI agents are driving the next wave of AI innovation. These agents must often manage long-running tasks that use multiple communication channels and...

10 MIN READ

Mar 12, 2026

Build Next-Gen Physical AI with Edge‑First LLMs for Autonomous Vehicles and Robotics

Physical AI is rapidly evolving, from next-generation software-defined autonomous vehicles (AVs) to humanoid robots. The challenge is no longer how to run a...

7 MIN READ

Feb 10, 2026

Using Accelerated Computing to Live-Steer Scientific Experiments at Massive Research Facilities

Scientists and engineers who design and build unique scientific research facilities face similar challenges. These include managing massive data rates that...

13 MIN READ

Jan 08, 2026

Building Generalist Humanoid Capabilities with NVIDIA Isaac GR00T N1.6 Using a Sim-to-Real Workflow

To make humanoid robots useful, they need cognition and loco-manipulation that span perception, planning, and whole-body control in dynamic environments. ...

8 MIN READ

Data Center / Cloud

May 26, 2026

Run Key Genomics and Protein Folding Workloads Faster with NVIDIA RTX PRO 4500 Blackwell

Precision medicine depends on two fundamental capabilities: understanding disease at the genomic level and identifying treatments at the molecular level. ...

7 MIN READ

May 21, 2026

Get Real-Time Visibility into GPU Usage Across Kubernetes Clusters

Maximizing the value of AI infrastructure demands deep visibility into GPU utilization. Yet many platform teams running AI workloads on Kubernetes operate with...

6 MIN READ

May 11, 2026

Introducing NVIDIA Fleet Intelligence for Real-Time GPU Fleet Visibility and Optimization

The compute capability of large GPU fleets presents unprecedented opportunities to innovate and provide value to customers in record time. Yet these...

8 MIN READ

May 08, 2026

Streaming Tokens and Tools: Multi-Turn Agentic Harness Support in NVIDIA Dynamo

An agentic exchange must preserve a structured interaction: assistant turns interleave reasoning with one or more tool calls, and subsequent user turns return...

17 MIN READ

May 07, 2026

Achieving Peak System and Workload Efficiency on NVIDIA GB200 NVL72 with Slurm Block Scheduling

NVIDIA GB200 NVL72 introduces a fundamentally new way to build GPU clusters by extending NVIDIA NVLink coherence across an entire rack. This design enables...

11 MIN READ

May 05, 2026

Building for the Rising Complexity of Agentic Systems with Extreme Co-Design

Generative AI’s explosive first chapter was defined by humans sending requests and models responding. The agentic chapter is different. Agents don't...

12 MIN READ

Apr 29, 2026

Powering AI Factories with NVIDIA Enterprise Reference Architectures

The next wave of enterprise productivity is being built on AI factories. As organizations deploy agentic AI systems capable of reasoning, automation, and...

8 MIN READ

Apr 22, 2026

Scaling the AI-Ready Data Center with NVIDIA RTX PRO 4500 Blackwell Server Edition and NVIDIA vGPU 20

AI integration is redefining mainstream enterprise applications, from productivity software like Microsoft Office to more complex design and engineering tools....

11 MIN READ

Networking / Communications

May 12, 2026

How to Eliminate Pipeline Friction in AI Model Serving

The path from a trained AI model to production should be smooth, but rarely is. Many teams invest weeks fine-tuning models, only to discover that exporting to...

10 MIN READ

Apr 14, 2026

NVIDIA NVbandwidth: Your Essential Tool for Measuring GPU Interconnect and Memory Performance

When you’re writing CUDA applications, one of the most important things you need to focus on to write great code is data transfer performance. This applies to...

8 MIN READ

Apr 09, 2026

Running Large-Scale GPU Workloads on Kubernetes with Slurm

Slurm is an open source cluster management and job scheduling system for Linux. It manages job scheduling for over 65% of TOP500 systems. Most organizations...

9 MIN READ

Apr 07, 2026

Running AI Workloads on Rack-Scale Supercomputers: From Hardware to Topology-Aware Scheduling

The NVIDIA GB200 NVL72 and NVIDIA GB300 NVL72 systems, featuring NVIDIA Blackwell architecture, are rack-scale supercomputers. They’re designed with 18 tightly...

11 MIN READ

Apr 02, 2026

Accelerating Vision AI Pipelines with Batch Mode VC-6 and NVIDIA Nsight

In vision AI systems, model throughput continues to improve. The surrounding pipeline stages must keep pace, including decode, preprocessing, and GPU...

10 MIN READ

Apr 01, 2026

Accelerate Token Production in AI Factories Using Unified Services and Real-Time AI

In today’s AI factory environment, performance is not theoretical. It is economic, competitive, and existential. A 1% drop in usable GPU time can mean millions...

8 MIN READ

Mar 25, 2026

Scaling Token Factory Revenue and AI Efficiency by Maximizing Performance per Watt

In the AI era, power is the ultimate constraint, and every AI factory operates within a hard limit. This makes performance per watt—the rate at which power is...

10 MIN READ

Mar 16, 2026

Introducing NVIDIA BlueField-4-Powered CMX Context Memory Storage Platform for the Next Frontier of AI

AI‑native organizations increasingly face scaling challenges as agentic AI workflows drive context windows to millions of tokens and models scale toward...

12 MIN READ