Advanced video analysis solutions are in great demand across multiple industries. Popular use cases include understanding customer brand sentiment in retail aisles, occupancy analytics for crowd management in mass transit locations, optimizing vehicle traffic patterns in cities, supporting social distancing protocols in hospitals and malls, and defect detection in manufacturing facilities.

Building and deploying these solutions involves massive engineering effort: gathering relevant datasets, extensively training AI models for high accuracy, achieving real-time performance in large-scale deployments, and keeping those deployments manageable.

Transfer Learning Toolkit helps novice AI application developers and software developers accelerate AI training by providing various pre-trained AI models and in-built capabilities such as transfer learning, pruning, fine-tuning, and Quantization Aware Training. Developers can build highly accurate AI for several popular use cases using purpose-built models such as PeopleNet, VehicleMakeNet, TrafficCamNet, DashCamNet, and more.

NVIDIA DeepStream SDK helps developers and companies build performant vision AI apps and services that can be deployed at scale and managed with ease using Kubernetes and Helm Charts.

We’ve added major features and enhancements requested by our developer community for the production release of our Vision AI software suite: Transfer Learning Toolkit 2.0 and DeepStream SDK 5.0. Here is an overview of key features available today:

Major Feature Enhancements:

General Availability: Transfer Learning Toolkit 2.0

Transfer Learning Toolkit (TLT) eliminates the time-consuming process of training from scratch. It enables developers with limited AI expertise to create highly accurate AI models for deployment. This release extends training support on several popular and state-of-the-art networks to achieve greater inference throughput. The pre-trained models and TLT container are freely available and can be downloaded from NGC. Key highlights include:

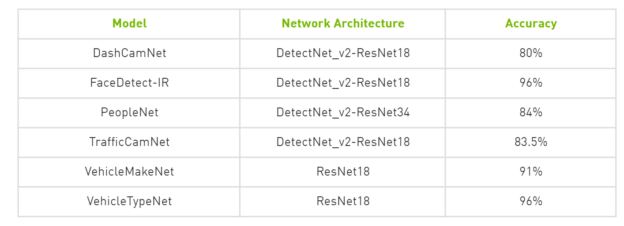

- New NVIDIA purpose-built models: Deploy AI applications for common use cases such as people counting, vehicle tracking, and heatmap generation using highly accurate purpose-built models. Each of these production-quality models is free to download from NGC.

- Quantization Aware Training (QAT): Achieve a 2x inference speedup with INT8 precision while maintaining accuracy comparable to FP16/FP32. Read more about QAT

- Accelerate training and reduce memory bandwidth with Automatic Mixed Precision (AMP) running on Tensor Cores for NVIDIA Volta and Turing GPUs

- Get pixel-level accuracy with instance segmentation using the MaskRCNN network architecture. Read our MaskRCNN developer tutorial

- Extended support for object detection models such as YOLO-V3, SSD, FasterRCNN, RetinaNet, DSSD, and DetectNet_v2

- End-to-end vision AI performance: Out-of-the-box compatibility with DeepStream SDK 5.0 lets you quickly deploy models from TLT and unlock greater throughput. See our product page for end-to-end throughput results using TLT and DeepStream, including the highest accuracy and FPS results for production-quality models tested on NVIDIA T4, Jetson Nano, AGX Xavier, and Xavier NX

- Speed up AI model training with multi-GPU support
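To make the quantization aware training item above more concrete, here is a minimal sketch of the "fake quantization" step that QAT schemes typically insert during training: values are rounded to the INT8 grid and mapped straight back to floats, so the network learns with the rounding error it will see at inference time. This is an illustration only and not TLT's implementation; the function name `fake_quantize`, the symmetric per-tensor scale, and the sample weights are all assumptions made for this example.

```python
def fake_quantize(values, num_bits=8):
    """Round floats to a signed integer grid, then map them back to floats.

    Hypothetical helper for illustration; assumes a non-empty list and a
    single symmetric scale for the whole tensor.
    """
    qmax = 2 ** (num_bits - 1) - 1  # 127 for INT8
    max_abs = max(abs(v) for v in values)
    if max_abs == 0.0:
        return list(values), 1.0
    scale = max_abs / qmax  # float value represented by one integer step
    # Quantize: divide by the scale, round, and clamp to the integer range.
    quantized = [max(-qmax, min(qmax, round(v / scale))) for v in values]
    # Dequantize: the forward pass continues with these rounded floats.
    return [q * scale for q in quantized], scale


# Made-up weights, used only to show the size of the rounding error.
weights = [0.82, -0.41, 0.05, -1.27, 0.33]
dequantized, scale = fake_quantize(weights)
max_error = max(abs(w - d) for w, d in zip(weights, dequantized))
print(f"scale={scale:.4f}, max rounding error={max_error:.4f}")
```

With a symmetric scale, the rounding error per value is bounded by half a quantization step, which is the error a QAT-trained model has already adapted to before it is exported for INT8 inference.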

Getting Started

- Download Transfer Learning Toolkit and pretrained models

- Check out the latest developer tutorials:

Learn more about TLT features and capabilities through our developer tutorials, sample apps and webinars

General Availability: DeepStream SDK 5.0

DeepStream is a streaming analytics toolkit for AI-based image and video understanding. By using DeepStream, you can build efficient edge applications to derive real-time insights. This production release software introduces enhancements and features for AI application developers, software partners, and OEMs building vision AI or advanced video analytics apps and services across many industries including smart cities, retail analytics, health and safety, sports analytics, robotics, manufacturing, logistics and more. Key highlights include:

- Run popular Deep Learning frameworks natively with DeepStream: New inference capability with Triton Inference Server (previously TensorRT Inference Server) enables developers to deploy a model natively in TensorFlow, TensorFlow-TensorRT, PyTorch, or ONNX in the DeepStream pipeline

- Python-based development: Select from either C/C++ or Python-based applications for building your DeepStream pipeline. The two development options provide comparable performance

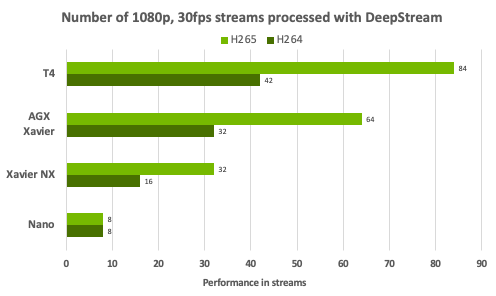

- Real-time performance: The DeepStream accelerated framework offers the highest stream processing density for complex vision AI pipelines. The plot above summarizes the stream density achieved at 1080p/30 FPS across the NVIDIA platforms supported by DeepStream SDK.

- Build and deploy DeepStream apps natively in Red Hat Enterprise Linux (RHEL)

- Smart recording on the edge: Save valuable disk space on the edge with selective recording, which also makes recorded footage faster to search. Use cloud-to-edge messaging to control recording from the cloud

- IoT capabilities

  - Manage and control DeepStream app from the cloud with edge/cloud bi-directional IoT messaging

  - OTA AI model update for zero app downtime

  - Secure communication between edge and cloud using SASL/Plain based authentication and TLS authentication

- Tighter interoperability with Transfer Learning Toolkit 2.0 for out-of-the-box model deployment with DeepStream. See the DeepStream product page for end-to-end throughput results using TLT and DeepStream, including the highest accuracy and FPS results for production-quality models tested on NVIDIA T4, Jetson Nano, AGX Xavier, and Xavier NX

- Introducing Jetson Xavier NX support: Deploy AI applications on the world’s smallest AI supercomputer at the edge. Reference apps are available and are common across all supported NVIDIA platforms.
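The smart recording item above can be pictured with a small sketch. One common way to record selectively is to keep a short rolling cache of recent frames and write to disk only around an event, so the saved clip includes some context from before the trigger. The code below is a plain-Python illustration of that idea, not the DeepStream smart record API; `SelectiveRecorder`, its parameters, and the integer "frames" are hypothetical, and the `event` flag stands in for whatever trigger is used, such as a detection or a cloud-to-edge message.

```python
from collections import deque


class SelectiveRecorder:
    """Hypothetical recorder: caches recent frames, saves only around events."""

    def __init__(self, pre_event_frames, post_event_frames):
        # Rolling cache; the oldest frame is dropped once the cache is full.
        self.cache = deque(maxlen=pre_event_frames)
        self.post_event_frames = post_event_frames
        self.remaining = 0  # frames still to be saved for the current event
        self.saved = []     # stands in for the file written to disk

    def push(self, frame, event=False):
        if event:
            # Flush the cached context, then record the event frame
            # plus the configured number of frames after it.
            self.saved.extend(self.cache)
            self.cache.clear()
            self.remaining = self.post_event_frames + 1
        if self.remaining > 0:
            self.saved.append(frame)
            self.remaining -= 1
        else:
            self.cache.append(frame)


# Ten integer "frames" with a single event at frame 5.
recorder = SelectiveRecorder(pre_event_frames=2, post_event_frames=1)
for frame_id in range(10):
    recorder.push(frame_id, event=(frame_id == 5))
print(recorder.saved)  # [3, 4, 5, 6]
```

Only four of the ten frames are kept in this run, which is where the disk savings come from: frames far from any event never leave the cache.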

Getting Started

- Download DeepStream SDK

- Check out the DeepStream performance tutorial on YouTube

- Read our latest DeepStream 5.0 feature deep dive developer blog

- Learn more about DeepStream features and capabilities through our developer tutorials, sample apps and webinars

- View the free self-paced online DeepStream DLI course on Getting Started with DeepStream for Video Analytics on Jetson Nano

New Developer Webinar:

- Join NVIDIA technology experts for the developer webinar and demo session “Create Intelligent Places Using NVIDIA Vision AI Models and DeepStream SDK” on Aug 25th at 11am PDT. Register now

Sign up for the NVIDIA developer program to stay in the know about upcoming Vision AI and IoT announcements, software releases, contests, projects, and more.