Autonomous vehicle development is all about scale. Engineers must collect and label massive amounts of data to train self-driving neural networks.

This data is then used to test and validate the AV system, an equally immense undertaking if the system is to be robust. Simulation is an important tool for reaching this scale, but it is only effective if it is accurate.

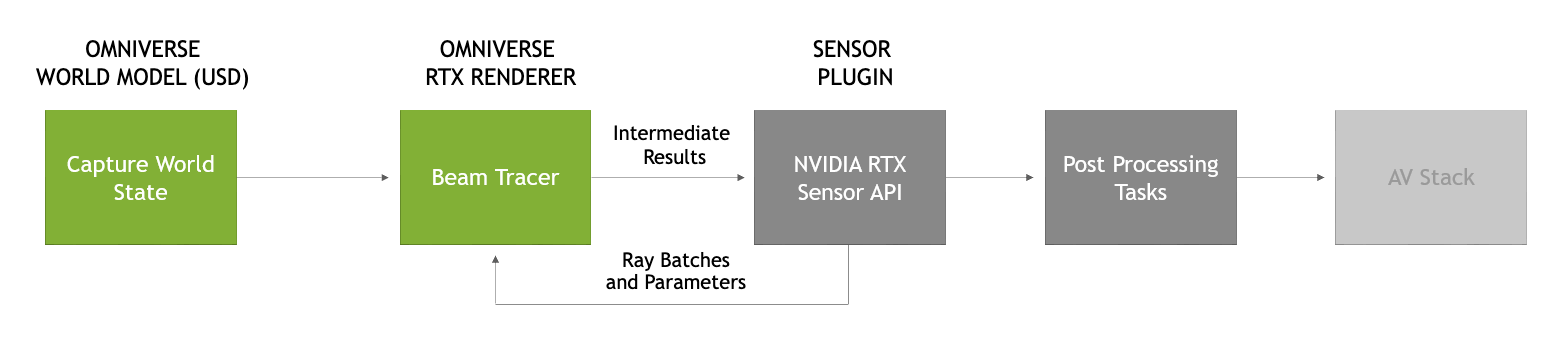

NVIDIA DRIVE Sim, built on NVIDIA Omniverse, addresses this challenge with a physically based, end-to-end simulation platform, architected from the ground up to run large-scale, physically accurate, multi-sensor simulations. It enables you to generate synthetic data to train AV perception and validate motion control in a closed-loop simulation with high fidelity and accurate sensor data.

AV sensors can be categorized as follows:

- Passive: Cameras

- Active: Lidar, radar, and ultrasonic

At NVIDIA, we explore multiple ways to approach sensor validation, such as comparing neural networks trained on real-world data with networks trained on synthetic data. We also validate a sensor’s accuracy by comparing the synthetic data itself to the sensor’s specifications and to real-world experiments.

This ability to validate that our models match reality ensures that DRIVE Sim produces trustworthy results. Now, we’re sharing the work we’ve done to correlate our lidar models to the real world. In the Lidar Validation whitepaper, we present the process used to validate accuracy and precision for DRIVE Sim lidar models.
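To make the idea of comparing synthetic lidar data with real-world measurements concrete, here is a minimal Python sketch. It is not the procedure from the whitepaper or any DRIVE Sim API. It assumes a simple experiment in which a real lidar and its simulated counterpart each take repeated range readings of a target at a known distance. The function names (`range_stats`, `models_agree`) and the tolerance values are hypothetical, chosen only for illustration. Accuracy is treated here as the mean range error (bias), and precision as the standard deviation of that error.

```python
from statistics import mean, stdev


def range_stats(ranges_m, true_distance_m):
    """Return (bias, spread) in meters for repeated range readings.

    bias   -> mean signed error against the known target distance (accuracy)
    spread -> sample standard deviation of the error (precision)
    """
    errors = [r - true_distance_m for r in ranges_m]
    return mean(errors), stdev(errors)


def models_agree(sim_ranges_m, real_ranges_m, true_distance_m,
                 bias_tol_m=0.02, spread_tol_m=0.02):
    """Check whether simulated and real readings match within tolerances.

    The tolerances are illustrative placeholders, not values taken from
    any sensor specification.
    """
    sim_bias, sim_spread = range_stats(sim_ranges_m, true_distance_m)
    real_bias, real_spread = range_stats(real_ranges_m, true_distance_m)
    return (abs(sim_bias - real_bias) <= bias_tol_m
            and abs(sim_spread - real_spread) <= spread_tol_m)


if __name__ == "__main__":
    # Made-up readings for a target placed 10 m from the sensor.
    real = [10.01, 9.99, 10.02, 9.98, 10.00]
    sim = [10.00, 10.01, 9.99, 10.02, 9.98]
    print(range_stats(real, 10.0))
    print(models_agree(sim, real, 10.0))
```

A real validation campaign would repeat a comparison like this across many distances, target reflectivities, and incidence angles, and would also look at quantities such as return intensity. The sketch shows only the basic shape of the check.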

Read the Lidar Validation whitepaper, the second in a series on sensor validation in simulation, and catch up on the previous post covering camera sensor validation.