Using a newly developed AI algorithm, researchers from the University of Texas Southwestern Medical Center are making early detection of aggressive forms of skin cancer possible. The study, recently published in Cell Systems, presents a deep learning model capable of predicting whether melanoma will spread aggressively by examining cell features undetectable to the human eye.

“We now have a general framework that allows us to take tissue samples and predict mechanisms inside cells that drive disease, mechanisms that are currently inaccessible in any other way,” said senior author Gaudenz Danuser, the Patrick E. Haggerty Distinguished Chair in Basic Biomedical Science at the University of Texas Southwestern.

Melanoma, a serious form of skin cancer caused by changes in melanocyte cells, is the most likely of all skin cancers to spread if not caught early. Identifying it quickly helps doctors create effective treatment plans, and when it is diagnosed early, the 5-year survival rate is about 99%.

Doctors often use biopsies, blood tests, X-rays, and CT and PET scans to determine the stage of melanoma and whether it has spread to other areas of the body, a process known as metastasis. Changes in cellular behavior could hint at how likely a melanoma is to spread, but they are too subtle for experts to observe.

The researchers thought AI could help determine the metastatic potential of melanoma, but until now AI models have not been able to interpret these cellular characteristics.

“We propose an algorithm that combines unsupervised deep learning and supervised conventional machine learning, along with generative image models to visualize the specific cell behavior that predicts the metastatic potential. That is, we map the insight gained by AI back into a data cue that is interpretable by human intelligence,” said Andrew Jamieson, study coauthor and assistant professor in bioinformatics at UT Southwestern.
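
The three-part idea in this quote (learn features without labels, classify them with a conventional method, then map the classifier's decision back into something a person can inspect) can be illustrated with a toy example. The sketch below is not the study's pipeline: it swaps in PCA for the unsupervised deep network, uses a two-class Fisher linear discriminant as the conventional classifier, and runs on synthetic vectors rather than cell images. All function names, sizes, and the `hidden` direction are assumptions made up for illustration.

```python
import numpy as np


def fit_encoder(X, n_components):
    """Unsupervised step: learn a compact code from unlabeled data.

    PCA is used here as a simple stand-in for a deep autoencoder.
    """
    mean = X.mean(axis=0)
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:n_components]


def encode(X, mean, components):
    """Project samples into the learned latent space."""
    return (X - mean) @ components.T


def decode(Z, mean, components):
    """Map latent codes back to input space (the 'generative' direction)."""
    return Z @ components + mean


def fit_lda(Z, y):
    """Supervised step: two-class Fisher linear discriminant on latent codes."""
    z0, z1 = Z[y == 0], Z[y == 1]
    mu0, mu1 = z0.mean(axis=0), z1.mean(axis=0)
    d0, d1 = z0 - mu0, z1 - mu1
    # Within-class scatter, with a small ridge term for numerical stability.
    within = d0.T @ d0 + d1.T @ d1 + 1e-6 * np.eye(Z.shape[1])
    w = np.linalg.solve(within, mu1 - mu0)
    threshold = w @ (mu0 + mu1) / 2.0
    return w, threshold


def predict(Z, w, threshold):
    """Label a sample 1 ('high') when its discriminant score exceeds the threshold."""
    return (Z @ w > threshold).astype(int)


def run_demo(seed=0):
    rng = np.random.default_rng(seed)
    n_per_class, n_features = 200, 50

    # Synthetic data: class 1 differs from class 0 along one hidden direction.
    hidden = rng.normal(size=n_features)
    hidden /= np.linalg.norm(hidden)
    X = rng.normal(size=(2 * n_per_class, n_features))
    y = np.repeat([0, 1], n_per_class)
    X[y == 1] += 4.0 * hidden

    # Shuffle, then hold out the last 100 samples for testing.
    order = rng.permutation(len(y))
    X, y = X[order], y[order]
    X_train, y_train, X_test, y_test = X[:300], y[:300], X[300:], y[300:]

    mean, components = fit_encoder(X_train, n_components=5)
    w, threshold = fit_lda(encode(X_train, mean, components), y_train)
    predictions = predict(encode(X_test, mean, components), w, threshold)
    accuracy = float(np.mean(predictions == y_test))

    # Interpretation step: move one unit along the discriminant in latent
    # space, decode, and compare the input-space change with the hidden cue.
    step = w / np.linalg.norm(w)
    shift = decode(step, mean, components) - decode(np.zeros_like(step), mean, components)
    alignment = float(shift @ hidden / np.linalg.norm(shift))
    return accuracy, alignment


accuracy, alignment = run_demo()
print(f"held-out accuracy: {accuracy:.2f}, alignment with hidden cue: {alignment:.2f}")
```

In this toy setting, `alignment` is the cosine similarity between the decoded shift and the planted direction, so a value near 1 means the classifier's decision has been traced back to the feature that actually separates the classes. With images and a generative decoder, the analogous step would produce pictures that exaggerate whatever the classifier relies on.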

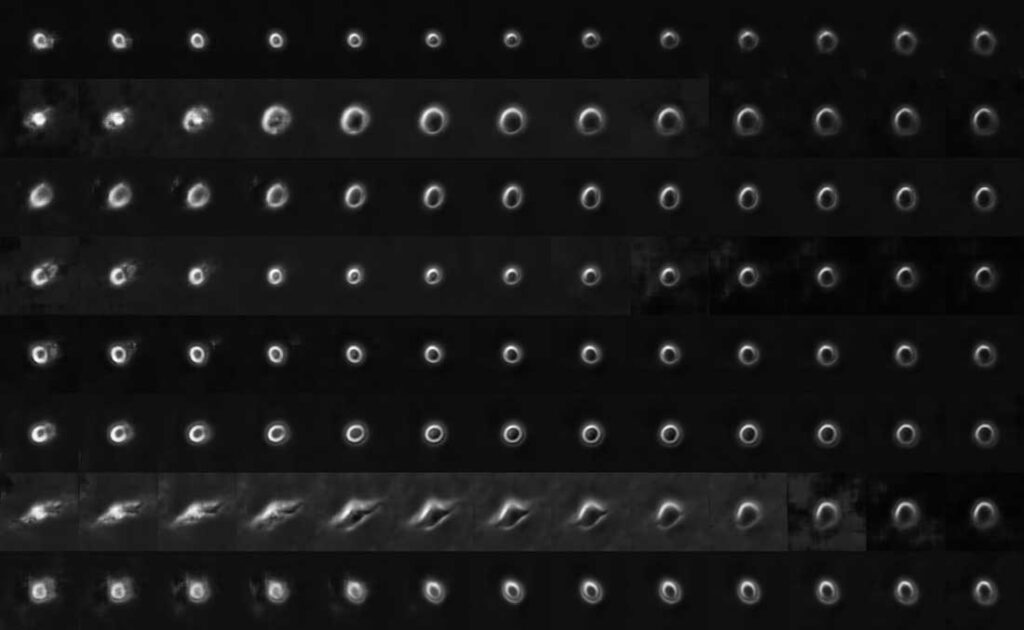

Using tumor images from seven patients with a documented timeline of metastatic melanoma, the researchers compiled a time-lapse dataset of more than 12,000 single melanoma cells in petri dishes. The dataset comprised approximately 1,700,000 raw images, which the researchers analyzed with a deep learning algorithm to identify different cellular behaviors.

Credit: Danuser et al/Cell Systems

Based on these features, the team then “reverse engineered” a deep convolutional neural network able to tease out the physical properties of aggressive melanoma cells and predict whether cells have high metastatic potential.

The experiments were run on the UT Southwestern Medical Center BioHPC cluster with CUDA-accelerated NVIDIA V100 Tensor Core GPUs. The team trained multiple deep learning models on the 1.7 million cell images to visualize and explore a data set that started as more than 5 TB of raw microscopy data.

The researchers then tracked the spread of melanoma cells in mice and tested whether these specific predictors lead to highly metastatic cells. They found that the cell types they’d classified as highly metastatic spread throughout the entire animal, while those classified as low did not.

There is more work to be done before the research can be deployed in a medical setting. The team also points out that the study raises questions about whether this applies to other cancers, or if melanoma metastasis is an outlier.

“The result seems to suggest that the metastatic potential, at least of melanoma, is set by cell-autonomous rather than environmental factors,” Jamieson said.

Applications of the study could also go beyond cancer and transform diagnoses of other diseases.

Read the full article in Cell Systems>>
