GPU-accelerated workloads are thriving across all industries, from the use of AI for better customer engagement and data analytics for business forecasting to advanced visualization for quicker product innovation.

One of the biggest challenges with GPU-accelerated infrastructure is choosing the right hardware systems. While line-of-business teams care about performance and access to a broad set of developer tools and frameworks, enterprise IT teams are also concerned with factors such as management and security.

The NVIDIA-Certified Systems program was created to answer the needs of both groups. Systems from leading system manufacturers equipped with NVIDIA GPUs and network adapters are put through a rigorous test process. A server or workstation is stamped as NVIDIA-Certified if it meets specific criteria for performance and scalability on a range of GPU-accelerated applications, as well as proper functionality for security and management capabilities.

Server configuration challenges

The certification tests for each candidate system are performed by the system manufacturer in their labs, and NVIDIA works with each partner to help them determine the best passing configuration. NVIDIA has studied hundreds of results across many server models, and this experience has allowed us to identify and solve configuration issues that can negatively impact performance.

High operating temperature

GPUs have a maximum supported temperature, but running them cooler can improve performance because GPUs reduce their clock speeds as they approach their thermal limits. A typical server uses multiple fans for air cooling, controlled by programmable fan curves that set fan speed as a function of temperature. Default fan curves are designed for a generic base system and do not account for GPUs and similar devices that produce a lot of heat. The certification process can reveal performance issues caused by temperature and determine which custom fan curves give the best results.
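To make the idea of a fan curve concrete, here is a minimal Python sketch that maps a temperature reading to a fan duty cycle by linear interpolation between curve points. The curve points, the function name `fan_duty`, and the interpolation scheme are illustrative assumptions. Real fan curves are configured through vendor BIOS or BMC tools, and the values that work best for a given system are what certification testing determines.

```python
from bisect import bisect_right

# Hypothetical (temperature in °C, fan duty in %) points; real values are vendor- and system-specific.
DEFAULT_CURVE = [(20, 20), (40, 30), (60, 50), (80, 100)]    # generic base-system curve
GPU_AWARE_CURVE = [(20, 30), (40, 50), (55, 80), (70, 100)]  # ramps up earlier for GPU heat


def fan_duty(curve: list[tuple[int, int]], temp_c: float) -> float:
    """Return the fan duty (%) for temp_c, interpolating linearly between curve points.

    Temperatures below the first point or above the last point are clamped
    to the first or last duty value.
    """
    temps = [t for t, _ in curve]
    if temp_c <= temps[0]:
        return float(curve[0][1])
    if temp_c >= temps[-1]:
        return float(curve[-1][1])
    # Find the segment [t0, t1) that contains temp_c.
    i = bisect_right(temps, temp_c)
    (t0, d0), (t1, d1) = curve[i - 1], curve[i]
    return d0 + (d1 - d0) * (temp_c - t0) / (t1 - t0)
```

With these hypothetical curves, `fan_duty(DEFAULT_CURVE, 50)` returns 40.0 while `fan_duty(GPU_AWARE_CURVE, 50)` returns 70.0, so the GPU-aware curve moves more air well before the hardware nears its thermal limit.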

Non-optimal BIOS and firmware settings

BIOS settings and firmware versions can affect both performance and functionality. The certification process validates the optimal BIOS settings for performance and identifies the best values for other configuration options, such as NIC PCI settings and GRUB boot parameters.

Improper PCI slot configuration

Rapid data transfer to the GPU is critical to getting the best performance. Because GPUs and NICs connect to enterprise systems through PCI Express (PCIe) slots, improper placement can result in suboptimal performance. The certification process exposes these issues and determines the optimal PCIe slot configuration.
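One placement problem that is easy to check on Linux is whether each GPU has a network adapter attached to the same CPU socket (NUMA node); traffic between a GPU and a NIC on different sockets has to cross the inter-socket link. The sketch below reads the `numa_node` attribute that Linux exposes under `/sys/bus/pci/devices/<address>/` and flags GPUs with no local NIC. It is a simplified illustrative heuristic, not the check the certification suite runs; the function names and PCI addresses are hypothetical, and `nvidia-smi topo -m` gives a fuller view of GPU and NIC topology, including PCIe switches.

```python
from pathlib import Path

# Linux exposes one directory per PCI function here, named by its address
# (domain:bus:device.function), e.g. 0000:3b:00.0.
SYSFS_PCI = Path("/sys/bus/pci/devices")


def numa_node(pci_address: str, root: Path = SYSFS_PCI) -> int:
    """Return the NUMA node Linux reports for a PCI device (-1 means unknown)."""
    return int((root / pci_address / "numa_node").read_text().strip())


def gpus_without_local_nic(gpu_nodes: dict[str, int], nic_nodes: dict[str, int]) -> list[str]:
    """Return the PCI addresses of GPUs that have no NIC on the same NUMA node.

    Devices whose NUMA node is unknown (-1) are skipped rather than flagged,
    since the kernel gives no placement information for them.
    """
    local_nic_nodes = {node for node in nic_nodes.values() if node >= 0}
    return sorted(
        gpu for gpu, node in gpu_nodes.items()
        if node >= 0 and node not in local_nic_nodes
    )
```

On a real system you would build the two dictionaries by calling `numa_node` on the GPU and NIC addresses reported by `lspci`. For example, GPUs on nodes 0 and 1 with a single NIC on node 0 yield a list containing only the node-1 GPU.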

Certification goals

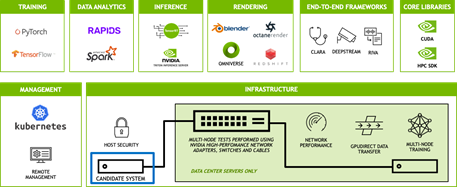

The certification is designed to exercise the performance and functionality of the candidate system by running a suite of more than 25 software tests that represent a wide range of real-world applications and operations.

The goal of these tests is to optimize a given system configuration for performance, manageability, security, and scalability.

Performance

The test suite includes a diverse set of applications that stress the system in multiple ways, covering the following areas:

- Deep learning training and AI inference

- End-to-end AI frameworks such as NVIDIA Riva and NVIDIA Clara

- Data science applications such as Apache Spark and RAPIDS

- Intelligent video analytics

- HPC and CUDA functions

- Rendering with Blender, Octane, and similar tools

Manageability

Certification tests are run on the NVIDIA Cloud Native Core software stack, using Kubernetes for orchestration. This validates that certified servers can be fully managed by leading cloud-native frameworks such as Red Hat OpenShift, VMware Tanzu, and NVIDIA Fleet Command.

Remote management capabilities using Redfish are also validated.
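Redfish is a DMTF-standard REST API served by a server's baseboard management controller (BMC). As a rough illustration of what remote management through it looks like, the sketch below lists the systems a BMC exposes under the standard `/redfish/v1/Systems` collection and reports each one's `PowerState` and `Status.Health`. The BMC address and credentials are placeholders, and HTTP Basic authentication is an assumption made for brevity; many deployments prefer Redfish session tokens, and BMCs often use self-signed TLS certificates that need extra configuration.

```python
import base64
import json
import urllib.request


def fetch_json(url: str, user: str, password: str, timeout: float = 10.0) -> dict:
    """GET a Redfish resource using HTTP Basic authentication and decode the JSON body."""
    token = base64.b64encode(f"{user}:{password}".encode()).decode()
    req = urllib.request.Request(
        url, headers={"Authorization": f"Basic {token}", "Accept": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.load(resp)


def member_uris(collection: dict) -> list[str]:
    """Extract member resource paths from a Redfish collection's "Members" array."""
    return [member["@odata.id"] for member in collection.get("Members", [])]


def summarize_system(system: dict) -> dict:
    """Pick a few standard ComputerSystem properties for a quick status report."""
    return {
        "id": system.get("Id"),
        "power": system.get("PowerState"),
        "health": system.get("Status", {}).get("Health"),
    }


def list_systems(bmc: str, user: str, password: str) -> list[dict]:
    """Summarize every ComputerSystem exposed by the BMC at base URL `bmc`."""
    collection = fetch_json(f"{bmc}/redfish/v1/Systems", user, password)
    return [
        summarize_system(fetch_json(f"{bmc}{uri}", user, password))
        for uri in member_uris(collection)
    ]
```

A call such as `list_systems("https://bmc.example.com", "admin", "secret")`, with a hypothetical host and credentials, would return one summary dictionary per system the BMC manages.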

Security

The certification analyzes the platform-level security of hardware, devices, system firmware, low-level protection mechanisms, and the configuration of various platform components.

The certification also verifies Trusted Platform Module (TPM) functionality, which enables the system to support features like secure boot, signed containers, and encrypted disk volumes.

Scalability

NVIDIA-Certified data center servers are tested to validate multi-GPU and multi-node performance using GPUDirect RDMA, as well as performance running multiple workloads using Multi-Instance GPU (MIG). There are also tests of key network services. These capabilities enable IT systems to scale accelerated infrastructure to meet workload demands.

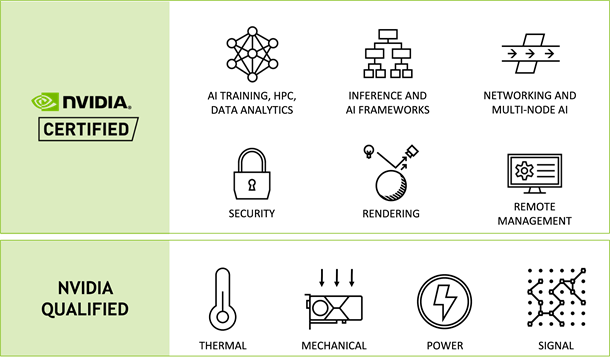

Qualification vs. certification

It’s important to understand the difference between qualification and NVIDIA certification. A qualified server has undergone thermal, mechanical, power, and signal integrity tests to ensure that a particular NVIDIA GPU is fully functional in that server design.

Servers in qualified configurations are supported for production use, and qualification is a prerequisite for certification. However, if you want a system that is both supported and optimally designed and configured, you should always choose a certified system.

NVIDIA-Certified system categories

NVIDIA-Certified Systems are available in a range of categories that are optimized for particular use cases. You can choose a system from the category that best matches your needs.

The design of systems in each category is determined by the system models and GPUs best suited for the target workloads. For instance, enterprise-class servers can be provisioned with NVIDIA A100 or NVIDIA A40 for data centers, whereas compact servers can use NVIDIA A2 for the edge.

The certification process is also tailored to each category. For example, workstations are not tested for multinode applications, and industrial edge systems must pass all tests while operating under the conditions they were designed for, such as elevated temperatures.

| Category | Workloads | Example Use Cases |
| --- | --- | --- |
| Data Center Compute Server | AI Training and Inferencing, Data Analytics, HPC | Recommender Systems, Natural Language Processing |
| Data Center General Purpose Server | Visualization, Rendering, Deep Learning | Off-line Batch Rendering, Accelerating Desktop Rendering |
| High Density Virtualization Server | Virtual Desktop, Virtual Workstation | Office Productivity, Remote Work |
| Enterprise Edge | Edge Inferencing in controlled environments | Image and Video Analytics, Multi-access Edge Computing (MEC) |
| Industrial Edge | Edge Inferencing in industrial or rugged environments | Robotics, Medical instruments, Field-deployed Telco Equipment |
| Workstation | Design, Content Creation, Data Science | Product & Building Design, M&E Content Creation |
| Mobile Workstation | Design, Content Creation, Data Science, Software Development | Data Feature Exploration, Software Design |

Push the easy button for enterprise IT

With NVIDIA-Certified Systems, you can confidently choose and configure performance-optimized servers and workstations to power accelerated computing workloads, both in smaller configurations and at scale. NVIDIA-Certified Systems provide the easiest way for you to be successful with all your accelerated computing projects.

A wide variety of system types are available, including popular data center and edge server models, as well as desktop and mobile workstations from a vast ecosystem of NVIDIA partners. For more information, see the following resources:

- Webinar: Choosing Hardware Systems for AI in the Enterprise, May 17 at 10 am PT

- NVIDIA-Certified Systems product page

- Accelerate Compute-Intensive Workloads with NVIDIA-Certified Systems whitepaper

- Qualified Systems Catalog