NVIDIA Kernel-based Virtual Machine (KVM) takes open source KVM and enhances it to support the unique capabilities of the NVIDIA DGX-2 server, creating a full virtualization solution for NVIDIA GPUs and NVIDIA NVSwitch devices with PCI passthrough. The DGX-2 server incorporates 16 NVIDIA Tesla V100 32GB GPUs, 12 NVSwitch chips, two 24-core Xeon CPUs, 1.5 TB of DDR4 DRAM, Mellanox InfiniBand EDR adapters, and 30 TB of NVMe storage into a single system.

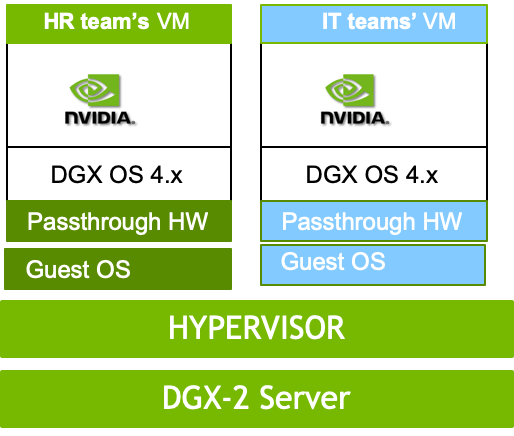

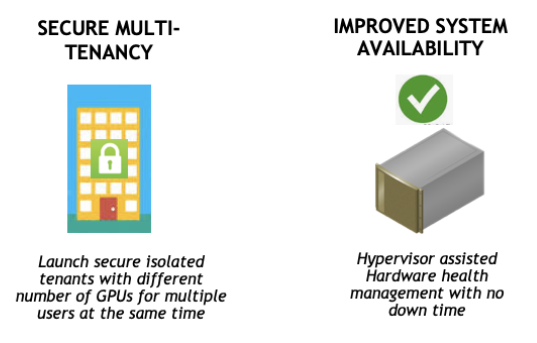

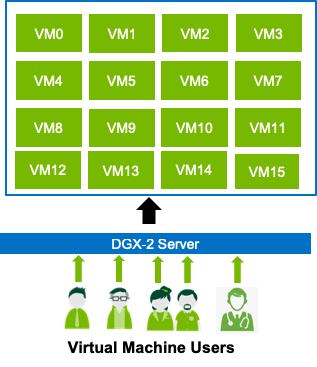

NVIDIA KVM provides secure multi-tenancy for GPUs, NVSwitch chips, and NVIDIA NVLink interconnect technology. This allows multiple users to run deep learning (DL) jobs concurrently in isolated virtual machines (VMs) on the same DGX-2 server, as shown in figure 1. It also improves system availability through fault isolation, since faults affect only the VM containing the faulting component rather than the entire system.

NVIDIA KVM can support any combination of 1/2/4/8/16-GPU tenants running concurrently, as long as the total number of GPUs in use does not exceed 16.
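The sizing rule above can be sketched as a quick check. This is a minimal illustration, not part of the NVIDIA tooling: the function name `tenant_mix_fits` is hypothetical, and it only sums the tenant sizes, whereas real placement may carry further constraints.

```shell
#!/bin/sh
# Sketch: check whether a proposed mix of tenant sizes fits on one DGX-2.
# Assumption: a plain sum check is enough for illustration; the function
# name is hypothetical and not part of nvidia-vm.

TOTAL_GPUS=16

tenant_mix_fits() {
    total=0
    for size in "$@"; do
        case "$size" in
            1|2|4|8|16) total=$((total + size)) ;;
            *) echo "unsupported tenant size: $size" >&2; return 2 ;;
        esac
    done
    if [ "$total" -le "$TOTAL_GPUS" ]; then
        echo "ok: $total of $TOTAL_GPUS GPUs used"
    else
        echo "too many GPUs: $total > $TOTAL_GPUS" >&2
        return 1
    fi
}

tenant_mix_fits 8 4 2 1 1                 # prints: ok: 16 of 16 GPUs used
tenant_mix_fits 8 8 1 || echo "rejected"  # 17 GPUs requested, so rejected
```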

KVM-Based Compute GPU VM Benefits

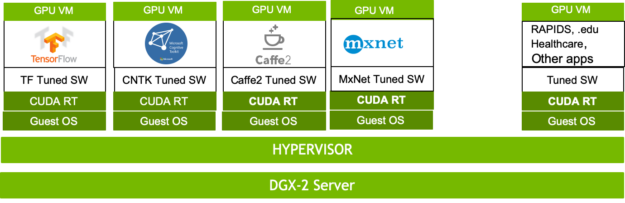

KVM-based VMs can run applications that primarily use compute GPUs, such as deep learning (DL), machine learning (ML), High Performance Computing (HPC), or healthcare. Configuring NVSwitch chips enables NVIDIA KVM to enhance the performance of individual VMs by allowing data movement over the NVLink interconnects while keeping each tenant secure. Hardware remains isolated between VMs.

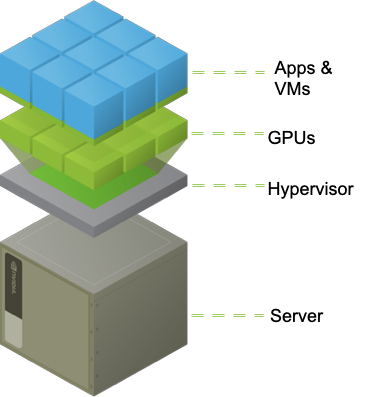

This allows the DGX-2 server to simultaneously run different versions of NVIDIA CUDA drivers, guest operating systems, DL frameworks, and so on for each VM, as seen in figure 2. NVIDIA GPU Cloud (NGC) Containers run as-is inside the VM with minimal performance impact. See the NVIDIA KVM Performance Improvements section later for details.

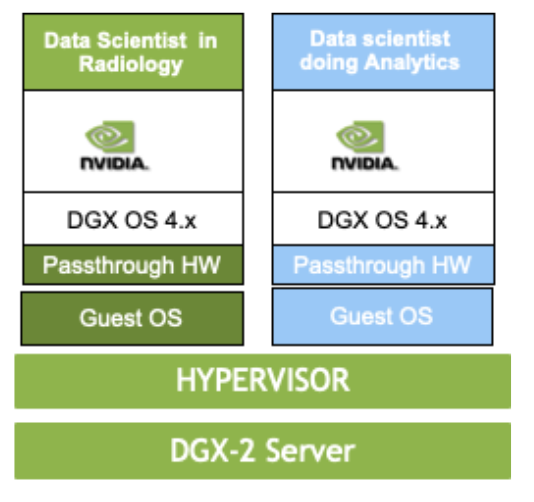

Secure multi-tenancy lets multiple departments share the same DGX-2 server without visibility into each other's VMs. Each VM's hardware, GPUs, and NVLink interconnects are completely isolated, as illustrated in figure 3. The hypervisor also adds a layer of security, preventing a user from downloading malicious code to the hardware or resetting PCI devices.

Virtualizing the DGX-2 server using KVM offers a number of benefits.

KVM splits resources into secure, GPU enabled VMs with multiple tenants to support multiple users simultaneously, as shown in figure 4. These users include cloud service provider users, health care researchers, students at higher education institutes, or IT system admins.

NVIDIA KVM includes optimized templates which allow users to easily deploy containers from NVIDIA GPU Cloud. KVM delivers preconfigured DGX Operating System (OS) images with optimized drivers and CUDA libraries. KVM also enables non-disruptive software and security updates on the host and guest OS. Users can run different OS or software in different VMs at the same time. Templates make it easier to relaunch VMs by reusing them.

NVIDIA KVM improves system utilization by allowing multiple users on the DGX-2 server to simultaneously run jobs across subsets of GPUs in a secure VM. Multiple users can run in VMs during the day in a higher education or research setting yet quickly reprovision the system for HPC or other GPU-compute intensive workloads at night.

NVIDIA KVM improves system availability because hardware errors are isolated to the affected VM and do not impact other running VMs. KVM provides fault isolation for GPUs and other devices.

Since GPU and NVSwitch chips are pass-through devices, users will see near-native application performance.

DGX-2 KVM Implementation

NVIDIA KVM on the DGX-2 server consists of the Linux hypervisor, the DGX-2 KVM host, guest images, and NVIDIA tools to support GPU multi-tenant virtualization. NVIDIA built the Linux hypervisor on a modified QEMU (v2.11.1) and libvirt (v4.0.0). The guest OS image is a DGX OS software image that includes the NVIDIA driver, CUDA runtime and libraries, the NVIDIA Container Runtime for Docker, and other software components for running DL containers.

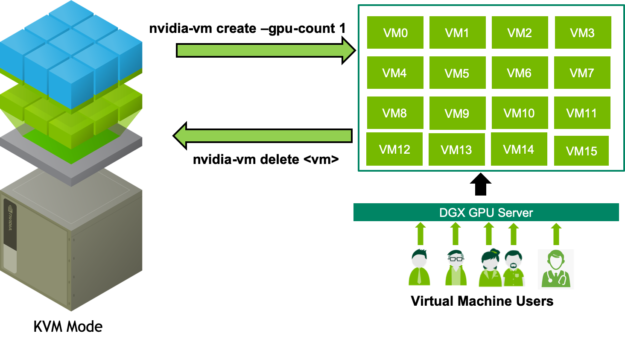

The experience of running within an NVIDIA VM image is very similar to running on bare metal because the OS image is the same as the bare metal DGX OS. A new NVIDIA hypervisor tool, nvidia-vm, manages guest OS images, monitors KVM host resources, and deploys GPU VMs.

Tesla GPUs, NVSwitch chips, and IB adapters remain in PCI Passthrough mode to achieve optimal performance with DL jobs. CPU cores and RAM are tuned using libvirt settings.

The nvidia-vm tool provides various templates for launching GPU VMs. It simplifies deploying VMs by incorporating various operations required to launch GPU VMs into a single command. See section 12.9.1 of the DGX-2 Server User Guide for template sizing details. It also provides an easy way to manage Guest OS images, including upgrading to newer versions.

VMs deployed with nvidia-vm use preconfigured templates. You can modify the VM by overriding the default settings to customize memory allocation, OS disk size, and the number of vCPUs. Figure 5 shows the virtualization software stack on the DGX-2 server.

DGX KVM Software Installation

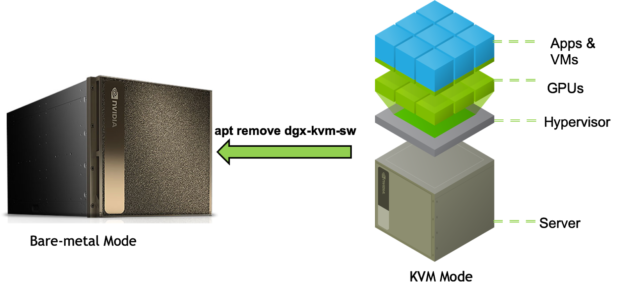

An existing bare metal DGX-2 server needs to be converted to a DGX-2 KVM host before using NVIDIA KVM. This requires installing the DGX KVM software and Guest OS images. You can restore the server to bare metal by uninstalling the DGX KVM software and Guest OS images.

Convert the DGX-2 server to a KVM Host

The following instructions show how to convert a DGX-2 server to a KVM host.

First, update the internal database with the latest package versions and install the NVIDIA KVM software.

$ sudo apt-get update
$ sudo apt-get install dgx-kvm-sw
$ sudo reboot

Verify the installed version of NVIDIA KVM with nvidia-vm:

$ nvidia-vm version show
19.02.3
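A provisioning script may want to confirm that the host conversion happened before it tries to create VMs. The following is a minimal sketch, assuming a Debian-style host where `dpkg-query` is available; the helper name `pkg_installed` is hypothetical.

```shell
#!/bin/sh
# Sketch: confirm that the KVM host package from the step above is installed.
# Assumption: dpkg-query is present (Debian/Ubuntu-based host).
# pkg_installed is an illustrative helper name, not an NVIDIA tool.

pkg_installed() {
    command -v dpkg-query >/dev/null 2>&1 || return 1
    status=$(dpkg-query -W -f='${Status}' "$1" 2>/dev/null) || return 1
    [ "$status" = "install ok installed" ]
}

if pkg_installed dgx-kvm-sw; then
    echo "dgx-kvm-sw is installed"
else
    echo "dgx-kvm-sw is not installed; run the apt-get steps first"
fi
```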

Next, get a list of supported Guest OS images.

$ sudo apt-cache policy dgx-kvm-image-*

Install the preferred image(s):

$ sudo apt-get install

Example for image dgx-kvm-image-4-0-5:

$ sudo apt-get install dgx-kvm-image-4-0-5

For further details, see section 12.2 in the DGX-2 Server User Guide.

DGX KVM lifecycle

This section provides an example to launch, stop, restart, and delete a GPU VM. Figure 6 outlines this process.

Use nvidia-vm to launch a GPU VM. Because GPUs are used as PCI passthrough devices, specify the number of GPUs and the index of the first GPU.

Launch a 4 GPU VM:

$ nvidia-vm create --gpu-count 4 --gpu-index 0 --domain my4gpuvm
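When scripting VM creation, it can help to sanity-check the GPU arguments before invoking the tool. The sketch below only prints the command instead of running it. The function name `build_create_cmd` is hypothetical, and the range check assumes GPU indices run from 0 to 15 on the 16-GPU DGX-2 and that a VM takes consecutive GPUs starting at the given index; nvidia-vm performs its own validation, which may be stricter.

```shell
#!/bin/sh
# Sketch: validate GPU arguments, then print (not run) the create command.
# Assumptions: GPU indices are 0-15 and a VM uses consecutive GPUs from
# --gpu-index. build_create_cmd is an illustrative name only.

build_create_cmd() {
    count=$1
    index=$2
    domain=$3
    case "$count" in
        1|2|4|8|16) ;;
        *) echo "gpu-count must be 1, 2, 4, 8, or 16" >&2; return 2 ;;
    esac
    case "$index" in
        ''|*[!0-9]*) echo "gpu-index must be a non-negative integer" >&2; return 2 ;;
    esac
    if [ $((index + count)) -gt 16 ]; then
        echo "GPUs $index..$((index + count - 1)) exceed the 16 available" >&2
        return 1
    fi
    echo "nvidia-vm create --gpu-count $count --gpu-index $index --domain $domain"
}

build_create_cmd 4 0 my4gpuvm
build_create_cmd 8 8 my8gpuvm
```

Printing the command first keeps the check side-effect free; piping the result to `sh` on the KVM host would run it.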

You can find additional details in section 12.3 in the DGX-2 Server User Guide.

Stopping a GPU VM

The libvirt tool virsh can stop a running VM and release its resources, such as GPUs, NVSwitch chips, memory, and CPUs, while keeping the VM's OS and data drives. To destroy the VM and release its storage as well, use nvidia-vm delete.

Shut down the 4 GPU VM:

$ virsh shutdown my4gpuvm

More details can be found in section 12.4.1 in the DGX-2 Server User Guide.

Starting a stopped GPU VM

The libvirt tool virsh can also start a GPU VM that has already been created.

Start the 4 GPU VM:

$ virsh start --console my4gpuvm

Learn more in section 12.4.2 in the DGX-2 Server User Guide.

Deleting a GPU VM

Deleting a VM involves multiple operations, such as stopping the VM, deleting the OS and Data drives used by the VM, and removing any temporary files. This is simplified by the nvidia-vm delete command.

Delete the 4 GPU VM:

$ nvidia-vm delete --domain my4gpuvm

See Section 12.4.3 in the DGX-2 Server User Guide for more information.

GPU Guest OS image upgrade

Use the nvidia-vm tool to list or upgrade Guest OS image(s).

List Guest OS images:

$ nvidia-vm image show
Currently installed images on in "/var/lib/nvidia/kvm/images"
dgx-kvm-image --> dgx-kvm-image-4-0-5
dgx-kvm-image-4-0-5
Additional VM Images available to download from connected repos
dgx-kvm-image-4-0-2
dgx-kvm-image-4-0-3
dgx-kvm-image-4-0-4
$
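If you want to script image upgrades, the listing can be filtered for images that are available but not yet installed. This is a rough sketch that parses text shaped like the sample output above. It assumes one image name per line under each header line, which may not match the real layout of `nvidia-vm image show`, and the function name `available_images` is hypothetical.

```shell
#!/bin/sh
# Sketch: list images that are available to download, by parsing text in
# the shape of the sample output above.
# Assumption: one image name per line under each header line; the real
# layout of `nvidia-vm image show` may differ.

available_images() {
    awk '
        /Additional VM Images available/ { in_avail = 1; next }
        /Currently installed images/     { in_avail = 0; next }
        in_avail && $1 ~ /^dgx-kvm-image-/ { print $1 }
    '
}

# On a KVM host: nvidia-vm image show | available_images
# Here the sample text stands in for the tool output.
available_images <<'EOF'
Currently installed images on in "/var/lib/nvidia/kvm/images"
dgx-kvm-image --> dgx-kvm-image-4-0-5
dgx-kvm-image-4-0-5
Additional VM Images available to download from connected repos
dgx-kvm-image-4-0-2
dgx-kvm-image-4-0-3
dgx-kvm-image-4-0-4
EOF
```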

Install new Guest OS Images:

$ nvidia-vm image install dgx-kvm-image-4-0-2
..
Unpacking dgx-kvm-image-4-0-2 (4.0.2~180918-d47d38.1) ...
Setting up dgx-kvm-image-4-0-2 (4.0.2~180918-d47d38.1) ...
$

Further details on upgrading the guest OS image are in section 12.4.3 in the DGX-2 Server User Guide.

Revert the DGX-2 server to bare metal

Run the following commands to revert the DGX-2 server back to bare metal, as shown in figure 7.

$ sudo apt-get purge --auto-remove dgx-kvm-sw
$ sudo reboot

See Section 12.2 in the DGX-2 Server User Guide for more.

DL Run example

Let’s look at an example for launching a 4-GPU VM and running TensorFlow using nvidia-docker runtime:

$ nvidia-vm create --gpu-count 4 --gpu-index 0 --domain my4gpuvm
$ virsh console my4gpuvm
Login: nvidia
Password: my4gpuvm
$ sudo nvidia-docker run --runtime=nvidia --rm -it nvcr.io/nvidia/tensorflow:19.03-py3
# mpiexec --allow-run-as-root --bind-to socket -np 16 python /opt/tensorflow/nvidia-examples/cnn/resnet.py --layers=50 --precision=fp16 --batch_size=512

For alternative examples, see the TensorFlow User Guide instructions. They can be used unmodified inside a GPU VM and have been verified to work.

NVIDIA KVM Performance Improvements

During GPU VM creation, nvidia-vm applies the following optimizations to maximize the performance of a VM:

- Leverage hardware topology in VM templates by using NVLink interconnects and PCI topology to select GPUs and NVSwitch chips. Memory and CPU core access is handled via non-uniform memory access (NUMA).

- Use multiple queues for network and block devices.

- Pin the virtual CPUs of a VM to physical CPUs on the host, so that the VM's threads execute only on the designated cores.
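One way to see the pinning and NUMA placement described in the list above is to query libvirt for a running VM. The sketch below uses the standard virsh subcommands `vcpupin`, `vcpuinfo`, and `numatune`. The wrapper name `show_vm_placement` is hypothetical, and it assumes that the settings nvidia-vm applies are visible through libvirt in this way.

```shell
#!/bin/sh
# Sketch: show how libvirt has pinned and placed a running VM.
# vcpupin, vcpuinfo, and numatune are standard virsh subcommands.
# Assumption: nvidia-vm's optimizations are visible through these queries;
# show_vm_placement is an illustrative name only.

show_vm_placement() {
    domain=$1
    if [ -z "$domain" ]; then
        echo "usage: show_vm_placement <domain>" >&2
        return 2
    fi
    if ! command -v virsh >/dev/null 2>&1; then
        echo "virsh not found; run this on the KVM host" >&2
        return 1
    fi
    virsh vcpupin "$domain"    # vCPU to physical CPU affinity
    virsh vcpuinfo "$domain"   # per-vCPU state and current physical CPU
    virsh numatune "$domain"   # NUMA memory mode and node set
}

# Example, on the KVM host: show_vm_placement my4gpuvm
```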

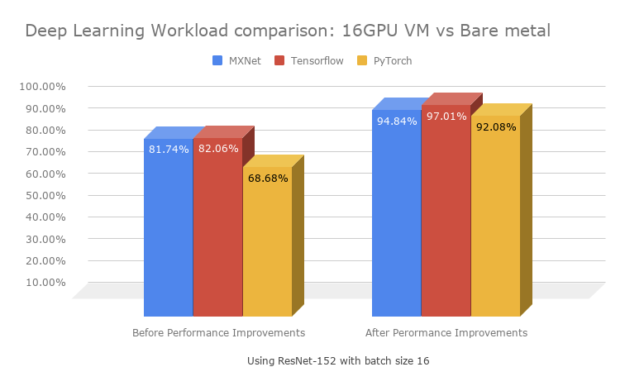

Typical virtualization adds a 20-30% performance overhead (see OpenBenchMarking.org: KVM vs Baremetal Benchmarks). Without NVIDIA performance improvements, running the DL workload ResNet-152 with a batch size of 16 on a 16-GPU VM showed a 13% to 22% overhead. With NVIDIA performance improvements, this overhead drops to between 3% and 8%, as shown in the chart in figure 8.
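To compare your own runs against these figures, overhead can be computed as the relative throughput loss versus bare metal. The sketch below assumes that definition, which the article does not spell out. The function name `overhead_pct` is hypothetical, and the throughput numbers are made up purely to show the arithmetic; they are not measurements.

```shell
#!/bin/sh
# Sketch: virtualization overhead as a percentage of bare-metal throughput.
# Assumption: overhead = (bare - vm) / bare * 100.
# The inputs below are hypothetical, not measurements from the article.

overhead_pct() {
    # $1 = bare-metal throughput, $2 = VM throughput (same units)
    awk -v bare="$1" -v vm="$2" 'BEGIN { printf "%.1f\n", (bare - vm) / bare * 100 }'
}

overhead_pct 1000 950   # prints 5.0
overhead_pct 1000 800   # prints 20.0
```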

Next Steps

You now have the knowledge to deploy NVIDIA KVM for secure multi-tenancy on your DGX-2. With the few commands outlined in this blog, which are also available in the DGX-2 Server User Guide, you can quickly deploy NVIDIA KVM. This enables you to securely support any combination of 1/2/4/8/16-GPU tenants running concurrently, using the same NGC containers you may already be familiar with.

References

- NVIDIA DGX-2 Server User Guide (Chapter 12)

- NVIDIA DGX Best Practices for DGX-2 KVM Networking

- NVIDIA DGX Best Practices for DGX-2 KVM Performance Tuning