Many PC games are designed around an eight-core console, on the assumption that their software threading system ‘just works’ on all PCs, especially regarding the number of threads in the worker thread pool. This was a reasonable assumption not too long ago, when most PCs had similar core counts to consoles: PC CPUs were simply faster, and performance scaled accordingly.

In recent years though, the CPU landscape has changed and there is now a complex matrix of performance variables to navigate:

- Higher core counts

- The introduction of heterogeneous P/E cores from Intel

- Asymmetrical caches from AMD

- More complex scheduling algorithms

- Power management techniques from OS vendors such as Microsoft

This complexity means that the previous thread count determination algorithm (and its derivatives) is no longer sufficient:

num_worker_threads = num_logical_cores - 2

This traditional thread count determination algorithm was based on logical core counts and reserved two cores for critical threads.
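As a minimal Python sketch of that traditional heuristic (the function name `traditional_worker_count`, the `reserved` parameter, and the floor of one worker are illustrative assumptions, not part of the original formula):

```python
import os


def traditional_worker_count(num_logical_cores=None, reserved=2):
    """Traditional heuristic: logical cores minus cores reserved for critical threads."""
    if num_logical_cores is None:
        # os.cpu_count() reports logical cores and may return None.
        num_logical_cores = os.cpu_count() or 1
    # Assumption: never return fewer than one worker thread.
    return max(1, num_logical_cores - reserved)
```

With 16 logical cores this yields 14 workers, and with 32 logical cores it yields 30, so the pool keeps growing with the hardware regardless of how much work the game actually has.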

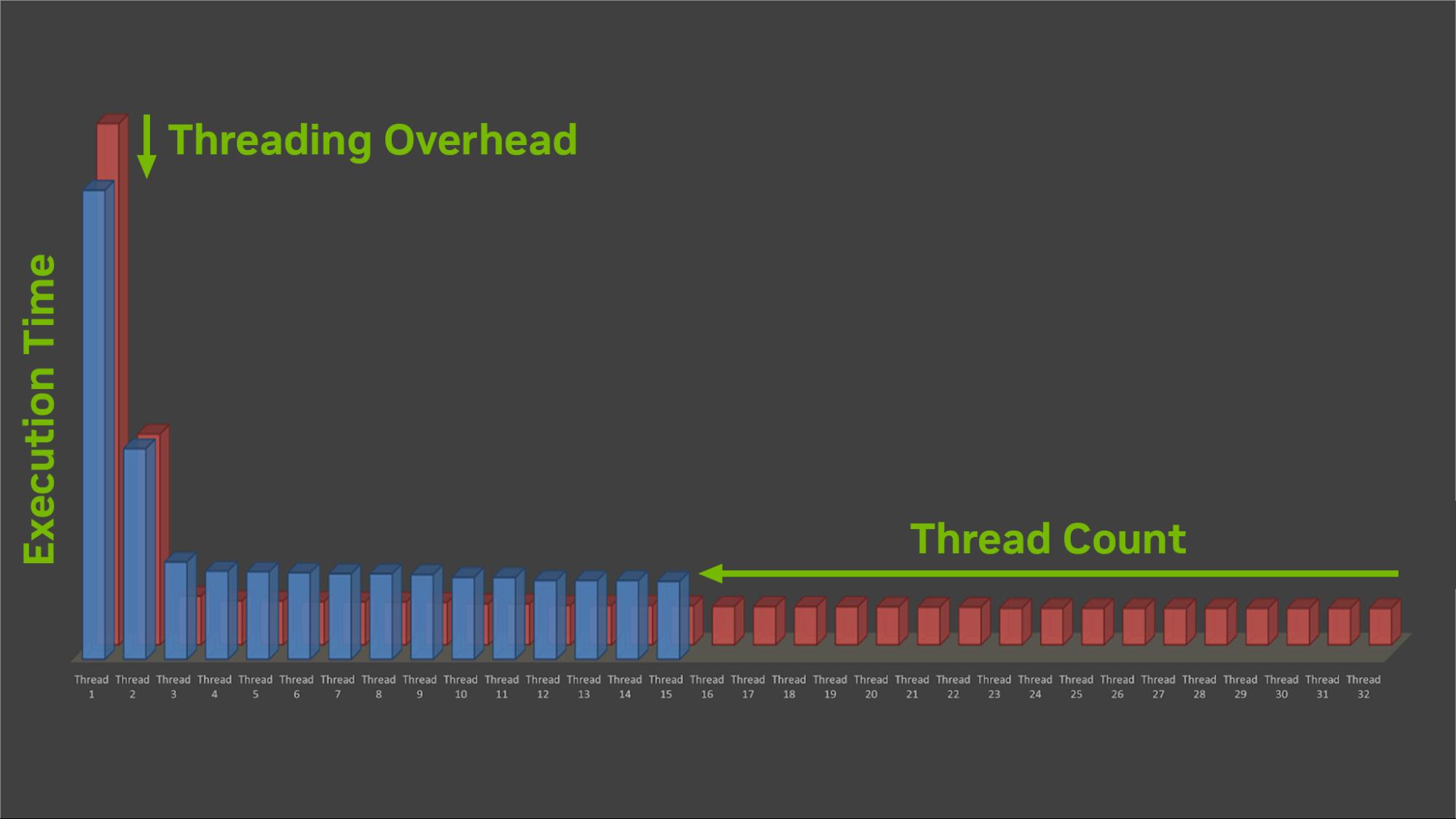

Many CPU-bound games actually degrade in performance when the core count increases beyond a certain point, because the overhead of the extra threads outweighs the benefit of the added parallelism.

For example, on high-end desktop systems with more than eight physical cores, some titles can see performance gains of up to 15% by reducing the thread count of their worker pools below the core count of the CPU.

The reasons for the performance drop are complex and varied. Where one title may see a performance drop of 10%, another may see a performance gain of 10% on the same system, thus highlighting the difficulty in providing a one-size-fits-all solution across all titles and all systems.

Instead, a game’s thread count should be tailored to fit the workload. Light CPU workloads should use fewer threads.

Performance solutions

If your game does not scale as expected on higher-core-count machines, or even runs slower on them, the cause is often one of the following:

- Hardware performance: Higher-core-count CPUs sometimes have lower clock speeds. Reducing the number of threads may enable the active cores to boost their frequency.

- Hardware resource contention: Reducing the thread count can often decrease the pressure on the memory subsystem, reducing latency and enabling the CPU caches to be more efficient. This is especially true for chiplet-based architectures that do not have a unified L3 cache. Threads executing on different chiplets can cause high cache thrashing.

  - Executing threads on both logical cores of a single physical core (hyperthreading or simultaneous multithreading) can add latency because both threads must share the core's physical resources (caches, instruction pipelines, and so on). If a critical thread is sharing a physical core, its performance may decrease. Targeting physical core counts instead of logical core counts can help to reduce this on higher-core-count systems.

- Software resource contention: Locks and atomics can have much higher latency when accessed by many threads concurrently, adding to the memory pressure. False sharing can exacerbate this.

- OS scheduling issues: An over-subscription of threads to active cores leads to a high number of context switches, which can be expensive and can put extra pressure on the CPU memory subsystem.

  - On systems with P/E cores, work is scheduled first to physical P cores, then E cores, and then hyperthreaded logical P cores. Using fewer threads than total physical cores enables background threads, such as OS threads, to run on the E cores rather than on the sibling logical cores of P cores, where they would disrupt critical threads.

- Power management: Reducing the number of threads can enable more cores to be parked, saving power and potentially allowing the remaining cores to run at a higher frequency.

  - Core parking has been seen to be sensitive to high thread counts: short, bursty threads can fail to trigger the heuristic that unparks cores. Having fewer, longer-running threads helps the core parking algorithms.
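To illustrate the physical-versus-logical distinction from the list above, the following Python sketch sizes a worker pool from physical cores instead of logical cores. The Python standard library only reports logical cores, so the physical count is estimated here; `threads_per_core`, `reserved`, and both function names are hypothetical, and the sketch assumes every core has the same SMT width, which does not hold on hybrid P/E CPUs. A real game would query the OS topology APIs instead.

```python
import os


def estimate_physical_cores(num_logical_cores, threads_per_core=2):
    """Rough estimate, assuming uniform SMT across all cores (not true for P/E parts)."""
    return max(1, num_logical_cores // threads_per_core)


def physical_worker_count(num_physical_cores, reserved=2):
    """Size the pool so that no two workers need to share a physical core."""
    return max(1, num_physical_cores - reserved)


logical = os.cpu_count() or 1
workers = physical_worker_count(estimate_physical_cores(logical))
print(f"{logical} logical cores -> {workers} worker threads")
```

Whether this count or the logical-core count performs better is something to measure per title rather than assume.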

There are several solutions to this scaling issue, depending on the root cause of the problem:

- Dynamic load balancing of thread counts

- Lockless threading models that scale with core count

- QoS and thread priority APIs that help steer threads to specific cores

- Other solutions…

The simplest method may be to find how many threads your game actually needs and then let the OS schedule the threads effectively.

Figure 1 shows that reducing the number of threads your game uses may reduce some of the overhead, often from critical threads, which may directly improve the performance of your game.

Test your game on different systems at different settings and with different thread counts. You will likely find a thread count sweet spot or a small number of sweet spots that work for your game.
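One way to structure such a sweep is sketched below in Python. The `measure_frame_time_ms` callable is a hypothetical hook standing in for your own benchmark run (for example, launching the game with a given worker count and returning the average frame time); the repeat count and the use of the median are assumptions.

```python
import statistics


def find_sweet_spot(thread_counts, measure_frame_time_ms, repeats=3):
    """Return (best_count, results); results maps thread count -> median frame time."""
    results = {}
    for count in thread_counts:
        # Repeat each measurement and take the median to dampen run-to-run noise.
        samples = [measure_frame_time_ms(count) for _ in range(repeats)]
        results[count] = statistics.median(samples)
    # Lower frame time is better.
    best_count = min(results, key=results.get)
    return best_count, results
```

Run the sweep separately for each system and quality setting, since the best thread count can differ between them.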

Ensure that you test with hyperthreading both enabled and disabled to see whether you should size your thread pool to physical or logical cores on the different systems. Hyperthreading often helps on low-core-count systems that don’t have enough physical cores to efficiently execute your workload, but it can hinder performance on higher-core-count systems.

Your testing may produce a modified algorithm where you tailor max_thread_count to suit your workload. The following thread-count determination algorithm is modified to limit the thread count to a predefined maximum:

max_thread_count = ini_file.get("max_thread_count")

num_worker_threads = num_logical_cores - 2

if (num_worker_threads > max_thread_count)

    num_worker_threads = max_thread_count

If max_thread_count is added to your game .ini file, it is easy for your IHV partners, QA teams, and gamers alike to find the right number of threads for their own PC setup to ensure that maximum performance is achieved.
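A runnable Python sketch of the capped heuristic follows. The `[threading]` section name, the inline INI text, and the helper name are illustrative assumptions; a real game would read its own settings file. When the key is absent, the sketch falls back to the uncapped heuristic.

```python
import configparser
import os

# Hypothetical .ini contents; the section name is an assumption.
EXAMPLE_INI = """
[threading]
max_thread_count = 8
"""


def capped_worker_count(ini_text, num_logical_cores=None, reserved=2):
    """Logical cores minus reserved cores, capped by max_thread_count if present."""
    config = configparser.ConfigParser()
    config.read_string(ini_text)
    if num_logical_cores is None:
        # os.cpu_count() reports logical cores and may return None.
        num_logical_cores = os.cpu_count() or 1
    num_worker_threads = max(1, num_logical_cores - reserved)
    # fallback=None means "no cap configured".
    max_thread_count = config.getint("threading", "max_thread_count", fallback=None)
    if max_thread_count is not None:
        num_worker_threads = min(num_worker_threads, max(1, max_thread_count))
    return num_worker_threads
```

With the example file, a machine with 32 logical cores gets 8 workers, while a machine with 6 logical cores keeps the 4 workers that the uncapped formula gives.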

Summary

CPU performance matters, and worker thread count is an integral part of the performance equation. Measuring your game’s CPU performance on a matrix of CPUs and adjusting the thread count to fit the workload are simple optimizations that can produce double-digit percentage performance gains.

Providing an override for thread count in an .ini file ensures that gamers can find the right value to maximize the performance on their PC.