Deep learning is being adopted in robotics to accurately navigate indoor environments, detect and follow objects of interest, and maneuver without collisions. However, the increasing complexity of deep learning models makes it challenging to accommodate these workloads on embedded systems. While you can trade accuracy for a smaller model, compromising accuracy to meet real-time demands proves counterproductive in most robotics applications.

Ease of use and deployment have made the NVIDIA Jetson platform a logical choice for developers, researchers, and manufacturers building and deploying robots, such as JetBot, MuSHR, and MITRaceCar. In this post, we present deep learning models for classification, object detection, and human pose estimation with ROS 2 on Jetson. We also provide a ROS 2 node for in-deployment monitoring of various resources and operating parameters of Jetson. ROS 2 offers lightweight implementations because it removes the dependency on bridge nodes, and it delivers various advantages in embedded systems.

We take advantage of existing NVIDIA frameworks for deep learning model deployment, such as TensorRT, to improve model inference performance. We also integrate the NVIDIA DeepStream SDK with ROS 2 so that you can perform stream aggregation and batching and deploy various AI models for classification and object detection, including ResNet18, MobileNetV1/V2, SSD, YOLO, and FasterRCNN. Furthermore, we implement ROS 2 nodes for popular Jetson-based projects, such as trt_pose and jetson_stats, built by developers around the world. Finally, we provide a GitHub repository, including ROS 2 nodes and Dockerfiles, for each application mentioned above so that you can easily deploy nodes on the Jetson platform. For more information about each project, see the following sections.

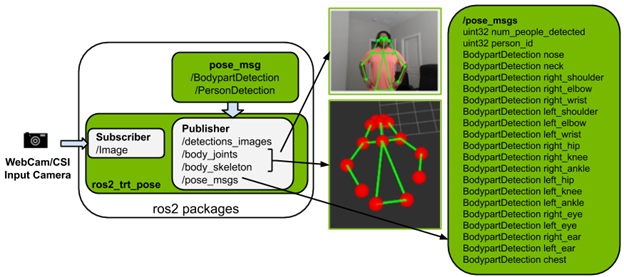

ROS 2 nodes for human pose estimation

The ros2_trt_pose package is implemented based on trt_pose, which enables pose estimation on the Jetson platform. The repository provides two trained models for pose estimation using resnet18 and densenet121. To understand human pose, pretrained models infer 17 body parts based on the categories from the COCO dataset.

Here are the key features of the ros2_trt_pose package:

- Publishes pose_msgs, such as count of person and person_id. For each person_id, it publishes 17 body parts.

- Provides launch file for easy usage and visualizations on Rviz2:

  - Image messages

  - Visual markers: body_joints, body_skeleton

- Contains a Jetson-based Docker image for easy install and usage.
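As a rough illustration of what 17 body parts per person_id means, the following sketch pairs a list of detected points with the 17 keypoint names defined by the COCO dataset. The function name label_body_parts and the dictionary layout are hypothetical and are not taken from ros2_trt_pose; the package's own message fields and keypoint ordering may differ.

```python
# The 17 keypoint names defined by the COCO keypoint annotations.
COCO_KEYPOINTS = [
    "nose", "left_eye", "right_eye", "left_ear", "right_ear",
    "left_shoulder", "right_shoulder", "left_elbow", "right_elbow",
    "left_wrist", "right_wrist", "left_hip", "right_hip",
    "left_knee", "right_knee", "left_ankle", "right_ankle",
]


def label_body_parts(points):
    """Pair 17 points with COCO keypoint names (hypothetical helper).

    `points` holds one (x, y) tuple per keypoint, or None when that
    body part was not detected for this person.
    """
    if len(points) != len(COCO_KEYPOINTS):
        raise ValueError(f"expected {len(COCO_KEYPOINTS)} points, got {len(points)}")
    # Keep only the body parts that were actually detected.
    return {name: pt for name, pt in zip(COCO_KEYPOINTS, points) if pt is not None}


# Example: a person for whom only the nose and left shoulder were found.
detected = [None] * len(COCO_KEYPOINTS)
detected[0] = (0.52, 0.18)
detected[5] = (0.44, 0.35)
print(label_body_parts(detected))
```

Dropping undetected parts keeps the per-person record small, which is one reasonable choice when a person is only partly in view.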

For more information, see the NVIDIA-AI-IOT/ros2_trt_pose GitHub repo.

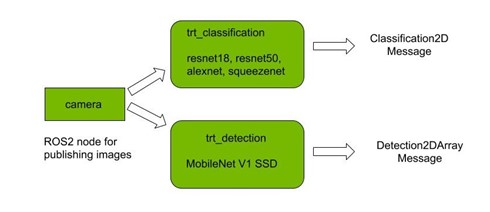

ROS 2 packages for PyTorch and TensorRT

There are two packages for classification and detection using PyTorch, each with a corresponding TensorRT (TRT) version. These four packages are a good starting point for roboticists who use ROS 2 and want to get started with deep learning in PyTorch.

TensorRT has been integrated into the packages with the help of torch2trt for accelerated inference. TensorRT generates a runtime engine that is optimized for the architecture of the network and the deployment device.

The main features of the packages are as follows:

- For classification, you can select from various ImageNet pretrained models, including Resnet18, AlexNet, SqueezeNet, and Resnet50.

- For detection, MobileNetV1-based SSD is currently supported, trained on the COCO dataset.

- The TRT packages provide a significant speedup in carrying out inference relative to the PyTorch models performing inference directly on the GPU.

- The inference results are published in the form of vision_msgs.

- On running the node, a window is also shown with the inference results visualized.

- A Jetson-based Docker image and launch file are provided for ease of use.
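To make the published results more concrete, here is a minimal sketch of turning raw classifier scores into ranked hypotheses that carry an id and a score. The Hypothesis dataclass is a stand-in for illustration, not the real vision_msgs type, and softmax and top_k are hypothetical helpers rather than functions from the packages.

```python
import math
from dataclasses import dataclass


@dataclass
class Hypothesis:
    """Stand-in for a classification hypothesis (assumption, not vision_msgs)."""
    id: str
    score: float


def softmax(logits):
    """Convert raw scores to probabilities that sum to 1."""
    peak = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(v - peak) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def top_k(logits, labels, k=3):
    """Return the k most probable labels as Hypothesis objects, best first."""
    probs = softmax(logits)
    ranked = sorted(zip(labels, probs), key=lambda pair: pair[1], reverse=True)
    return [Hypothesis(id=label, score=prob) for label, prob in ranked[:k]]


# Example with made-up scores for three made-up labels.
for hyp in top_k([2.0, 0.5, 1.0], ["cat", "dog", "bird"], k=2):
    print(hyp.id, round(hyp.score, 3))
```

In a real node, each hypothesis would be copied into the corresponding message type before publishing.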

For more information, see the NVIDIA-AI-IOT/ros2_torch_trt GitHub repo.

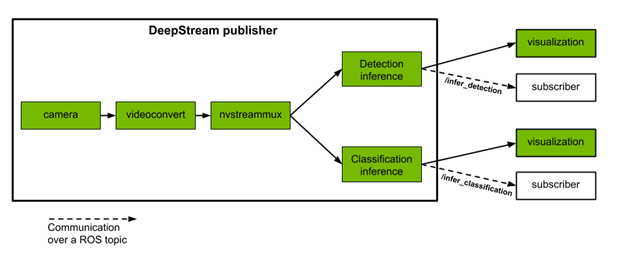

ROS 2 nodes for DeepStream SDK

The DeepStream SDK delivers a complete streaming analytics toolkit to build end-to-end AI-based solutions using multi-sensor processing, video, and image understanding. It supports popular, state-of-the-art object detection and segmentation models such as SSD, YOLO, FasterRCNN, and MaskRCNN.

We provide ROS 2 nodes that perform two inference tasks, based on the DeepStream Python Apps project:

- Object detection: Four classes of objects are detected: Vehicle, Person, RoadSign, and TwoWheeler.

- Attribute classification: Three types of attributes are classified for objects of class Vehicle: Color, Make, and Type.

These publisher nodes take single or multiple video streams as input from a camera or file. They perform inference and publish the detection and classification results to different topics. We also provide sample ROS 2 subscriber nodes that subscribe to these topics and display results in vision_msgs format. Each inference task also spawns a visualization window with bounding boxes and labels around detected objects. Additional inference tasks and custom models can be integrated with the DeepStream pipeline provided in this project.

In the video, the console at the bottom shows the average rate (in hertz) at which the multi-stream publisher node is publishing the classification output.
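One simple way to compute such an average rate is to divide the number of intervals between messages by the elapsed time. The sketch below assumes you record an arrival time in seconds for each message; average_rate_hz is a hypothetical helper, and the node in the project may measure its rate differently.

```python
def average_rate_hz(timestamps):
    """Average message rate in hertz from arrival times in seconds.

    N messages span N - 1 intervals, so the rate is (N - 1) / elapsed.
    Returns 0.0 when there is not enough data to estimate a rate.
    """
    if len(timestamps) < 2:
        return 0.0
    elapsed = timestamps[-1] - timestamps[0]
    if elapsed <= 0:
        return 0.0
    return (len(timestamps) - 1) / elapsed


# Example: five messages arriving half a second apart.
print(average_rate_hz([0.0, 0.5, 1.0, 1.5, 2.0]))
```

In a subscriber callback, you could append a monotonic clock reading for each message and call the helper periodically.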

Sample classification output in vision_msgs Classification2D format:

[vision_msgs.msg.ObjectHypothesis(id='silver', score=0.7280375957489014), vision_msgs.msg.ObjectHypothesis(id='toyota', score=0.7242303490638733), vision_msgs.msg.ObjectHypothesis(id='sedan', score=0.6891725063323975)]

For more information, see the NVIDIA-AI-IOT/ros2_deepstream GitHub repo.

ROS 2 Jetson stats

The ros2_jetson_stats package is a community-built package that monitors and controls your Jetson device. It can run in your terminal and provides a Python package for easy integration in Python scripts. Take advantage of the ros2_jetson_stats library to build ROS 2 diagnostic messages and services.

The ros2_jetson_stats package features the following ROS 2 diagnostic messages:

- GPU/CPU usage percentage

- EMC/SWAP/Memory status (% usage)

- Power and temperature of SoC

You can now control the following through the ROS 2 command line:

- Fan (Mode and Speed)

- Power model (nvpmodel)

- jetson_clocks

You can also provide a parameter to set the frequency of reading diagnostic messages.

For more information, see the NVIDIA-AI-IOT/ros2_jetson_stats GitHub repo.

ROS 2 containers for Jetson

To easily run different versions of ROS 2 on Jetson, NVIDIA has released various Dockerfiles and build scripts for ROS 2 Eloquent and Foxy, in addition to ROS Melodic and Noetic. These containers provide an automated and reliable way to install ROS or ROS 2 on Jetson and build your own ROS-based applications.

Because Eloquent and Melodic already provide prebuilt packages for Ubuntu 18.04, the Dockerfiles install these versions of ROS into the containers directly. Foxy and Noetic, on the other hand, are built from source inside the container because those versions only come prebuilt for Ubuntu 20.04. With the containers, using these versions of ROS or ROS 2 is the same, regardless of the underlying OS distribution.

To build the containers, clone the repo on your Jetson device running JetPack 4.4 or newer, and launch the ROS build script:

$ git clone https://github.com/dusty-nv/jetson-containers
$ cd jetson-containers
$ ./scripts/docker_build_ros.sh all # build all: melodic, noetic, eloquent, foxy
$ ./scripts/docker_build_ros.sh melodic # build only melodic
$ ./scripts/docker_build_ros.sh noetic # build only noetic
$ ./scripts/docker_build_ros.sh eloquent # build only eloquent
$ ./scripts/docker_build_ros.sh foxy # build only foxy

The build script creates container images with the following tags:

- ros:melodic-ros-base-l4t-r32.4.4

- ros:noetic-ros-base-l4t-r32.4.4

- ros:eloquent-ros-base-l4t-r32.4.4

- ros:foxy-ros-base-l4t-r32.4.4

For example, to launch the ROS 2 Foxy container, run the following command:

$ sudo docker run --runtime nvidia -it --rm --network host ros:foxy-ros-base-l4t-r32.4.4

Using the --runtime nvidia flag automatically enables GPU passthrough in the container, in addition to other hardware accelerators on your Jetson device, such as the video encoder and decoder. To stream a MIPI CSI camera in the container, include the following flag:

--volume /tmp/argus_socket:/tmp/argus_socket

To stream a V4L2 USB camera in the container, mount the desired /dev/video* device when launching the container:

--device /dev/video0
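The flags above can be combined in one docker run invocation. The following sketch assembles that command line from the options described in this section. The container_tag and docker_run_command helpers are hypothetical and are not part of jetson-containers, and the default L4T version simply mirrors the tags listed earlier.

```python
import shlex


def container_tag(distro, l4t_version="r32.4.4"):
    """Build an image tag in the ros:<distro>-ros-base-l4t-<version> form."""
    return f"ros:{distro}-ros-base-l4t-{l4t_version}"


def docker_run_command(distro, csi_camera=False, usb_devices=()):
    """Return the docker run argument list for a ROS container (a sketch)."""
    cmd = ["sudo", "docker", "run", "--runtime", "nvidia",
           "-it", "--rm", "--network", "host"]
    if csi_camera:
        # Share the Argus socket so a MIPI CSI camera can stream in the container.
        cmd += ["--volume", "/tmp/argus_socket:/tmp/argus_socket"]
    for device in usb_devices:
        # Mount each requested V4L2 USB camera device.
        cmd += ["--device", device]
    cmd.append(container_tag(distro))
    return cmd


# Example: ROS 2 Foxy with a CSI camera and one USB camera.
print(shlex.join(docker_run_command("foxy", csi_camera=True,
                                    usb_devices=["/dev/video0"])))
```

Returning a list rather than a single string lets you pass the result straight to subprocess.run without shell quoting concerns.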

For more information, see the dusty-nv/jetson-containers GitHub repo.

NVIDIA Omniverse Isaac Sim for ROS developers

The NVIDIA Isaac Sim simulation toolkit built on the NVIDIA Omniverse platform brings several useful improvements over existing robotics workflows:

- It takes advantage of the highly accurate physics simulation and photorealistic ray-traced graphics of Omniverse, bringing direct integration with industry-leading physics frameworks, such as the NVIDIA PhysX SDK for rigid body dynamics.

- It has a renewed focus on interoperability, deep integration with the NVIDIA Isaac SDK, and extensions for ROS.

- It is readily extensible. With its Python-based scripting interface, it allows you to adapt to your own unique use cases.

- It is built to be deployable, with an architecture that supports workflows on your local workstation and through the cloud with NVIDIA NGC.

Next steps

As a ROS developer, you can now take advantage of Isaac SDK capabilities while preserving your software investment. With the Isaac-ROS bridge, you can use Isaac GEMS in your ROS implementation.

We provide easy-to-use packages for ROS 2 on the NVIDIA Jetson platform to build and deploy key applications in robotics. For more projects on AI-IOT and robotics, you can take advantage of the following resources: