Generative AI has captured the attention and imagination of the public over the past couple of years. From a given natural language prompt, these generative models are able to generate human-quality results, from well-articulated children’s stories to product prototype visualizations.

Large language models (LLMs) are at the center of this revolution. LLMs are universal language comprehenders that codify human knowledge and can be readily applied to numerous natural and programming language understanding tasks, out of the box. These include summarization, translation, question answering, and code annotation and completion.

The ability of a single foundation language model to complete many tasks opens up a whole new AI software paradigm, where a single foundation model can be used to cater to multiple downstream language tasks within all departments of a company. This simplifies and reduces the cost of AI software development, deployment, and maintenance.

Introduction to creating a custom large language model

While potent and promising, LLMs used out of the box through zero-shot or few-shot learning still fall short on specific use cases. In particular, zero-shot learning performance tends to be low and unreliable. Few-shot learning, on the other hand, relies on finding optimal discrete prompts, which is a nontrivial process.

As explained in GPT Understands, Too, minor variations in the prompt template used to solve a downstream problem can have significant impacts on the final accuracy. In addition, few-shot inference also costs more due to the larger prompts.

Parameter-efficient fine-tuning techniques have been proposed to address this problem. Prompt learning is one such technique, which appends virtual prompt tokens to a request. These virtual tokens are learnable parameters that can be optimized using standard optimization methods, while the LLM parameters are frozen.

This post walks through the process of customizing LLMs with NVIDIA NeMo Framework, a universal framework for training, customizing, and deploying foundation models.

Prompt learning with NVIDIA NeMo

Prompt learning within the context of NeMo refers to two parameter-efficient fine-tuning techniques, as detailed below. For more information, see Adapting P-Tuning to Solve Non-English Downstream Tasks.

- In prompt-tuning, soft prompt embeddings are initialized as a 2D matrix. Each task has its own 2D embedding matrix associated with it. Tasks do not share any parameters during training or inference. All LLM parameters are frozen and only the embedding parameters for each task are updated during training. The NeMo prompt-tuning implementation is based on The Power of Scale for Parameter-Efficient Prompt Tuning.

- In p-tuning, an LSTM model, or “prompt encoder,” is used to predict virtual token embeddings. LSTM parameters are randomly initialized at the start of p-tuning. All LLM parameters are frozen, and only the LSTM weights are updated at each training step. LSTM parameters are shared between all tasks that are p-tuned at the same time, but the LSTM model outputs unique virtual token embeddings for each task. The NeMo p-tuning implementation is based on GPT Understands, Too.
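To give a rough sense of why prompt-tuning is called parameter-efficient, the sketch below counts the trainable parameters of a per-task soft prompt matrix (virtual tokens by hidden size). The function name is hypothetical, and the hidden size of 1024 is an assumption about the 345M model rather than a value stated in this post.

```python
def prompt_tuning_param_count(total_virtual_tokens: int,
                              hidden_size: int,
                              num_tasks: int = 1) -> int:
    """Trainable parameters when each task owns its own
    (total_virtual_tokens x hidden_size) soft prompt matrix."""
    return num_tasks * total_virtual_tokens * hidden_size


HIDDEN_SIZE = 1024               # assumption: hidden size of the 345M model
BASE_MODEL_PARAMS = 345_000_000  # frozen LLM parameters (345M model)

trainable = prompt_tuning_param_count(total_virtual_tokens=10,
                                      hidden_size=HIDDEN_SIZE)
share = trainable / BASE_MODEL_PARAMS

print(f"trainable soft prompt parameters: {trainable}")
print(f"share of the frozen model size:   {share:.6%}")
```

Under these assumptions, a 10-token soft prompt adds only a few thousand trainable values per task, while the LLM weights stay untouched. P-tuning differs in that the trainable weights live in the shared prompt encoder instead.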

Prompt learning for this example uses two open-source components of the NeMo ecosystem: the NeMo OSS toolkit and public NeMo models.

The process of prompt learning on a small GPT-3 345M parameter model is detailed in the NeMo Multitask Prompt and P-Tuning tutorial on GitHub. This tutorial demonstrates the end-to-end process of prompt learning: downloading and preprocessing data, downloading the model, training a prompt-learning model, and inferring on three different applications.

The sections below first walk through the notebook while summarizing the main concepts. Then this notebook will be extended to carry out prompt learning on larger NeMo models.

Prerequisite

You can experience NeMo through the NeMo Docker container. This provides a self-sufficient and reproducible environment for experimenting with NeMo. The NeMo Multitask Prompt and P-Tuning tutorial was tested with the NeMo 23.02 container, but you can try later releases of the same container. Download and run this container using the following script:

docker run -u $(id -u ${USER}):$(id -g ${USER}) --rm -it --net=host nvcr.io/nvidia/nemo:23.02 bash

Then, from within the container's interactive bash environment, start Jupyter lab:

cd /workspace
jupyter lab --ip 0.0.0.0 --allow-root --port=8888

From Jupyter lab, you will find NeMo examples, including the above-mentioned notebook, at /workspace/nemo/tutorials/nlp/Multitask_Prompt_and_PTuning.ipynb.

In addition, you will need one GPU for working with the smaller 5B and 1.3B GPT-3 models, and four NVIDIA Ampere architecture or NVIDIA Hopper architecture GPUs to work with the 20B model, as it has four degrees of tensor parallelism (TP).

Data preparation

The notebook will walk you through data collection and preprocessing for the SQuAD question answering task.

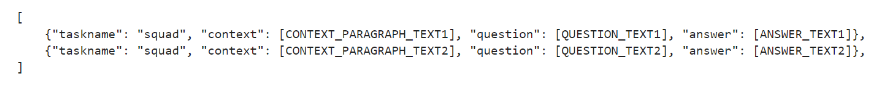

The dataset should be in a .jsonl format containing a collection of JSON objects, one per line. Each JSON object must include a taskname field, which is a string identifier for the task the data example corresponds to. Each should also include one or more fields corresponding to different sections of the discrete text prompt. See Figure 1 for an example.
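As a minimal sketch of this format, the snippet below writes and reads a tiny .jsonl file using only the Python standard library. The two records, the file name, and the helper names are made up for illustration; the context, question, and answer field names follow the SQuAD prompt template used later in this post.

```python
import json
import os
import tempfile

# Hypothetical SQuAD-style records; only "taskname" is mandatory.
examples = [
    {"taskname": "squad",
     "context": "NeMo is a framework for training foundation models.",
     "question": "What is NeMo?",
     "answer": "a framework for training foundation models"},
    {"taskname": "squad",
     "context": "Prompt learning keeps the LLM parameters frozen.",
     "question": "Which parameters stay frozen?",
     "answer": "the LLM parameters"},
]


def write_jsonl(records, path):
    """Write one JSON object per line."""
    with open(path, "w", encoding="utf-8") as f:
        for record in records:
            f.write(json.dumps(record, ensure_ascii=False) + "\n")


def read_jsonl(path):
    """Read a .jsonl file back into a list of dicts, skipping blank lines."""
    with open(path, encoding="utf-8") as f:
        return [json.loads(line) for line in f if line.strip()]


path = os.path.join(tempfile.mkdtemp(), "squad_sample.jsonl")
write_jsonl(examples, path)
loaded = read_jsonl(path)

# Every record needs a task identifier before it can be used for training.
missing_taskname = [r for r in loaded if "taskname" not in r]
print(len(loaded), "records,", len(missing_taskname), "without taskname")
```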

Prompt template

You should determine and adhere to a pattern when forming the prompt. This pattern is called the prompt template and varies according to the use case. An example for question and answer is shown below.

{
    "taskname": "squad",
    "prompt_template": "<|VIRTUAL_PROMPT_0|> Context: {context}\n\nQuestion: {question}\n\nAnswer:{answer}",
    "total_virtual_tokens": 10,
    "virtual_token_splits": [10],
    "truncate_field": "context",
    "answer_only_loss": True,
    "answer_field": "answer",
}

The prompt places all 10 virtual tokens at the beginning, followed by the context, the question, and finally the answer. The corresponding fields in each training data JSON object are mapped into this prompt template to form complete training examples. NeMo supports truncating a specified field (truncate_field) to meet the model token length limit (typically 2,048 tokens for NeMo public models using the HuggingFace GPT-2 tokenizer).
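The mapping from data fields to a complete prompt, and the truncation of one field, can be sketched as follows. This is an illustration and not NeMo's implementation: the helper names are hypothetical, the example record is made up, and length is approximated by whitespace-separated words plus the virtual tokens, whereas the real limit is measured in tokenizer tokens.

```python
from typing import Optional

PLACEHOLDER = "<|VIRTUAL_PROMPT_0|>"

task_template = {
    "taskname": "squad",
    "prompt_template": "<|VIRTUAL_PROMPT_0|> Context: {context}\n\nQuestion: {question}\n\nAnswer:{answer}",
    "total_virtual_tokens": 10,
    "truncate_field": "context",
}


def prompt_length(text: str, total_virtual_tokens: int) -> int:
    """Approximate length: words in the text plus the virtual tokens.
    Assumption: words stand in for real tokenizer tokens."""
    return len(text.replace(PLACEHOLDER, "").split()) + total_virtual_tokens


def render_prompt(example: dict, template: dict,
                  max_length: Optional[int] = None) -> str:
    """Fill the template; if too long, drop words from the end of truncate_field."""
    fields = dict(example)
    fields.setdefault("answer", "")  # inference examples carry no answer
    text = template["prompt_template"].format(**fields)
    if max_length is None:
        return text
    overflow = prompt_length(text, template["total_virtual_tokens"]) - max_length
    if overflow > 0:
        name = template["truncate_field"]
        words = fields[name].split()
        fields[name] = " ".join(words[: max(len(words) - overflow, 0)])
        text = template["prompt_template"].format(**fields)
    return text


example = {
    "taskname": "squad",
    "context": "The Eiffel Tower is in Paris and was completed in 1889",
    "question": "Where is the Eiffel Tower?",
    "answer": "Paris",
}

full_prompt = render_prompt(example, task_template)
short_prompt = render_prompt(example, task_template, max_length=24)
print(full_prompt)
print("---")
print(short_prompt)
```

Truncating only the context keeps the question and answer intact, which is presumably why the template names a single field to shorten.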

Training

The default NeMo prompt-tuning configuration is provided in a YAML file, available through NVIDIA/NeMo on GitHub. The notebook loads this YAML file, then overrides the training options to suit the 345M GPT model. NeMo p-tuning enables multiple tasks to be learned concurrently. NeMo leverages the PyTorch Lightning interface, so training is as simple as calling trainer.fit(model).

Inference

Finally, once trained, the model can be used for inference on new samples (omitting the “answer_field”) by invoking the model.generate(inputs=test_examples) statement.
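Preparing inference inputs mostly means dropping the answer field from each record. A minimal sketch, with a hypothetical helper name and a made-up record:

```python
def to_inference_examples(records, answer_field="answer"):
    """Return copies of the records without the answer field."""
    return [{key: value for key, value in record.items() if key != answer_field}
            for record in records]


labeled = [
    {"taskname": "squad",
     "context": "Prompt learning keeps the LLM parameters frozen.",
     "question": "Which parameters stay frozen?",
     "answer": "the LLM parameters"},
]

test_examples = to_inference_examples(labeled)
print(test_examples[0])
```

The resulting test_examples list has the shape passed to model.generate(inputs=test_examples) in the notebook; the original labeled records are left unmodified so they can still be used for scoring.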

Prompt learning on larger models

The 345M GPT-3 model process demonstrated in the notebook can be applied to larger public NeMo GPT-3 models, namely the 1.3B and 5B GPT-3 models. Models of this size require only a single GPU with sufficient memory capacity, such as the NVIDIA V100, NVIDIA A100, or NVIDIA H100. After downloading the model, substitute the model name in the following cell:

# Download the model from NGC
gpt_file_name = "megatron_gpt_345m.nemo"
!wget --content-disposition https://api.ngc.nvidia.com/v2/models/nvidia/nemo/megatron_gpt_345m/versions/1/files/megatron_gpt_345m.nemo -

Instead of downloading the 345M GPT model from NGC, download either the 1.3B GPT-3 or 5B GPT-3 model following the instructions on HuggingFace, then point the gpt_file_name variable to the .nemo model file.

Note that the 5B model comes in two variants, one with a TP degree of 1 (nemo_gpt5B_fp16_tp1.nemo) and another with TP=2 (nemo_gpt5B_fp16_tp2.nemo, nemo_gpt5B_bf16_tp2.nemo). The notebook supports only the TP=1 variant. With everything else left unchanged, you can execute the same notebook end-to-end.

Multi-GPU prompt learning

Due to the limitations of the Jupyter notebook environment, the prompt learning notebook only supports single-GPU training. Leveraging multi-GPU training for larger models with a higher degree of TP (such as 4 for the 20B GPT-3, and 2 for the other variants of the 5B GPT-3) requires a different NeMo prompt learning script. This script is driven by a config file where you can find the default values for many parameters.

Models

This section demonstrates the process of prompt learning of a large model using multiple GPUs on the SQuAD dataset that was downloaded and preprocessed as part of the prompt learning notebook.

You can download either the 5B GPT model with TP=2 (nemo_gpt5B_fp16_tp2.nemo) or the 20B GPT-3 model with TP=4. Note that these models are distributed as .nemo archive files. To speed up model loading substantially, extract the archive beforehand and use the extracted folder in the NeMo configuration. Use the following script:

# tar -C requires the target directory to exist
mkdir -p nemo_gpt5B_fp16_tp2.nemo.extracted
tar -xvf nemo_gpt5B_fp16_tp2.nemo -C nemo_gpt5B_fp16_tp2.nemo.extracted

Then use the extracted directory nemo_gpt5B_fp16_tp2.nemo.extracted in the NeMo config.

Configuration

A configuration file suitable for the SQuAD dataset (question answering application) is shown below:

name: megatron_virtual_prompt_gpt

trainer:
  devices: 2
  accelerator: gpu
  num_nodes: 1
  precision: 16
  logger: False # logger provided by exp_manager
  enable_checkpointing: False
  replace_sampler_ddp: False
  max_epochs: 25 # min 25 recommended
  max_steps: -1 # consumed_samples = global_step * micro_batch_size * data_parallel_size * accumulate_grad_batches
  log_every_n_steps: 10 # frequency with which training steps are logged
  val_check_interval: 1.0 # If is an int n > 1, will run val every n training steps, if a float 0.0 - 1.0 will run val every epoch fraction, e.g. 0.25 will run val every quarter epoch
  gradient_clip_val: 1.0
  resume_from_checkpoint: null # The path to a checkpoint file to continue the training, restores the whole state including the epoch, step, LR schedulers, apex, etc.
  benchmark: False

exp_manager:
  explicit_log_dir: null
  exp_dir: null
  name: ${name}
  create_wandb_logger: False
  wandb_logger_kwargs:
    project: null
    name: null
  resume_if_exists: True
  resume_ignore_no_checkpoint: True
  create_checkpoint_callback: True
  checkpoint_callback_params:
    monitor: val_loss
    save_top_k: 2
    mode: min
    save_nemo_on_train_end: False # Should be false, correct prompt learning model file is saved at model.nemo_path set below,
    filename: 'megatron_gpt_prompt_tune--{val_loss:.3f}-{step}'
    model_parallel_size: ${model.tensor_model_parallel_size}
    save_best_model: True

model:
  seed: 1234
  nemo_path: ${name}.nemo # .nemo filename/absolute path to where the virtual prompt model parameters will be saved
  virtual_prompt_style: 'p-tuning' # one of 'prompt-tuning', 'p-tuning', or 'inference'
  tensor_model_parallel_size: 1 # intra-layer model parallelism
  pipeline_model_parallel_size: 1 # inter-layer model parallelism
  global_batch_size: 8
  micro_batch_size: 4

  restore_path: null # Path to an existing p-tuned/prompt tuned .nemo model you wish to add new tasks to or run inference with
  language_model_path: ??? # Path to the GPT language model .nemo file, always required
  save_nemo_on_validation_end: True # Saves an inference ready .nemo file every time a checkpoint is saved during training.
  existing_tasks: [] # List of tasks the model has already been p-tuned/prompt-tuned for, needed when a restore path is given
  new_tasks: ['squad'] # List of new tasknames to be prompt-tuned

  ## Sequence Parallelism
  # Makes tensor parallelism more memory efficient for LLMs (20B+) by parallelizing layer norms and dropout sequentially
  # See Reducing Activation Recomputation in Large Transformer Models: https://arxiv.org/abs/2205.05198 for more details.
  sequence_parallel: False

  ## Activation Checkpoint
  activations_checkpoint_granularity: null # 'selective' or 'full'
  activations_checkpoint_method: null # 'uniform', 'block', not used with 'selective'
  # 'uniform' divides the total number of transformer layers and checkpoints the input activation
  # of each chunk at the specified granularity
  # 'block' checkpoints the specified number of layers per pipeline stage at the specified granularity
  activations_checkpoint_num_layers: null # not used with 'selective'

  task_templates: # Add more/replace tasks as needed, these are just examples
  - taskname: "squad"
    prompt_template: "<|VIRTUAL_PROMPT_0|> Context: {context}\n\nQuestion: {question}\n\nAnswer:{answer}"
    total_virtual_tokens: 10
    virtual_token_splits: [10]
    truncate_field: null
    answer_only_loss: False
    "answer_field": "answer"

  prompt_tuning: # Prompt tuning specific params
    new_prompt_init_methods: ['text'] # List of 'text' or 'random', should correspond to tasks listed in new tasks
    new_prompt_init_text: ['some init text goes here'] # some init text if init method is text, or None if init method is random

  p_tuning: # P-tuning specific params
    encoder_type: "tpmlp" # ['tpmlp', 'lstm', 'biglstm', 'mlp']
    dropout: 0.0
    num_layers: 2 # number of layers for MLP or LSTM layers. Note, it has no effect for tpmlp currently as it always assumes it is two layers.
    encoder_hidden: 2048 # encoder hidden for biglstm and tpmlp
    init_std: 0.023 # init std for tpmlp layers

  data:
    train_ds: ???
    validation_ds: ???
    add_eos: True
    shuffle: True
    num_workers: 8
    pin_memory: True
    train_cache_data_path: null # the path to the train cache data
    validation_cache_data_path: null # the path to the validation cache data
    test_cache_data_path: null # the path to the test cache data
    load_cache: False # whether to load from the cache data

  optim:
    name: fused_adam
    lr: 1e-4
    weight_decay: 0.01
    betas:
    - 0.9
    - 0.98
    sched:
      name: CosineAnnealing
      warmup_steps: 50
      min_lr: 0.0 # min_lr must be 0.0 for prompt learning when pipeline parallel > 1
      constant_steps: 0 # Constant steps should also be 0 when min_lr=0
      monitor: val_loss
      reduce_on_plateau: false

Using the Jupyter lab interface, create a file with this content and save it as /workspace/nemo/examples/nlp/language_modeling/conf/megatron_gpt_prompt_learning_squad.yaml.
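Several fields of a task_templates entry appear to depend on one another: the virtual token splits should add up to the total, each split should have its own <|VIRTUAL_PROMPT_n|> placeholder, and the answer and truncate fields should name placeholders that exist in the template. The sketch below checks these relationships before a long training run. The function name is hypothetical, and the rules are assumptions inferred from the configuration above, not NeMo's actual validation code.

```python
import re


def check_task_template(template: dict) -> list:
    """Return a list of human-readable problems; an empty list means consistent."""
    problems = []
    prompt = template["prompt_template"]
    splits = template["virtual_token_splits"]

    # Assumed rule: the splits partition the virtual tokens.
    if sum(splits) != template["total_virtual_tokens"]:
        problems.append("virtual_token_splits do not sum to total_virtual_tokens")

    # Assumed rule: one <|VIRTUAL_PROMPT_n|> placeholder per split.
    placeholders = re.findall(r"<\|VIRTUAL_PROMPT_\d+\|>", prompt)
    if len(placeholders) != len(splits):
        problems.append("number of virtual prompt placeholders differs from number of splits")

    # Assumed rule: referenced fields must appear as {field} in the template.
    fields = set(re.findall(r"\{(\w+)\}", prompt))
    for key in ("answer_field", "truncate_field"):
        name = template.get(key)
        if name is not None and name not in fields:
            problems.append(f"{key} '{name}' is not a field in prompt_template")
    return problems


squad_template = {
    "taskname": "squad",
    "prompt_template": "<|VIRTUAL_PROMPT_0|> Context: {context}\n\nQuestion: {question}\n\nAnswer:{answer}",
    "total_virtual_tokens": 10,
    "virtual_token_splits": [10],
    "truncate_field": None,
    "answer_only_loss": False,
    "answer_field": "answer",
}

problems = check_task_template(squad_template)
broken_problems = check_task_template({**squad_template, "virtual_token_splits": [5]})
print(problems)
print(broken_problems)
```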

Most important in the config file is the prompt template shown below:

prompt_template: "<|VIRTUAL_PROMPT_0|> Context: {context}\n\nQuestion: {question}\n\nAnswer:{answer}"
total_virtual_tokens: 10
virtual_token_splits: [10]
truncate_field: null
answer_only_loss: False
"answer_field": "answer"

Here, 10 virtual prompt tokens are used together with some permanent text markers.

Training

To begin training, open a terminal window from within the Jupyter lab interface (File → New → Terminal). Then issue the bash command:

python /workspace/nemo/examples/nlp/language_modeling/megatron_gpt_prompt_learning.py \
    --config-name=megatron_gpt_prompt_learning_squad.yaml \
    trainer.devices=2 \
    trainer.num_nodes=1 \
    trainer.max_epochs=25 \
    trainer.precision=bf16 \
    model.language_model_path=/workspace/nemo/tutorials/nlp/nemo-megatron-gpt-5B/nemo_gpt5B_fp16_tp2.nemo.extracted \
    model.nemo_path=/workspace/nemo/examples/nlp/language_modeling/squad.nemo \
    model.tensor_model_parallel_size=2 \
    model.pipeline_model_parallel_size=1 \
    model.global_batch_size=16 \
    model.micro_batch_size=1 \
    model.optim.lr=1e-4 \
    model.data.train_ds=[/workspace/nemo/tutorials/nlp/data/SQuAD/squad_train.jsonl] \
    model.data.validation_ds=[/workspace/nemo/tutorials/nlp/data/SQuAD/squad_val.jsonl]

Note the following:

- model.tensor_model_parallel_size should be set to 2 for the 5B GPT model (nemo_gpt5B_fp16_tp2.nemo) or 4 for the 20B GPT-3 model.

- trainer.devices should be set to a multiple of the TP value. If set to 4 for the 5B model, there will be two data-parallel workers, each with two GPUs.

- model.language_model_path should be set to the absolute path of the extracted model directory.

- model.data.train_ds and model.data.validation_ds should be set to the locations of the training and validation data.
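The relationship between device count, parallelism degrees, and batch sizes can be checked with a little arithmetic before launching a job. The sketch below assumes the usual Megatron-style relations: data-parallel size is the total GPU count divided by TP times PP, and the global batch is the micro batch times the data-parallel size times the number of gradient accumulation steps (the same product that appears in the max_steps comment of the config). The function name is hypothetical.

```python
def parallel_layout(devices: int, num_nodes: int,
                    tensor_parallel: int, pipeline_parallel: int,
                    global_batch_size: int, micro_batch_size: int) -> dict:
    """Derive data-parallel size and gradient accumulation steps."""
    world_size = devices * num_nodes
    model_parallel = tensor_parallel * pipeline_parallel
    if world_size % model_parallel != 0:
        raise ValueError("total GPUs must be a multiple of TP * PP")
    data_parallel = world_size // model_parallel

    samples_per_step = micro_batch_size * data_parallel
    if global_batch_size % samples_per_step != 0:
        raise ValueError("global batch must be a multiple of micro batch * data-parallel size")
    return {
        "data_parallel_size": data_parallel,
        "accumulate_grad_batches": global_batch_size // samples_per_step,
    }


# Settings from the training command above: 2 GPUs, TP=2.
layout_2gpu = parallel_layout(devices=2, num_nodes=1, tensor_parallel=2,
                              pipeline_parallel=1, global_batch_size=16,
                              micro_batch_size=1)
# Same model on 4 GPUs: two data-parallel workers, each spanning two GPUs.
layout_4gpu = parallel_layout(devices=4, num_nodes=1, tensor_parallel=2,
                              pipeline_parallel=1, global_batch_size=16,
                              micro_batch_size=1)
print(layout_2gpu)
print(layout_4gpu)
```

With three GPUs and TP=2, the function raises a ValueError, which mirrors the requirement that trainer.devices be a multiple of the TP value.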

Inference

Finally, once trained, carry out inference in NeMo using the following script:

python /workspace/nemo/examples/nlp/language_modeling/megatron_gpt_prompt_learning_eval.py \
    virtual_prompt_model_file=/workspace/nemo/examples/nlp/language_modeling/squad.nemo \
    gpt_model_file=/workspace/nemo/tutorials/nlp/nemo-megatron-gpt-5B/nemo_gpt5B_fp16_tp2.nemo.extracted \
    inference.greedy=True \
    inference.add_BOS=False \
    inference.tokens_to_generate=128 \
    trainer.devices=2 \
    trainer.num_nodes=1 \
    tensor_model_parallel_size=2 \
    pipeline_model_parallel_size=1 \
    data_paths=["/workspace/nemo/tutorials/nlp/data/SQuAD/squad_test.jsonl"] \
    pred_file_path="test-results.txt"

Here, virtual_prompt_model_file points to the squad.nemo file written to model.nemo_path during training. Note the following:

- tensor_model_parallel_size should be set to 2 for the 5B GPT model (nemo_gpt5B_fp16_tp2.nemo) or 4 for the 20B GPT-3 model.

- trainer.devices should be set equal to the TP value (above).

- pred_file_path is the file where test results will be recorded, one line per test sample.

Get started customizing your language model using NeMo

This post walked through the process of customizing LLMs for specific use cases using NeMo and techniques such as prompt learning. From a single public checkpoint, these models can be adapted to numerous NLP applications through a parameter-efficient, compute-efficient process.

Visit NVIDIA/NeMo on GitHub to get started with LLM customization. You are also invited to join the open beta.