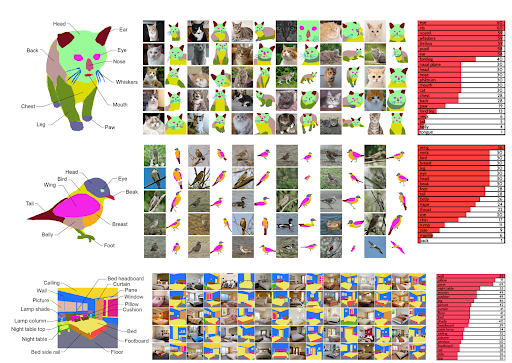

Editing photos of cats, cars, or even antique paintings has never been more accessible, thanks to a generative adversarial network (GAN) model called EditGAN. The work, from researchers at NVIDIA, the University of Toronto, and MIT, builds on DatasetGAN, an artificial intelligence vision model that can be trained with as few as 16 human-annotated images and performs as effectively as other methods that require 100x more images. EditGAN takes the power of the previous model and lets the user edit or manipulate an image with simple commands, such as drawing, without compromising the original image quality.

What is EditGAN?

According to the paper: “EditGAN is the first GAN-driven image-editing framework, which simultaneously offers very high-precision editing, requires very little annotated training data (and does not rely on external classifiers), can be run interactively in real time, allows for straightforward compositionality of multiple edits, and works on real embedded, GAN-generated, and even out-of-domain images.”

The model learns a set of editing vectors, which can be applied to an image interactively. Essentially, it builds an understanding of the images and their content, which users can then leverage for specific modifications. The model learns from similar images and recognizes the different components and parts of the objects inside them. A user can draw on this for targeted modifications of individual subparts or for editing within specific areas. Because the model is so precise, the image is not distorted outside the bounds set by the user.

“The framework allows us to learn an arbitrary number of editing vectors, which can then be directly applied on other images at interactive rates,” the researchers explained in their study. “We experimentally show that EditGAN can manipulate images with an unprecedented level of detail and freedom while preserving full image quality. We can also easily combine multiple edits and perform plausible edits beyond EditGAN’s training data. We demonstrate EditGAN on a wide variety of image types and quantitatively outperform several previous editing methods on standard editing benchmark tasks.”
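The idea of learning editing vectors once and then reusing and combining them can be sketched in a few lines of NumPy. This is a minimal illustration, not the researchers' implementation: it assumes an editing vector is simply an offset in the GAN's latent space, that edits are combined by adding scaled offsets, and that the latent code has 512 dimensions. The names `learn_edit_vector`, `apply_edits`, and `LATENT_DIM` are hypothetical, and random vectors stand in for real latent codes.

```python
import numpy as np

LATENT_DIM = 512  # assumed latent size, chosen only for this demo


def learn_edit_vector(w_before, w_after):
    """Treat an editing vector as the latent offset that an edit produced."""
    return np.asarray(w_after, dtype=float) - np.asarray(w_before, dtype=float)


def apply_edits(w, edit_vectors, scales=None):
    """Add a scaled sum of editing vectors to a latent code."""
    if scales is None:
        scales = [1.0] * len(edit_vectors)
    edited = np.array(w, dtype=float)  # copy, so the input stays untouched
    for delta, scale in zip(edit_vectors, scales):
        edited += scale * np.asarray(delta, dtype=float)
    return edited


rng = np.random.default_rng(0)

# Stand-ins for a source image's latent code and two edited versions of it.
w_source = rng.normal(size=LATENT_DIM)
w_smiling = w_source + rng.normal(scale=0.1, size=LATENT_DIM)
w_new_hair = w_source + rng.normal(scale=0.1, size=LATENT_DIM)

smile = learn_edit_vector(w_source, w_smiling)
hair = learn_edit_vector(w_source, w_new_hair)

# Reuse both edits on a different image, with the hairstyle at half strength.
w_other = rng.normal(size=LATENT_DIM)
w_other_edited = apply_edits(w_other, [smile, hair], scales=[1.0, 0.5])
```

In a real pipeline, the edited latent code would be passed through the trained generator to render the image. Because applying a stored vector is only an addition, it is cheap enough to run at interactive rates.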

From adding smiles and changing the direction someone is looking to creating a new hairstyle or giving a car a nicer set of wheels, the researchers show how capable the model is with minimal data annotation. The user draws a simple sketch or mask corresponding to the desired edit, which guides the AI model to realize the modification, such as bigger cat ears or cooler headlights on a car. The AI then renders the image with a very high level of accuracy while maintaining the quality of the original image. Afterwards, the same edit can be applied to other images in real time.

How does this GAN work?

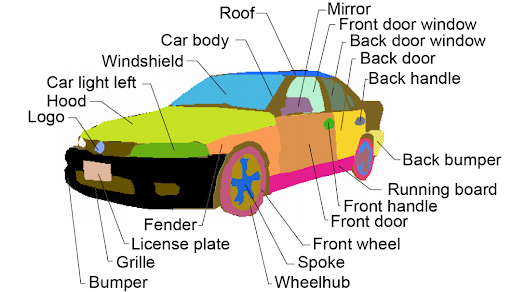

EditGAN assigns each pixel of the image to a category, such as a tire, windshield, or car frame. These pixels are controlled within the AI's latent space based on the user's input, and the user can easily and flexibly edit those categories. EditGAN manipulates only those pixels associated with the desired change. The AI knows what each pixel represents based on the other images used to train the model, so you could not, for example, add cat ears to a car and expect accurate results. But when used with the correct model, EditGAN is a phenomenal tool that provides exceptional image editing results.
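The per-pixel bookkeeping described above can be illustrated with a toy label map. This is a sketch under stated assumptions, not EditGAN's code: the label ids, the 6x6 image, and the helpers `paint_label`, `edit_region`, and `outside_region_error` are all hypothetical, and the "edited" image is faked by overwriting pixels, whereas EditGAN itself performs the edit inside the GAN's latent space. The sketch only shows how a user's repainting defines which pixels may change and how to check that everything else is preserved.

```python
import numpy as np

# Hypothetical label ids; the real label set depends on the trained model.
BACKGROUND, CAR_FRAME, WINDSHIELD, TIRE = 0, 1, 2, 3


def paint_label(seg, rows, cols, label):
    """Return a copy of the label map with a rectangular area relabeled."""
    edited = seg.copy()
    edited[rows, cols] = label
    return edited


def edit_region(seg_before, seg_after):
    """Boolean mask of pixels whose category changed in the user's edit."""
    return seg_before != seg_after


def outside_region_error(img_before, img_after, region):
    """Mean squared RGB change over the pixels outside the edit region."""
    keep = ~region
    if not keep.any():
        return 0.0
    diff = (img_after.astype(float) - img_before.astype(float)) ** 2
    return float(diff[keep].mean())


# Toy 6x6 label map: a strip of car frame with a small tire below it.
seg = np.full((6, 6), BACKGROUND)
seg[2:4, :] = CAR_FRAME
seg[4:6, 1:3] = TIRE

# The user sketches a bigger tire over the old one.
seg_edited = paint_label(seg, slice(3, 6), slice(0, 4), TIRE)
region = edit_region(seg, seg_edited)

# Stand-in for rendering: only pixels inside the region are overwritten.
rng = np.random.default_rng(0)
img = rng.integers(0, 256, size=(6, 6, 3), dtype=np.uint8)
img_edited = img.copy()
img_edited[region] = 255

error_outside = outside_region_error(img, img_edited, region)
```

A nonzero `error_outside` would indicate that an edit leaked beyond the region the user marked.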

EditGAN’s potential

AI-driven photo and image editing has the potential to streamline the workflow of photographers and content creators and to enable new levels of creativity and digital artistry. EditGAN also enables novice photographers and editors to produce high-quality content, along with the occasional viral meme.

“This AI may transform how we edit photos and perhaps eventually video. It allows someone to take an image and alter it by using simple text commands. If you have a photo of a car and you want to make the wheels bigger, just type “make wheels bigger,” and poof!—there’s a completely photorealistic picture of the same car with bigger wheels.” – Fortune magazine

EditGAN may also be used for other important applications in the future. For example, EditGAN’s editing capabilities could be utilized to create large image datasets with certain characteristics. Such specific datasets can be useful when training downstream machine-learning models on different computer vision tasks.

Furthermore, the EditGAN framework may impact the development of future generations of GANs. While the present version of EditGAN focuses on image editing, similar methods could potentially be used to edit 3D shapes and objects, which would be useful when creating virtual 3D content for games, movies, or the metaverse.

To read more about this methodology, check out the researchers' paper.

NVIDIA is always on the cutting edge of technology. Check out NVIDIA Research for more innovative research.