Join NVIDIA at GTC, November 8-11, for more than 500 sessions covering the latest breakthroughs in AI and deep learning, along with many other areas of GPU technology.

Below is a preview of some of the top AI and deep learning sessions, covering training, inference, frameworks, and tools, all featuring speakers from NVIDIA.

Training

Deep Learning Demystified: AI has produced new and improved methods for data analysis and complex computation, solving problems that seemed well beyond reach only a few years ago. Learn the fundamentals of accelerated data analytics, high-level use cases, and problem-solving methods, and see how deep learning is transforming every industry. We’ll demystify AI, machine learning, and deep learning; examine the key challenges organizations face in adopting this new approach; and present the latest tools and technologies that can help deliver breakthrough results.

Fundamentals of Deep Learning for Multi-GPUs: Modern deep learning workloads use increasingly large datasets and complex models. As a result, training them effectively and efficiently requires significant computational power. In this course, you will learn how to scale deep learning training to multiple GPUs. Spreading training across multiple GPUs can significantly shorten the time needed to train on large amounts of data, making it feasible to solve complex problems with deep learning.
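The core idea behind scaling training to multiple GPUs, synchronous data parallelism, can be illustrated with a minimal pure-Python sketch. It assumes a toy 1-D linear model trained with mean-squared error and simulates each GPU as a "worker" that holds its own shard of the batch. The function names are hypothetical and are not taken from the course or any NVIDIA library. On real hardware, a framework would compute the local gradients concurrently on separate GPUs and average them with a collective all-reduce (for example, through NCCL).

```python
# Conceptual sketch of synchronous data-parallel training (no GPUs involved).
# Each hypothetical "worker" stands in for one GPU: it computes gradients on its
# own shard of the batch, the gradients are averaged (the role an all-reduce
# plays on real hardware), and every replica applies the same update.
from typing import List, Tuple

Sample = Tuple[float, float]  # (x, y) pair for a 1-D linear model y ~ w * x + b


def local_gradient(w: float, b: float, shard: List[Sample]) -> Tuple[float, float]:
    """Mean-squared-error gradient of y ~ w*x + b over one worker's shard."""
    n = len(shard)
    dw = sum(2 * (w * x + b - y) * x for x, y in shard) / n
    db = sum(2 * (w * x + b - y) for x, y in shard) / n
    return dw, db


def all_reduce_mean(grads: List[Tuple[float, float]]) -> Tuple[float, float]:
    """Average gradients across workers (a stand-in for an all-reduce)."""
    k = len(grads)
    return sum(g[0] for g in grads) / k, sum(g[1] for g in grads) / k


def data_parallel_step(w: float, b: float, batch: List[Sample],
                       num_workers: int, lr: float) -> Tuple[float, float]:
    """One synchronous step: shard the batch, compute local grads, average, update."""
    assert len(batch) % num_workers == 0, "equal-sized shards keep the average exact"
    size = len(batch) // num_workers
    shards = [batch[i * size:(i + 1) * size] for i in range(num_workers)]
    grads = [local_gradient(w, b, s) for s in shards]  # concurrent on real GPUs
    dw, db = all_reduce_mean(grads)
    return w - lr * dw, b - lr * db


if __name__ == "__main__":
    data = [(float(x), 3.0 * x + 1.0) for x in range(8)]  # toy data from y = 3x + 1
    w, b = 0.0, 0.0
    for _ in range(2000):
        w, b = data_parallel_step(w, b, data, num_workers=4, lr=0.01)
    print(round(w, 3), round(b, 3))
```

Because every shard has the same size, the average of the per-worker gradients equals the gradient over the full batch. Each step therefore matches single-device training on the whole batch, while each worker processes only a fraction of the data.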

Inference

Maximize AI Inference Serving Performance with NVIDIA Triton Inference Server: NVIDIA Triton is open-source inference serving software that simplifies deploying AI models at scale in production. With Triton, you can deploy deep learning and machine learning models from any framework (TensorFlow, TensorRT, PyTorch, OpenVINO, ONNX Runtime, XGBoost, or custom) on any GPU- or CPU-based infrastructure. We’ll discuss new backends, support for embedded devices, new integrations with public clouds, model ensembles, and other new features.

Accelerate PyTorch Inference with TensorRT: Learn how to accelerate PyTorch inference without leaving the framework by using Torch-TensorRT. Torch-TensorRT brings the performance of NVIDIA TensorRT GPU optimizations to any PyTorch model. You’ll learn about the key capabilities of Torch-TensorRT, how to use them, and the performance benefits you can expect. We’ll walk through the transition from a trained model to an inference deployment optimized for your specific hardware, all with just a few lines of familiar code.

Accelerate Deep Learning Inference in Production with TensorRT: TensorRT is an SDK for high-performance deep learning inference, used in production to minimize latency and maximize throughput. The latest generation of TensorRT provides a new compiler that accelerates specific workloads on NVIDIA GPUs. Deep learning compilers need robust methods to import, optimize, and deploy models. We’ll show a workflow for accelerating models from PyTorch, TensorFlow, and ONNX.

Tools and Frameworks

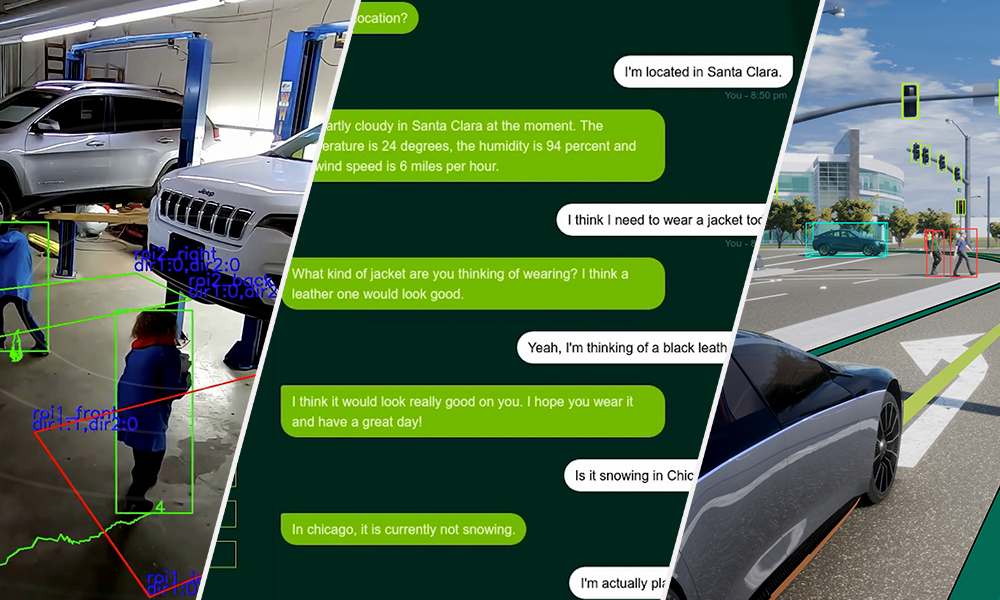

A Zero-code Approach to Creating Production-ready AI Models: NVIDIA TAO is a UI-driven model adaptation solution with guided workflows that lets users produce highly accurate computer vision or conversational AI models in hours rather than months. It eliminates the need for large training runs and deep AI expertise. We’ll demonstrate how TAO lets users take a pretrained model, fine-tune it with their own data, and optimize it for inference without writing a single line of code.

Accelerating AI Workflows with NGC: To simplify AI development, NVIDIA NGC provides development tools and SDKs that speed up training, inference, and deployment of AI across industries including health care, smart cities, and robotics. We will cover how data scientists and developers use NGC (through containers, pretrained models, and SDKs) to build and deploy AI solutions faster across on-premises, hybrid, and edge platforms. We’ll end with a demo showing how to easily start building a computer vision solution, and leave you with a Jupyter notebook so you can rebuild a custom solution offline.