Posts by Jay Rodge

Agentic AI / Generative AI

Oct 28, 2024

Creating RAG-Based Question-and-Answer LLM Workflows at NVIDIA

The rapid development of solutions using retrieval augmented generation (RAG) for question-and-answer LLM workflows has led to new types of system...

11 MIN READ

Agentic AI / Generative AI

Sep 25, 2024

Deploying Accelerated Llama 3.2 from the Edge to the Cloud

Expanding the open-source Meta Llama collection of models, the Llama 3.2 collection includes vision language models (VLMs), small language models (SLMs), and an...

6 MIN READ

Agentic AI / Generative AI

May 29, 2024

Generative AI Agents Developer Contest: Top Tips for Getting Started

Join our contest that runs through June 17 and showcase your innovation using cutting-edge generative AI-powered applications using NVIDIA and LangChain...

3 MIN READ

Data Science

Mar 18, 2024

RAPIDS cuDF Accelerates pandas Nearly 150x with Zero Code Changes

At NVIDIA GTC 2024, it was announced that RAPIDS cuDF can now bring GPU acceleration to 9.5M pandas users without requiring them to change their code. ...

5 MIN READ

Data Science

Jul 11, 2023

Accelerated Data Analytics: Machine Learning with GPU-Accelerated Pandas and Scikit-learn

If you are looking to take your machine learning (ML) projects to new levels of speed and scalability, GPU-accelerated data analytics can help you deliver...

14 MIN READ

Data Science

Jul 20, 2022

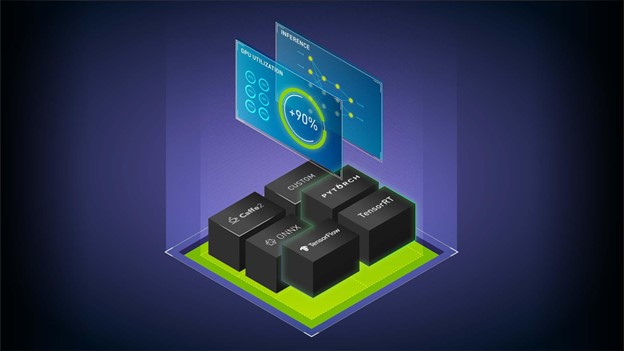

Optimizing and Serving Models with NVIDIA TensorRT and NVIDIA Triton

Imagine that you have trained your model with PyTorch, TensorFlow, or the framework of your choice, are satisfied with its accuracy, and are considering...

11 MIN READ