By JC Li

Editor’s note: This is the latest post in our NVIDIA DRIVE Labs series, which takes an engineering-focused look at individual autonomous vehicle challenges and how NVIDIA DRIVE addresses them. Catch up on all automotive posts.

AI can now make it easier for cars to see in the dark, while ensuring other vehicles won’t be blinded by the light.

High beam lights can significantly extend nighttime visibility beyond the range of standard headlights; however, they can create hazardous glare for other drivers. Most commercially available high beam systems still require manual on/off control, which can be confusing and a hassle, resulting in high beam underuse or misuse.

In this DRIVE Labs video, we explain how AI can overcome these limitations, using perception to reduce glare for oncoming vehicles.

We have trained a camera-based deep neural network (DNN)—called AutoHighBeamNet—on camera images to automatically generate control outputs for the vehicle’s high beam light system, increasing nighttime driving visibility and safety.

Rather than generating high beam control signals based only on the lux levels of other light sources in the scene, AutoHighBeamNet can learn from a much broader set of conditions for truly automated and robust high beam control.

The network reacts to active vehicles in the perceived camera frame. An active vehicle is defined as any automobile with its headlight or taillight turned on. For example, a roadside parked vehicle with all its lights turned off is an inactive vehicle that AutoHighBeamNet ignores.
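The active/inactive distinction can be sketched as a simple filter over per-frame detections. This is only an illustration: the `Detection` class and its field names are hypothetical and are not part of the DRIVE API, and the real network produces its outputs directly rather than through explicit light-state flags.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Detection:
    """Hypothetical per-frame vehicle detection (illustrative fields only)."""
    label: str
    headlight_on: bool
    taillight_on: bool


def is_active(det: Detection) -> bool:
    # Active vehicle: headlight or taillight is turned on.
    return det.headlight_on or det.taillight_on


def active_vehicles(detections: List[Detection]) -> List[Detection]:
    # Parked vehicles with all lights off are dropped here.
    return [d for d in detections if is_active(d)]
```

With this sketch, an oncoming car with headlights on and a truck ahead with taillights on are both kept, while a dark parked car is ignored.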

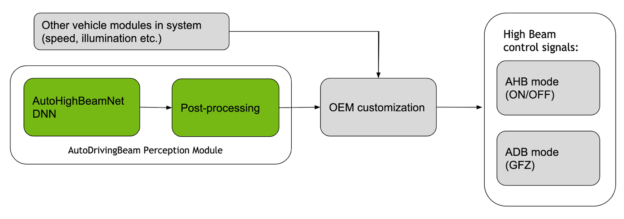

AutoHighBeamNet is part of the AutoDrivingBeam visual perception module, which takes in image data from a monocular front-facing camera, as shown in Figure 1.

Per-frame AutoHighBeamNet detections feed into a post-processing sub-module that performs both per-frame and temporal post-processing. The output of the AutoDrivingBeam module can then be customized by the auto OEM, subject to homologation rules and policies based on input signals from other vehicle modules (for example, the speed of the ego car, environmental illumination conditions, and so on). Based on these customizations, the final high beam control signals are generated.
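The post does not describe what the temporal post-processing does internally. One common way to stabilize per-frame detections is debouncing: a state change is accepted only after it persists for several consecutive frames. The sketch below assumes that approach; the class name and frame thresholds are hypothetical.

```python
class TemporalDebouncer:
    """Smooths a noisy per-frame boolean (assumed technique, not NVIDIA's)."""

    def __init__(self, on_frames: int = 2, off_frames: int = 5):
        self.on_frames = on_frames    # frames needed to switch to True
        self.off_frames = off_frames  # frames needed to switch to False
        self.state = False            # stable "active vehicle present" flag
        self._streak = 0              # consecutive frames disagreeing with state

    def update(self, detected: bool) -> bool:
        if detected == self.state:
            # Observation agrees with the stable state: reset the streak.
            self._streak = 0
        else:
            self._streak += 1
            needed = self.on_frames if detected else self.off_frames
            if self._streak >= needed:
                self.state = detected
                self._streak = 0
        return self.state
```

Choosing a larger `off_frames` than `on_frames` biases the output toward keeping a vehicle "present", so a single missed detection does not flash the high beams at another driver.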

The high beam control signals can take on two different modes: auto high beam (AHB) mode, which provides binary on/off control; and adaptive driving beam (ADB) mode, which provides precise control of individual high beam LED arrays to create glare-free zones (GFZ).

AHB mode

In this mode, the vehicle’s high beam lights will automatically turn on in poorly illuminated nighttime driving conditions. However, when an active vehicle is detected, high beams will automatically turn off and switch to low beam. After the active vehicle passes by, the high beams turn back on automatically.
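The AHB behavior described above reduces to a small decision function. The sketch below is an assumption about how an OEM policy might combine the signals mentioned earlier; the function name, the `"HIGH"`/`"LOW"` return values, and the 40 km/h speed threshold are all hypothetical.

```python
def ahb_command(active_vehicle_present: bool,
                ambient_is_dark: bool,
                ego_speed_kph: float,
                min_speed_kph: float = 40.0) -> str:
    """Return "HIGH" or "LOW" for a binary auto high beam policy (sketch)."""
    if not ambient_is_dark:
        return "LOW"   # well-lit road: high beams are not needed
    if ego_speed_kph < min_speed_kph:
        return "LOW"   # hypothetical OEM policy: no high beams at low speed
    if active_vehicle_present:
        return "LOW"   # avoid glare for the other road user
    return "HIGH"
```

Once the active vehicle has passed, the same call returns `"HIGH"` again, which matches the automatic switch back to high beam.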

ADB mode

Adaptive Driving Beam (ADB) is a new standard for high beam controls. In ADB mode, vehicles shape the high beam in a way that prevents glare to active vehicles by dimming individual LEDs in the high beam LED array headlamp. This selective dimming creates glare-free zones as needed by traffic patterns.

Similar to AHB mode, after the active vehicles exit the scene, the dimmed area turns back to full luminance automatically. Consequently, in ADB mode, high beams can always be kept on for better nighttime driving safety, without causing glare to other road users.
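Selective dimming can be illustrated with a single row of LEDs. This sketch assumes the LEDs divide the beam's horizontal field of view evenly and that each glare-free zone has already been expressed as a horizontal angular interval in the headlamp frame; the function name and parameters are hypothetical, and real lamps have vendor-specific geometry.

```python
from typing import List, Tuple


def led_levels(num_leds: int,
               fov_deg: float,
               gfz_intervals_deg: List[Tuple[float, float]],
               dim_level: float = 0.0) -> List[float]:
    """Per-LED brightness in [0, 1]; angle 0 is the beam center (sketch)."""
    seg = fov_deg / num_leds  # angular width covered by one LED
    levels = []
    for i in range(num_leds):
        left = -fov_deg / 2 + i * seg
        right = left + seg
        # Dim the LED if its segment overlaps any glare-free interval.
        overlaps = any(left < g_right and g_left < right
                       for g_left, g_right in gfz_intervals_deg)
        levels.append(dim_level if overlaps else 1.0)
    return levels
```

For example, with 8 LEDs over a 40 degree field of view, a zone spanning -3 to 4 degrees dims only the two center LEDs, and passing an empty list restores full luminance everywhere.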

ADB was adopted in Europe in 2016. It is also under active evaluation by NHTSA, although it has not yet been ratified and approved for the U.S. market.

Glare-Free Zone masks

In ADB mode, the Glare-Free Zone (GFZ) is the data structure that represents areas in the camera-perceived frame where high beams should either be kept out completely or be dimmed to avoid reflection.

GFZs are output to a matrix LED-based lighting system in which each LED can be controlled individually.
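The actual GFZ data layout is not given in this post. As a rough mental model, a zone could be a rectangle in camera pixel coordinates with a target intensity, where 0.0 means the high beam is kept out and a value between 0 and 1 means it is dimmed. The `GlareFreeZone` class and `zones_to_mask` helper below are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class GlareFreeZone:
    """Hypothetical GFZ: pixel rectangle [x_min, x_max) x [y_min, y_max)."""
    x_min: int
    y_min: int
    x_max: int
    y_max: int
    intensity: float  # 0.0 = no high beam, 0 < v < 1 = dimmed


def zones_to_mask(zones: List[GlareFreeZone],
                  width: int, height: int) -> List[List[float]]:
    # Start with full high beam everywhere, then carve out each zone.
    mask = [[1.0] * width for _ in range(height)]
    for z in zones:
        for y in range(max(0, z.y_min), min(height, z.y_max)):
            for x in range(max(0, z.x_min), min(width, z.x_max)):
                # Where zones overlap, the darker request wins.
                mask[y][x] = min(mask[y][x], z.intensity)
    return mask
```

Taking the minimum where zones overlap is a conservative design choice: the stricter glare constraint always applies.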

GFZ outputs are expressed in the coordinate frame of the perceiving camera, so a calibration process is required to map GFZs to vehicle lighting system coordinates. The calibration tool and its procedures are closely tied to the specific vehicle setup and the choice of ADB-compatible lighting system.
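To give a feel for what such a mapping involves, the sketch below converts pixel columns to horizontal angles with a pinhole camera model and applies a fixed yaw offset between camera and headlamp. This is a simplified assumption, not the actual calibration procedure: it ignores lens distortion and the lateral offset between camera and lamp, and all names and parameters are hypothetical.

```python
import math
from typing import Tuple


def pixel_to_lamp_angle_deg(u: float, cx: float, fx: float,
                            lamp_yaw_offset_deg: float = 0.0) -> float:
    """Horizontal angle of pixel column u in the lamp frame (pinhole sketch).

    cx is the principal point column and fx the focal length in pixels.
    """
    cam_angle = math.degrees(math.atan2(u - cx, fx))
    return cam_angle - lamp_yaw_offset_deg


def gfz_columns_to_interval(u_left: float, u_right: float,
                            cx: float, fx: float,
                            lamp_yaw_offset_deg: float = 0.0,
                            margin_deg: float = 0.5) -> Tuple[float, float]:
    # Widen the interval slightly as a safety margin against calibration error.
    a = pixel_to_lamp_angle_deg(u_left, cx, fx, lamp_yaw_offset_deg)
    b = pixel_to_lamp_angle_deg(u_right, cx, fx, lamp_yaw_offset_deg)
    return (a - margin_deg, b + margin_deg)
```

The resulting angular interval is the kind of input a matrix LED controller could use to decide which LEDs to dim.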

Our API will provide AHB mode support in the NVIDIA DRIVE Software 10.0 release and will expose information needed by developers and OEMs to define their own policies for auto high beam control. For more information, see NVIDIA DRIVE Networks.