Researchers at Los Alamos National Laboratory in New Mexico are advancing earthquake detection with a new machine learning algorithm capable of global monitoring. The study uses Interferometric Synthetic Aperture Radar (InSAR) satellite data to detect slow-slip earthquakes. The work will help scientists better understand the interplay between slow and fast earthquakes, which could be key to predicting future quakes.

“Applying machine learning to InSAR data gives us a new way to understand the physics behind tectonic faults and earthquakes,” Bertrand Rouet-Leduc, a geophysicist in Los Alamos’ Geophysics group, said in a press release. “That’s crucial to understanding the full spectrum of earthquake behavior.”

Discovered a couple of decades ago, slow earthquakes remain something of a mystery. They occur at plate boundaries and can last from days to months, often going undetected because they unfold so slowly and quietly.

They typically happen in areas where faults are locked by frictional resistance, and scientists believe they may precede major fast quakes. Japan’s magnitude 9.0 earthquake in 2011, which triggered a tsunami and the Fukushima nuclear disaster, followed two slow earthquakes along the Japan Trench.

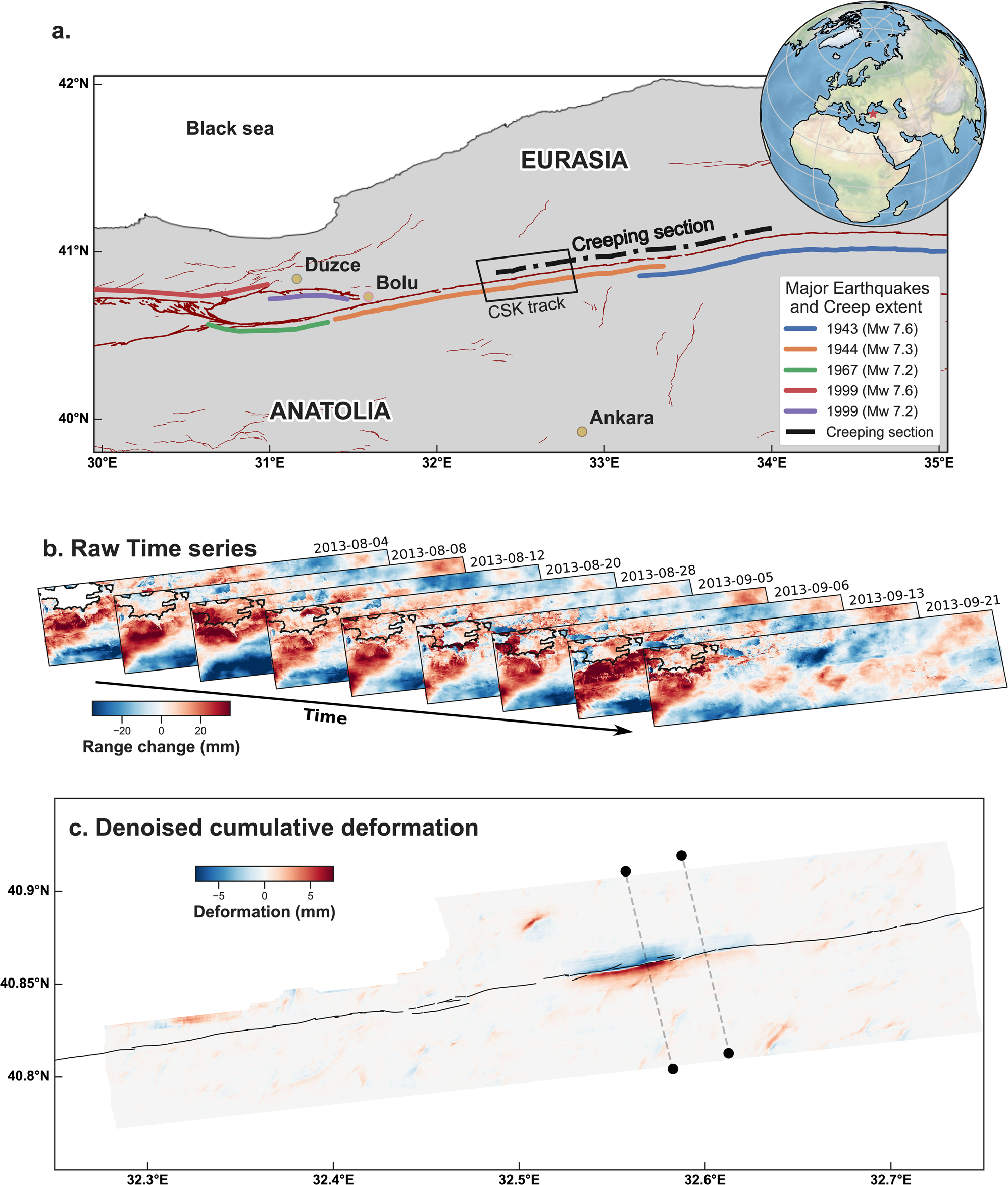

Scientists can track earthquake behavior with InSAR satellite data. Radar waves penetrate clouds and work just as well at night, making it possible to monitor ground deformation continuously. By comparing radar images of the same area taken at different times, researchers can detect movement of the ground surface.
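As a rough illustration of why comparing two radar images reveals ground movement: in repeat-pass interferometry, the phase difference Δφ between two acquisitions maps to line-of-sight displacement d = λ·Δφ / (4π), where λ is the radar wavelength and the 4π accounts for the two-way path to the ground and back. The minimal sketch below assumes a C-band wavelength of about 5.55 cm (the band used by ESA's Sentinel-1 satellites) and ignores sign conventions, phase unwrapping, and atmospheric and orbital corrections, all of which real InSAR processing must handle. The function names are hypothetical.

```python
import math

# Assumed wavelength: C-band at 5.405 GHz (Sentinel-1's centre frequency), ~5.55 cm.
C_BAND_WAVELENGTH_M = 299_792_458 / 5.405e9


def phase_to_los_displacement(delta_phase_rad: float,
                              wavelength_m: float = C_BAND_WAVELENGTH_M) -> float:
    """Convert an unwrapped phase difference (radians) into line-of-sight
    displacement (metres). The 4*pi factor reflects the two-way radar path.
    Sign conventions differ between processing tools and are ignored here."""
    return wavelength_m * delta_phase_rad / (4 * math.pi)


def displacement_to_phase(displacement_m: float,
                          wavelength_m: float = C_BAND_WAVELENGTH_M) -> float:
    """Inverse conversion: how much phase a given displacement produces."""
    return 4 * math.pi * displacement_m / wavelength_m


if __name__ == "__main__":
    # One full fringe (2*pi of phase) corresponds to half a wavelength.
    print(f"one fringe = {phase_to_los_displacement(2 * math.pi) * 100:.2f} cm")
    # A 2 mm motion produces only a small fraction of a fringe.
    print(f"2 mm -> {displacement_to_phase(0.002):.2f} rad")
```

Under these assumptions a full fringe corresponds to roughly 2.8 cm of motion, while a 2 mm shift changes the phase by under half a radian, easily masked by atmospheric noise. That gap is one reason millimeter-scale signals are hard to pick out by eye.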

But these movements are small, and existing approaches can only reliably measure ground deformation of a few centimeters or more. Ongoing monitoring of global fault systems also generates massive data streams, far too large to interpret manually.

The researchers created deep learning models that address both of these limitations. The team trained convolutional neural networks on several million time series of synthetic InSAR data to automatically detect and extract ground deformation.
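The article does not say how the synthetic training data were built, so the following is only a hypothetical toy generator illustrating the general idea: a known deformation signal is buried in noise, giving a (noisy input, clean target) pair that a network can learn from. It assumes a 1D profile of pixels across a fault, a slow-slip event that ramps in over two time steps, and Gaussian noise standing in for atmospheric and other nuisance signals. All names and parameter values here are illustrative assumptions, not the Los Alamos method.

```python
import math
import random
from typing import List, Optional, Tuple

Series = List[List[float]]  # indexed [time][pixel]; line-of-sight displacement in metres


def synthetic_slow_slip_series(n_times: int = 9, n_pixels: int = 64,
                               slip_m: float = 0.002, noise_m: float = 0.003,
                               seed: Optional[int] = None) -> Tuple[Series, Series]:
    """Return (noisy, clean) for one hypothetical training example.

    A fault trace is placed at a random pixel; at a random time a slow-slip
    event ramps in, offsetting the two sides of the fault by `slip_m` in total.
    """
    rng = random.Random(seed)
    fault_x = rng.randrange(n_pixels // 4, 3 * n_pixels // 4)
    onset = rng.randrange(1, n_times - 2)  # leaves room for the ramp to finish
    duration = 2  # time steps over which the slip accumulates (assumed)

    clean: Series = []
    noisy: Series = []
    for t in range(n_times):
        # Fraction of the total slip reached at time t: 0 before onset, 1 after.
        frac = min(max((t - onset) / duration, 0.0), 1.0)
        row = []
        for x in range(n_pixels):
            # Smooth step across the fault trace: -1 on one side, +1 on the other.
            side = math.tanh((x - fault_x) / 2.0)
            row.append(0.5 * slip_m * frac * side)
        clean.append(row)
        # Gaussian noise is a crude stand-in for atmospheric and other nuisance signals.
        noisy.append([v + rng.gauss(0.0, noise_m) for v in row])
    return noisy, clean


if __name__ == "__main__":
    noisy, clean = synthetic_slow_slip_series(seed=0)
    offset = clean[-1][-1] - clean[-1][0]
    print(f"final offset across fault: {offset * 1000:.2f} mm")
```

In this toy setup the noise (3 mm) is larger than the 2 mm signal, so the offset is hard to see in any single noisy profile; a model trained on many such pairs would have to exploit the spatial and temporal structure of the signal to recover it. Real synthetic InSAR data would use 2D images and far more realistic deformation and noise models.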

The models were trained with the cuDNN-accelerated TensorFlow deep learning framework, distributed across multiple NVIDIA GPUs. The resulting method operates without prior knowledge of a fault’s location or slip behavior.

To test their approach, the researchers applied the algorithm to a time series built from images of the North Anatolian fault in Turkey. A major plate-boundary fault, it has ruptured several times in the past century.

Working at a finer temporal resolution, the algorithm identified previously undetected slip events, showing that slow earthquakes happen much more often than expected. It also spotted movement as small as two millimeters, a signal so subtle that experts would likely have overlooked it.

“The use of deep learning unlocks the detection on faults of deformation events an order of magnitude smaller than previously achieved manually. Observing many more slow slip events may, in turn, unveil their interaction with regular, dynamic earthquakes, including the potential nucleation of earthquakes with slow deformation,” Rouet-Leduc said.

The team is currently working on a follow-up study, testing a model on the San Andreas Fault, which extends roughly 750 miles through California. According to Rouet-Leduc, the model will soon be available on GitHub.

Read the published research in Nature Communications.

Read the press release.