Fifth-generation networks (5G) are ushering in a new era in wireless communications that delivers 1000X the bandwidth and 100X the speed at 1/10th the latency of 4G. 5G also allows for millions of connected devices per square km and is being deployed as an alternative to WiFi at edge locations like factories and retail stores. These applications demand a new network architecture that is fully software-defined, dynamically reconfigurable, easily deployed, and easily managed to guarantee a specific quality of service.

A 5G, cloud-native, radio access network (CloudRAN) is a software-defined computing architecture that brings real-time, high-bandwidth, low-latency access to 5G communications. CloudRANs are ideal for both centralized and distributed RAN architectures.

NVIDIA is uniquely positioned to deliver the tools needed to build a high-performance 5G CloudRAN with innovations across the full stack. The key components of an end-to-end (E2E) 5G CloudRAN edge computing system include NVIDIA V100, NVIDIA Mellanox SmartNICs, cloud-native system software (Kubernetes and containers), and software components such as the NVIDIA Aerial SDK.

Network system developers all over the world are now using the Aerial SDK to build massive multiple-input and multiple-output (MIMO)–capable, cloud-native RANs. They’re supporting a wide range of next-generation AI and IoT services, all on the same commercial off-the-shelf (COTS) server.

I/O communication

The advanced 5T for 5G technology embedded in the Mellanox ConnectX-6 Dx SmartNIC exceeds stringent industry-standard timing specifications for eCPRI-based RANs by ensuring clock accuracy of 16 ns or less. 5T for 5G enables packet-based, Ethernet RANs to provide precise time-stamping of packets for delivering highly accurate time references to 5G fronthaul and backhaul networks. That enables the networks to efficiently handle time-sensitive network traffic.

Unique features, such as eCPRI windowing, allow the transmission of eCPRI Ethernet packets from the distributed unit (DU) to the radio unit (RU) accurately and precisely within the 1 µs transmission window specified in the O-RAN specification.
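
As a rough illustration of what windowed transmission means, the sketch below checks whether a packet's actual transmit time lands inside a 1-microsecond window. The function name, the nanosecond timestamps, and the window model (the window opens at the scheduled time and closes 1 microsecond later) are assumptions made for illustration. They are not part of the ConnectX-6 Dx or O-RAN interfaces.

```python
WINDOW_NS = 1_000  # 1 microsecond window, the figure quoted in the text


def in_tx_window(scheduled_ns: int, actual_ns: int, window_ns: int = WINDOW_NS) -> bool:
    """Return True if actual_ns lies in [scheduled_ns, scheduled_ns + window_ns).

    Hypothetical helper: timestamps are integer nanoseconds on a shared clock.
    """
    return scheduled_ns <= actual_ns < scheduled_ns + window_ns


print(in_tx_window(1_000_000, 1_000_400))  # True: 400 ns after the window opens
print(in_tx_window(1_000_000, 1_001_200))  # False: 1200 ns is past the window
```

In a real deployment this decision is made in NIC hardware against a PTP-disciplined clock. The point of the sketch is only that a packet is either inside the window or it is late.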

The Accelerated Switching and Packet Processing (ASAP²) time-bound packet flow engine enables software-defined, hardware-accelerated, virtual network functions (VNF) and containerized network functions (CNF) to precisely steer traffic in the ingress and egress directions, as desired by network services and applications. Thus, timing reference, accuracy, and precision are extended to ASAP² and all other acceleration engines supported today by the ConnectX-6 Dx SmartNIC.

SmartNICs provide GPUDirect capability for better packet placement and pacing than traditional FPGAs. The GPU Data Plane Development Kit (DPDK) bypasses the OS and fills the DPDK queue with descriptors, which reduces the packet processing time.

Cloud stack

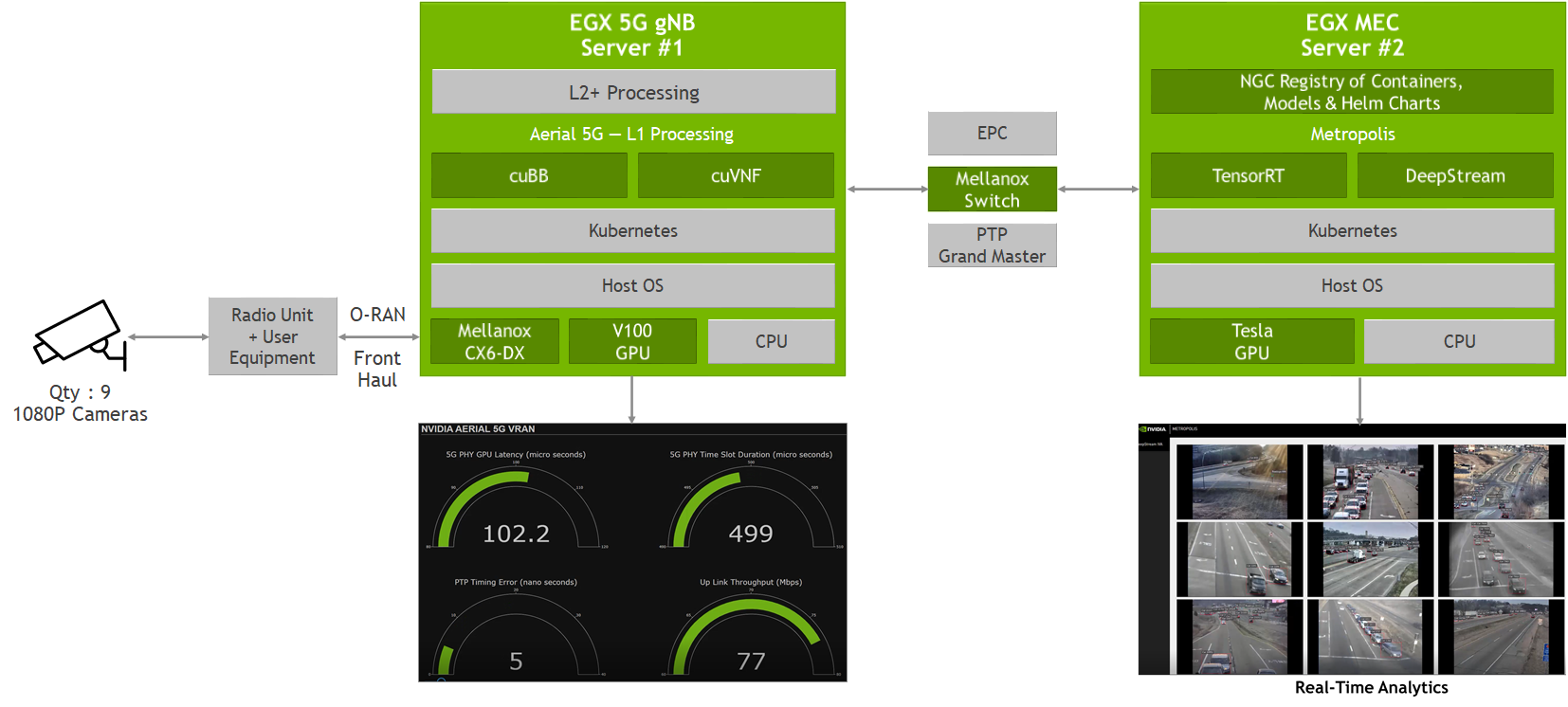

The E2E system design uses NVIDIA EGX servers for 5G signal processing and AI computation. The servers include an NVIDIA EGX optimized software stack on Dell infrastructure that features NVIDIA drivers, a Kubernetes plugin, a container runtime, and containerized AI frameworks and applications, including NVIDIA TensorRT, the Aerial SDK, and the NVIDIA DeepStream SDK.

The NVIDIA EGX platform is a high-performance, intelligent, edge computing platform that delivers AI, IoT, and 5G-based services efficiently, powerfully, and securely. A family of hardware and software products, NVIDIA EGX is composed of an easy-to-deploy, cloud native software stack, a range of edge servers and devices, and a vast ecosystem of partners who offer EGX through their products and services.

NVIDIA EGX allows for AI scalability from data center to edge, simplified IT management for edge deployments, and compatibility with the leading Kubernetes management platforms.

Aerial SDK

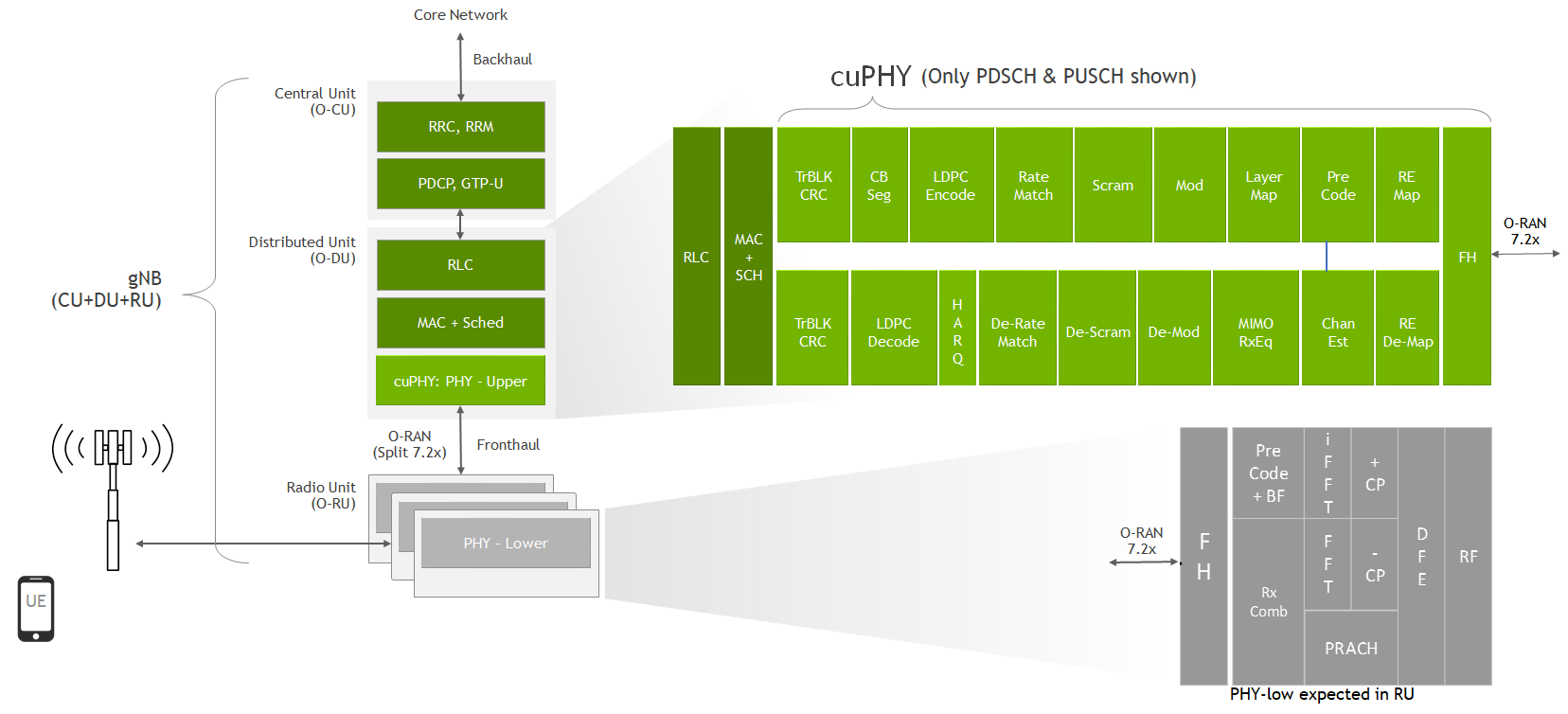

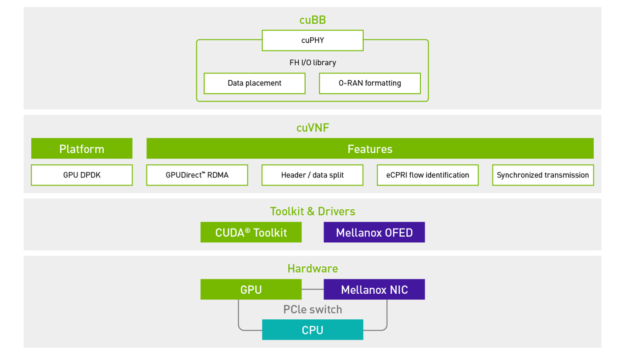

The Aerial SDK implements L1 PHY processing with the cuVNF and cuPHY SDKs.

The cuVNF SDK (CUDA-based VNFs) provides networking libraries and features to optimize packet placement and data transmission and reception, to and from the GPU memory.

Instead of traditional CPU host memory, GPUDirect Remote Direct Memory Access (RDMA) is used to store data, which eliminates unnecessary duplicate copies and decreases latency. By reducing the back and forth with the CPU memory, GPUDirect saves computation cycles for memory access. With cuVNF, you have header/data split functionality that allows agile packet filtering. The O-RAN format identification feature speeds up packet flow steering based on the MAC address.
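
To make the header/data split idea concrete, here is a minimal Python sketch that separates the headers of an eCPRI-over-Ethernet frame from its payload and reads the destination MAC address that steering could key on. It is not the cuVNF API: `split_frame` and its field names are hypothetical, and the sketch assumes an untagged frame (no VLAN tag) that carries the eCPRI EtherType `0xAEFE` followed by the 4-byte eCPRI common header.

```python
import struct

ETH_HEADER_LEN = 14          # dst MAC (6) + src MAC (6) + EtherType (2), no VLAN tag
ECPRI_ETHERTYPE = 0xAEFE     # EtherType used for eCPRI over Ethernet
ECPRI_COMMON_HEADER_LEN = 4  # revision/flags (1) + message type (1) + payload size (2)


def format_mac(raw: bytes) -> str:
    """Render a 6-byte MAC address as colon-separated hex."""
    return ":".join(f"{b:02x}" for b in raw)


def split_frame(frame: bytes):
    """Split an untagged eCPRI frame into (header fields, payload bytes)."""
    if len(frame) < ETH_HEADER_LEN + ECPRI_COMMON_HEADER_LEN:
        raise ValueError("frame too short for Ethernet + eCPRI headers")
    dst, src, ethertype = struct.unpack_from("!6s6sH", frame, 0)
    if ethertype != ECPRI_ETHERTYPE:
        raise ValueError(f"not an eCPRI frame: EtherType 0x{ethertype:04x}")
    first, msg_type, payload_size = struct.unpack_from("!BBH", frame, ETH_HEADER_LEN)
    header = {
        "dst_mac": format_mac(dst),
        "src_mac": format_mac(src),
        "revision": first >> 4,        # upper 4 bits of the first eCPRI byte
        "msg_type": msg_type,          # 0 = IQ data, 2 = real-time control data
        "payload_size": payload_size,  # size field as carried in the header
    }
    payload = frame[ETH_HEADER_LEN + ECPRI_COMMON_HEADER_LEN:]
    return header, payload
```

The header fields are small and can be inspected for filtering or steering, while the payload (the bulk of the bytes) can stay wherever it was placed. In the architecture described here, that placement would be GPU memory.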

Traditional COTS-based solutions require static-function hardware to accelerate portions of the 5G physical layer pipeline. This traditional “look-aside” architecture results in repeated transfers of data in and out of local CPU caches and across bandwidth-constrained PCIe buses, causing system-level, performance-limiting bottlenecks.

The Aerial SDK takes a unique approach to accelerate the workload of the 5G physical layer by processing the entire pipeline on the GPU. This “inline” architecture allows the physical layer data to remain within the GPU’s high-performance memory subsystem and keep the GPU processing engines operating at peak efficiency. Full physical layer acceleration employed by cuPHY makes RAN implementation possible on a COTS-based platform due to the GPU’s unmatched performance scalability and ease of programming.

E2E system

This E2E system demonstrates the most advanced telco transformation, showcasing the value of the NVIDIA GPU-based, programmable, cloud-native, and scalable edge compute platform for telcos. EGX-based platforms deliver new AI services to consumers and businesses while supporting the deployment of 5G and virtual RAN infrastructures. This flexibility enables the convergence of B2C/B2B applications and network services in a single COTS platform.

This is the NVIDIA vision for the EGX A100 converged accelerator family, a new family of products that combines the latest NVIDIA Ampere architecture with a Mellanox ConnectX-6 Dx SmartNIC. It can turn any server into a secure edge supercomputing platform capable of delivering unprecedented AI performance at the edge while handling the most demanding 5G use cases.

One such use case is CloudRAN and MEC convergence. For this E2E configuration, the traffic cameras stream using the Real-Time Streaming Protocol (RTSP). The streams enter the UE-EM, which is a combination of user equipment (UE) and O-RAN RU (O-RU). The UE stack processes these camera packets and sends data to the O-RU, which performs the lower PHY functionality. Using the control and user planes of the O-RAN fronthaul protocol, the O-RU transports the IQ waveform to the 5G gNB, where it is received by the Mellanox ConnectX-6 Dx SmartNIC. Along with the Mellanox SmartNIC, the 5G gNB contains the Aerial SDK and a third-party L2+ stack within a Docker container. Strict system timing is maintained by a PTP Grandmaster.

The Aerial SDK is the world’s first GPU-based, software-defined virtualized RAN. Inline acceleration reduces latency by streamlining packet movement within the GPU.

- Aerial 5G cuBB (CUDA Baseband) performs physical layer signal processing and provides transport packets to the third-party L2+ stack.

- The L2+ stack performs the MAC, RLC, and PDCP functionality and interfaces to the core network (EPC).

- The EPC provides GTP-U tunneling by adding appropriate headers to the packets.

- These packets are then sent to the DeepStream SDK for video codec and analytics.

- DeepStream receives the RTSP input, then runs a pretrained ResNet model on input streams to perform object detection on cars, pedestrians, and road signs, applying bounding boxes to the identified objects.

- DeepStream re-streams analyzed output to the web app using RTSP for display.
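
The GTP-U tunneling step in the list above can be sketched in a few lines of Python. This is an illustration, not code from the EPC or the Aerial SDK: the function names are hypothetical, and the sketch builds only the mandatory 8-byte GTPv1-U header (no sequence number or extension headers) and omits the outer UDP/IP headers that would carry it on port 2152.

```python
import struct

GTPU_FLAGS = 0x30  # version 1, protocol type GTP, no optional fields
GTPU_GPDU = 0xFF   # message type for a G-PDU (an encapsulated user packet)
GTPU_HEADER_LEN = 8


def gtpu_encapsulate(teid: int, inner_packet: bytes) -> bytes:
    """Prepend a minimal GTP-U header carrying the tunnel endpoint ID (TEID)."""
    if len(inner_packet) > 0xFFFF:
        raise ValueError("inner packet too large for the 16-bit length field")
    # The length field counts the bytes that follow the mandatory 8-byte header.
    header = struct.pack("!BBHI", GTPU_FLAGS, GTPU_GPDU, len(inner_packet), teid)
    return header + inner_packet


def gtpu_decapsulate(packet: bytes):
    """Return (teid, inner_packet) from a minimal GTP-U G-PDU."""
    flags, msg_type, length, teid = struct.unpack_from("!BBHI", packet, 0)
    if flags != GTPU_FLAGS or msg_type != GTPU_GPDU:
        raise ValueError("only minimal G-PDU headers are handled in this sketch")
    return teid, packet[GTPU_HEADER_LEN:GTPU_HEADER_LEN + length]


tunneled = gtpu_encapsulate(0x1234, b"hello")
print(tunneled.hex())  # 30ff00050000123468656c6c6f
```

The TEID identifies which tunnel a user packet belongs to, so the receiving side can strip the header and forward the original packet. In this pipeline, that packet is the camera's RTSP traffic.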

Implementing CloudRAN on GPUs with CUDA programming speeds up the matrix calculations used by the 5G PHY layer functions. The Aerial SDK supports up to 16 downlink MIMO layers and 8 uplink MIMO layers. Spectral efficiency increases, as each layer is essentially a parallel transmission channel transmitted at the same time. With a broader frequency spectrum, higher data rates are achieved for the system configuration.
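
The "parallel channel" view of MIMO layers can be illustrated with an idealized calculation. The sketch below sums the Shannon capacity of each layer, assuming the layers are perfectly independent and each sees its own signal-to-noise ratio. The function name and the example bandwidth and SNR values are assumptions chosen for illustration. This is a theoretical upper bound, not a measurement of the Aerial SDK.

```python
import math


def aggregate_capacity_bps(bandwidth_hz: float, snr_db_per_layer) -> float:
    """Sum of per-layer Shannon capacities, B * log2(1 + SNR), in bits per second.

    Idealized model: layers are treated as independent parallel channels.
    """
    return sum(
        bandwidth_hz * math.log2(1 + 10 ** (snr_db / 10))
        for snr_db in snr_db_per_layer
    )


# Hypothetical example: 100 MHz of spectrum, every layer at 20 dB SNR.
one_layer = aggregate_capacity_bps(100e6, [20.0])
sixteen_layers = aggregate_capacity_bps(100e6, [20.0] * 16)
print(f"1 layer:   {one_layer / 1e6:.0f} Mbit/s")
print(f"16 layers: {sixteen_layers / 1e6:.0f} Mbit/s")
```

Under these assumptions the bound grows linearly with both the number of layers and the bandwidth, which is the intuition behind the two claims in the paragraph above. Real channels have correlated layers and lower per-layer SNR, so practical gains are smaller.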

With the NVIDIA E2E system, telcos can build this new network architecture by taking advantage of network function virtualization and cloud native infrastructure. For more information and to be a part of the CloudRAN developer community, see NVIDIA Aerial SDK.

You can download the Aerial SDK and run your AI application at the edge using the EGX stack. For more information about the new 5T for 5G technology, follow the 5T for 5G news.