This post was originally published on the Mellanox blog.

In the first post of this series, I argued that it is function, not form, that distinguishes a SmartNIC from a data processing unit (DPU). I introduced the category of datacenter NICs called SmartNICs, which include both hardware transport and a programmable data path for virtual switch acceleration. These capabilities are necessary but not sufficient for a NIC to be a DPU. A true DPU must also include an easily extensible, C-programmable Linux environment that enables datacenter architects to virtualize all resources in the cloud and make them appear as local. To understand why DPUs need this, I go back to what created the need for DPUs in the first place.

Why the world needs DPUs

One of the most important reasons why the world needs DPUs is that modern workloads and datacenter designs impose too much networking overhead on the CPU cores. With faster networking (now up to 200 Gb/s per link), the CPU spends too many of its valuable cycles classifying, tracking, and steering network traffic. These expensive CPU cores are designed for general-purpose application processing, and the last thing needed is to consume all this processing power simply looking at and managing the movement of data. After all, application processing that analyzes data and produces results is where the real value creation occurs.
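To put the 200 Gb/s figure in perspective, here is a rough back-of-the-envelope sketch of the per-packet budget a single core would have if it had to touch every packet itself. The 3 GHz clock rate and the two frame sizes are my assumptions for illustration, not figures from a benchmark; the 20 bytes of per-frame wire overhead is the standard Ethernet preamble plus inter-frame gap.

```python
# Rough per-packet cycle budget for one core at line rate.
# Assumptions (not from the post): a 3 GHz core and standard Ethernet framing.

LINK_BPS = 200e9      # 200 Gb/s link, as cited in the text
CORE_HZ = 3e9         # assumed clock rate of a single CPU core
WIRE_OVERHEAD = 20    # preamble + start delimiter (8 B) + inter-frame gap (12 B)


def packets_per_second(frame_bytes: int, link_bps: float = LINK_BPS) -> float:
    """Maximum frames per second at line rate for a given Ethernet frame size."""
    return link_bps / ((frame_bytes + WIRE_OVERHEAD) * 8)


def cycles_per_packet(frame_bytes: int, core_hz: float = CORE_HZ) -> float:
    """Cycles one core could spend per packet if it alone handled every packet."""
    return core_hz / packets_per_second(frame_bytes)


if __name__ == "__main__":
    for size in (64, 1518):  # minimum and maximum standard frame sizes
        pps = packets_per_second(size)
        print(f"{size:>5} B frames: {pps / 1e6:6.1f} Mpps, "
              f"{cycles_per_packet(size):6.1f} cycles/packet on one core")
```

Under these assumptions, one core would have roughly 10 cycles per minimum-size packet and about 185 cycles per full-size frame, which is little room for classification and steering, let alone application work.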

The introduction of compute virtualization makes this problem worse, as it creates more traffic on the server both internally, between VMs or containers, and externally, to other servers or storage. Applications such as software-defined storage (SDS), hyperconverged infrastructure (HCI), and big data also increase the amount of east-west traffic between servers, whether virtual or physical, and Remote Direct Memory Access (RDMA) is often used to accelerate data transfers between servers.

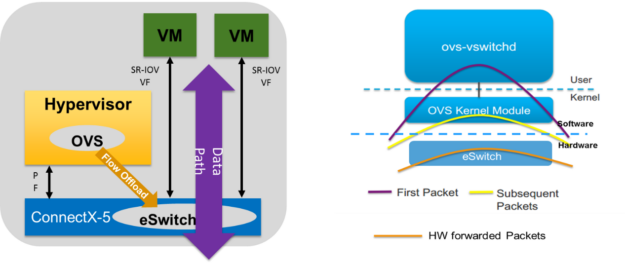

Traffic keeps increasing, and the use of overlay networks such as VXLAN, NVGRE, or GENEVE, increasingly popular for public and private clouds, complicates the network further by introducing layers of encapsulation. Software-defined networking (SDN) imposes additional packet steering and processing requirements and burdens the CPU with even more work, such as running Open vSwitch (OVS).
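As a concrete picture of what one layer of encapsulation means, the following minimal sketch builds and parses the 8-byte VXLAN header described in RFC 7348 and adds up the per-packet overhead for VXLAN over IPv4. The function names are mine, chosen for illustration; a real implementation would also build the outer Ethernet, IP, and UDP headers, which is exactly the per-packet work that is attractive to offload.

```python
import struct

VXLAN_UDP_PORT = 4789         # IANA-assigned destination port for VXLAN
VXLAN_FLAG_VNI_VALID = 0x08   # "I" flag: the VNI field is valid


def vxlan_header(vni: int) -> bytes:
    """Build the 8-byte VXLAN header for a 24-bit VXLAN network identifier."""
    if not 0 <= vni < 2**24:
        raise ValueError("VNI must fit in 24 bits")
    # Byte 0: flags, bytes 1-3: reserved, bytes 4-6: VNI, byte 7: reserved.
    return struct.pack("!II", VXLAN_FLAG_VNI_VALID << 24, vni << 8)


def parse_vni(header: bytes) -> int:
    """Extract the VNI from a VXLAN header, checking the valid-VNI flag."""
    flags_word, vni_word = struct.unpack("!II", header[:8])
    if not (flags_word >> 24) & VXLAN_FLAG_VNI_VALID:
        raise ValueError("VNI flag not set")
    return vni_word >> 8


# Bytes added in front of every inner frame (IPv4 outer header, no outer VLAN tag).
OUTER_ETHERNET, OUTER_IPV4, OUTER_UDP, VXLAN_HDR = 14, 20, 8, 8
ENCAP_OVERHEAD = OUTER_ETHERNET + OUTER_IPV4 + OUTER_UDP + VXLAN_HDR

if __name__ == "__main__":
    header = vxlan_header(5000)
    print(header.hex(), parse_vni(header), ENCAP_OVERHEAD)
```

Every packet entering or leaving the overlay needs this wrapping or unwrapping, so the cost scales directly with packet rate.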

DPUs can handle all this virtualization (SR-IOV, RDMA, overlay network traffic encapsulation, OVS offload) faster, more efficiently, and at lower cost than standard CPUs.

Another reason: Security isolation

Sometimes, you might want to isolate the networking from the CPU for security reasons. The network is the most likely vector for a hacker attack or malware intrusion and the first place you’d look to detect or stop a hack. It’s also the most likely place to implement in-line encryption.

The DPU, being a NIC, is the first, easiest, and best place to inspect network traffic, block attacks, and encrypt transmissions. This has both performance and security benefits, as it eliminates the frequent need to route all incoming and outgoing data back to the CPU and across the PCIe bus. It also provides security isolation by running separately from the main CPU: the DPU can detect or block attacks without immediately involving the CPU, and it can still do so even if the main CPU is compromised.

For more information about the security benefits of a DPU, see Foreshadowing the Future of Security Meltdowns and the Spectre of a Breach.

Virtualizing storage and the cloud

A newer use case for DPUs is to virtualize software-defined storage, hyperconverged infrastructure, and other cloud resources. Before the virtualization explosion, most servers just ran local storage, which is not always efficient but is easy to consume. Every OS, application, and hypervisor knows how to use local storage.

Then came the rise of network storage: SAN, NAS, and more recently NVMe over Fabrics (NVMe-oF). However, not every application is natively SAN-aware. Some operating systems and hypervisors, like Windows and VMware, don’t speak NVMe-oF yet. A DPU can make networked storage, which is more efficient and easier to manage, look like local storage, which is easier for applications to consume. A DPU could even virtualize GPUs or other neural network processors so that any server can access as many GPUs as it needs whenever it needs them, over the network.

A similar advantage applies to software-defined storage and hyperconverged infrastructure. Both use a management layer, often running as a VM or as a part of the hypervisor itself, to virtualize and abstract the local storage and the network to make it available to other servers or clients across the cluster. This is wonderful for rapid deployments on commodity servers and is good at sharing storage resources. However, the layer of management and virtualization soaks up many CPU cycles that should be running the applications. As with standard servers, the faster the networking runs and the faster the storage devices are, the more CPU must be devoted to virtualizing these resources.

Here again is where the intelligent DPU creates efficiencies. First, DPUs offload and help virtualize the networking. They accelerate the private and public cloud, which is why they are sometimes called CloudNICs. They can offload both the networking and much or all of the storage virtualization. DPUs can also offload a wide variety of functions for SDS and HCI, such as compression, encryption, deduplication, RAID, reporting, and so on. This is all in the name of sending more expensive CPU cores back to what they do best: running applications.

Must have hardware acceleration

Having covered the major DPU use cases, you know when you need them and where they can provide the greatest benefit. They must be able to accelerate and offload network traffic. They also might need to virtualize storage resources, share GPUs over the network, support RDMA, and perform encryption.

Now what are the top DPU requirements? First, all DPUs must have hardware acceleration. Hardware acceleration offers the best performance and efficiency, which also means more offloading with less spending. The ability to have dedicated hardware for certain functions is key to the justification for a DPU.

Must be programmable

For the best performance, most of the acceleration functions must run on hardware. For the greatest flexibility, the control and programming of these functions must run in software.

There are many functions that could be programmed on a DPU, a few of which are outlined in the feature table of my previous post. Usually, the specific offload methods, encryption algorithms, and transport mechanisms don’t change much, but the routing rules, flow tables, encryption keys, and network addresses change all the time. The former functions are the data plane and the latter functions are the control plane. The data plane rules and algorithms can be coded into silicon after they are standardized and established. The control plane rules and programming change too quickly to be hard-coded in silicon but can be run on an FPGA (modified occasionally, but with difficulty) or in a C-programmable Linux environment (modified easily and often).
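The split between a stable data plane and a frequently changing control plane can be sketched as a toy match-action flow table. This is only an illustration of the idea, loosely modeled on how virtual switches fall back to software on a table miss: the class names, the three-field match key, and the "allow only port 443" policy are all hypothetical, and a Python dictionary stands in for what would be a hardware table on a real adapter.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

FlowKey = Tuple[str, str, int]  # (src_ip, dst_ip, dst_port): a simplified match


@dataclass
class FlowTable:
    """Data plane stand-in: a fixed lookup mechanism that rarely changes."""
    rules: Dict[FlowKey, str] = field(default_factory=dict)
    hits: int = 0
    misses: int = 0

    def lookup(self, key: FlowKey) -> Optional[str]:
        action = self.rules.get(key)
        if action is None:
            self.misses += 1
        else:
            self.hits += 1
        return action


class ControlPlane:
    """Control plane stand-in: policy that changes often and lives in software."""

    def __init__(self, table: FlowTable) -> None:
        self.table = table

    def decide(self, key: FlowKey) -> str:
        # Hypothetical policy for illustration: forward HTTPS, drop the rest.
        return "forward" if key[2] == 443 else "drop"

    def handle_miss(self, key: FlowKey) -> str:
        action = self.decide(key)
        self.table.rules[key] = action  # install the rule for later packets
        return action


def process_packet(key: FlowKey, table: FlowTable, control: ControlPlane) -> str:
    """Fast path first; only the first packet of a flow reaches the control plane."""
    action = table.lookup(key)
    if action is None:
        action = control.handle_miss(key)
    return action
```

In this sketch, changing the policy means editing `decide` only; the lookup path stays untouched, which mirrors why the lookup can be committed to silicon while the rules cannot.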

| DPU function | Use case | Run in hardware (data plane) | Run in software (control plane) |
| --- | --- | --- | --- |
| Packet inspection | Intrusion detection, firewall | Packet filtering, header inspection and rewrite | Rules, reporting, packet content inspection |
| Flow table processing | vRouter, OVS, firewall | Packet switching | Define switching rules and flow tables |
| Encryption | Security, privacy | Encryption/decryption | Key management |
| RDMA | Faster networking | Transport, networking | Addressing, connections |
| DPDK/OVS | NFV | Packet switching | Rules, reporting |
| VXLAN overlays | Private/public cloud | Encapsulation/decapsulation, VTEP | Overlay definitions |
| NVMe-oF | Flash storage | NVMe-oF protocol, RDMA | Connection setup, RAID, provisioning |

How much programming has to live on the DPU?

You have a choice on how much of a DPU’s programming is done on the adapter. That is, the adapter’s handling of packets must be hardware-accelerated and programmable, but the control of that programming can live on the adapter or elsewhere. If it’s the former, we say the adapter has a programmable data plane for executing the packet processing rules and control plane for setting up and managing the rules. In the latter case, the adapter only does the data plane while the control plane lives somewhere else, like the CPU.

For example, with Open vSwitch, the packet switching can be done in software or hardware, and the control plane can run on the CPU or on the DPU. With a regular foundational, or dumb, NIC, all the switching and control is done by software on the CPU. With a SmartNIC, the switching runs on the adapter’s ASIC but the control is still on the CPU. With a true DPU, the switching is done by ASIC-type hardware on the adapter while the control plane also runs on the adapter in easily programmable Arm cores.
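The three Open vSwitch cases above can be written down as a small lookup table. This is just a restatement of the paragraph in code form; the enum and dictionary names are mine, not part of any product API.

```python
from enum import Enum
from typing import Dict, List, NamedTuple


class Location(Enum):
    HOST_CPU = "software on the host CPU"
    ADAPTER_ASIC = "ASIC-type hardware on the adapter"
    ADAPTER_ARM = "Arm cores on the adapter"


class Placement(NamedTuple):
    switching: Location  # where packets are switched (data plane)
    control: Location    # where rules are set up and managed (control plane)


# Where each plane runs for Open vSwitch, per the description in the text.
OVS_PLACEMENT: Dict[str, Placement] = {
    "foundational NIC": Placement(Location.HOST_CPU, Location.HOST_CPU),
    "SmartNIC": Placement(Location.ADAPTER_ASIC, Location.HOST_CPU),
    "DPU": Placement(Location.ADAPTER_ASIC, Location.ADAPTER_ARM),
}


def work_left_on_host(adapter: str) -> List[str]:
    """List which planes still consume host CPU cycles for a given adapter type."""
    placement = OVS_PLACEMENT[adapter]
    return [plane for plane, where in placement._asdict().items()
            if where is Location.HOST_CPU]


if __name__ == "__main__":
    for adapter in OVS_PLACEMENT:
        print(f"{adapter}: host CPU still runs {work_left_on_host(adapter) or 'nothing'}")
```

Reading the table top to bottom shows the progression: each step moves one more plane off the host CPU.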

Which is best, DPU or SmartNIC?

To achieve application efficiency in the datacenter, both transport offload and a programmable data path with hardware offload for virtual switching are vital functions. According to the definition, these functions are part of a SmartNIC and are table stakes on the path to a DPU. However, just transport and programmable virtual switching offload by themselves don’t raise a SmartNIC to the level of a DPU.

Customers often tell us they must have a DPU because they need programmable virtual switching hardware acceleration. This is mainly because a competing vendor with an expensive, barely programmable offering has told them a “DPU” is the only way to achieve this. In this case, we are happy to deliver the same functionality with the ConnectX family of SmartNICs, which are very smart NICs after all.

But by my reckoning, there are a few more things required to take a NIC to the exalted level of a DPU, such as running the control plane on the NIC and offering C-programmability with a Linux environment. In those cases, we’re proud to offer the BlueField DPU, which includes all the smarter NIC features of ConnectX adapters plus 4 to 16 64-bit Arm cores, all running Linux, of course, and easily programmable.

As you plan your next infrastructure build-out or refresh, remember these key points:

- DPUs are increasingly useful for offloading networking functions and virtualizing resources like storage, networking, and GPUs.

- SmartNICs (or smarter NICs) accelerate data plane tasks in hardware but run the control plane in software.

- The control plane software and other management software can run on the regular CPU or on a DPU.

- NVIDIA offers best-in-class, intelligent SmartNICs (ConnectX), FPGA NICs (Innova), and fully programmable data plane/control plane DPUs (BlueField programmable DPU).

For more information, see the following resources: