Computer vision is a rapidly growing field in research and applications. Advances in computer vision research are now more directly and immediately applicable to the commercial world.

AI developers are implementing computer vision solutions that identify and classify objects and even react to them in real time. Image classification, face detection, pose estimation, and optical flow are some of the typical tasks. Computer vision engineers are a subset of deep learning (DL) or machine learning (ML) engineers who program computer vision algorithms to accomplish these tasks.

The structure of DL algorithms lends itself well to solving computer vision problems. The architectural characteristics of convolutional neural networks (CNNs) enable the detection and extraction of spatial patterns and features present in visual data.
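As a rough illustration of how a convolutional layer picks up spatial patterns, the following minimal sketch slides a small kernel over a toy image. The image values, the `conv2d` helper, and the hand-picked Sobel kernel are assumptions made for illustration; a trained CNN learns its kernels from data rather than using fixed ones.

```python
def conv2d(image, kernel):
    """Valid (no padding) 2D cross-correlation, the core operation of a CNN layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            # Weighted sum of the image patch under the kernel.
            for di in range(kh):
                for dj in range(kw):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


# Toy 5x5 grayscale image (made-up values): dark on the left, bright on the right.
image = [[0, 0, 1, 1, 1] for _ in range(5)]

# Hand-picked vertical-edge kernel (Sobel), standing in for a learned filter.
sobel_x = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]

feature_map = conv2d(image, sobel_x)
for row in feature_map:
    print(row)
```

The feature map responds strongly where the window straddles the dark-to-bright boundary and is zero over the uniform region, which is the sense in which a convolution extracts a spatial feature.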

The field of computer vision is rapidly transforming industries like automotive, healthcare, and robotics, and it can be difficult to stay up to date on the latest discoveries, trends, and advancements. This post highlights the core technologies that are influencing and will continue to shape the future of computer vision development in 2022 and beyond:

- Cloud computing services that help scale DL solutions.

- Automated ML (AutoML) solutions that reduce the repetitive work required in a standard ML pipeline.

- Transformer architectures developed by researchers that optimize computer vision tasks.

- Mobile devices incorporating computer vision technology.

Cloud computing

Cloud computing provides data storage, application servers, networks, and other computer system infrastructure to individuals or businesses over the internet. Cloud computing solutions offer quick, cost-effective, and scalable on-demand resources.

Storage and high processing power are required for most ML solutions. The early-phase development of dataset management (aggregation, cleaning, and wrangling) often requires cloud computing resources for storage or access to solution applications like BigQuery, Hadoop, or Bigtable.

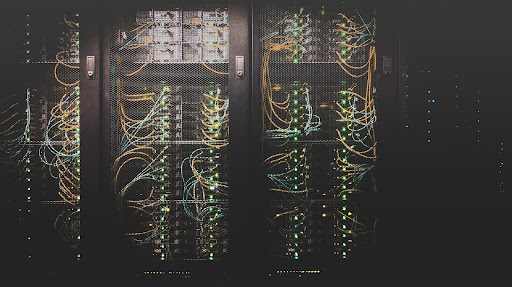

(Photo by Taylor Vick on Unsplash)

Recently, there has been a notable increase in devices and systems enabled with computer vision capabilities, such as pose estimation for gait analysis, face recognition for smartphones, and lane detection in autonomous vehicles.

The demand for cloud storage is growing rapidly, and it is projected that this industry will be valued at $390.33B, five times the market’s value in 2021. The increased market size will lead to an increase in the use of inbound data to train ML models. This correlates directly with larger data storage capacity requirements and increasingly powerful compute resources.

GPU availability has accelerated computer vision solutions. However, GPUs alone aren’t always enough to provide the scalability and uptime required by these applications, especially when servicing thousands or even millions of consumers. Cloud computing provides the resources needed to start up and to fill gaps in existing on-premises infrastructure.

Cloud computing platforms, including Amazon Web Services (AWS), Google Cloud Platform (GCP), and Microsoft Azure, provide end-to-end solutions to core components of the ML and data science project pipeline, including data aggregation, model implementation, deployment, and monitoring. For computer vision developers designing vision systems, it’s important to be aware of these major cloud service providers, their strengths, and how they can be configured to meet specific and complex pipeline needs.

Computer vision at scale requires cloud service integration

The following are examples of NVIDIA services that support typical computer vision systems.

The NGC Catalog of pretrained DL models reduces the complexity of model training and implementation.

DL scripts provide ready-made customizable pipelines. The robust model deployment solution automates delivery to end users.

NVIDIA Triton Inference Server enables the deployment of models from frameworks such as TensorFlow and PyTorch on any GPU- or CPU-based infrastructure. Triton Inference Server provides scalability of models across various platforms, including cloud, edge, and embedded devices.

The NVIDIA partnership with cloud service providers such as AWS enables the deployment of computer vision-based assets, so computer vision engineers can focus more on model performance and optimization.

Businesses reduce costs and optimize strategies wherever feasible. Cloud computing and cloud service providers support both goals by billing based on usage and scaling based on demand.

AutoML

ML algorithms and model development involve a number of tasks that can benefit from automation, like feature engineering and model selection.

Feature engineering involves the detection and selection of relevant characteristics, properties, and attributes from datasets.

Model selection involves evaluating the performance of a group of ML classifiers, algorithms, or solutions to a given problem.

Both feature engineering and model selection activities require considerable time for ML engineers and data scientists to complete. Software developers frequently revisit these phases of the workflow to enhance model performance or accuracy.
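To make the model selection step concrete, here is a minimal sketch that scores two candidate classifiers with k-fold cross-validation and keeps the better one. The toy dataset, the two tiny classifiers, and the helper names (`majority_classifier`, `nearest_neighbor_classifier`, `cross_val_accuracy`) are all assumptions made for illustration. In practice, this loop would run over real models from an ML library, and it is the kind of loop that AutoML tools automate.

```python
def majority_classifier(train):
    """Baseline: always predict the most common training label."""
    labels = [label for _, label in train]
    winner = max(sorted(set(labels)), key=labels.count)
    return lambda x: winner


def nearest_neighbor_classifier(train):
    """1-nearest neighbor: predict the label of the closest training point."""
    def predict(x):
        _, label = min(train, key=lambda pair: abs(pair[0] - x))
        return label
    return predict


def cross_val_accuracy(make_classifier, data, k=4):
    """Mean accuracy over k folds; fold i holds out every k-th sample."""
    fold_scores = []
    for fold in range(k):
        held_out = data[fold::k]
        train = [pair for idx, pair in enumerate(data) if idx % k != fold]
        predict = make_classifier(train)
        correct = sum(predict(x) == y for x, y in held_out)
        fold_scores.append(correct / len(held_out))
    return sum(fold_scores) / k


# Toy 1D dataset of (feature, label) pairs; the numbers are made up.
data = [(0.1, 0), (0.3, 0), (0.4, 0), (0.5, 0),
        (1.1, 1), (1.2, 1), (1.4, 1), (1.6, 1)]

candidates = {
    "majority": majority_classifier,
    "1-nn": nearest_neighbor_classifier,
}
scores = {name: cross_val_accuracy(make, data) for name, make in candidates.items()}
best = max(scores, key=scores.get)
print(scores, "->", best)
```

Each candidate is trained and scored on the same folds, so the comparison is like for like. Repeating this for every new candidate or dataset revision is where the time cost described above comes from.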

(Photo by Stephen Dawson on Unsplash)

There are several large ongoing projects to simplify the intricacies of an ML project pipeline. AutoML focuses on automating and augmenting workflows and their procedures to make ML easy, accessible, and less manually intensive for non-ML experts.

Projections expect the AutoML market to reach $14 billion by 2030, which would be roughly 42x its current value.

This particular marriage of ML and automation is gaining traction, but there are limitations.

AutoML in practice

AutoML saves data scientists and computer engineers time. AutoML capabilities enable computer vision developers to dedicate more effort to other phases of the computer vision development pipeline that best use their skillset like model training, evaluation, and deployment. AutoML helps accelerate data aggregation, preparation, and hyperparameter optimization, but these parts of the workflow still require human input.

Data preparation and aggregation are needed to build the right model, but they are repetitive, time-consuming tasks that depend on locating appropriate, high-quality data sources.

Likewise, hyperparameter tuning can take many iterations to reach the right algorithm performance. It involves a trial-and-error process with educated guesses. The repeated work that goes into finding the appropriate hyperparameters can be tedious, but it is critical for enabling the model’s training to achieve the desired accuracy.
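The trial-and-error loop described above can be sketched as a simple random search. This is only an illustrative sketch: `validation_loss` is a hypothetical stand-in for training a model and measuring its validation loss, and the search ranges are assumptions chosen for the example, not recommendations.

```python
import math
import random


def validation_loss(learning_rate, batch_size):
    """Hypothetical stand-in for 'train a model and return its validation loss'.

    By construction, it is lowest near learning_rate=1e-3 and batch_size=64.
    """
    return (math.log10(learning_rate) + 3) ** 2 + (math.log2(batch_size) - 6) ** 2


def random_search(n_trials, seed=0):
    """Sample hyperparameters at random and keep the best-scoring trial."""
    rng = random.Random(seed)  # fixed seed so the search is repeatable
    trials = []
    for _ in range(n_trials):
        params = {
            # Sample the learning rate log-uniformly between 1e-5 and 1e-1.
            "learning_rate": 10 ** rng.uniform(-5, -1),
            "batch_size": rng.choice([16, 32, 64, 128, 256]),
        }
        trials.append((validation_loss(**params), params))
    best_loss, best_params = min(trials, key=lambda trial: trial[0])
    return best_loss, best_params, trials


best_loss, best_params, trials = random_search(n_trials=50)
print(f"best loss {best_loss:.4f} with {best_params}")
```

With a real model, every call to `validation_loss` would be a full training run, which is why automating and accelerating this loop saves so much time.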

For those interested in exploring GPU-powered AutoML, the widely used Tree-based Pipeline Optimization Tool (TPOT) is an automated ML library aimed at optimizing ML processes and pipelines through the utilization of genetic programming. RAPIDS cuML provides TPOT functionalities accelerated with GPU compute resources. For more information, see Faster AutoML with TPOT and RAPIDS.

Machine learning libraries and frameworks

ML libraries and frameworks are essential elements in any computer vision developer’s toolkit. Major DL libraries such as TensorFlow, PyTorch, Keras, and MXNet received continuous updates and fixes in 2021, and will likely continue to do so in the future.

More recently, there have been exciting advances in mobile-focused DL libraries and in packages that optimize commonly used DL libraries.

MediaPipe extended its pose estimation capabilities in 2021 to provide 3D pose estimation through the BlazePose model, and this solution is available in the browser and on mobile environments. In 2022, expect to see more pose estimation applications in use cases involving dynamic movement and those that require robust solutions, such as motion analysis in dance and virtual character motion simulation.

PyTorch Lightning is becoming increasingly popular among researchers and professional ML practitioners due to its simplicity, abstraction of complex neural network implementation details, and handling of hardware considerations.

State-of-the-art deep learning

DL methods have long been used to tackle computer vision challenges. Neural network architectures for face detection, lane detection, and pose estimation all use deep stacks of consecutive convolutional layers. A new architecture for computer vision algorithms is emerging: transformers.

The Transformer is a DL architecture introduced in Attention Is All You Need. The paper’s methodology creates a computational representation of data by using the attention mechanism to derive the significance of one part of the input data relative to other segments of the input data.
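The attention mechanism can be sketched in a few lines. The following is a minimal, single-head version of scaled dot-product attention, softmax(QKᵀ / √d_k)V, written in plain Python. The toy token vectors are assumptions, and for simplicity the same vectors are used directly as queries, keys, and values. A real Transformer first applies learned linear projections and uses multiple heads.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of scores."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def scaled_dot_product_attention(queries, keys, values):
    """Return the attended outputs and the attention weights for each query."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys
        ]
        weights = softmax(scores)  # significance of each input position
        # Output is the weighted average of the value vectors.
        output = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(output)
        all_weights.append(weights)
    return outputs, all_weights


# Three toy "token" embeddings (made-up values), used as Q, K, and V alike.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = scaled_dot_product_attention(tokens, tokens, tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row of `weights` sums to 1 and expresses how much one input position draws on every other position, which is the relative significance the paragraph above describes.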

The Transformer does not use the conventions of CNNs, but research has shown the applications of transformer models in vision-related tasks. Transformers have made a considerable impact within the NLP domain. For more information, see Generative Pre-trained Transformer (GPT) and Bidirectional Encoder Representations from Transformers (BERT).

Explore a transformer model through the NGC Catalog that includes details of the architecture and utilization of an actual transformer model in PyTorch.

For more information about applying the Transformer network architecture to computer vision, see the Transformers in Vision: A Survey paper.

Mobile devices

Edge devices are becoming increasingly powerful. On-device inference capabilities are a must-have feature for mobile applications used by customers who expect quick service delivery and AI features.

(Photo by Homescreenify on Unsplash)

The incorporation of computer vision-enabling functionalities within mobile devices, like image and pattern recognition, provides benefits such as the following:

- Reduced waiting time for obtaining inference results due to on-device computing.

- Enhanced privacy and security due to the limited transfer of data to and from cloud servers.

- Reduced cost from removing dependencies on cloud GPU and CPU servers for inference.

Many businesses are exploring mobile offerings, which includes exploring how existing AI functionality can be replicated on mobile devices. Here are several platforms, tools, and frameworks to implement mobile-first AI solutions:

Summary

The use of computer vision technology continues to grow as AI becomes more integrated into our daily lives. Computer vision is also becoming more and more common in the latest news headlines. As this technology scales, the demand for specialists with knowledge of computer vision systems will also rise due to trends in cloud computing services, AutoML pipelines, transformers, mobile-focused DL libraries, and computer vision mobile applications.

In 2022, increased development in augmented reality (AR) and virtual reality (VR) applications will enable computer vision developers to extend their skills into new domains, like developing intuitive and efficient methods of replicating and interacting with real objects in a 3D space. Looking ahead, computer vision applications will continue to change and influence the future.