To improve brain simulation technology, a team of researchers from the University of Sussex developed a GPU-accelerated approach that can generate brain simulation models of almost-unlimited size.

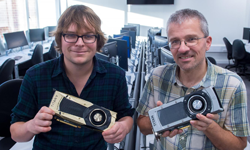

Researchers Dr. James Knight and Thomas Nowotny from the University of Sussex’s School of Engineering and Informatics detailed the work in a paper published in the journal Nature Computational Science.

Using a GPU-accelerated system composed of an NVIDIA TITAN RTX GPU, the team created a cutting-edge model of a macaque’s visual cortex with 4.13 × 10⁶ neurons and 24.2 × 10⁹ synaptic weights, a simulation that could previously only be done on a supercomputer.

The neural network-based simulator uses the GPU’s computational power to procedurally generate connectivity and synaptic weights as spikes are triggered, rather than storing connectivity data in memory, the researchers explained.
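The idea of regenerating connectivity on demand can be illustrated with a minimal CPU sketch in Python. This is not the GeNN implementation: the function names, population size, connection probability, weight distribution, and seeding scheme below are all hypothetical choices made for illustration. The key assumption is that seeding a random number generator from the presynaptic neuron's index reproduces the same targets and weights every time that neuron spikes, so nothing needs to be stored.

```python
import random

NUM_POST = 1000    # hypothetical postsynaptic population size
CONN_PROB = 0.1    # hypothetical connection probability
BASE_SEED = 1234   # hypothetical global seed


def outgoing_synapses(pre_id):
    """Regenerate the (target, weight) pairs of one presynaptic neuron."""
    # Seeding from the neuron index makes the random stream reproducible,
    # so the same synapses are produced on every call.
    rng = random.Random(BASE_SEED * 1_000_003 + pre_id)
    for post_id in range(NUM_POST):
        if rng.random() < CONN_PROB:
            # Hypothetical weight distribution, drawn from the same stream.
            yield post_id, rng.gauss(0.5, 0.1)


def deliver_spike(pre_id, input_current):
    """Add a spike's synaptic input without storing any connectivity."""
    for post_id, weight in outgoing_synapses(pre_id):
        input_current[post_id] += weight


current = [0.0] * NUM_POST
deliver_spike(7, current)  # neuron 7 fires once
```

The trade-off in this sketch is extra computation on every spike in exchange for memory that does not grow with the number of synapses, which is the kind of exchange that suits a GPU's abundant arithmetic throughput.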

“Large-scale simulations of spiking neural network models are an important tool for improving our understanding of the dynamics and ultimately the function of brains. However, even small mammals such as mice have on the order of 1 × 10¹² synaptic connections meaning that simulations require several terabytes of data – an unrealistic memory requirement for a single desktop machine,” the researchers explained.
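A rough back-of-the-envelope calculation shows where the "several terabytes" figure comes from. The storage layout here is an assumption, not something stated in the article: each synapse is taken to need a 4-byte single-precision weight and, optionally, a 4-byte target index. The 24 GB figure is the TITAN RTX's on-board memory.

```python
BYTES_PER_WEIGHT = 4   # assumption: 32-bit float per synaptic weight
BYTES_PER_INDEX = 4    # assumption: 32-bit target index per synapse
TB = 10**12
GB = 10**9

mouse_synapses = 10**12               # order of magnitude quoted above
macaque_weights = 24_200_000_000      # 24.2 x 10^9 from the macaque model
titan_rtx_memory_gb = 24              # TITAN RTX on-board memory

# Mouse-scale network: weights only, then weights plus indices.
mouse_weights_tb = mouse_synapses * BYTES_PER_WEIGHT / TB
mouse_full_tb = mouse_synapses * (BYTES_PER_WEIGHT + BYTES_PER_INDEX) / TB

# Macaque visual cortex model: weights only.
macaque_weights_gb = macaque_weights * BYTES_PER_WEIGHT / GB

print(f"mouse, weights only:      {mouse_weights_tb:.1f} TB")
print(f"mouse, weights + indices: {mouse_full_tb:.1f} TB")
print(f"macaque model weights:    {macaque_weights_gb:.1f} GB "
      f"vs {titan_rtx_memory_gb} GB on the GPU")
```

Under these assumptions, even the weights of the macaque model alone would take roughly four times the GPU's memory if stored explicitly, which is why generating them on the fly matters.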

According to the team, initializing the model took six minutes, and simulating each biological second took 7.7 minutes in the ground state and 8.4 minutes in the resting state, 35% less time than a previous supercomputer simulation.

On the software side, the team used the CUDA-based GPU-enhanced Neuronal Networks (GeNN) package. GeNN can also be used through external interfaces such as SpineML and SpineCreator, a Python interface (PyGeNN), and a Brian interface via Brian2GeNN.

“This research is a game-changer for computational Neuroscience and AI researchers who can now simulate brain circuits on their local workstations, but it also allows people outside academia to turn their gaming PC into a supercomputer and run large neural networks.”

A preprint of the paper is available on bioRxiv under open-access terms. The final version appears in Nature Computational Science.