Neural fields have become an increasingly active research topic in computer graphics and computer vision in recent years. A neural field represents 3D data such as shape, appearance, motion, and other physical quantities with a neural network that takes coordinates as input and outputs the corresponding data at that location.
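To make the idea of a coordinate network concrete, here is a minimal sketch in plain Python. It is not taken from any of the projects discussed here: the class name `TinyField`, the sin/cos input encoding, the layer sizes, and the random untrained weights are all assumptions chosen for illustration.

```python
import math
import random


def positional_encoding(coords, num_freqs=4):
    """Map each coordinate to sin/cos features at increasing frequencies.

    The encoding is an assumption of this sketch; a field can also
    consume raw coordinates directly.
    """
    features = []
    for c in coords:
        for k in range(num_freqs):
            features.append(math.sin((2 ** k) * math.pi * c))
            features.append(math.cos((2 ** k) * math.pi * c))
    return features


class TinyField:
    """Hypothetical coordinate network: (x, y, z) -> one scalar value."""

    def __init__(self, in_dim=3, num_freqs=4, hidden=16, seed=0):
        rng = random.Random(seed)
        enc_dim = in_dim * num_freqs * 2
        self.num_freqs = num_freqs
        # Untrained random weights; a real field would fit these to data.
        self.w1 = [[rng.gauss(0.0, 0.5) for _ in range(enc_dim)]
                   for _ in range(hidden)]
        self.b1 = [0.0] * hidden
        self.w2 = [rng.gauss(0.0, 0.5) for _ in range(hidden)]
        self.b2 = 0.0

    def __call__(self, coords):
        x = positional_encoding(coords, self.num_freqs)
        # One hidden layer with a ReLU activation.
        h = [max(0.0, sum(w * v for w, v in zip(row, x)) + b)
             for row, b in zip(self.w1, self.b1)]
        # Linear output layer producing a single scalar.
        return sum(w * v for w, v in zip(self.w2, h)) + self.b2


field = TinyField()
value = field((0.1, -0.3, 0.7))  # query the field at one 3D location
```

The scalar output could stand for a density or a signed distance. A field that models appearance would return several values per query, such as an RGB color.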

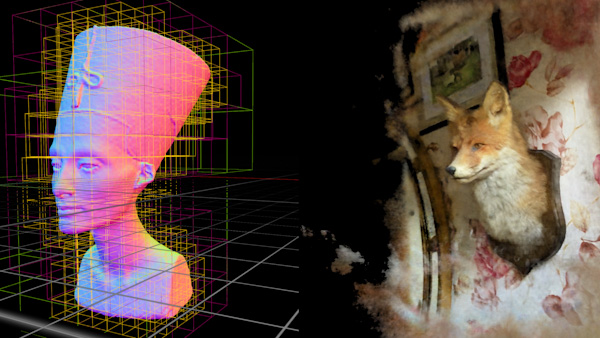

These representations have proven useful in applications such as generative modeling and 3D reconstruction. NVIDIA projects such as NGLOD, GANcraft, NeRF-Tex, EG3D, Instant-NGP, and Variable Bitrate Neural Fields are advancing the state of the art in neural fields, computer graphics, and computer vision.

Research challenges

Research on neural fields is moving fast, which means that standards and software often lag behind. Implementation differences can cause large variations in quality metrics and performance. As the components of neural fields grow more complex, the ramp-up cost for new projects can be considerable. Work is also often duplicated among research groups, for example when each group builds its own interactive application to visualize neural field outputs.

One important milestone is NVIDIA Instant-NGP, which has recently attracted much attention from the research community for its ability to fit various signals, such as neural radiance fields (NeRFs), signed distance fields (SDFs), and images, at near-instant speeds. Its computational efficiency unlocks a new frontier of practical applications and research directions. However, that efficiency comes from highly specialized and optimized code, which can be difficult to adapt and extend and can therefore be a barrier for research.

NVIDIA Kaolin Wisp

NVIDIA Kaolin Wisp was developed as a fast-paced research-oriented library for neural fields to support researchers navigating the challenges of a growing discipline. It is built on top of the core Kaolin Library functionality, which includes more general and stable components for 3D deep learning research.

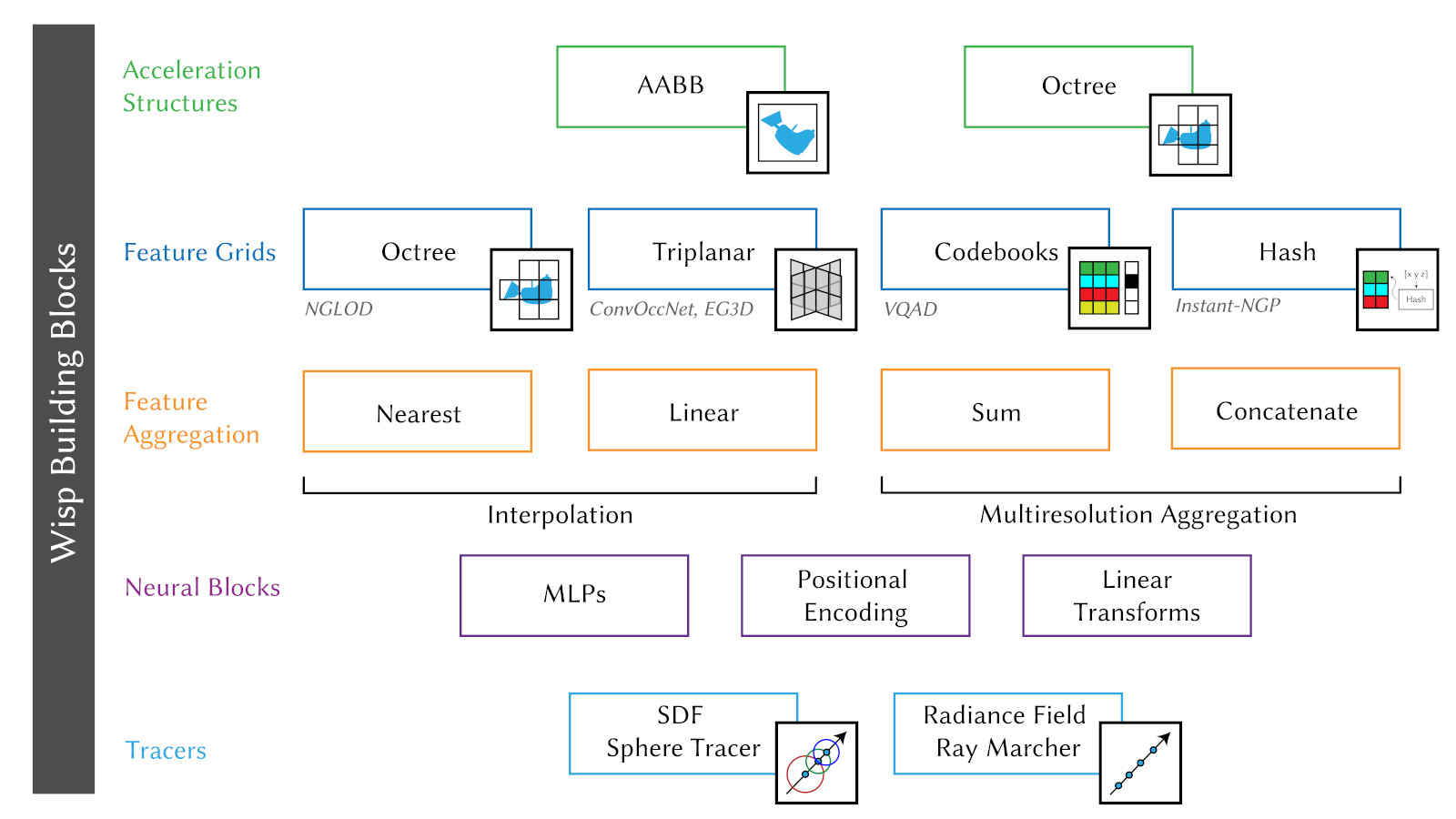

The goal of Wisp is to provide a common core library and framework for research on neural fields. The library consists of modular building blocks that can be used to create complex neural fields and an interactive app to train and visualize the neural fields.

Rather than providing a specific implementation, Wisp supplies the building blocks for neural fields. The framework is easily extensible for research purposes and consists of a modular pipeline where each pipeline component can be easily interchanged to provide a plug-and-play configuration for standard training.
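The plug-and-play idea can be sketched in a few lines of plain Python. This is only an illustration of interchangeable pipeline components, not the Wisp API: `FieldPipeline`, `raw_coords`, `fourier_features`, and `mean_decoder` are hypothetical names invented for this example.

```python
import math
from typing import Callable, List, Sequence

# Hypothetical component signatures, not taken from Wisp.
FeatureFn = Callable[[Sequence[float]], List[float]]
DecoderFn = Callable[[List[float]], float]


def raw_coords(coords: Sequence[float]) -> List[float]:
    """Feature component 1: pass the coordinates through unchanged."""
    return list(coords)


def fourier_features(coords: Sequence[float]) -> List[float]:
    """Feature component 2: a sin/cos pair per coordinate."""
    return [f(math.pi * c) for c in coords for f in (math.sin, math.cos)]


def mean_decoder(features: List[float]) -> float:
    """Stand-in decoder; a real pipeline would use a trained network."""
    return sum(features) / len(features)


class FieldPipeline:
    """Composes a feature component with a decoder component."""

    def __init__(self, features: FeatureFn, decoder: DecoderFn):
        self.features = features
        self.decoder = decoder

    def __call__(self, coords: Sequence[float]) -> float:
        return self.decoder(self.features(coords))


point = (0.0, 0.5, 1.0)
# Swapping the feature component does not touch the rest of the pipeline.
plain = FieldPipeline(raw_coords, mean_decoder)
fourier = FieldPipeline(fourier_features, mean_decoder)
```

Because both feature functions share one signature, experiments can exchange them without changing the decoder or any surrounding training code.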

Wisp does not aim to provide production-ready code, but to ship novel modules fast, staying at the leading edge of this technology. It also provides a rich set of examples that showcase the Kaolin Core framework and how Kaolin Core can be used to accelerate research.

NVIDIA Kaolin Wisp feature highlights

Kaolin Wisp uses a Python-based API, which builds on PyTorch, enabling users to develop a project quickly. Compatible with many other public PyTorch-based projects, Kaolin Wisp is easily customizable with PyTorch / CUDA-based building blocks.

While Wisp is designed for developer speed over compute performance, the building blocks that are provided in the library are optimized to train neural fields within minutes and visualize them interactively.

Kaolin Wisp is packed with building blocks to compose neural field pipelines with a mix-and-match approach. Notable examples are feature grids, which include:

- Hierarchical Octrees: From NGLOD for learning features on spatial subdivision trees. The Octree also supports ray tracing operations, which allows for training a multiview image-based NGLOD-NeRF variant in addition to SDFs.

- Triplanar Features: Used in EG3D and Convolutional Occupancy Networks papers to learn volumetric features on triplanar texture maps. The triplanes also support multiple levels of detail (LODs) in a multi-resolution pyramid structure.

- Codebooks: From Variable Bitrate Neural Fields, to learn compressed feature codebooks with differentiable learnable keys.

- Hash Grids: From the Instant-NGP paper for learning compact cache-friendly feature codebooks with performant memory access.
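Two of the feature grids listed above can be sketched in plain Python to show what a lookup involves. This is a simplified illustration under stated assumptions, not the Wisp or Instant-NGP implementation: the class names `HashGrid` and `TriPlane` are hypothetical, lookups use the nearest vertex or texel instead of interpolation, features are random rather than learned, and summing the three plane features is one possible way to combine them. The per-axis hash multipliers are the ones given in the Instant-NGP paper.

```python
import random

# Per-axis multipliers of the spatial hash described in the Instant-NGP paper.
PRIMES = (1, 2654435761, 805459861)


def hash_index(ix, iy, iz, table_size):
    """Hash a non-negative integer grid vertex into a fixed-size table."""
    h = (ix * PRIMES[0]) ^ (iy * PRIMES[1]) ^ (iz * PRIMES[2])
    return h % table_size


class HashGrid:
    """One resolution level whose features live in a hash table."""

    def __init__(self, resolution, table_size, feature_dim=2, seed=0):
        rng = random.Random(seed)
        self.resolution = resolution
        self.table = [[rng.uniform(-1e-4, 1e-4) for _ in range(feature_dim)]
                      for _ in range(table_size)]

    def lookup(self, x, y, z):
        """Nearest-vertex lookup for a point in [0, 1]^3."""
        ix, iy, iz = (int(round(c * self.resolution)) for c in (x, y, z))
        return self.table[hash_index(ix, iy, iz, len(self.table))]


def multires_features(grids, x, y, z):
    """Concatenate the features found at every resolution level."""
    out = []
    for grid in grids:
        out.extend(grid.lookup(x, y, z))
    return out


class TriPlane:
    """Three 2D feature maps for the xy, xz, and yz planes."""

    def __init__(self, resolution, feature_dim=2, seed=0):
        rng = random.Random(seed)
        self.resolution = resolution

        def make_plane():
            return [[[rng.uniform(-1.0, 1.0) for _ in range(feature_dim)]
                     for _ in range(resolution)] for _ in range(resolution)]

        self.planes = [make_plane() for _ in range(3)]

    def lookup(self, x, y, z):
        """Project a point in [0, 1]^3 onto each plane and sum the features."""
        def texel(c):
            return min(int(c * self.resolution), self.resolution - 1)

        projections = ((x, y), (x, z), (y, z))
        feats = [plane[texel(u)][texel(v)]
                 for plane, (u, v) in zip(self.planes, projections)]
        return [sum(vals) for vals in zip(*feats)]


levels = [HashGrid(resolution=r, table_size=64, seed=r) for r in (4, 8)]
hash_feats = multires_features(levels, 0.2, 0.5, 0.9)
tri_feats = TriPlane(resolution=8).lookup(0.2, 0.5, 0.9)
```

In both cases the returned feature vector would be passed to a small decoder network. The table or plane entries are the parameters that training updates.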

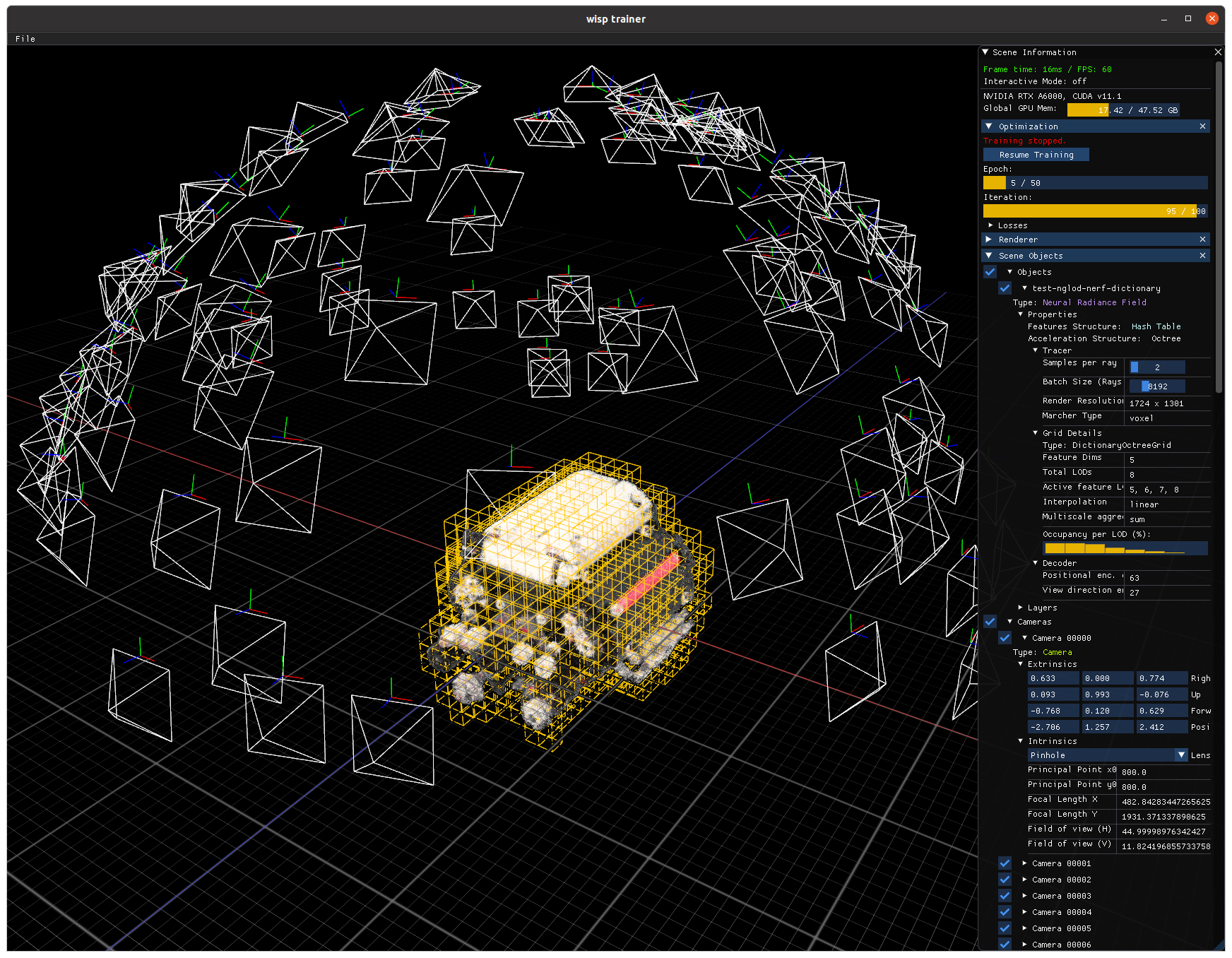

NVIDIA Kaolin Wisp is paired with an interactive renderer that supports flexible rendering of neural primitive pipelines, such as variants of NeRF and neural SDFs, and allows new representations to be integrated.

OpenGL-style rasterized primitives can be mixed and matched with neural representations to visualize additional data layers, such as cameras and occupancy structures. The renderer also makes it easy to build customizable apps by supporting custom GUI widgets that can interact with training and rendering.

Other useful features include property viewers, optimization controls, custom output render buffers, and a camera object that allows for easy manipulation of scene cameras.

To learn more about Kaolin Wisp and other libraries, visit NVIDIA Research. You can access the kaolin-wisp project on GitHub.

Join NVIDIA 3D deep learning researchers and Kaolin library developers at SIGGRAPH 2022 for a session on Illuminating the Future of Graphics. Ask questions, watch demos, and learn how Kaolin Wisp can accelerate your neural network research.