NVIDIA Kaolin Library

Kaolin is a library and suite of tools for accelerating 3D deep learning research.

Key Features

The Kaolin library provides a PyTorch API for working with a variety of 3D representations. It includes a growing collection of GPU-optimized operations such as modular differentiable rendering, fast conversions between representations, data loading, camera classes, volumetric acceleration data structures, 3D checkpoints, and more.

Continuous Additions from NVIDIA Research

Follow library releases for new research components from the NVIDIA Toronto AI Lab and across NVIDIA. Recent releases have included FlexiCubes, Deep Marching Tetrahedra, differentiable mesh subdivision, and the structured point cloud (SPC) acceleration data structure, which supports efficient volumetric rendering.

Representation Agnostic Physics Simulation

We present a versatile framework for reduced elastic simulation of 3D objects in any geometric representation, such as 3D Gaussian Splats, SDFs, point clouds, and even medical scans. Our mesh-free, grid-free method uses implicit neural fields to construct a physics-aware subspace of the object through a data-free training process based on the latest Simplicits method.

Modular Differentiable Rendering

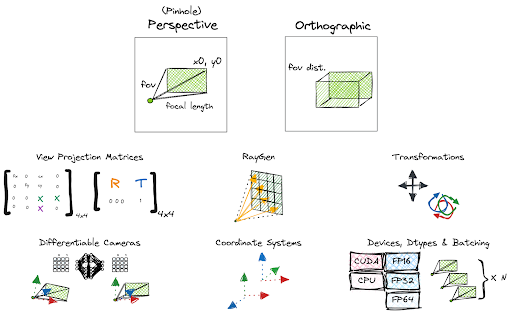

Develop cutting-edge inverse graphics applications using modular and optimized implementations of differentiable rendering. These include a differentiable camera API, a differentiable mesh renderer with two rasterization backends, an implementation of Spherical Gaussians as environment maps for diffuse and specular lighting, volumetric rendering of DefTet tetrahedral meshes, and ray-tracing features for SPCs that allow both surface and volumetric differentiable rendering. Finally, material information can be stored in a PBRMaterial class.
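To make the Spherical Gaussian lighting term concrete, here is a minimal, dependency-free sketch of how a single lobe, and an environment built from several lobes, can be evaluated for a given direction. It uses the standard Spherical Gaussian definition, amplitude * exp(sharpness * (dot(direction, axis) - 1)). The function names `spherical_gaussian` and `environment_radiance` are hypothetical and are not part of Kaolin's API, which works on batched GPU tensors.

```python
import math
from typing import Sequence, Tuple

Vec3 = Sequence[float]
# One lobe: (unit axis, sharpness, scalar amplitude). Hypothetical layout for this sketch.
Lobe = Tuple[Vec3, float, float]


def spherical_gaussian(direction: Vec3, axis: Vec3, sharpness: float, amplitude: float) -> float:
    """Evaluate one Spherical Gaussian lobe; direction and axis are assumed to be unit vectors."""
    cos_angle = sum(d * a for d, a in zip(direction, axis))
    # The lobe peaks at `amplitude` when direction == axis and decays as the angle grows.
    return amplitude * math.exp(sharpness * (cos_angle - 1.0))


def environment_radiance(direction: Vec3, lobes: Sequence[Lobe]) -> float:
    """Approximate an environment map as a sum of Spherical Gaussian lobes."""
    return sum(spherical_gaussian(direction, axis, s, a) for axis, s, a in lobes)
```

A higher sharpness gives a tighter lobe, which is why a small number of lobes can approximate both broad sky lighting and concentrated light sources.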

Jupyter Notebook 3D Debugging

Many 3D deep learning methods rely on a custom rendering function, for example to render implicit representations or to implement a custom differentiable renderer. Kaolin Library provides a utility to debug and inspect these 3D renderings in an interactive viewer, directly in a Jupyter notebook, with only a couple of lines of code.

USD Integration in Omniverse

Connecting 3D research to USD content on a live USD stage rendered in NVIDIA Omniverse is easy with Kaolin Library. For example, 3D models that are currently being rendered can be converted into PyTorch tensors, ready for ingestion by AI models. For a code sample, refer to our AI Texture Painting extension.

GPU-Optimized 3D Operations

Convert between 3D representations using fast and reliable conversion operations, including marching cubes, marching tetrahedra, and FlexiCubes, point cloud sampling from meshes, and various conversions to SPCs. Use GPU-optimized implementations of 3D loss functions such as point-to-mesh distance, nearest point distance, chamfer distance, and AMIPS loss, along with a collection of other operations on 3D data, such as mesh topology processing, extraction and projection of orthographic depth maps, and operations on SPCs such as sparse convolutions, trilinear interpolation, dual grid creation, and conversion from meshes.
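As a reference for what one of the loss functions named above measures, the following is a minimal, dependency-free sketch of a symmetric chamfer distance between two point clouds. It is not Kaolin's GPU implementation. The function names are hypothetical, and the convention used here (mean of squared nearest-neighbor distances, summed over both directions) is an assumption, since implementations differ on squaring and averaging.

```python
from typing import Sequence

Point = Sequence[float]


def squared_distance(p: Point, q: Point) -> float:
    """Squared Euclidean distance between two points of equal dimension."""
    return sum((a - b) ** 2 for a, b in zip(p, q))


def chamfer_distance(cloud_a: Sequence[Point], cloud_b: Sequence[Point]) -> float:
    """Symmetric chamfer distance: mean squared nearest-neighbor distance in both directions."""
    if not cloud_a or not cloud_b:
        raise ValueError("both point clouds must be non-empty")
    # For every point in A, find its closest point in B, then average.
    a_to_b = sum(min(squared_distance(p, q) for q in cloud_b) for p in cloud_a) / len(cloud_a)
    # Repeat in the other direction so the measure is symmetric.
    b_to_a = sum(min(squared_distance(q, p) for p in cloud_a) for q in cloud_b) / len(cloud_b)
    return a_to_b + b_to_a
```

This brute-force version costs O(N·M) distance evaluations, which is the part a GPU-optimized implementation parallelizes.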

Camera and Mesh API

Make use of a convenient, modular, differentiable camera API with many helper methods and recipes. Simplify 3D mesh management in PyTorch with a mesh class that stays consistent across imports from different 3D formats, including glTF, USD, and OBJ.
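For readers new to camera models, here is a small, dependency-free sketch of the kind of computation a pinhole perspective camera performs when mapping a 3D point to a pixel. It is illustrative only and does not use Kaolin's camera classes. The function `project_point` is hypothetical, and the conventions (camera at the origin looking down the negative z axis, vertical field of view, pixel origin at the top-left) are assumptions chosen for this example.

```python
import math
from typing import Sequence, Tuple


def project_point(point: Sequence[float], fov_y_deg: float, width: int, height: int) -> Tuple[float, float]:
    """Project a camera-space point to pixel coordinates with a pinhole model."""
    x, y, z = point
    if z >= 0:
        raise ValueError("point must be in front of the camera (z < 0)")
    # Focal length in normalized units, derived from the vertical field of view.
    focal = 1.0 / math.tan(math.radians(fov_y_deg) / 2.0)
    aspect = width / height
    # Perspective division maps the point to normalized device coordinates in [-1, 1].
    ndc_x = (focal / aspect) * x / -z
    ndc_y = focal * y / -z
    # Convert to pixels; the y axis is flipped because image rows grow downward.
    pixel_x = (ndc_x + 1.0) * 0.5 * width
    pixel_y = (1.0 - ndc_y) * 0.5 * height
    return pixel_x, pixel_y
```

Because every step is a smooth arithmetic operation, the same computation expressed on tensors is differentiable with respect to both the point and the camera parameters.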