With the introduction of hardware-accelerated ray tracing in NVIDIA Turing GPUs, game developers have received an opportunity to significantly improve the realism of gameplay rendered in real time. In these early days of real-time ray tracing, most implementations are limited to one or two ray-tracing effects, such as shadows or reflections. If the game’s geometry and materials are simple enough, you can use full path tracing to render the scenes, and still get 60 frames per second or more. Such a rendering method requires the use of sophisticated sampling and denoising algorithms, of course. The first game to do this is Quake II RTX, developed by NVIDIA Lightspeed Studios and based on the Q2VKPT project by Christoph Schied.

A significant number of published papers cover various algorithmic parts of real-time ray tracers. For information about resampled importance sampling that can be used for direct lighting, see Importance Resampling for Global Illumination and Spatiotemporal reservoir resampling for real-time ray tracing with dynamic direct lighting.

Regardless of the sampling algorithms, any image rendered with real-time ray tracing is noisy and must be denoised. For more information about recent denoising work, see Spatiotemporal Variance-Guided Filtering: Real-Time Reconstruction for Path-Traced Global Illumination and Gradient Estimation for Real-Time Adaptive Temporal Filtering.

Denoising is a critical part of real-time ray tracing, and rendering algorithms must be designed with denoising in mind. Typically, a denoiser is some combination of temporal and spatial filters that operate on one component of the lighting solution, such as diffuse or specular illumination of the primary surface. The other surface parameters, such as albedo or specular reflectivity, can be stored separately and applied at the end of the rendering pipeline, because that allows the denoiser to apply wide spatial blurs while preserving surface details.

An important problem that arises from this approach of splitting the lighting solution into components is that there must be a single primary surface. So, what’s the big deal, you might ask? G-buffers have been used extensively in game renderers for over a decade, and they almost always store just one primary surface. That works for conventional renderers because they deal with transparencies, such as glass, in a simplified way: these objects are rendered in a post-pass, when primary lighting has already been computed, and they reflect either a pre-rendered version of the scene with static lighting or the result of screen space reflections (SSR). They also do not use real refraction. Instead, they typically are either completely flat with the refractive index of air, or they use simple screen space warping to achieve the look of bumpy glass or water with ripples.

However, in a path-traced game with fully dynamic lighting, static lighting or SSR look out of place. Instead, the renderer must shoot the reflection and refraction rays, find secondary surfaces, and shade them. If the glass itself is stored in the G-buffer, spatiotemporal denoising would not be applicable to the secondary surfaces, because such denoising relies on surface information, such as position and normals, to guide the filters. There would not be any information about the secondary surfaces. An easy solution to this problem would be to shade the secondary surfaces in a way that does not generate noise; that is, treat all lights as point lights and sample all of them for every pixel, ignore rough reflections, and use something like an irradiance cache for indirect lighting. That strategy has obvious drawbacks.

In this post, I describe a solution to this problem that combines two important concepts: primary surface replacement (PSR) and checkerboarded split frame rendering (CSFR). As a bonus, this solution makes it easy to parallelize rendering of a single frame over multiple GPUs.

Primary surface replacement

Begin with an easy problem: mirrors. Perfect mirrors are easier to deal with than glass because their BRDF has only one component: the reflection. There is no diffuse lighting on the mirror surface, or anything visible through the surface. Still, if only the mirror surface itself is stored in the G-buffer, you can’t use stochastic sampling on any secondary surfaces reflected in the mirror.

The solution to this problem is PSR. Instead of stopping at the mirror surface, the renderer should trace a reflection ray and store the reflected surface in the G-buffer instead. If the reflected surface is another mirror, the process can keep going until a different kind of surface is reached, or until the preset limit of recursive reflections is hit.

The concept sounds simple, but PSR has significant implications on the rest of the renderer. Most importantly, subsequent shaders can’t reconstruct surface positions based on the camera view-projection matrix and the depth buffer. Similarly, they can’t assume that view vectors for the G-buffer surfaces are originating at the camera. Therefore, the G-buffer must be extended with multiple channels:

- Surface position

- Incoming ray direction

- Primary path length instead of primary depth (useful as a guide for denoisers or for effects like distance fade in open-world games)

- Number of bounces (also useful as a denoiser guide to avoid filtering across mirror boundaries)

- Throughput (product of reflective colors of all surfaces in the mirror chain, and extinction in the participating media, if any)
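
The reflection pass and the extended G-buffer channels listed above can be sketched roughly as follows. This is a minimal illustration, not the Quake II RTX shader code: the `Hit` record, the `trace` callback, and all field names are hypothetical, and a real implementation would also offset the ray origin to avoid self-intersection and would store surface normals and material data.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Tuple

Vec3 = Tuple[float, float, float]

@dataclass
class Hit:
    """Hypothetical result of a ray query (not a real API)."""
    distance: float
    position: Vec3
    normal: Vec3        # unit length
    is_mirror: bool
    reflectance: Vec3   # mirror tint; unused for non-mirror surfaces

@dataclass
class GBufferSample:
    """Extra channels needed once the stored surface may be a reflected one."""
    position: Vec3       # world-space position of the stored surface
    incoming_dir: Vec3   # direction of the ray that reached it
    path_length: float   # total length from the camera, replaces depth
    bounces: int         # number of mirrors along the way
    throughput: Vec3     # product of mirror reflectances

def reflect(d: Vec3, n: Vec3) -> Vec3:
    """Mirror direction d about the plane with unit normal n."""
    k = 2.0 * sum(a * b for a, b in zip(d, n))
    return tuple(a - k * b for a, b in zip(d, n))

def trace_primary_with_psr(
    origin: Vec3,
    direction: Vec3,
    trace: Callable[[Vec3, Vec3], Optional[Hit]],
    max_bounces: int = 8,
) -> Optional[GBufferSample]:
    throughput = (1.0, 1.0, 1.0)
    path_length = 0.0
    bounces = 0
    while True:
        hit = trace(origin, direction)
        if hit is None:
            return None  # ray escaped the scene
        path_length += hit.distance
        # Stop at the first non-mirror surface, or at the recursion limit.
        if not hit.is_mirror or bounces >= max_bounces:
            return GBufferSample(hit.position, direction, path_length,
                                 bounces, throughput)
        # Otherwise replace the primary surface: follow the reflection.
        throughput = tuple(t * r for t, r in zip(throughput, hit.reflectance))
        direction = reflect(direction, hit.normal)
        origin = hit.position
        bounces += 1
```

Note that when the recursion limit is reached on a mirror, this sketch simply stores the mirror itself; other fallbacks are possible.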

After this extended G-buffer has been formed, the renderer can work with it as if all the reflected surfaces were primary surfaces: shoot indirect lighting rays, sample direct lights, and run denoisers. After the denoisers have been applied, the final combine or compositing pass should collect all the lighting components and surface parameters, compute the final outgoing radiance, and then multiply that radiance by the throughput computed in the reflection pass.
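
As a rough illustration of that final combine step, the sketch below assumes a common demodulated layout in which the denoised diffuse and specular lighting are stored without albedo and specular reflectivity. The function name and the exact split into two components are assumptions for illustration; a real renderer may have more terms.

```python
def composite(albedo, diffuse, specular_reflectivity, specular, throughput):
    """Recombine denoised lighting with surface parameters, then apply the
    PSR throughput accumulated along the mirror chain. All arguments are
    RGB triples."""
    return tuple(
        (a * d + r * s) * t
        for a, d, r, s, t in zip(albedo, diffuse, specular_reflectivity,
                                 specular, throughput)
    )
```

For a pixel that was not reached through any mirror, the throughput is simply (1, 1, 1) and the step reduces to a regular composite.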

An important aspect of any modern renderer is motion vectors, because they are necessary for denoising and temporal anti-aliasing. With PSR, the motion vectors should describe the motion of the final G-buffer surface on the screen, after the chain of reflections. It’s not always possible to solve this problem, but it is reasonably easy to solve for a sequence of static flat mirrors. To do that, the renderer should first find the virtual position of the final object, which is the camera position offset along the primary ray direction by the total length of the reflection path. Then, apply virtual surface motion, which can be found by multiplying the world-space motion of the reflected surface by the reflection chain transform matrix. That matrix is a product of reflection matrices of each mirror encountered along the path, which can be computed using the Householder transformation. Finally, the updated (previous frame) virtual position should be multiplied by the previous camera view-projection matrix and subtracted from the current clip space position of the pixel being processed. Computing motion vectors for curved surfaces or moving mirrors is not in scope for this post.
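
The steps above can be sketched as follows for static flat mirrors. Several details here are assumptions made for illustration: `world_motion` is taken to be the previous-frame position minus the current position of the reflected surface point, matrices are row-major and multiply column vectors, and the result is expressed in normalized device coordinates. Instead of building the chain matrix explicitly, the sketch applies each Householder reflection (I - 2nnᵀ) to the motion vector directly, which gives the same result.

```python
def householder_reflect(v, n):
    """Apply the Householder matrix I - 2 n n^T to v (n is unit length)."""
    d = sum(a * b for a, b in zip(v, n))
    return tuple(a - 2.0 * d * b for a, b in zip(v, n))

def project(view_proj, p):
    """Transform point p by a row-major 4x4 matrix, return NDC (x, y)."""
    x, y, z = p
    clip = [r[0] * x + r[1] * y + r[2] * z + r[3] for r in view_proj]
    return (clip[0] / clip[3], clip[1] / clip[3])

def psr_motion_vector(cam_pos, primary_dir, path_length, mirror_normals,
                      world_motion, prev_view_proj, cur_ndc):
    # 1. Virtual position: unfold the reflection path along the primary ray.
    virtual_pos = tuple(c + d * path_length
                        for c, d in zip(cam_pos, primary_dir))
    # 2. Virtual motion: world motion transformed by the reflection chain.
    #    mirror_normals is in hit order, so the chain matrix is
    #    H1 * H2 * ... * Hn and the last mirror is applied first.
    virtual_motion = world_motion
    for n in reversed(mirror_normals):
        virtual_motion = householder_reflect(virtual_motion, n)
    # 3. Previous-frame virtual position, projected with the previous camera.
    prev_virtual = tuple(p + m for p, m in zip(virtual_pos, virtual_motion))
    prev_ndc = project(prev_view_proj, prev_virtual)
    # 4. Difference against the current position of the pixel.
    return (cur_ndc[0] - prev_ndc[0], cur_ndc[1] - prev_ndc[1])
```

Many renderers use the opposite sign convention for motion vectors (previous minus current); only the last line changes in that case.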

One obvious drawback of this algorithm is that it only works for perfect mirrors. If a mirror is not perfect (it has nonzero roughness), the NDF-sampled reflection rays do not return coherent surfaces. Without G-buffer surfaces that are coherent and continuous in screen space, denoisers can’t operate effectively. A potential solution to this problem is to treat mirrors with low roughness as perfect mirrors, and then apply a spatial blur at the end. Alternatively, the reflection pass should just stop at rough mirrors and store them into the G-buffer, letting the subsequent shading passes deal with them.

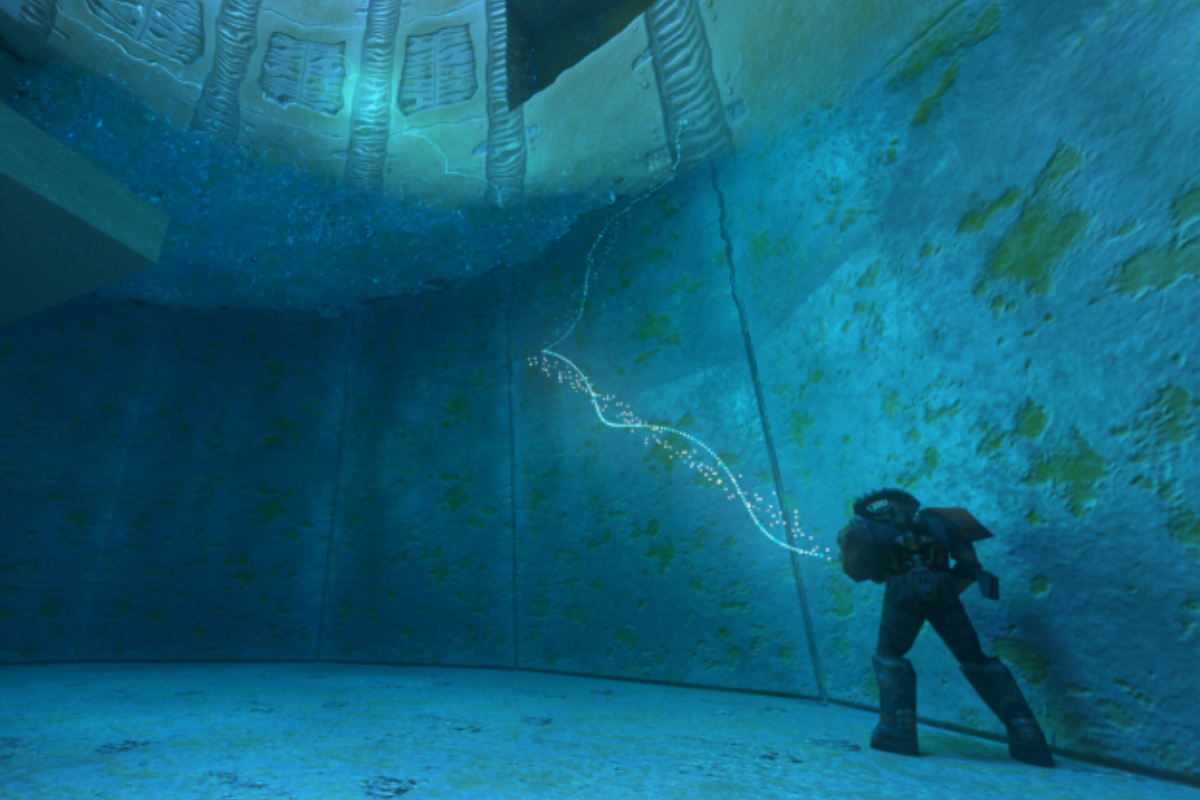

PSR alone is extremely useful and can enable a game to render perfect, well-lit, anti-aliased single and even multiple (hall-of-mirrors) reflections that are indistinguishable from regular primary surfaces in the game (Figure 2).

In Figure 2, the left image uses a simplified shading model for mirror reflections, which is normally only used for glossy reflections. Shading of reflections is improved in the middle image, but the lack of recursive reflections is apparent. That is fixed in the right image with the recursion limit set to 8.

PSR alone can’t deal with splitting paths, most importantly refraction, because there is still only one surface in the G-buffer, and a physically correct refraction needs two. It is possible, of course, to use two G-buffers. That would be expensive because both must be lit and denoised.

Checkerboarded split-frame rendering

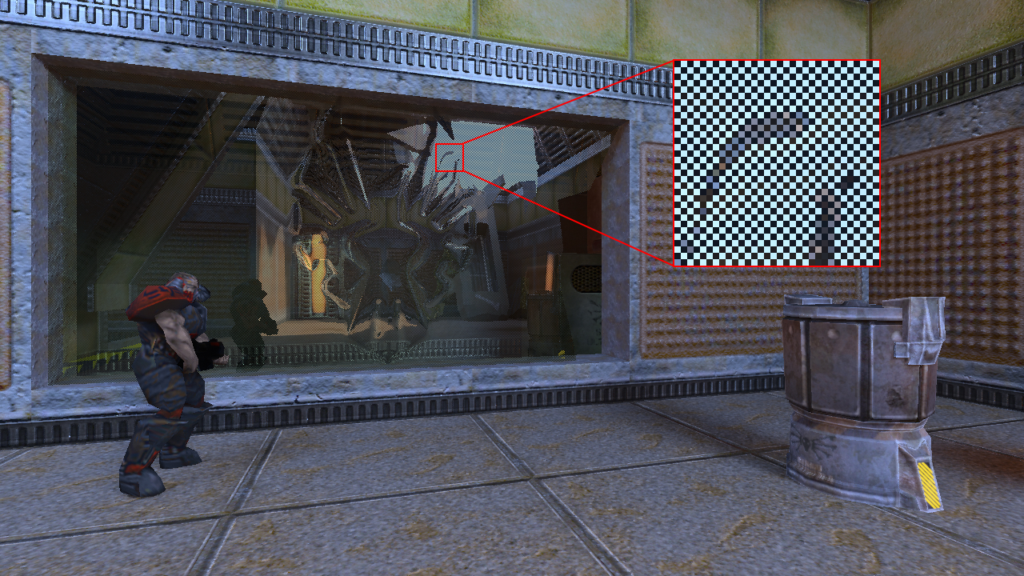

To address the two-surface G-buffer problem, the frame can be split into two parts in a checkerboard fashion. Pixels from different checkerboard fields are grouped together to form a continuous block. For example, all pixels from the even checkerboard field are moved to the left half of the frame, and all pixels from the odd field are moved to the right half of the frame, as shown in Figure 3. The checkerboard is pixel-scale, that is, each pixel on the screen is surrounded by four pixels from the other field.

The pixels are not really “moved” to either half of the screen; they are just renamed in the primary ray generation shader. That shader would take the output pixel position, figure out if it’s in the left or right half of the screen, and convert the position into a full-size image position. The result would look like the G-buffer contains two versions of the same frame side-by-side, with slightly different edge patterns on the objects, as shown in Figure 4.
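
One possible form of that renaming is sketched below. It assumes an even frame width and defines the field of a full-size pixel as the parity of `x + y`; the function names and this particular parity convention are illustrative assumptions, not necessarily what Quake II RTX uses.

```python
def side_by_side_to_full(px, py, width):
    """Map a pixel of the side-by-side G-buffer to its full-size position.

    Returns (full_x, full_y, field), where field 0 occupies the left half
    and field 1 the right half. Assumes width is even.
    """
    half = width // 2
    field = 1 if px >= half else 0
    hx = px - field * half
    # Within each row, a field owns every other pixel; the starting
    # offset alternates with the row parity to form the checkerboard.
    full_x = 2 * hx + ((py + field) & 1)
    return full_x, py, field

def full_to_side_by_side(full_x, full_y, width):
    """Inverse mapping: full-size pixel to side-by-side G-buffer position."""
    field = (full_x + full_y) & 1
    return field * (width // 2) + (full_x >> 1), full_y
```

With this mapping, the four direct neighbors of any full-size pixel always belong to the other field, which matches the pixel-scale checkerboard described above.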

This side-by-side G-buffer is then shaded and denoised as if it were a regular G-buffer with PSR applied. There would be minor changes to some passes. For example, the denoisers should test if the location they are sampling from belongs to the same half of the screen (checkerboard field), instead of testing the location against the full render size. Motion vectors should be multiplied by 0.5 on the X component. Spatial filter kernel weights should be adjusted to be narrower in the X direction as well.
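
Two of these adjustments can be sketched as small helpers. The names are hypothetical, and motion vectors are assumed to be expressed in full-size pixel units.

```python
def tap_is_valid(center_x, tap_x, tap_y, width, height):
    """A filter tap is usable only if it lies inside the frame and in the
    same half (checkerboard field) as the pixel being filtered."""
    if not (0 <= tap_x < width and 0 <= tap_y < height):
        return False
    half = width // 2
    return (center_x >= half) == (tap_x >= half)

def to_side_by_side_motion(mv_full):
    """Each half has half the horizontal resolution of the full frame, so a
    horizontal motion of N full-size pixels spans N/2 pixels there."""
    return (mv_full[0] * 0.5, mv_full[1])
```

The same reasoning applies to spatial kernels: one pixel step in X inside a field covers two full-size pixels, which is why the kernel should be narrower horizontally.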

After shading and denoising, the two checkerboard fields are interleaved back together into a complete frame, and regular post-processing such as temporal AA can be applied then.
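
A minimal sketch of the interleave step follows, using nested lists as images. It assumes an even width and that the field of a full-size pixel is the parity of `x + y`, with field 0 stored in the left half; both are illustrative conventions.

```python
def interleave(side_by_side, width, height):
    """Rebuild the full frame from a side-by-side image where the left half
    holds field 0 and the right half holds field 1."""
    half = width // 2
    full = [[None] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            field = (x + y) & 1
            full[y][x] = side_by_side[y][field * half + (x >> 1)]
    return full
```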

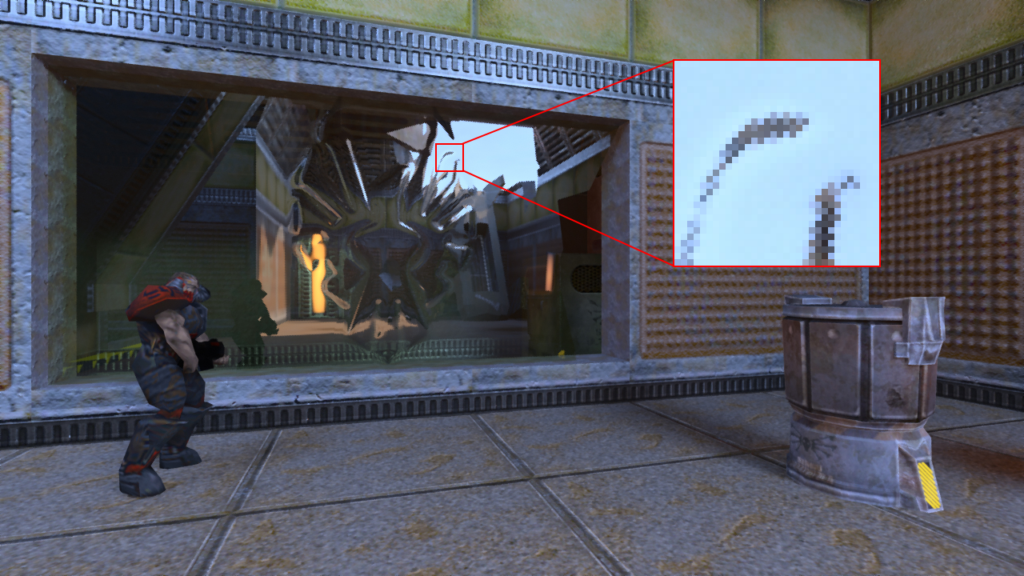

However, I omitted the most important part: how CSFR solves the refraction problem. The answer is that now you effectively have two primary surfaces: one in the even checkerboard field, and another in the odd field. Normally, they would be the same surface, just sampled at slightly different positions. When the reflection pass encounters a refractive surface, such as glass, it can split the path. For example, it can trace the reflection path for the even field, and the refraction path for the odd field. Or it can trace the reflection path for the even field and just stop for the odd field, to render a surface that has both perfect reflection and diffuse components in its BRDF. There are various possible behavior options. Figures 5 and 6 show the results of split-path tracing.

In the event of splitting the path, the throughput for both fields should be multiplied by two to account for half-resolution sampling. What you’re doing here is effectively sampling two paths with 50% probability each. To get correct results, the radiance returned from each path must be divided by that probability. In addition, the throughput should include the Fresnel term for reflections and its complement for refractions, where applicable, or a similar combination for other types of materials.
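
The weighting can be illustrated for a simple reflect/refract split. In the sketch below, `fresnel` is the reflection fraction for the surface and `base_throughput` is whatever was accumulated before the split; both names are assumptions for illustration.

```python
def split_throughputs(base_throughput, fresnel):
    """Return (reflection, refraction) throughputs for the two fields.

    Each field samples its path with probability 0.5, so each throughput is
    divided by 0.5 (multiplied by 2) on top of the Fresnel weighting.
    """
    reflection = tuple(2.0 * fresnel * t for t in base_throughput)
    refraction = tuple(2.0 * (1.0 - fresnel) * t for t in base_throughput)
    return reflection, refraction
```

When the two fields are later averaged, the factor of two cancels: with reflected radiance Lr and refracted radiance Lt, the mean of `2 * F * Lr` and `2 * (1 - F) * Lt` is `F * Lr + (1 - F) * Lt`, which is the expected blend.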

Obviously, when two checkerboard fields follow different paths and have different G-buffer surfaces, the final colors in these fields differ. Figure 7 shows that it is visible as a checker pattern after interleaving. This problem can be easily solved by applying a simple cross-shaped filter in the interleave pass for surfaces that follow split paths. However, it requires passing such information through one of the G-buffer channels. This solution, while easy, has an obvious drawback: reduced spatial resolution of reflections and refractions (Figure 8). In addition to that, when CSFR is combined with NVIDIA DLSS or regular Temporal Upsampling (TAAU), the upscaling filters tend to amplify the checkerboard pattern found on refractive objects.
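
A cross-shaped resolve could look roughly like this. The sketch works on a single-channel full-size image for brevity, and the specific weights (half from the pixel itself, half from the mean of its four direct neighbors, which all belong to the other field) are an assumption rather than the exact filter used in Quake II RTX. The `split_mask` stands in for the flag passed through a G-buffer channel.

```python
def cross_filter(image, split_mask):
    """Blend each split-path pixel with its four direct neighbors.

    image and split_mask are lists of rows; image holds floats and
    split_mask holds booleans marking pixels whose fields diverged.
    """
    h, w = len(image), len(image[0])
    out = [row[:] for row in image]
    for y in range(h):
        for x in range(w):
            if not split_mask[y][x]:
                continue
            neighbors = [
                image[ny][nx]
                for nx, ny in ((x - 1, y), (x + 1, y), (x, y - 1), (x, y + 1))
                if 0 <= nx < w and 0 <= ny < h
            ]
            if neighbors:
                other = sum(neighbors) / len(neighbors)
                out[y][x] = 0.5 * (image[y][x] + other)
    return out
```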

There is a better option to resolve the two different checkerboard fields into a single image. It involves storing the previous frame’s colors before the checkerboard interleave pass. The interleave pass tries to find the color for the other field in the previous frame first. If that color is unusable (for example, if the surface has moved), it uses the spatial blur on the other field in the current frame. The result of this temporal checkerboard resolve is a pixel-accurate image in case nothing is moving. Some of the pixels are combinations of scene samples with different sub-pixel jitter: one from the current frame and another from the previous frame. The upscaler, like TAAU, should be aware of that.

Similarly, there are two different motion vectors available for such split surfaces. A single motion vector is necessary for temporal AA, which should be applied after checkerboard interleaving. You can’t just average or filter motion vectors like colors, because the result would not correspond to the motion of either surface. A better but not perfect solution is to pick the motion vector for the brighter surface of the two, based on the assumption that artifacts on the brighter surface would be more visible. However, per-pixel selection of one or another motion vector can produce minor artifacts of its own. Various heuristics based on content can be applied, for example, using the refraction motion vectors for vertical glass, because players normally look at something through that glass, and reflection motion vectors for water.
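
The brightness-based selection can be sketched as follows. The Rec. 709 luminance weights are one common choice and are an assumption here, as are the function names.

```python
def luminance(rgb):
    """Relative luminance using Rec. 709 weights."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def pick_motion_vector(color_a, mv_a, color_b, mv_b):
    """Keep the motion vector of the brighter of the two field surfaces."""
    return mv_a if luminance(color_a) >= luminance(color_b) else mv_b
```

A content-based heuristic, such as always preferring refraction for vertical glass, would replace the luminance comparison with a material or orientation test.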

Another important drawback of this approach is the handling of multiple refractive surfaces in the same pixel, or similarly complex paths involving other types of surfaces. The problem is, there are only two checkerboard fields, so the path can only be split one time. This drawback can be worked around by following only one path after the split. The question is: which path? A natural answer to that question would be the one that is based on stochastic sampling of the Fresnel term. However, denoisers require a continuous surface in the G-buffer, and stochastic sampling would break continuity. So, the implementation must choose one path, most likely based on a threshold of the Fresnel term. It doesn’t look perfect, but it’s something.
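
A deterministic choice of this kind might look like the sketch below. It uses Schlick's approximation for the Fresnel term, which is a swapped-in assumption (the post does not say which Fresnel model is used), and the `f0` and `threshold` values are placeholders.

```python
def schlick_fresnel(cos_theta, f0):
    """Schlick's approximation of the Fresnel reflectance."""
    return f0 + (1.0 - f0) * (1.0 - cos_theta) ** 5

def choose_path(cos_theta, f0=0.04, threshold=0.5):
    """Pick one path after the fields are already used up by a prior split.

    A fixed threshold keeps the choice continuous across neighboring
    pixels, unlike stochastic selection, so the denoiser still sees a
    coherent G-buffer surface.
    """
    if schlick_fresnel(cos_theta, f0) > threshold:
        return "reflection"
    return "refraction"
```

With these placeholder values, glass viewed head-on follows the refraction path and glass viewed at a grazing angle follows the reflection path, with a hard transition in between.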

The checkerboarding approach could be extended to a greater number of fields, for example three or four. Those fields should be distributed on the screen in a uniform per-pixel fashion, to ensure the even sampling of surfaces. That would allow the implementation to split the path more than one time but would obviously require a larger spatial blur in the interleave pass in such a case, and the result would look blurrier. To be fair, I haven’t tried this.

Multi-GPU support

Anyone familiar with developing a renderer that works well on multiple GPUs knows that it is a painful experience. There are two primary approaches to multi-GPU rendering:

- Alternating frame rendering (AFR)

- Split frame rendering (SFR)

Historically, SFR was the first one to be implemented: 3dfx Voodoo graphics cards would split the frame into alternating scan lines (Scan-Line Interleave, or the original SLI) and render those on different GPUs independently. With the increasing complexity of rendering algorithms, SFR became less and less practical: for example, shadow maps must be rendered on both GPUs because they are needed for the entire frame; spatial filters such as bloom also need information about the entire frame; and so on. AFR became more popular because it didn’t have any of these drawbacks. However, after temporal filters and GPU-based simulations became widely used, AFR also became more challenging to implement efficiently, because such techniques require transferring large amounts of data between GPUs on every frame.

Spatiotemporal denoising is one of these algorithms that require a lot of information about the previous frame, so these transfers would become expensive and diminish the positive effect of AFR. Unfortunately, it also requires a lot of information about the current frame in its entirety, which makes classic versions of SFR impractical as well.

The pixel-scale CSFR algorithm described here is a perfect fit for a multi-GPU implementation of a real-time path tracer. Just assign each checkerboard field to its own GPU and let them work on the fields separately all the way until the final surface colors are computed. Then, transfer the final colors and motion vectors from the secondary GPUs to the primary GPU, interleave, and run post-processing. Post-processing should take a relatively small fraction of the frame time anyway, because path tracing is so expensive. I’ve observed speedups of over 70% when switching from one to two Turing class GPUs in Quake II RTX, which can be considered a good result.

One drawback of this approach is that it only works for a number of GPUs that is equal to the number of fields in the image, that is, two in the case of a regular two-field checkerboard pattern, and these GPUs should have similar performance. To distribute the rendering of a frame across more than two GPUs, you should use a more complex field pattern. However, that is likely to somewhat reduce image quality, as discussed in the previous section.

Conclusion

The PSR/CSFR combination described in this post solves many problems that arise during the development of a real-time path tracer: high-quality reflections and refractions with stochastic lighting, support for surfaces with ray-traced reflection and refraction at almost no additional cost, and support for multi-GPU SFR. There are also certain drawbacks: visible stipple patterns on some edges that are present in just one checkerboard field, slight blurriness of refractive surfaces, and no good solution for multiple refractive surfaces in one pixel.

These algorithms are implemented in Quake II RTX, and its source code is available on GitHub. They are also used in Minecraft with RTX.