Born in 1928 in Osaka, Japan, Osamu Tezuka is known in Japan and around the world as the “Father of Manga.” He’s also known as one of the world’s greatest illustrators, akin to Walt Disney, for his legendary comics that include Astro Boy, Princess Knight, Kimba the White Lion, Black Jack, and many more.

Tezuka died in 1989. To keep his legacy alive, a team of researchers and artists from Kioxia Corporation, a Japanese memory manufacturer, in collaboration with artists from Tezuka Productions and academic partners, used deep learning to create the world’s first AI-designed manga, reflecting Tezuka’s works.

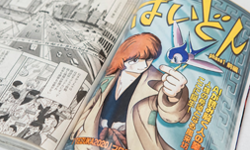

The new manga, PHAEDO, appears in the weekly comic magazine Morning. To generate the new characters and stories featured in the manga, the team analyzed hundreds of works by Tezuka, including Phoenix, Black Jack, and Astro Boy, and used NVIDIA StyleGAN for the character generation phase.

“The God of Manga, Osamu Tezuka, taught us the joy of depicting dreams through technology. At the same time, he insisted that science should not leave humanity behind. In 2020, we now live in a world that Osamu Tezuka imagined. If he was alive now, what would the future look like through his eyes?” the researchers wrote in their post, FutureMemories 01.

Ryohei Orihara, the project’s leader and a senior fellow at the Digital Process Innovation Center at Kioxia, explained, “Future Memories 01, ‘Tezuka 2020’, is a project in which AI and humans create a piece of work from Tezuka’s many memories. Manga has become well-known as a manifestation of Japanese culture around the world, and it is read and loved across generations.”

The company worked in tandem with academic partners, including Satoshi Kurihara, professor at the Faculty of Science and Technology at Keio University and professor of AI at the University of Electro-Communications.

“In order to have AI learn the characteristics of Osamu Tezuka, we used manpower and AI that specializes in image recognition to convert the parts of characters’ faces into data and analyze the development of various scenarios. With AI learning the characteristics of Osamu Tezuka through this data, we generated characters and storylines that have Tezuka’s characteristics,” Kurihara stated.

At the core of the character generation phase is StyleGAN, a generative adversarial network developed by NVIDIA researchers.

To build a training dataset to use with StyleGAN, Professor Kazushi Mukaiyama from Future University Hakodate enlisted his students’ help. Together, they compiled a dataset of over 10,000 facial images from Tezuka’s work that could be used to train the model.

“I had the computer learn character faces outside of Tezuka’s works as well as actual human faces, and I made it learn the augmented data generated by flipping the characters’ faces left and right,” Mukaiyama explained.
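The left-right flipping Mukaiyama describes is a common way to double a small image dataset. The following is a minimal sketch of that idea in NumPy, not the team's actual pipeline: the function name `augment_with_flips` and the tiny stand-in array are assumptions made for illustration.

```python
import numpy as np


def augment_with_flips(faces: np.ndarray) -> np.ndarray:
    """Return the original images followed by their left-right mirrored copies.

    `faces` is assumed to have shape (N, H, W) or (N, H, W, C),
    so axis 2 is the horizontal (width) axis.
    """
    flipped = faces[:, :, ::-1]  # reverse the width axis of every image
    return np.concatenate([faces, flipped], axis=0)


# Hypothetical stand-in for a face dataset: two 2x3 grayscale "images".
faces = np.arange(12, dtype=np.uint8).reshape(2, 2, 3)
augmented = augment_with_flips(faces)
```

In this sketch the augmented array holds twice as many images as the input, with each mirrored copy stored at index `i + N` for original image `i`.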

Training and inference were done using multiple NVIDIA V100 GPUs and the cuDNN-accelerated TensorFlow deep learning framework, in which StyleGAN is written.

In the first phase, StyleGAN generated the faces in a single process. “For this reason, the team attempted to generate more complete images, in which fine details such as the eyes, nose, and mouth are gradually generated from rough depictions, such as contours,” the researchers said.

Next, the team decided to use only female characters from Tezuka’s manga.

Ultimately, the team used transfer learning to incorporate thousands of data points, enabling the model to generate Tezuka-like characters reliably.

“By combining different individual features of the characters, we found that characters with unprecedented characteristics could be created. This mechanism relies on creating a number of variations by gradually changing the ratio of the two characters that are mixed,” the researchers explained.
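One common way to mix two generated characters at a gradually changing ratio is to interpolate between their latent vectors and pass each blend to the generator. The sketch below illustrates only that blending step. It assumes the mixing happens in latent space, which the researchers do not specify; the function name `blend_latents`, the random vectors, and the 512-dimensional size (StyleGAN's default latent size) are illustrative, and the generator call itself is left out.

```python
import numpy as np


def blend_latents(z_a: np.ndarray, z_b: np.ndarray, steps: int) -> np.ndarray:
    """Linearly interpolate between two latent vectors.

    Returns an array of shape (steps, dim). Row 0 is z_a, the last row is z_b,
    and the rows in between shift the mixing ratio evenly from one to the other.
    """
    ratios = np.linspace(0.0, 1.0, steps)
    return np.stack([(1.0 - t) * z_a + t * z_b for t in ratios])


# Hypothetical latent codes standing in for two characters.
rng = np.random.default_rng(0)
z_a = rng.standard_normal(512)
z_b = rng.standard_normal(512)

variations = blend_latents(z_a, z_b, steps=5)
# Each row of `variations` would be fed to a trained generator to render one face.
```

With five steps, the middle row is an even 50/50 blend of the two characters, and the rows on either side lean toward one parent or the other.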

Orihara says he initially wasn’t convinced transfer learning could do this, and that some of the aspects of the inner workings of the model are unknown. However, that’s why deep learning is interesting, he said. “…[B]ecause things like this can happen. It was really fun to see the characters being created, one after another,” he added.

Toshihiro Miura, the Editor-in-Chief of Morning, the publication in which the final product appears, said that he initially declined the project after learning how much human intervention was involved. He changed his mind after talking to Professor Kurihara and seeing that the project reaffirms the work of human artists and how ‘incredible’ they are.

“I realized this project could also serve as an analysis of how humans create manga,” Miura said. “I wanted to show that the process of creating an AI, which can draw the manga in these works, is in itself a human creative process,” he added.

In the end, Miura quipped that humans have an edge over the AI. “AI can’t go for a drink with the writers, can it? I guess that’s my edge,” he laughed.

The developers have published an in-depth blog post about the work, describing all phases of the human-AI collaboration. The researchers have also published a mini-documentary on YouTube, TEZUKA 2020 Official Video, produced by Tezuka Productions.

StyleGAN is available on GitHub.