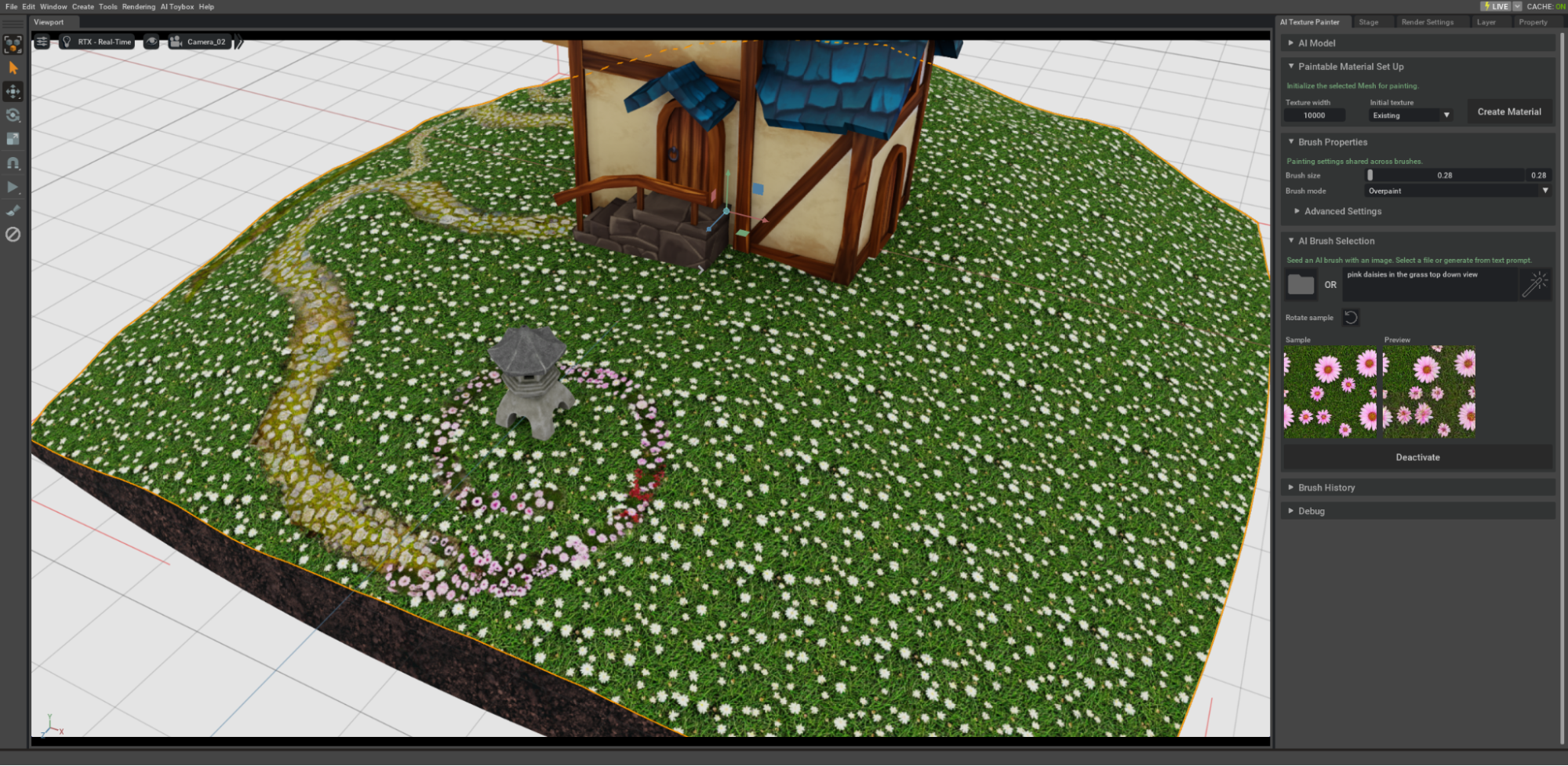

NVIDIA researchers took the stage at the SIGGRAPH Asia Real-Time Live event in Sydney to showcase generative AI integrated into an interactive texture painting workflow, enabling artists to paint complex, non-repeating textures directly on the surface of 3D objects.

Rather than generating complete results with only high-level user guidance, this prototype shows how AI can function as a brush in the hands of an artist. It enables the interactive addition of local details with infinite texture variations and realistic transitions. If you missed the live show, see the prerecorded version of this demo.

This is one in a series of NVIDIA research projects seeking to harness the power of AI to support creativity by developing new iterative workflows with real-time AI inference and direct control. The same group showcased Gen AI Materials at SIGGRAPH in August 2023, winning the Real-Time Live show.

AI texture painting takes AI one step further into the interactive loop. Rather than enabling you to generate and iterate on square tiling physically based rendering (PBR) materials that can then be applied to UV-mapped 3D objects, this project enables you to directly control the placement, scale, and direction of textures by interactive painting. Every patch of the 3D paint stroke is generated by AI in real time.

Tailoring AI for creativity

Among all the aspects of designing tools for creativity, direct iterative control over the outcome is one of the most important. One of the challenges in integrating modern foundational image AI models into interactive workflows, such as painting, is that AI is simply too good at imagining things that may not necessarily be the artist’s intent. In some cases, this demands careful prompt engineering and still produces unpredictable results that are difficult to control.

In the case of this interface, researchers opted not to include a text-based interface for either placement or identity of the texture. Following the proverb, “An image is worth a thousand words,” the AI brush is conditioned on an example image of the target texture.

Inspiration images are a common concept in 3D design. These images typically serve only as a reference and must be heavily processed before they can be integrated into the 3D scene.

The Gen AI Materials presentation at SIGGRAPH showed how an imperfect inspiration image can be converted to a tileable PBR material, making it much easier to bring inspiration from the real world into 3D workflows. In this new demo, inspiration images of any real-world texture can be turned into AI brushes that artists can use for painting in 3D. You control not just the stroke shape, but also the brush size and texture direction.

The AI in the prototype is designed to ensure that the brushstroke includes variations of the reference, without deviating too much from its identity. The backbone foundational AI model also provides realistic transitions between regions of different textures, without needing any reference for such transitions. For example, the AI can fill in a realistic transition between the original grass texture and a rocky path interactively painted using the AI texture brush.

What if there is no inspirational image available to seed the brush?

Text-to-image AI can be used to generate several versions. You pick the exact brush you would like to use, opening up a wide array of creative possibilities with direct artist control in the interactive loop.

Empowered by NVIDIA technologies

Several NVIDIA technologies come together to enable this prototype. One of the requirements of interactive interfaces, as well as the Real-Time Live program, is speed. This prototype achieves an inference speed of 0.15-0.23 s per brush stamp, enabled by accelerated inference on Tensor Cores in NVIDIA GPUs.

This prototype was developed as an NVIDIA Omniverse extension. Omniverse is a modular development platform of APIs and microservices for building applications and services powered by OpenUSD and NVIDIA RTX, empowering developers to build complex 3D tools incorporating AI.

In this case, efficient raycasting from the integrated NVIDIA Warp Library and efficient dynamic texture support allowed AI to deliver fast updates directly to the rendered object.
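To make the raycasting step more concrete, here is a minimal CPU sketch of how a brush position could be mapped to texture coordinates. It is not the prototype's code and does not use the Warp API. It is a plain-Python Möller-Trumbore ray-triangle test, and the function name `ray_triangle_uv` and its parameters are hypothetical names chosen for illustration. A GPU implementation would run the same kind of test against the whole mesh in parallel.

```python
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Vec2 = Tuple[float, float]


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _cross(a: Vec3, b: Vec3) -> Vec3:
    return (
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    )


def ray_triangle_uv(
    origin: Vec3,
    direction: Vec3,
    verts: Sequence[Vec3],
    uvs: Sequence[Vec2],
    eps: float = 1e-9,
) -> Optional[Tuple[float, Vec2]]:
    """Hypothetical helper: intersect a ray with one triangle.

    Returns (distance along the ray, interpolated UV) or None on a miss.
    """
    v0, v1, v2 = verts
    e1, e2 = _sub(v1, v0), _sub(v2, v0)
    p = _cross(direction, e2)
    det = _dot(e1, p)
    if abs(det) < eps:  # ray is parallel to the triangle plane
        return None
    inv = 1.0 / det
    s = _sub(origin, v0)
    b1 = _dot(s, p) * inv  # barycentric weight of v1
    if b1 < 0.0 or b1 > 1.0:
        return None
    q = _cross(s, e1)
    b2 = _dot(direction, q) * inv  # barycentric weight of v2
    if b2 < 0.0 or b1 + b2 > 1.0:
        return None
    t = _dot(e2, q) * inv
    if t <= eps:  # intersection lies behind the ray origin
        return None
    b0 = 1.0 - b1 - b2
    u = b0 * uvs[0][0] + b1 * uvs[1][0] + b2 * uvs[2][0]
    v = b0 * uvs[0][1] + b1 * uvs[1][1] + b2 * uvs[2][1]
    return t, (u, v)


# Example: a ray cast straight down onto a unit right triangle.
tri = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0)]
tri_uv = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
hit = ray_triangle_uv((0.25, 0.25, 1.0), (0.0, 0.0, -1.0), tri, tri_uv)
miss = ray_triangle_uv((2.0, 2.0, 1.0), (0.0, 0.0, -1.0), tri, tri_uv)
```

The interpolated UV tells the painter which texel sits under the brush, which is where a generated patch would be written.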

Under the hood, the method relies on the NVIDIA Kaolin Library for 3D deep learning for efficient offscreen rasterization and texture back-projection directly on the GPU.
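As a rough illustration of what writing a generated patch back into a texture involves, the sketch below blends a square stamp into a grayscale texture with a feathered mask. This is a deliberately simplified stand-in, not the prototype's method and not the Kaolin API: it pastes directly in UV space on the CPU, whereas the demo rasterizes offscreen and back-projects on the GPU. The names `feather_mask` and `blend_stamp` are hypothetical.

```python
import math
from typing import List, Tuple

Image = List[List[float]]  # grayscale image as rows of floats in [0, 1]


def feather_mask(size: int) -> Image:
    """Radial weights: 1 at the stamp center, falling linearly to 0 at the edge."""
    c = (size - 1) / 2.0
    r = max(c, 1e-9)
    return [
        [max(0.0, 1.0 - math.hypot(x - c, y - c) / r) for x in range(size)]
        for y in range(size)
    ]


def blend_stamp(texture: Image, patch: Image, center_uv: Tuple[float, float]) -> Image:
    """Alpha-blend a square patch into the texture, centered at a UV location.

    Assumes UV (0, 0) maps to texel [0][0]; real pipelines may flip the v axis.
    """
    h, w = len(texture), len(texture[0])
    size = len(patch)
    mask = feather_mask(size)
    cx = int(center_uv[0] * (w - 1))
    cy = int(center_uv[1] * (h - 1))
    half = size // 2
    for py in range(size):
        for px in range(size):
            tx, ty = cx - half + px, cy - half + py
            if 0 <= tx < w and 0 <= ty < h:  # clip at the texture border
                a = mask[py][px]
                texture[ty][tx] = (1.0 - a) * texture[ty][tx] + a * patch[py][px]
    return texture


# Example: stamp a 5x5 white patch into the middle of a 7x7 black texture.
tex = [[0.0] * 7 for _ in range(7)]
white = [[1.0] * 5 for _ in range(5)]
blend_stamp(tex, white, (0.5, 0.5))
```

The feathered mask makes overlapping stamps fade into each other instead of leaving hard seams. In the demo, the realistic transitions themselves come from the AI model rather than from simple blending like this.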

Acknowledgments

This demo is the result of a cross-team effort by Anita Hu, Nishkrit Desai, Hassan Abu Alhaija, Alexander Zook, Seung Wook Kim, Ashley Goldstein, Carsten Klove, Daniela Hasenbring, Rajeev Rao, and Masha Shugrina. Anita Hu and Alexander Zook delivered the live presentation.