Generating road layouts for different city styles is a time-consuming task. Artists need to manually create the road geometry and adjust parameters such as width and curvature by comparing the result with real-world maps. But what if there was a better way to do this?

At the International Conference on Computer Vision in Seoul, Korea, NVIDIA researchers, in collaboration with the University of Toronto, the Vector Institute, and MIT, presented Neural Turtle Graphics (NTG), a novel generative model for spatial graphs that, as one of its applications, can automatically generate city road layouts.

“NTG is a sequential generative model parameterized by a neural network. It iteratively generates a new node and an edge connecting to an existing node conditioned on the current graph,” the researchers stated in their paper.
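
The loop described in that quote can be illustrated with a minimal Python sketch. This is not the researchers' implementation: the function `propose_step` is a hypothetical stand-in that samples a random heading and step length where NTG would query its trained neural network, and the dictionary-based graph layout, the function names, and the parameter ranges are all assumptions made for illustration.

```python
import math
import random


def propose_step(graph, node_id, rng):
    """Hypothetical stand-in for NTG's neural network.

    The real model conditions on the current graph; this placeholder only
    reads the chosen node's position and samples a random turtle-style move.
    """
    x, y = graph["nodes"][node_id]
    angle = rng.uniform(0.0, 2.0 * math.pi)   # assumed heading range
    length = rng.uniform(0.5, 1.5)            # assumed step length range
    return (x + length * math.cos(angle), y + length * math.sin(angle))


def generate_graph(num_new_nodes, seed=0):
    """Grow a spatial graph one node and one edge at a time."""
    rng = random.Random(seed)
    graph = {"nodes": [(0.0, 0.0)], "edges": []}  # start from a single root node
    for _ in range(num_new_nodes):
        # Pick an existing node to extend from.
        parent = rng.randrange(len(graph["nodes"]))
        # Generate a new node conditioned on the current graph.
        new_pos = propose_step(graph, parent, rng)
        graph["nodes"].append(new_pos)
        new_id = len(graph["nodes"]) - 1
        # Connect the new node to the existing one.
        graph["edges"].append((parent, new_id))
    return graph


layout = generate_graph(num_new_nodes=10)
print(len(layout["nodes"]), "nodes,", len(layout["edges"]), "edges")
```

Because each step adds exactly one node and one edge, this toy version always produces a tree. It shows only the iterative structure of the generation process, not the quality or topology of layouts a learned model would produce.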

“City road layout modeling is an important problem with applications in various fields,” the researchers stated. “In urban planning, extensive simulation of city layouts are required for ensuring that the final construction leads to effective traffic flow and connectivity. Further demand comes from the gaming industry where on-the-fly generation of new environments enhances user interest and engagement.”

Road layout generation also plays an important role for self-driving cars, where diverse virtual city blocks are created for testing autonomous agents.

Most existing methods for city layout generation are still largely based on procedural modeling with hand-designed features rather than on a data-driven, end-to-end learning paradigm. While these methods guarantee valid road topologies from user-specified attribute inputs, the attributes are all hand-engineered and inflexible to use.

For example, if one wishes to generate a synthetic city that resembles London, tedious manual tuning of the attributes is required to get plausible results. Moreover, these methods cannot trivially be used for aerial road parsing, the researchers explained.

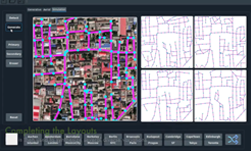

By combining the two tasks of aerial parsing and generation, NTG automatically simulates new cities for which it has not seen any part of the map during training. NTG can generate plausible new versions of cities, supports interactive generation of city road layouts, and can also be used for aerial road parsing.

To train and run their model, the team used an NVIDIA TITAN V GPU, with the cuDNN-accelerated PyTorch deep learning framework.

In future work, the researchers say they will focus on the generation of other city elements such as buildings and vegetation.