During the IEEE International Conference on Robotics and Automation (ICRA) May 13-17 in Yokohama, Japan, many people will be discussing geometric fabrics. That topic is the subject of one of seven papers submitted by members of the NVIDIA Robotics Research Lab, along with collaborators, and featured at ICRA this week.

What are geometric fabrics?

In robotics, trained policies are approximate by nature. They usually do the right thing, but sometimes they move the robot too fast, collide with things, or jerk the robot around. There is no guarantee about how they will behave.

So, any time that someone deploys trained policies, and especially reinforcement learning (RL)-trained policies, on a physical robot, they use a layer of low-level controllers to intercept the commands from the policy and translate them so that they satisfy the limitations of the hardware.

When you’re training RL policies, you want to run those controllers alongside the policy during training. The researchers determined that a unique value they could supply with their GPU-accelerated RL training tools was to vectorize those controllers so that they’re available during both training and deployment. That’s what this research does.
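To make the idea of a vectorized low-level controller concrete, here is a minimal sketch in NumPy. It is not the geometric fabric controller from the paper; it substitutes a simple clamp on joint velocities and joint positions, applied to a whole batch of simulated environments in one call. The function name `filter_commands`, the limits, and the array shapes are all assumptions made for illustration.

```python
import numpy as np

def filter_commands(q, dq_cmd, q_min, q_max, dq_max, dt):
    """Clamp a batch of joint-velocity commands to hardware limits.

    q, dq_cmd: arrays of shape (num_envs, num_joints).
    q_min, q_max, dq_max: arrays of shape (num_joints,), broadcast over envs.
    This is a hypothetical stand-in for a real low-level controller.
    """
    # Limit speed first so no joint is asked to move faster than allowed.
    dq = np.clip(dq_cmd, -dq_max, dq_max)
    # Predict the next joint position and clamp it to the joint range.
    q_next = np.clip(q + dq * dt, q_min, q_max)
    # Return the velocity that actually reaches the clamped position.
    return (q_next - q) / dt

# Hypothetical limits for a 2-joint robot, applied to 3 environments at once.
q_min = np.array([-1.0, -0.5])
q_max = np.array([1.0, 0.5])
dq_max = np.array([2.0, 2.0])
q = np.array([[0.0, 0.0], [0.95, 0.0], [0.0, -0.45]])
dq_cmd = np.array([[5.0, -1.0], [1.0, 0.0], [0.0, -3.0]])
safe = filter_commands(q, dq_cmd, q_min, q_max, dq_max, dt=0.1)
```

Because the same function works on one environment or on thousands, the filter used during training can be the same one used on the physical robot, which is the property the paragraph above describes.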

For example, companies working on humanoid robots may show demos with low-level controllers that balance the robot but also keep the robot from running its arms into its own body.

The controllers the researchers chose to vectorize are from a past line of work on geometric fabrics. The paper, Geometric Fabrics: Generalizing Classical Mechanics to Capture the Physics of Behavior, won a best paper award at last year’s ICRA.

DeXtreme policies

The in-hand manipulation tasks that the researchers address in this year’s paper also come from a well-known line of research on DeXtreme.

In this new work, the researchers merge those two lines of research to train DeXtreme policies on top of vectorized geometric fabric controllers. This keeps the robot safer, guides policy learning through the nominal fabric behavior, and systematizes sim2real training and deployment to get one step closer to using RL tooling in production settings.

This creates a foundational infrastructure enabling the researchers to quickly iterate to get the domain randomization right during training for successful sim2real deployment. For instance, by iterating quickly between training and deployment, they could adjust the fabric structure and add substantial random perturbation forces during training to achieve a level of robustness far superior to previous work.
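As a rough illustration of adding random perturbation forces during training, the sketch below samples one random push per simulated environment at each step. The function name `sample_perturbation_forces` and the parameters `max_force` and `apply_prob` are hypothetical, and the values are placeholders; the paper's actual randomization scheme and magnitudes are not given here.

```python
import numpy as np

def sample_perturbation_forces(rng, num_envs, max_force, apply_prob):
    """Sample one random 3D push per environment for a training step."""
    # Random directions: normalize Gaussian samples to unit length.
    directions = rng.normal(size=(num_envs, 3))
    directions /= np.linalg.norm(directions, axis=1, keepdims=True)
    # Random magnitudes in [0, max_force).
    magnitudes = rng.uniform(0.0, max_force, size=(num_envs, 1))
    # Only a random subset of environments is pushed on any given step.
    active = rng.random(size=(num_envs, 1)) < apply_prob
    return directions * magnitudes * active

# Placeholder settings: 4096 parallel environments, pushes up to 1.5 N,
# applied to roughly a quarter of the environments per step.
rng = np.random.default_rng(seed=0)
forces = sample_perturbation_forces(rng, num_envs=4096, max_force=1.5, apply_prob=0.25)
```

In a training loop, forces like these would be applied to the manipulated object or the robot links by the simulator before each physics step, so the policy has to recover from disturbances it will also meet on real hardware.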

In the prior DeXtreme work, the real-world experiments proved extremely hard on the physical robot, wearing down the motors and sensors and changing the behavior of underlying control through the course of experimentation. At one point, the robot broke down and started smoking!

But with geometric fabric controllers underlying the policy and protecting the robot, the researchers found that they could be much more liberal in deploying and testing policies without worrying about the robot destroying itself.

For more information, see Geometric Fabrics: A Safe Guiding Medium for Policy Learning or watch the DeXtreme example videos.

More robotics research at ICRA

Other noteworthy papers submitted this year include the following:

- SynH2R

- Out of Sight, Still in Mind

- Point Cloud World Models

- SKT-Hang

SynH2R

The SynH2R authors propose a framework to generate realistic human grasping motions suitable for training a robot. For more information, see SynH2R: Synthesizing Hand-Object Motions for Learning Human-to-Robot Handovers.

Out of Sight, Still in Mind

The RDMemory authors test a robotic arm’s reaction to things previously seen but then occluded from view to ensure that it responds reliably in various environments. This work was done both in simulation and in real-world experiments.

For more information, see Out of Sight, Still in Mind: Reasoning and Planning About Unobserved Objects With Video Tracking Enabled Memory Models or watch the RDMemory example videos.

Point Cloud World Models

The Point Cloud World Models researchers set up a novel Point Cloud World Model (PCWM) and point cloud-based control policies that were shown to improve performance, reduce learning time, and increase robustness for robotic learners.

For more information, see Point Cloud Models Improve Visual Robustness in Robotic Learners.

SKT-Hang

The SKT-Hang authors look at the problem of how to use a robot to hang up a wide variety of objects on different supporting structures (Figure 1). While this might seem like an easy problem to solve, the variations in the shapes of both the objects and the supporting structures pose multiple challenges for the robot to overcome.

For more information, see SKT-Hang: Hanging Everyday Objects via Object-Agnostic Semantic Keypoint Trajectory Generation and the /HCIS-Lab/SKT-Hang GitHub repo.

Robots with surgical precision

Several new research papers have applications in hospital surgical environments.

ORBIT-Surgical

ORBIT-Surgical is a physics-based surgical robot simulation framework with photorealistic rendering powered by NVIDIA Isaac Sim on the NVIDIA Omniverse platform.

It uses GPU parallelization to train reinforcement learning and imitation learning algorithms that facilitate the study of robot learning to augment human surgical skills. It also enables realistic synthetic data generation for active perception tasks. The researchers use ORBIT-Surgical to demonstrate sim-to-real transfer of learned policies onto a physical dVRK robot.

The underlying robotics simulation application for ORBIT-Surgical will be released as a free, open-source package upon publication.

For more information, see ORBIT-Surgical: An Open-Simulation Framework for Learning Surgical Augmented Dexterity.

DefGoalNet

The DefGoalNet paper focuses on shape servoing, a robotic task dedicated to controlling deformable objects to achieve a specific goal shape. For more information, see DefGoalNet: Contextual Goal Learning From Demonstrations for Deformable Object Manipulation.

Meet NVIDIA Robotics partners at ICRA

NVIDIA robotics partners are showing off their latest developments at ICRA.

Zürich-based ANYbotics presents ANYmal Research, which provides a complete software package that grants users access to low-level controls down to the ROS system. ANYmal Research is also a community of hundreds of researchers working in top robotics research centers, including the AI Institute, ETH Zürich, and the University of Oxford. (Booth IC010)

Munich-based Franka Robotics highlights its work with NVIDIA Isaac Manipulator, an NVIDIA Jetson-based AI companion to power robot control, and the Franka Toolbox for MATLAB. (Booth IC050)

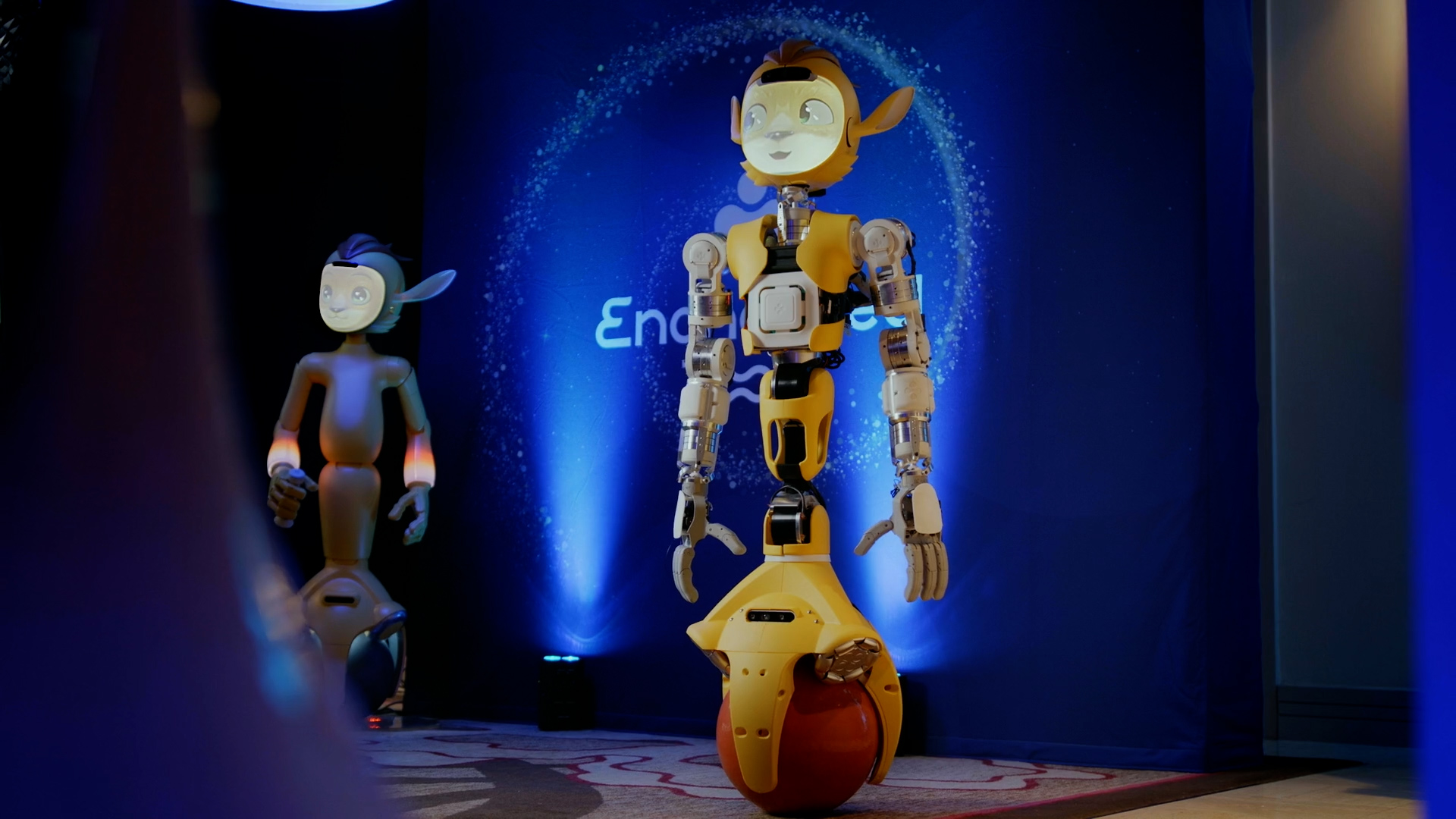

Enchanted Tools shows off its Jetson-powered Mirokaï robots. (Booth IC053)

Learn more

The NVIDIA Robotics Research Lab is a Seattle-based center of excellence focused on robot manipulation, perception, and physics-based simulation. It’s part of NVIDIA Research, which has more than 300 leading researchers around the globe, focused on topics spanning AI, computer graphics, computer vision, and self-driving cars.