The 65th annual Daytona 500 will take place on February 19, 2023, and for many fans this elite NASCAR event is the pinnacle of car racing. There are no plans yet for an autonomous vehicle to race against cars with drivers, but it's not hard to imagine that scenario at a future race. At CES in early January, there was already a competition to put the best autonomous racing vehicles to the test.

To make such a vision reality, you need some serious technology under the hood to power the vehicle and ensure proper track navigation.

That need for serious technology is why 3D Lidar is one of the main tools used in autonomous mapping and navigation. It isn't sensitive to lighting conditions, it can measure surface reflectivity through its intensity channel, it can provide a complete 360-degree view of the environment, and it doesn't require any “learning” to detect obstacles.

The Lidar point cloud also makes mapping and localization straightforward, so the vehicle knows where it is at all times. But Lidar can be expensive and bulky, which limits its usefulness for many developers, especially those working with a smaller-than-normal race car.

So what if there was an alternative to using Lidar that could accomplish similar functionality in an autonomous race car?

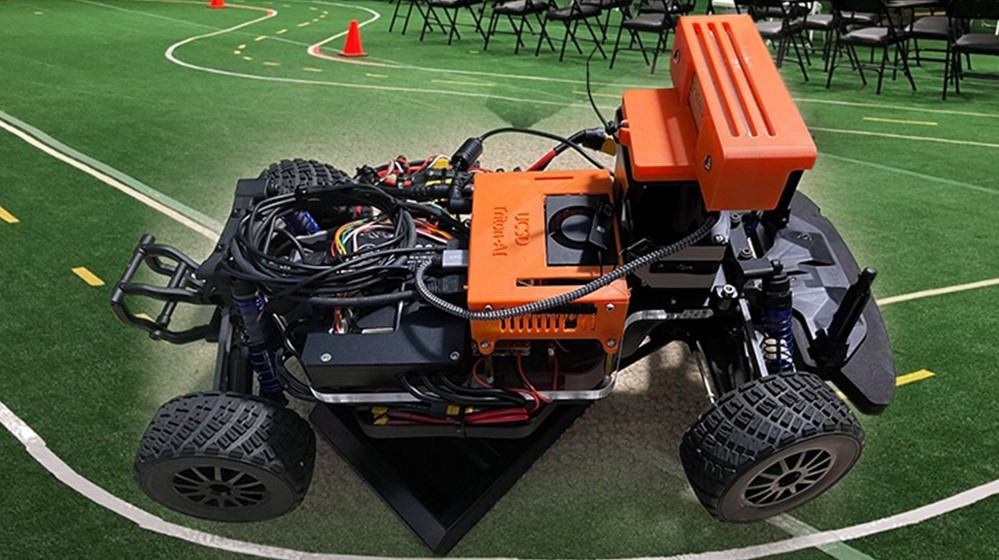

Triton AI Data Science project from UC San Diego

As part of their 2021 Triton AI Data Science capstone program at UC San Diego, three students set out to build an AI-powered autonomous miniature race car. They explored the difficulties of achieving effective autonomous navigation and simultaneous localization and mapping (SLAM) with inexpensive cameras small enough to fit on their race car, instead of costly Lidar sensors.

Using a camera in place of Lidar did have drawbacks: it required special programming to handle varying light conditions, and it took multiple cameras to achieve 360-degree vision.
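To put a rough number on the 360-degree point: if each camera covers a given horizontal field of view (FOV), the minimum number of identical cameras for full coverage is the ceiling of 360 divided by the new coverage each camera adds. The sketch below is a back-of-the-envelope estimate, not part of the students' project. `cameras_for_360` is a hypothetical helper, the optional overlap between neighbouring cameras is an assumed parameter, and the 87-degree figure is an assumption based on the horizontal depth FOV Intel lists for the D455 (check the datasheet for your exact stream).

```python
import math


def cameras_for_360(hfov_deg: float, overlap_deg: float = 0.0) -> int:
    """Minimum number of identical cameras needed to cover 360 degrees.

    hfov_deg: horizontal field of view of a single camera, in degrees.
    overlap_deg: assumed overlap between neighbouring cameras, e.g. to
    leave room for stitching or matching features across views.
    """
    effective = hfov_deg - overlap_deg  # new coverage each extra camera adds
    if effective <= 0:
        raise ValueError("overlap must be smaller than the field of view")
    return math.ceil(360.0 / effective)


# Assumed ~87 degree horizontal FOV (D455 depth stream, per Intel's datasheet).
print(cameras_for_360(87))      # 5
print(cameras_for_360(87, 10))  # 5
print(cameras_for_360(120))     # 3
```

By this estimate, a D455-class camera would need about five units to see all the way around, which can offset part of the cost and size advantage over a single 360-degree Lidar.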

Their project explores approaches to autonomous race car navigation using ROS; Detectron2's object detection and image segmentation capabilities for localization, obstacle detection, and avoidance; and RTAB-Map (Real-Time Appearance-Based Mapping) for mapping.

The students, Youngseo Do, Jay Chong, and Siddharth Saha, had three main goals in their research:

- Mapping and localization with a single camera and other sensory information using the RTAB-Map SLAM algorithm

- Obstacle avoidance and lane following with a single camera using Facebook AI Detectron2 Deep Learning and ROS

- Fine-tuning the camera to be less sensitive to varying light conditions using ROS rqt_reconfigure

Details about their project are available in the following video:

Here’s a quick demo of the cars in action:

How did the team work towards their goals?

Given the complexity of the project, the students opted to break it into three distinct components:

- Mapping and localization through RTAB-Map SLAM

- Object avoidance using Detectron2

- Tuning the camera’s light sensitivity

Mapping and localization through RTAB-Map SLAM

The team considered navigation options including GPS, but noted in their presentation that GPS can be unreliable during fast motion and when accurate readings are needed. They chose RTAB-Map because it was compatible with their sensors and because they could try it out in an online simulator, which helped them become familiar with how it works.

Object avoidance using Detectron2

The aim was to extract obstacle and track boundary information from the camera images so that the race car can determine its optimal next move while driving.
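As an illustration of how segmentation output might be turned into a driving decision, here is a minimal, manually coded rule: steer toward the centroid of free space in the lower half of the image, which is the part closest to the car. This is a sketch under stated assumptions, not the students' code. It assumes a single combined boolean mask that is True wherever a pixel is an obstacle or lies off the track; with Detectron2, such a mask could plausibly be built by OR-ing the per-instance `pred_masks` returned in `outputs["instances"]` by `DefaultPredictor`. The function name `steering_from_mask` and the left-negative / right-positive sign convention are hypothetical.

```python
import numpy as np


def steering_from_mask(blocked: np.ndarray, max_steer: float = 1.0) -> float:
    """Hypothetical rule: steer toward the centroid of free space.

    blocked: H x W boolean array, True where a pixel belongs to an obstacle
    (e.g. a traffic cone) or lies outside the track boundary.
    Returns a value in [-max_steer, max_steer]; negative steers left,
    positive steers right.
    """
    h, w = blocked.shape
    if w < 2:
        return 0.0  # too narrow to tell left from right
    near = blocked[h // 2:, :]          # lower half of the image is closest to the car
    free_per_col = (~near).sum(axis=0)  # drivable pixels in each column
    total = free_per_col.sum()
    if total == 0:
        return 0.0  # nothing drivable in view; a real controller would likely brake
    cols = np.arange(w)
    centroid = (free_per_col * cols).sum() / total
    half = (w - 1) / 2.0
    offset = (centroid - half) / half   # -1 (far left) .. +1 (far right)
    return float(np.clip(offset * max_steer, -max_steer, max_steer))


# Usage: block the left half of a tiny 4 x 4 frame (say, a cone on the left).
mask = np.zeros((4, 4), dtype=bool)
mask[:, :2] = True
print(steering_from_mask(mask))  # about 0.667, i.e. steer right
```

Hand-written rules like this are easy to inspect but grow brittle as more sensors and cases are added, which is the limitation the students later hoped to address with reinforcement learning.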

Tuning the camera’s light sensitivity

As the students noted in their presentation, the camera had “to be as insensitive to the light as possible” because changing light conditions altered what the car sees, especially the yellow line in the center of the race track.
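The students tuned the camera itself through ROS rqt_reconfigure. A complementary software-side technique, which is not described in their project and is shown here only as an illustration, is to detect the yellow line by thresholding hue in HSV space rather than raw RGB values, since a pixel's hue changes much less than its brightness when lighting changes. The sketch assumes NumPy and an H x W x 3 uint8 RGB frame; `yellow_mask` and its default thresholds are hypothetical and would need tuning on real footage, for example as live parameters adjusted with rqt_reconfigure.

```python
import numpy as np


def yellow_mask(rgb: np.ndarray,
                hue_range=(40.0, 70.0),
                min_sat: float = 0.4,
                min_val: float = 0.2) -> np.ndarray:
    """Return a boolean mask of 'yellow' pixels in an H x W x 3 uint8 RGB image.

    Thresholding on hue instead of raw RGB makes the test depend mostly on
    colour, so the same line in shadow and in bright light maps to a
    similar hue. All threshold defaults are illustrative assumptions.
    """
    img = rgb.astype(np.float64) / 255.0
    r, g, b = img[..., 0], img[..., 1], img[..., 2]
    cmax = img.max(axis=-1)
    cmin = img.min(axis=-1)
    delta = cmax - cmin
    safe = np.where(delta > 0, delta, 1.0)  # avoid dividing by zero on greys

    # Standard RGB -> HSV hue in degrees, chosen by which channel is largest.
    hue = np.select(
        [cmax == r, cmax == g],
        [60.0 * np.mod((g - b) / safe, 6.0), 60.0 * ((b - r) / safe + 2.0)],
        default=60.0 * ((r - g) / safe + 4.0),
    )
    sat = np.where(cmax > 0, delta / np.where(cmax > 0, cmax, 1.0), 0.0)
    val = cmax  # HSV "value" is the brightest channel

    lo, hi = hue_range
    return (delta > 0) & (hue >= lo) & (hue <= hi) & (sat >= min_sat) & (val >= min_val)


# Bright yellow, dimly lit yellow, near-black, white, blue, cone orange.
pixels = np.array([[[255, 255, 0], [128, 128, 0], [20, 20, 0],
                    [255, 255, 255], [0, 0, 255], [255, 100, 0]]], dtype=np.uint8)
print(yellow_mask(pixels).tolist())  # [[True, True, False, False, False, False]]
```

Note that the dimly lit yellow still passes while orange (a typical cone colour) does not; a raw RGB threshold tuned for the bright case would likely reject the dim one.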

Project hardware

To bring this project to life, the students used the following hardware:

- A JetRacer with a Jetson Xavier NX developer kit inside.

- Intel RealSense D455: A depth-sensing camera designed for collision avoidance, 3D scanning, digital signage, and volumetric measurements.

- A Flipsky VESC speed controller to send movement commands to the JetRacer.

The car was tested on an indoor racetrack with multiple traffic cones placed on it. The goal was for the race car to avoid the cones while staying within the track at all times.

Future improvements

At the time of their project, the students wanted to move from manually coded driving rules to reinforcement learning techniques to make the car's behavior more flexible, which would help as more sensors and rules are added. They also wanted to train the model to detect other cars so that it could compete in head-to-head races, but the pandemic prevented that at the time.

Is NASCAR in their future? It could be. Siddharth Saha, one of the students from this project, and fellow UC San Diego student Haoru Xue were the technical leads on two autonomous vehicle racing challenges:

- In November, their team placed second at the Indy Autonomous Challenge race held at the Texas Motor Speedway.

- In early January, their team took third at the Autonomous Challenge at CES at the Las Vegas Motor Speedway.

Both students started their study of autonomous vehicles during their work with NVIDIA Jetson at UC San Diego.

For more information about this Jetson Project of the Month, see the capstone project website.

For more information about autonomous race cars, see what Bay Area developers have worked on previously: DIY Autonomous Car Racing with NVIDIA Jetson.