Over 55% of the global population uses social media, sharing online content with just one click. Alongside content that connects people and entertains them, you can also spot harmful narratives that pose real-life threats.

That’s why Ammar Haris, VP of Engineering at Pendulum, wants his company’s AI to help clients gain deeper insight into the harmful content being generated about them online. These are the kinds of falsehoods that often spread like a fast-moving wildfire across video, audio, and text on social media platforms.

As is the case with wildfires, early detection of a detrimental online narrative could hold the key to snuffing out any damaging effects.

Pendulum is a member of the NVIDIA Inception program, which helps startups evolve by providing access to cutting-edge technology and NVIDIA experts.

Speech AI and NLP for society’s wellbeing

Back in 2021, Sam Clark and Mark Listes founded Pendulum with the goal of helping clients identify damaging content. The business partners knew that their platform could apply speech AI and natural language processing (NLP) to help protect online reputations or even help keep staff safe in real time.

Over the next year, the engineering team developed a suite of AI systems to detect and characterize harmful falsehoods that threaten the wellbeing of society worldwide.

Today, Pendulum’s platform is making previously undiscoverable narratives finally accessible, despite the massive quantity of data to process. Engineers at Pendulum are familiar with the challenges of searching troves of media.

“Video on YouTube, BitChute, Rumble, and TikTok, not to mention audio in podcasts, were hard to search and even harder to put into context. That’s why, too often, only the metadata is searched in others’ approaches, rather than by the actual raw content,” explained Haris.

AI engines finding real falsehoods

How has the data processing landscape changed? By using accelerated speech AI and NLP, Pendulum’s Intelligence Explorer and Narrative Engine now enable smart, deep searches that home in on the needle (the harmful narrative) in a haystack of enormous media corpora.

In fact, you are likely already familiar with many cases of large-scale online falsehoods and how readily they mutate. To date, for instance, Pendulum’s engine has zeroed in on the following:

- False information about celebrities

- Physical threats against a company’s employees

- Conspiracies about supply chain delays

- COVID-19 vaccine disinformation

- Disinformation about the war in Ukraine

- A recent attempt to cause harm at the FIFA World Cup 2022

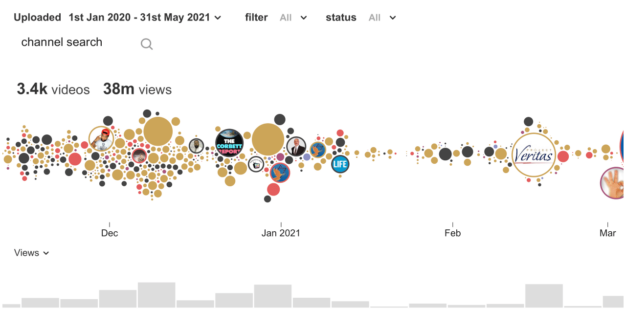

Figure 1 shows that Pendulum identified 3,360 videos, accounting for 38M views, that likely support the false narrative that COVID vaccines modify your DNA. Of these, 1,600 videos were still available on the platform as of this writing, accounting for 16M views. In the figure, the narrative is plotted over time as circles, with each circle’s size corresponding to the number of views.

How does Narrative Engine detect these narratives online and generate alerts? Pendulum developed an automated method to discover and classify YouTube channels, primarily by analyzing text produced by automatic speech recognition (ASR). The method is capable of transcribing tens of thousands of videos every day.

The engine combs through the text, searching billions of items for supporting data in the form of dialogs, speeches, podcasts, and talk tracks, largely independent of media type or social media platform. The content of interest then gets tagged to alert the client to any risks or trends identified.
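The tag-and-alert step can be pictured with a minimal Python sketch. It assumes an upstream classifier has already scored each transcribed snippet for how strongly it supports a narrative. The `Snippet` type, the `threshold` and `min_hits` parameters, and the alert rule (enough flagged snippets from one source) are hypothetical illustrations, not Pendulum’s actual logic.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Snippet:
    platform: str   # e.g. "youtube" or "podcast"
    source: str     # channel or show the snippet came from
    text: str       # transcript text of the snippet
    score: float    # hypothetical upstream score: does this support a narrative?

def tag_and_alert(snippets, threshold=0.5, min_hits=2):
    """Tag snippets scoring at or above `threshold`, then return an alert
    count for every (platform, source) with at least `min_hits` tagged snippets."""
    tagged = defaultdict(list)
    for s in snippets:
        if s.score >= threshold:
            tagged[(s.platform, s.source)].append(s)
    return {key: len(hits) for key, hits in tagged.items() if len(hits) >= min_hits}

snippets = [
    Snippet("youtube", "chan_a", "...", 0.91),
    Snippet("youtube", "chan_a", "...", 0.77),
    Snippet("podcast", "show_b", "...", 0.95),
    Snippet("youtube", "chan_c", "...", 0.12),
]
print(tag_and_alert(snippets))  # {('youtube', 'chan_a'): 2}
```

Keying alerts on the source rather than on individual snippets is one way to turn scattered detections into a signal a client can act on; a production system might also weigh views, recency, and spread across platforms.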

The technology behind the solution

ASR processing speed can become a bottleneck unless a GPU-accelerated implementation can handle the required throughput. NVIDIA Riva Enterprise made sense for Pendulum and turned out to be a great solution.

“The transcripts are more accurate than other cloud services that we evaluated, while achieving a higher throughput and at a lower cost,” Haris said.

With Riva’s Helm charts, the engineering team avoided much of the usual setup overhead and was able to quickly bring up an accelerated version of the engine. Riva supports self-hosting the ASR service on premises or in the cloud, with deployment streamlined through Helm chart configuration.

Pendulum currently runs the Riva Enterprise service on NVIDIA GPU-powered instances on Amazon Web Services (AWS) to scale out the amount of audio and video content that can be rapidly transcribed and processed.

With the ASR step complete, Pendulum’s Narrative Engine applies additional Riva AI models to the freshly transcribed text or to text gathered elsewhere. For example, the raw output of the ASR process is normally a long, unbroken stream of uncapitalized words. This is hardly the kind of data you could easily turn into an actionable intelligence report.

To make this output usable, Pendulum next applies Riva’s punctuation and capitalization model to convert the rambling stream of words into sentences, complete with capitalized proper nouns, well-placed commas, and terminating periods or question marks, as appropriate.
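Punctuation and capitalization models of this kind are commonly framed as token classification: each word receives a punctuation label (the mark to append after it, if any) and a capitalization label. The minimal sketch below shows only the final reconstruction step. The labels are hard-coded stand-ins for model predictions, and `apply_punct_capit` is a hypothetical helper, not part of the Riva API.

```python
def apply_punct_capit(words, punct_labels, capit_labels):
    """Rebuild readable text from per-word labels.

    punct_labels: punctuation to append after each word ("" for none).
    capit_labels: True if the word should start with an uppercase letter.
    """
    if not (len(words) == len(punct_labels) == len(capit_labels)):
        raise ValueError("one label of each kind is required per word")
    out = []
    for word, punct, capit in zip(words, punct_labels, capit_labels):
        if capit:
            word = word[:1].upper() + word[1:]
        out.append(word + punct)
    return " ".join(out)

# Raw ASR-style output: lowercase, no punctuation
words = "the video was posted in march did it spread".split()
# Hypothetical labels standing in for a model's predictions
punct = ["", "", "", "", "", ".", "", "", "?"]
capit = [True, False, False, False, False, True, True, False, False]
print(apply_punct_capit(words, punct, capit))
# The video was posted in March. Did it spread?
```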

Returning to the example in Figure 1, proprietary NLP subsystems in Pendulum’s narrative discovery methodology further process the text. For instance, the engine splits the text captions of 14 million videos into 205 million snippets (segments of text about 100 tokens long). The result is then filtered down to videos containing one or more COVID anchor terms, including forms of the words “vaccine” and “DNA”. This process yields a set of 9,200 videos and 15,689 snippets.
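The snippet-and-filter stage can be sketched in a few lines of Python. This is an illustration under stated assumptions: whitespace tokens stand in for a real tokenizer, snippets are non-overlapping 100-token windows, and the anchor regex (forms of “vaccine” plus “DNA”) is a hypothetical subset of whatever anchor list Pendulum actually uses.

```python
import re

# Hypothetical anchor patterns: forms of "vaccine" and "DNA", case-insensitive
ANCHORS = re.compile(r"\b(vaccin\w*|dna)\b", re.IGNORECASE)

def split_snippets(text, size=100):
    """Split a transcript into consecutive snippets of at most `size` tokens.
    Whitespace tokens stand in for a real model tokenizer."""
    tokens = text.split()
    return [" ".join(tokens[i:i + size]) for i in range(0, len(tokens), size)]

def anchor_snippets(videos, size=100):
    """Return {video_id: [snippets]} keeping only snippets that contain an
    anchor term; videos with no matching snippet are dropped."""
    kept = {}
    for video_id, transcript in videos.items():
        hits = [s for s in split_snippets(transcript, size) if ANCHORS.search(s)]
        if hits:
            kept[video_id] = hits
    return kept

videos = {
    "v1": "they say the vaccine rewrites your DNA",
    "v2": "shipping delays at the port",
}
print(anchor_snippets(videos))  # {'v1': ['they say the vaccine rewrites your DNA']}
```

Cheap lexical filtering like this shrinks the candidate set before a more expensive classification step runs.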

Finally, Pendulum applies a proprietary, hybrid, zero-shot learning algorithm that achieves a detection precision of 0.74 and a recall of 0.83. In other words, 74% of the snippets predicted to support the narrative did indeed support it, while the method identified 83% of the snippets that actually support the narrative. This is an impressive result.
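For readers who want the definitions behind those two numbers, the sketch below computes precision and recall from sets of snippet IDs, along with their harmonic mean (F1). The toy IDs are made up for illustration; only the formulas are standard.

```python
def precision_recall(predicted, actual):
    """Precision and recall for sets of snippet IDs.

    predicted: IDs the detector flagged as supporting the narrative.
    actual: IDs that truly support it (for example, from human labels).
    """
    true_pos = len(predicted & actual)
    precision = true_pos / len(predicted) if predicted else 0.0
    recall = true_pos / len(actual) if actual else 0.0
    return precision, recall

def f1(precision, recall):
    """Harmonic mean of precision and recall."""
    return 2 * precision * recall / (precision + recall) if precision + recall else 0.0

p, r = precision_recall(predicted={1, 2, 3, 4}, actual={2, 3, 5})
print(p, r)                      # 0.5 0.6666666666666666
print(round(f1(0.74, 0.83), 2))  # 0.78
```

Plugging the reported values into `f1` gives 2 × 0.74 × 0.83 / (0.74 + 0.83) ≈ 0.78.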

To keep up with demand as the business grows, the Pendulum team has deployed a multi-node GPU cluster on AWS to meet throughput and latency requirements. Beyond capable hardware, what else does it take to meet these demanding requirements?

NVIDIA Triton Inference Server, running on the GPU server, handles multiple requests across all of Pendulum’s AI models. Triton supports chaining models logically into an ensemble that is processed entirely on the GPU, avoiding slow GPU-to-CPU memory copies.
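Triton defines an ensemble in a model’s `config.pbtxt` with `platform: "ensemble"` and an `ensemble_scheduling` block whose steps map each model’s input and output tensors to ensemble-level tensor names. The Python sketch below renders such a file for a hypothetical three-step pipeline (ASR, punctuation, narrative scoring). The model names, tensor names, data types, and batch size are assumptions for illustration, not Pendulum’s or Riva’s actual configuration.

```python
def ensemble_config(name, inputs, outputs, steps, max_batch_size=8):
    """Render a Triton ensemble config.pbtxt as a string.

    inputs/outputs: (tensor_name, data_type, dims) for the ensemble itself.
    steps: (model_name, input_map, output_map), where each map is
           {model_tensor_name: ensemble_tensor_name}.
    """
    def tensors(field, specs):
        # One protobuf-text entry per tensor, e.g. { name: "AUDIO" ... }
        rows = ",\n".join(
            f'  {{ name: "{n}" data_type: {t} dims: [ {", ".join(map(str, d))} ] }}'
            for n, t, d in specs
        )
        return f"{field} [\n{rows}\n]"

    def maps(field, mapping):
        # input_map / output_map entries wire model tensors to ensemble tensors
        return " ".join(f'{field} {{ key: "{k}" value: "{v}" }}' for k, v in mapping.items())

    step_rows = ",\n".join(
        f'    {{ model_name: "{m}" model_version: -1 '
        f'{maps("input_map", im)} {maps("output_map", om)} }}'
        for m, im, om in steps
    )
    return "\n".join([
        f'name: "{name}"',
        'platform: "ensemble"',
        f"max_batch_size: {max_batch_size}",
        tensors("input", inputs),
        tensors("output", outputs),
        f"ensemble_scheduling {{\n  step [\n{step_rows}\n  ]\n}}",
    ])

config = ensemble_config(
    "narrative_pipeline",  # hypothetical model and tensor names throughout
    inputs=[("AUDIO", "TYPE_FP32", [-1])],
    outputs=[("SCORE", "TYPE_FP32", [1])],
    steps=[
        ("asr", {"AUDIO_IN": "AUDIO"}, {"TRANSCRIPT": "raw_text"}),
        ("punctuation", {"TEXT_IN": "raw_text"}, {"TEXT_OUT": "clean_text"}),
        ("narrative_classifier", {"TEXT": "clean_text"}, {"SCORE": "SCORE"}),
    ],
)
print(config)
```

Intermediate tensors such as `raw_text` are passed between models inside the server instead of being returned to the client between steps; whether they also stay in GPU memory depends on the backends involved.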

Real-world challenges ahead

The reach of Pendulum’s platform is set to extend to more social media services as developers add support beyond the currently supported YouTube, Rumble, BitChute, TikTok, and podcasts.

Still, the company’s leadership can’t adjudicate the truth through the application of their engines alone. In fact, steering clear of that complicated role has allowed Pendulum to open its aperture wider and take on new challenges.

Videos, for instance, can carry more meaning than their spoken words alone, especially when accompanied by emotional imagery and an evocative soundtrack. Even a video with no speech at all can still contribute to a narrative.

(Think of ISIS’s recruitment videos several years ago: Many videos contained little speech but did have agitating scenes and music intended to connect with a certain audience.)

After all, where there’s no speech, ASR has nothing to transcribe, and narratives remain undetected.

Pendulum’s technical team is also working on handling distractors, such as video ads with speech that pop up during playback and can confuse a narrative as it forms. Haris explained, “There’s this one banking video ad that’s the bane of my team’s existence, disrupting the transcription process. There’s still work to do.”

Get started with speech AI today

You, too, can try NVIDIA Riva to see how it stacks up in transcription accuracy, speed, and ease of use as you build your application. Here are some resources to get you started:

- Learn more about speech recognition and how to start using it today.

- Get a detailed overview of the growing speech AI landscape in this free ebook, Introduction to Speech AI.

- Learn how to achieve natural-sounding speech by adding text-to-speech (TTS) skills to your application with the free ebook, End-to-End Speech AI Pipelines.

- Take the self-paced Deep Learning Institute course, Get Started with Highly Accurate Custom ASR for Speech AI, and learn how to customize a speech recognition pipeline.