The telecommunications industry has seen a proliferation of AI-powered technologies in recent years, with speech recognition and translation leading the charge. Multilingual AI virtual assistants, digital humans, chatbots, agent assists, and audio transcription are technologies that are revolutionizing the telco industry. Businesses are implementing AI in call centers to address incoming requests at an accelerated pace, resulting in major improvements to the customer experience, employee retention, and brand reputation.

For instance, automatic speech recognition (ASR), also known as speech-to-text, has been used to transcribe conversations in real time, enabling businesses to quickly identify resources or resolutions for customers. Speech AI is also being used to analyze sentiment, identify sources of friction, and improve compliance and agent performance.

This post delves into the transformative power of speech recognition in the telecommunications industry and highlights how industry leaders such as AT&T and T-Mobile are using these state-of-the-art technologies to deliver unparalleled customer experiences in their call centers.

Impact of speech-to-text on improving customer service

The implementation of speech-to-text technology has become a game-changer in the world of customer service. By automating tasks such as call routing, call categorization, and voice authentication, businesses can greatly reduce wait times and guarantee customers are directed to the most qualified agents to handle their requests.

Speech recognition can also be used as part of AI-driven analysis of customer feedback, helping to improve customer satisfaction, products, and services. With speech-to-text enabled AI applications, companies can accurately identify customer needs and promptly address them.

During his presentation at GTC, Jeremy Fix, AVP-Data Science AI at AT&T, outlined key reasons why they implemented AI to improve their call center experience:

- Optimize staffing resources

- Personalize their customer experiences

- Assist their agents with actionable insights

Resource optimization

A critical component of call centers is adequate staffing. This includes attracting and retaining the best talent. Using AI, AT&T can forecast call supply and demand, providing agents with the support they need for performing at their best.

Personalization

By understanding the intent of customers when they first connect, AT&T can match callers with experienced agents who have previously resolved similar issues and provide relevant offers to customers at the right time.

Agent assist

With the combination of call transcription and an insights engine driven by natural language processing (NLP), AT&T can empower agents and their managers with real-time actionable insights. Those insights enable them to make intelligent decisions and deliver exceptional customer service (Video 1).

How does this work in real time? During a call, AT&T’s NLP engine uses real-time transcription and text mining to identify the topic discussed. It then recommends the next best actions, identifies call sentiment, predicts customer satisfaction, and can even measure agent quality and compliance.
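To make the flow concrete, here is a minimal sketch of how a running transcript could be mapped to a topic, a sentiment label, and a suggested next action. It is not AT&T's engine: the keyword lists, the `Insight` type, and the `analyze` function are hypothetical stand-ins for the trained NLP models a production system would use.

```python
from dataclasses import dataclass

# Hypothetical keyword lists; a real insights engine would use trained models.
TOPIC_KEYWORDS = {
    "billing": {"bill", "charge", "refund", "payment"},
    "network": {"signal", "coverage", "outage", "dropped"},
    "upgrade": {"upgrade", "phone", "plan", "trade"},
}
NEXT_BEST_ACTION = {
    "billing": "Open the billing history and review recent charges.",
    "network": "Run a network diagnostic for the caller's area.",
    "upgrade": "Show current upgrade offers.",
    "unknown": "Ask a clarifying question.",
}
NEGATIVE_WORDS = {"frustrated", "angry", "terrible", "cancel", "unacceptable"}
POSITIVE_WORDS = {"thanks", "great", "perfect", "appreciate", "helpful"}


@dataclass
class Insight:
    topic: str
    sentiment: str
    next_action: str


def tokenize(text: str) -> list[str]:
    """Lowercase the text and strip basic punctuation from each word."""
    return [word.strip(".,!?").lower() for word in text.split()]


def analyze(transcript_so_far: str) -> Insight:
    """Derive a topic, a sentiment label, and a next action from the transcript."""
    words = tokenize(transcript_so_far)

    # Topic: the keyword list with the most hits, or "unknown" if nothing matches.
    hits = {topic: sum(w in kws for w in words) for topic, kws in TOPIC_KEYWORDS.items()}
    best = max(hits, key=hits.get)
    topic = best if hits[best] > 0 else "unknown"

    # Sentiment: positive word count minus negative word count.
    score = sum(w in POSITIVE_WORDS for w in words) - sum(w in NEGATIVE_WORDS for w in words)
    sentiment = "positive" if score > 0 else "negative" if score < 0 else "neutral"

    return Insight(topic, sentiment, NEXT_BEST_ACTION[topic])


if __name__ == "__main__":
    print(analyze("I am frustrated, my bill has a charge I do not recognize."))
```

In a live call, `analyze` would be re-run each time the transcription service emits new text, so the suggestion shown to the agent tracks the conversation as it unfolds.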

Common speech-to-text accuracy challenges

Although speech AI can drive significant improvements to your call center, successfully implementing speech-to-text comes with a few challenges, as discussed by Heather Nolis, principal machine learning engineer at T-Mobile, during GTC:

- Phonetic ambiguity

- Diverse speaking styles

- Noisy environments

- Limitations of telephony

- Domain-specific vocabulary

Phonetic ambiguity

How many times have you been on a call and misunderstood someone? Did you say “recognize speech” or “wreck a nice beach”? This is called phonetic ambiguity—when words sound the same, but have different meanings—and it can result in incorrect transcription if speech-to-text is not trained to recognize words within a context.
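One common way to use context is to rescore competing transcripts with a language model. The sketch below illustrates the idea only: the bigram counts and acoustic scores are invented for the example, and `pick_transcript` is a hypothetical helper, not part of any speech SDK.

```python
import math

# Hypothetical bigram counts standing in for a trained language model.
BIGRAM_COUNTS = {
    ("how", "to"): 100,
    ("to", "recognize"): 40,
    ("recognize", "speech"): 50,
    ("to", "wreck"): 1,
    ("wreck", "a"): 2,
    ("a", "nice"): 30,
    ("nice", "beach"): 20,
}


def context_score(words: list[str]) -> float:
    """Average log of add-one smoothed bigram counts (higher = more plausible)."""
    pairs = list(zip(words, words[1:]))
    if not pairs:
        return 0.0
    return sum(math.log(BIGRAM_COUNTS.get(p, 0) + 1) for p in pairs) / len(pairs)


def pick_transcript(candidates: list[tuple[str, float]], lm_weight: float = 1.0) -> str:
    """Choose the candidate with the best acoustic score plus weighted context score."""
    def total(candidate: tuple[str, float]) -> float:
        text, acoustic_score = candidate
        return acoustic_score + lm_weight * context_score(text.lower().split())

    return max(candidates, key=total)[0]


if __name__ == "__main__":
    # Made-up acoustic scores: the audio alone slightly favors the wrong phrase.
    candidates = [
        ("how to wreck a nice beach", -4.1),
        ("how to recognize speech", -4.3),
    ]
    print(pick_transcript(candidates, lm_weight=0.0))  # acoustic evidence only
    print(pick_transcript(candidates))                 # with context
```

With the context weight set to zero, the acoustically closer but implausible phrase wins. With context included, the word sequence that is more likely in ordinary language is selected.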

Diverse speaking styles

Each person can also have different accents, dialects, and physiological differences in their mouths, meaning each word that we pronounce sounds different. For contact centers operating globally, these subtle nuances must be captured in your training dataset to improve speech recognition accuracy.

Noisy environments

Call center customer-agent conversations may include background noises, simultaneous speakers, low microphone quality, and even poor cellular reception that can cause sounds to be missing on phone calls. Robust speech-to-text must be able to withstand this type of environment when deployed in a contact center.

Limitations of telephony

Telephony limitations, which include the inability to transmit certain sounds like “s” and “f,” can further hinder speech-to-text accuracy. For example, when you are on the phone and hear “free for all Friday,” you don’t actually hear the “f” because that sound is not sent over the phone; your brain fills it in. For transcription, the speech-to-text model has to fill in the missing sounds in a similar way.
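The effect can be illustrated with a small signal-processing sketch. It assumes the nominal narrowband telephony voice band of roughly 300 to 3400 Hz, which the talk summary above does not specify, and it uses a 6 kHz tone as a stand-in for the high-frequency energy typical of sounds like “s.” The function names are illustrative.

```python
import cmath
import math

SAMPLE_RATE = 16_000        # Hz, assumed wideband capture rate
PASSBAND = (300.0, 3400.0)  # Hz, assumed nominal narrowband telephony band


def dft(samples: list[float]) -> list[complex]:
    """Plain discrete Fourier transform, returning bins from 0 Hz to Nyquist."""
    n = len(samples)
    return [
        sum(x * cmath.exp(-2j * math.pi * k * t / n) for t, x in enumerate(samples))
        for k in range(n // 2 + 1)
    ]


def band_energy(spectrum: list[complex], n: int, lo: float, hi: float) -> float:
    """Sum of squared magnitudes of the bins between lo and hi (in Hz)."""
    bin_hz = SAMPLE_RATE / n
    return sum(abs(c) ** 2 for k, c in enumerate(spectrum) if lo <= k * bin_hz <= hi)


def telephony_filter(spectrum: list[complex], n: int) -> list[complex]:
    """Idealized band-pass: zero every bin outside the telephony passband."""
    bin_hz = SAMPLE_RATE / n
    lo, hi = PASSBAND
    return [c if lo <= k * bin_hz <= hi else 0j for k, c in enumerate(spectrum)]


def make_test_signal(n: int) -> list[float]:
    """A 1 kHz tone (vowel-like) plus a weaker 6 kHz tone (fricative-like)."""
    return [
        math.sin(2 * math.pi * 1000 * t / SAMPLE_RATE)
        + 0.5 * math.sin(2 * math.pi * 6000 * t / SAMPLE_RATE)
        for t in range(n)
    ]


if __name__ == "__main__":
    n = 320  # 20 ms of audio at 16 kHz
    spectrum = dft(make_test_signal(n))
    filtered = telephony_filter(spectrum, n)
    for label, spec in (("original", spectrum), ("telephony", filtered)):
        low = band_energy(spec, n, 300, 3400)
        high = band_energy(spec, n, 4000, 8000)
        print(f"{label}: energy 300-3400 Hz = {low:.0f}, energy 4-8 kHz = {high:.0f}")
```

The low-frequency component survives the filter unchanged while the high-frequency component disappears entirely, which is why a model trained only on wideband audio can struggle with phone-quality recordings.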

Domain-specific vocabulary

Every contact center serves a specific business, so the topics discussed and the vocabulary used differ from one enterprise to the next. Out-of-the-box ASR solutions are rarely useful in real life as they typically lack meaningful customization.

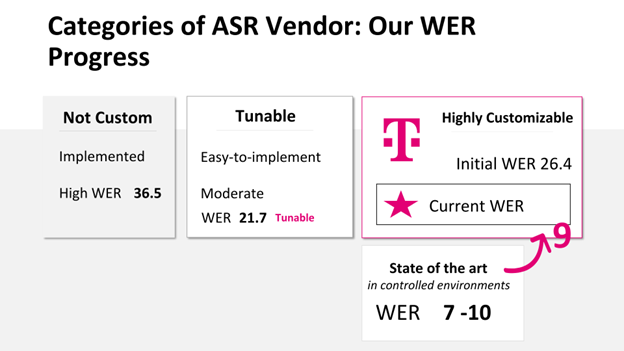

At GTC, T-Mobile showcased their solution to these speech recognition challenges. It used NVIDIA Riva, a GPU-accelerated SDK for building and deploying customized speech applications, and NVIDIA NeMo for fine-tuning on their domain-specific data. T-Mobile improved speech recognition accuracy by up to 3x (Figure 1) across diverse accents, speaking styles, and noisy production environments.

Top considerations for the best speech-to-text

From telco contact centers and emergency services to videoconferencing and broadcasting, businesses must consider many factors when implementing cutting-edge speech AI technology—accuracy, latency, scalability, security, and operational costs—to stay ahead of the competition.

Enterprises are continuously looking for new ways to turn call centers into value centers. Here, cost plays an important role. When dealing with large call volumes, businesses must evaluate vendors based on pricing models, the total cost of ownership (TCO), and hidden costs.

Achieving comprehensive language, accent, and dialect coverage is critical for speech recognition accuracy for all languages. Luckily, there have been significant advancements in speech AI multilingual accuracy. For example, Riva now offers world-class speech recognition for English, Spanish, Mandarin, Hindi, Russian, Arabic, Japanese, Korean, German, Portuguese, French, and Italian.

Lastly, speech AI models must achieve low latency to provide a better real-time experience for both agents and customers. For example, if an agent is having a conversation with a customer and the AI does not recommend the agent’s next steps fast enough, then it is serving no purpose.

At GTC, T-Mobile provided a detailed account of their speech-to-text evaluation process. Their findings were remarkable: Riva speech recognition outperformed off-the-shelf cloud-provider models in terms of latency, cost-effectiveness, and accuracy.
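When comparing speech-to-text options on accuracy, a widely used metric is word error rate (WER): the number of word substitutions, insertions, and deletions needed to turn the model output into the reference transcript, divided by the number of reference words. This post does not state which metric T-Mobile used, so the sketch below is a generic illustration; `word_error_rate` is an illustrative helper, not a Riva or NeMo API.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # Levenshtein distance over words, keeping only the previous row in memory.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]
        for j, hyp_word in enumerate(hyp, start=1):
            substitution = prev[j - 1] + (0 if ref_word == hyp_word else 1)
            deletion = prev[j] + 1        # reference word missing from the hypothesis
            insertion = curr[j - 1] + 1   # extra word in the hypothesis
            curr.append(min(substitution, deletion, insertion))
        prev = curr

    return prev[-1] / len(ref)


if __name__ == "__main__":
    reference = "i want to upgrade my plan"
    print(word_error_rate(reference, "i want to upgrade my plan"))
    print(word_error_rate(reference, "i want to upgrade my plane"))
```

Lower is better, and WER can exceed 1.0 when the hypothesis contains many extra words. For a fair comparison, every candidate model should be scored on the same held-out set of call recordings with human-verified transcripts.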

At the recent Leading the Way with Cutting-Edge Speech AI Technology GTC panel, Infosys, Quantiphi, and Motorola shared their experience of addressing these factors when implementing speech AI for solutions in the telecommunications industry.

Key takeaways

Integrating speech and translation AI into AI solutions for customer service has become a game-changer for telcos. By using real-time multilingual transcription of customer conversations, telcos can better categorize and route calls and provide valuable insights and personalized recommendations to agents.

Telcos that embrace this technology can gain a competitive edge in the market by providing superior customer experience, staying ahead of the competition, and meeting their customers’ evolving needs.

To learn more about how speech and translation AI can transform customer experiences for telcos, join us for the How Telcos Transform Customer Experiences with Conversational webinar on May 31, and for the technical deep dive Empower Telco Contact Center Agents with Multi-Language Speech-AI-Customized Agent Assists webinar on June 7. Learn from the experts and gain valuable insights into building your own AI-powered customer service solutions.