AI is moving from research to production, and enterprises are developing and deploying it across a wide variety of applications, including retail analytics, medical imaging, autonomous driving, and smart manufacturing.

However, developing and deploying open-source AI software comes with its own challenges. Data scientists need optimized software, developers need the right tools to integrate AI models into their products, DevOps engineers need automation tool sets to deploy production software, and system administrators must provide the right infrastructure.

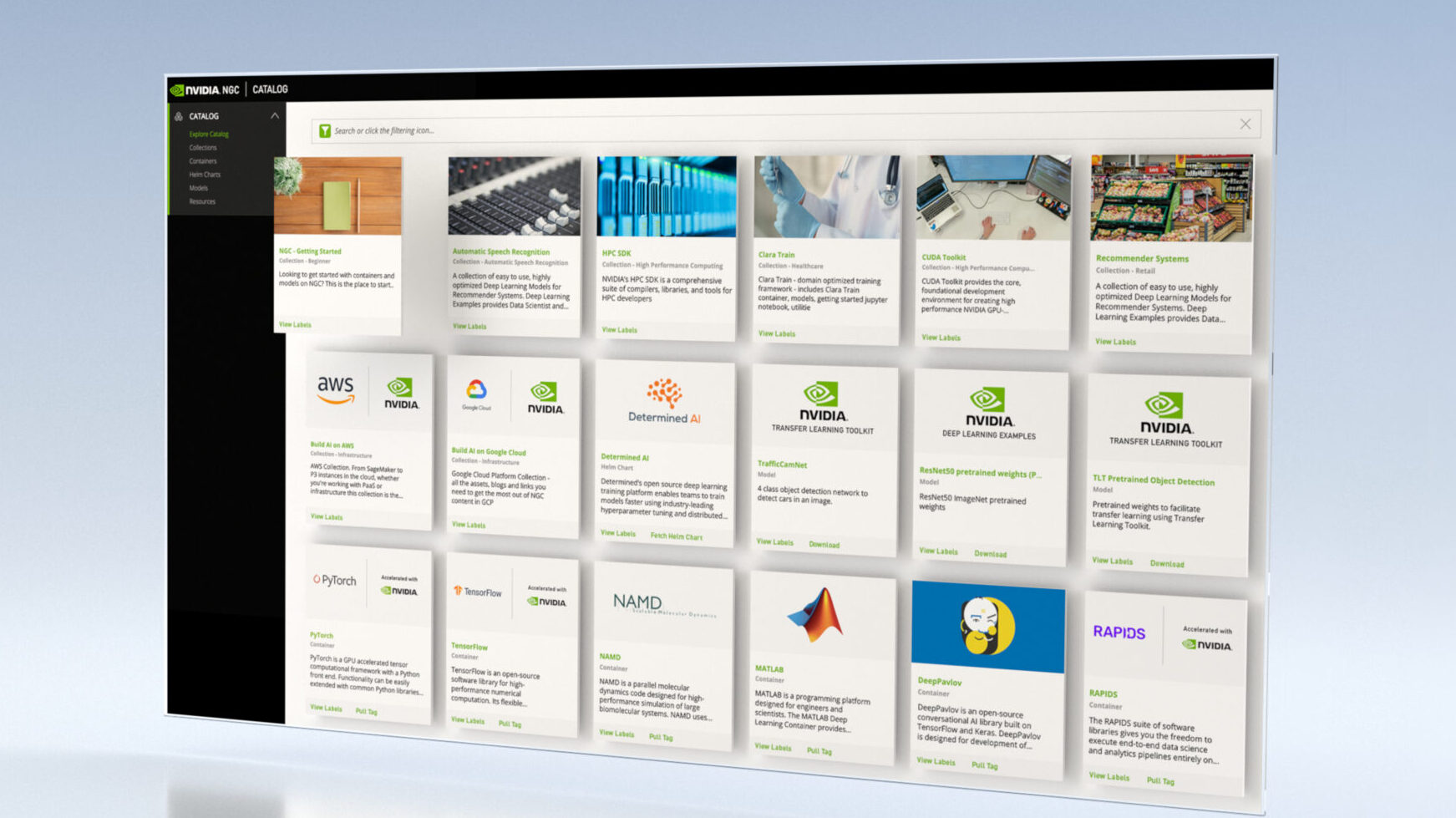

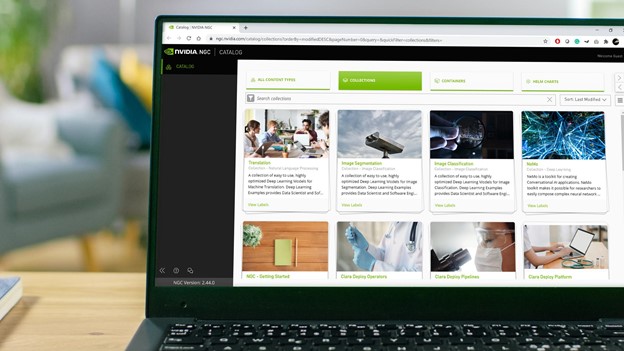

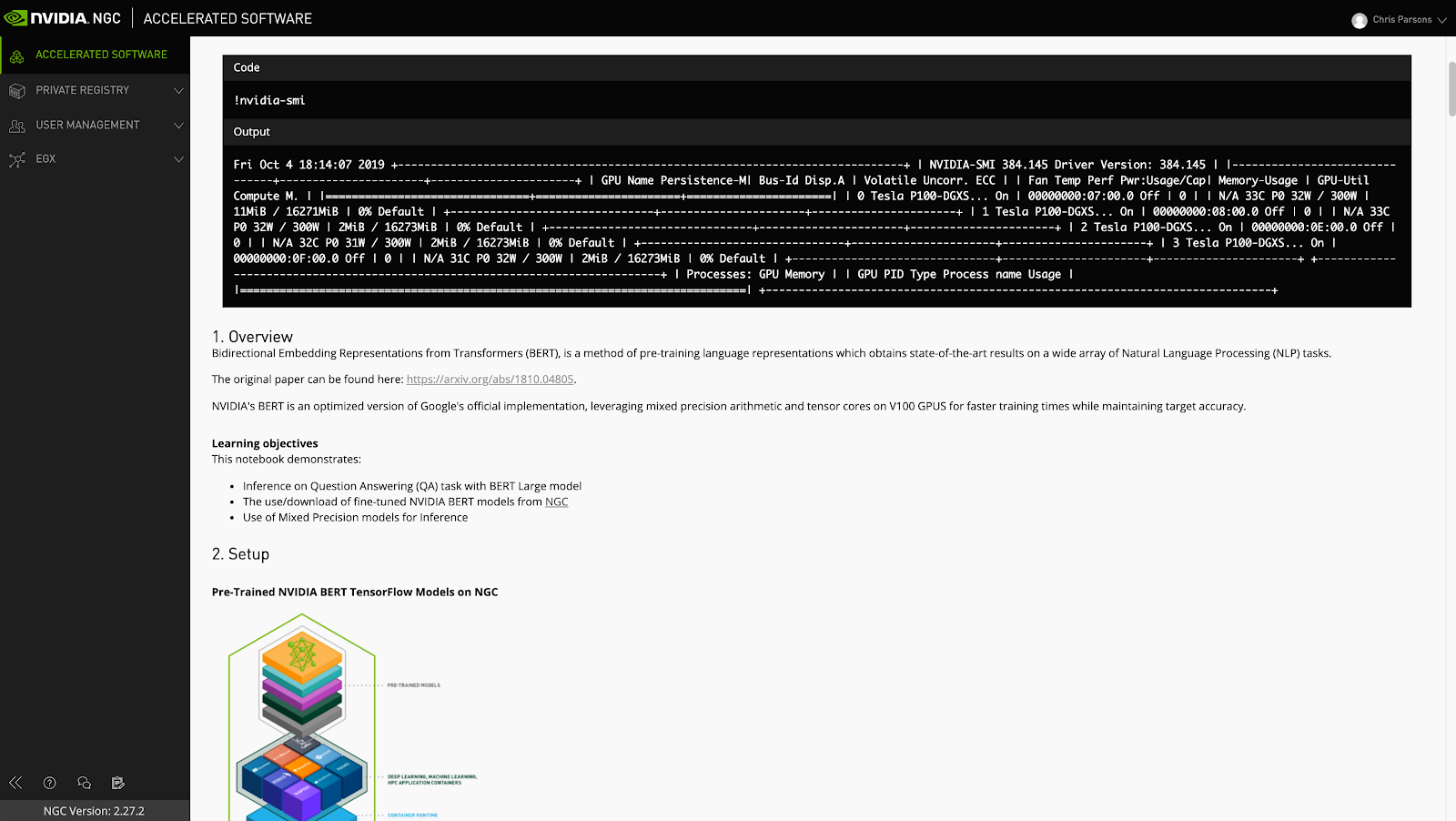

NGC gives you easy access to GPU-optimized containers for deep learning (DL), machine learning (ML), and high-performance computing (HPC) applications, along with pretrained models, model scripts, Helm charts, and software development kits (SDKs) that can be deployed at scale.

As data scientists build custom content, storing, sharing, and versioning of this valuable intellectual property is critical to meet their company’s business needs. To address these needs, NVIDIA has developed the NGC private registry to provide a secure space to store and share custom containers, models, model scripts, and Helm charts within your enterprise.

Before we delve into the salient features of the NGC private registry, here’s a bit more on what each of these artifacts means.

Containers

Containers package software applications, libraries, dependencies, and runtime compilers in a self-contained environment so they can be easily deployed across various compute environments. The deep learning framework and HPC containers from NGC are GPU-optimized and tested on NVIDIA GPUs for scale and performance. With a one-click operation, you can easily pull, scale, and run containers in your environment.
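As a rough illustration of what pulling a container from a private registry involves, the sketch below builds an image reference and the matching `docker pull` command. It assumes the registry host is `nvcr.io` and that private images follow an `<org>/<team>/<image>:<tag>` layout. The organization, team, and image names are hypothetical placeholders, so check the reference shown in your own registry before relying on this.

```python
def image_reference(org, image, tag, team=None, registry="nvcr.io"):
    """Build an image reference such as nvcr.io/my-org/my-team/my-image:1.0.

    The registry host and the org/team layout are assumptions for illustration.
    """
    for name, value in (("org", org), ("image", image), ("tag", tag)):
        if not value:
            raise ValueError(f"{name} must be a non-empty string")
    parts = [registry, org]
    if team:  # the team segment is optional
        parts.append(team)
    parts.append(image)
    return "/".join(parts) + ":" + tag


def pull_command(reference):
    """Return the argument list for pulling the image with the Docker CLI."""
    return ["docker", "pull", reference]


if __name__ == "__main__":
    # Hypothetical names; substitute your own organization, team, and image.
    ref = image_reference("my-org", "my-image", "1.0", team="my-team")
    print(" ".join(pull_command(ref)))
```

Returning the command as a list rather than a single string keeps it safe to pass to `subprocess.run` without shell quoting issues.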

Models and model scripts

As your teams build custom models and train on your own datasets to derive solutions, it’s important to share these assets and knowledge across your organization. By working collaboratively, your teams can leverage and deploy these assets faster and speed up time to market.

It’s not just custom models, though. What about the code that you need to get up and running, retrain the asset, or integrate into an end-user application? It’s imperative that you can share your code samples, example applications, videos, images, and instructions so that your work can be used by others within the organization. AI is very much a collaborative effort, and model scripts provide structure to solving the challenge of collaboration across your teams.

Software Development Kits

SDKs deliver all the tooling you need to build and deploy your own AI applications. You can use transfer learning to retrain models with your own data to suit your use case or even deploy pretrained models straight into your applications. SDKs suit a range of deployment options including cloud, your own data center, or the edge for low-latency inference.

Helm charts

Helm is a package manager for Kubernetes that allows you to configure and manage your containerized application deployments. Helm charts allow DevOps engineers and system administrators to describe exactly what components an application needs to run, simplifying deployment pipelines and making it easier to deploy and integrate new apps.

NGC private registry features

The NGC private registry isn’t just a place to store your content. It also offers features that help accelerate your organization’s end-to-end AI workflow:

- Multi-architecture support

- Model versioning

- Jupyter rendering

- User management

- API key management

Multi-architecture support

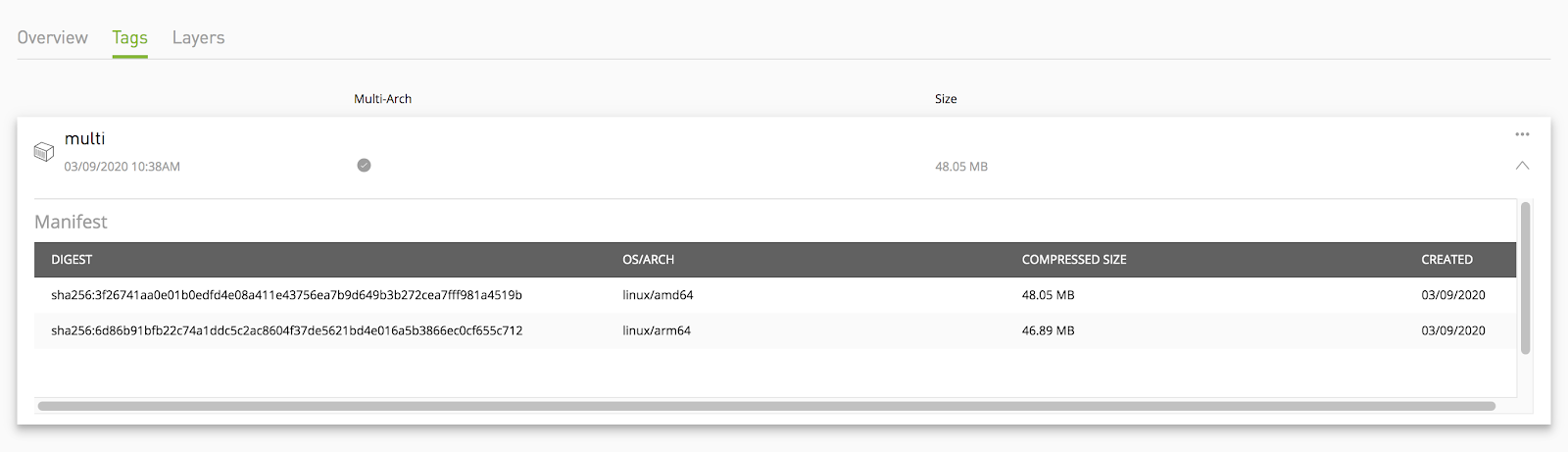

To further ease deployment, an NGC private registry now supports multiple architectures, such as x86 and ARM. You can package the images for different architectures under a single image name and push it to an NGC private registry.

When you run an image with multi-architecture support, Docker automatically selects the image variant that matches the host OS and architecture. End users can also browse the available variants in the UI or inspect the manifest list directly.
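To make the variant selection concrete, here is a minimal sketch of how a client could pick an entry from a manifest list. It assumes the list follows the general shape of an OCI image index, where each entry carries a `platform` object with `os` and `architecture` fields. The digests are shortened placeholders, and real clients such as Docker apply additional rules (for example CPU variants) that this sketch omits.

```python
import platform

# Machine names reported by Python, mapped to the names used in image manifests.
_ARCH_ALIASES = {"x86_64": "amd64", "AMD64": "amd64", "aarch64": "arm64", "arm64": "arm64"}

# Hypothetical manifest list; the digests are placeholders, not real images.
EXAMPLE_MANIFEST_LIST = {
    "manifests": [
        {"digest": "sha256:aaaa", "platform": {"os": "linux", "architecture": "amd64"}},
        {"digest": "sha256:bbbb", "platform": {"os": "linux", "architecture": "arm64"}},
    ]
}


def select_variant(manifest_list, os_name, architecture):
    """Return the digest of the first entry matching the given OS and architecture."""
    for entry in manifest_list.get("manifests", []):
        plat = entry.get("platform", {})
        if plat.get("os") == os_name and plat.get("architecture") == architecture:
            return entry["digest"]
    raise LookupError(f"no image variant for {os_name}/{architecture}")


def host_platform():
    """Return (os, architecture) for the current machine in manifest naming."""
    machine = platform.machine()
    return platform.system().lower(), _ARCH_ALIASES.get(machine, machine)


if __name__ == "__main__":
    print(select_variant(EXAMPLE_MANIFEST_LIST, "linux", "arm64"))
```

Raising an error when nothing matches mirrors what you would see when pulling an image that was never built for your platform.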

Model versioning

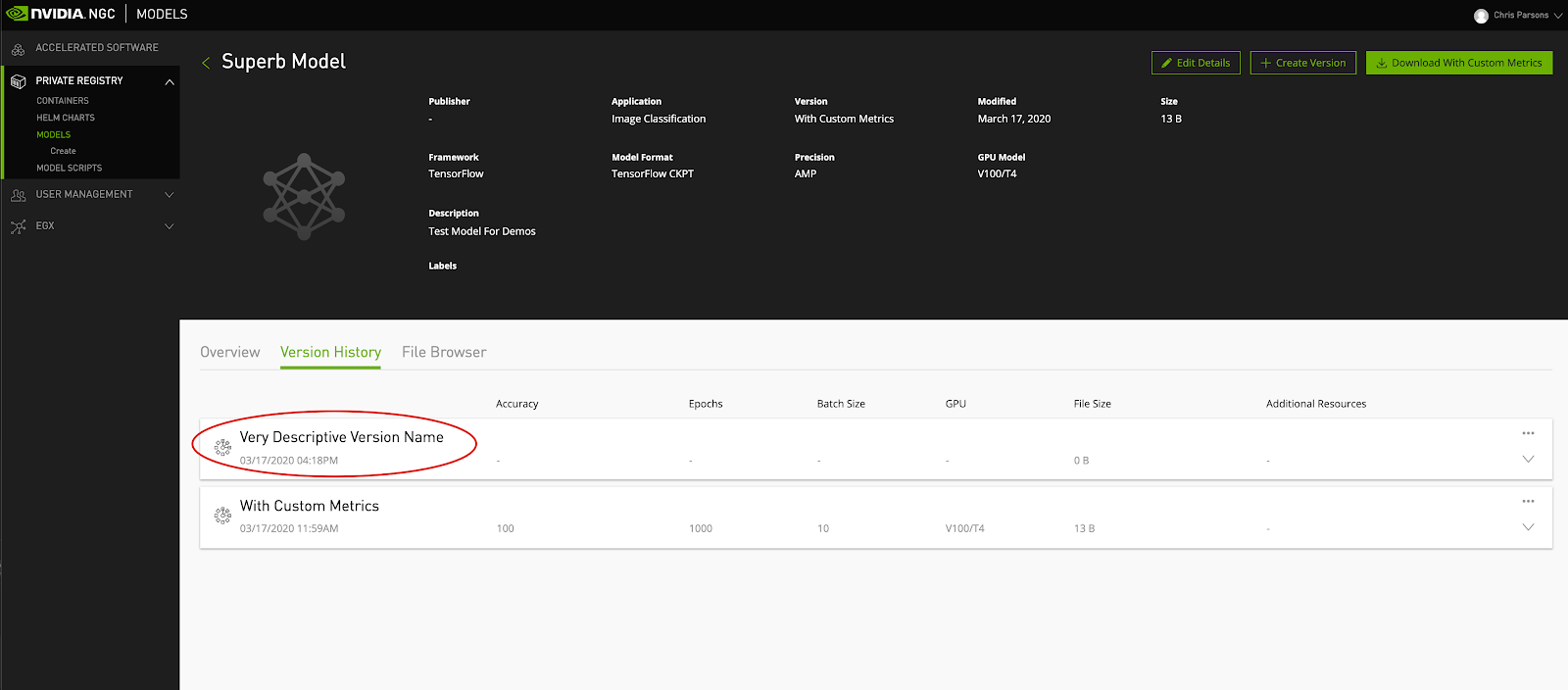

One of the main challenges facing teams building AI at the moment is managing different versions of models, trained on different data, with different training parameters (learning rate, batch size, and so on). There isn’t one version of a model that is applicable to all use cases.

So how do you manage the growing challenge and overhead of sharing these versions across your teams for collaboration? Better yet, how do you control which versions go to production and end up making a difference to your users?

NVIDIA overhauled the NGC model version system to build a completely new way of curating your content, sharing the pertinent details, and streamlining your deep learning development cycles.

With the new version control system, you can name your versions with text rather than numbers. This gives you much more scope for differentiating between editions of your content, with descriptive names that help users find the right content the first time.

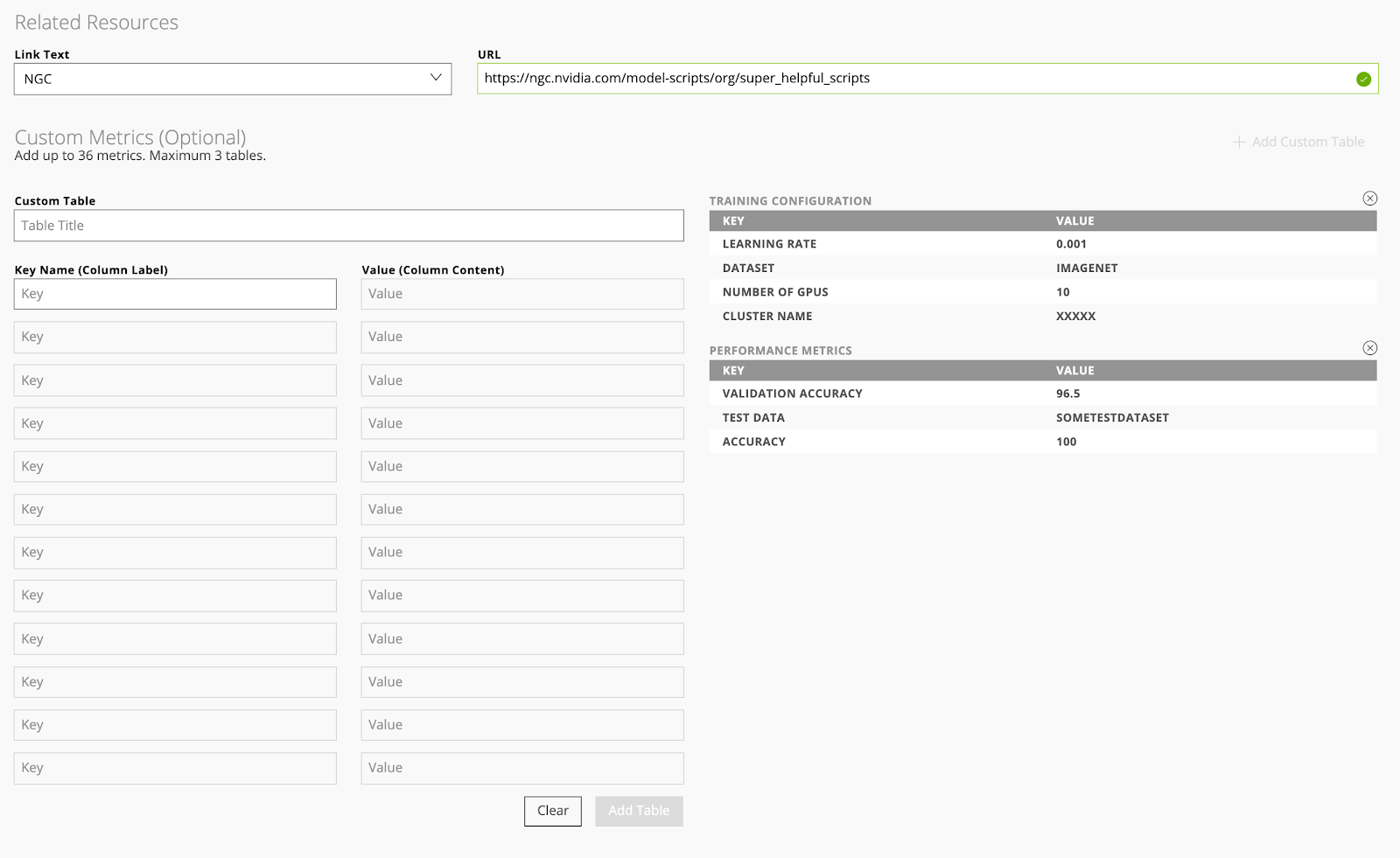

Not only do you have more control over the names of your versions, but you can also share a lot more detail about them. The new NGC version system lets you specify up to 36 different metrics about each version of your models. Your users can quickly discover all the relevant information about a given model.
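One way to picture a named version and its metrics is as a small record type. The sketch below is purely illustrative and is not the NGC API: the `ModelVersion` class, its field names, and the example metric keys are all hypothetical. The only detail taken from the text above is the limit of 36 metrics per version.

```python
from dataclasses import dataclass, field

MAX_METRICS = 36  # per-version limit stated in the text above


@dataclass
class ModelVersion:
    """A text-named model version with descriptive metrics (hypothetical type)."""

    name: str
    metrics: dict = field(default_factory=dict)

    def __post_init__(self):
        if not self.name.strip():
            raise ValueError("version name must not be empty")
        if len(self.metrics) > MAX_METRICS:
            raise ValueError(f"at most {MAX_METRICS} metrics per version")

    def add_metric(self, key, value):
        """Add or update a metric, enforcing the per-version limit."""
        if key not in self.metrics and len(self.metrics) >= MAX_METRICS:
            raise ValueError(f"at most {MAX_METRICS} metrics per version")
        self.metrics[key] = value


if __name__ == "__main__":
    # Hypothetical version name and metric values.
    version = ModelVersion("resnet50-fp16-bs256", {"learning_rate": 0.1, "batch_size": 256})
    version.add_metric("top1_accuracy", 0.76)
    print(version)
```

A descriptive name such as `resnet50-fp16-bs256` carries the training setup in the name itself, while the metrics hold the details that users compare across versions.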

The video below shows you how to make the most of versions in NGC:

Jupyter rendering

Jupyter notebooks are quickly becoming the default way for data scientists to present their documentation, code, and reports. Jupyter notebooks help data scientists streamline their work and accelerate the data science workflow.

To make it even easier to leverage Jupyter assets in NGC, we’ve built a Jupyter rendering feature, which is designed to let you view your notebooks directly within NGC.

Navigate to any asset in the Model Scripts registry, and choose Actions, View Jupyter. This shows you the notebook’s contents in your browser, making it easy for you to consume documentation or copy code samples, without deploying the notebook.

The video below shows how to view Jupyter notebooks in NGC:

User management

The comprehensive user and team management in an NGC private registry allows administrators to control access to content stored in the registry. As the NGC administrator for your organization, you can invite new users to join your organization’s NGC account and assign them to their relevant teams.

The videos show how to manage users and teams:

API key management

The API key is the cornerstone of publishing content to NGC private registries. While role-based access control allows you to determine who has access to content, the API key allows individual users to consume artifacts from private registries.

Depending on the user role, this key allows you to pull, push, edit, or deploy content. These keys are unique to each member of your team and can also be regenerated by individual users right from within NGC.
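As a sketch of how an API key is typically used to consume containers, the helper below logs the Docker CLI in to the registry. It assumes the registry host is `nvcr.io` and that the username is the literal string `$oauthtoken`, with the API key supplied as the password. The `NGC_API_KEY` environment variable is a naming choice made for this example. Treat these details as assumptions and confirm them against your registry's setup instructions.

```python
import os
import subprocess

NGC_REGISTRY = "nvcr.io"      # assumed registry host
NGC_USERNAME = "$oauthtoken"  # literal username string, not a shell variable


def login_command(registry=NGC_REGISTRY, username=NGC_USERNAME):
    """Return the docker login argument list; the key is read from stdin."""
    return ["docker", "login", "--username", username, "--password-stdin", registry]


def docker_login(api_key):
    """Log in to the registry, passing the API key on stdin rather than argv."""
    if not api_key:
        raise ValueError("an API key is required")
    return subprocess.run(login_command(), input=api_key.encode(), check=True)


if __name__ == "__main__":
    # Reads the key from the environment so it never appears in source code.
    docker_login(os.environ["NGC_API_KEY"])
```

Passing the key on standard input keeps it out of the process list and shell history, which matters because the key carries the permissions of the user it belongs to.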

The API key system in NGC reduces the management overhead of organizing your users so that you can focus on what matters most to you.

The video shows how to generate an API key:

Summary

The NGC private registry is a valuable tool for enterprises to securely store and share content, increasing collaboration and driving productivity across end-to-end AI workflows. The NGC private registry is included as part of the subscription for customers of NVIDIA AI Enterprise as well as for those who have purchased DGX, NGC, and NVIDIA-Certified Support Services.