Moment Factory is a global multimedia entertainment studio that combines specializations in video, lighting, architecture, sound, software, and interactivity to create immersive experiences for audiences around the world.

At NVIDIA GTC 2024, Moment Factory will showcase digital twins for immersive location-based entertainment with Universal Scene Description (OpenUSD). To see the latest advancements in OpenUSD and how developers are building generative AI-enabled pipelines for 3D workflows, join OpenUSD Day on Tuesday, March 19.

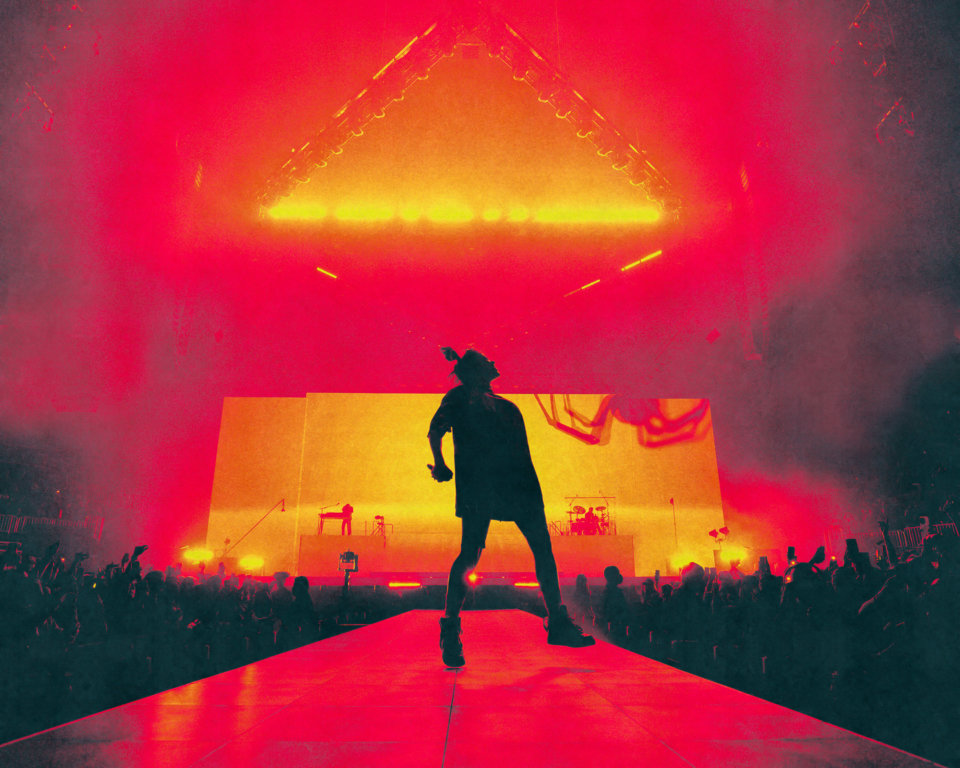

From live performances and multimedia shows to interactive installations, Moment Factory is known for some of the most awe-inspiring and entertaining experiences that bring people together in the real world. These include dazzling visuals at Billie Eilish’s Happier Than Ever world tour, Lumina Night Walks at natural sites around the world, and digital placemaking at the AT&T Discovery District.

With a team of over 400 professionals and offices in Montreal, Tokyo, Paris, New York City, and Singapore, Moment Factory has become a global leader in the entertainment industry.

Streamlining immersive experience development with OpenUSD

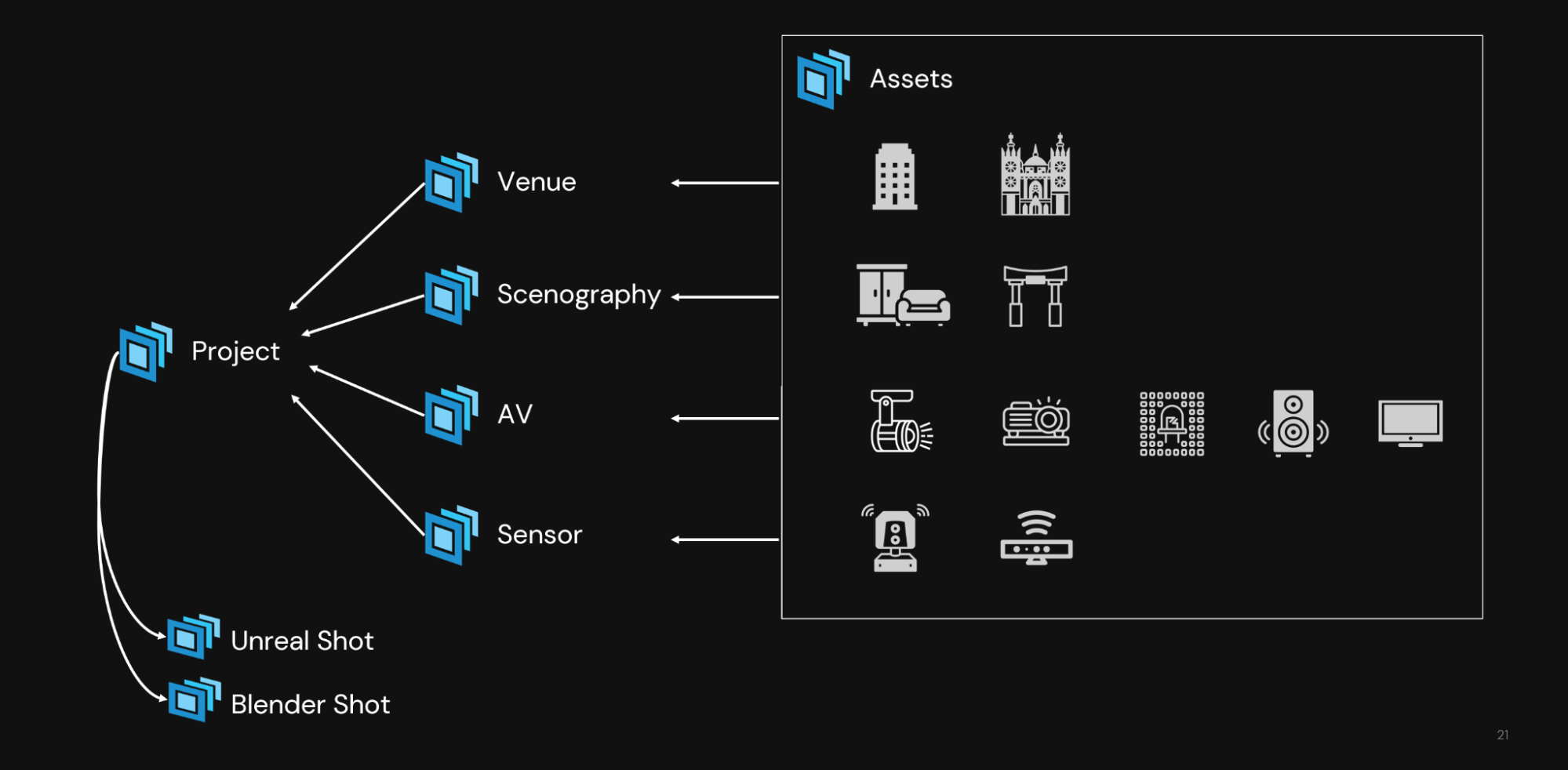

Bringing these experiences to life requires large teams of highly skilled experts with diverse specialties, all using unique tools. To achieve optimal efficiency in their highly complex production processes, Moment Factory looked to implement an interoperable open data format and development platform that could seamlessly integrate all aspects, from concept to operation.

Moment Factory chose Universal Scene Description, also known as OpenUSD, as the solution. OpenUSD is an extensible framework and ecosystem for describing, composing, simulating, and collaborating within 3D worlds. NVIDIA Omniverse is a software platform that enables teams to develop OpenUSD-based 3D workflows and applications. It provides the unified environment to visualize and collaborate on digital twins in real time with live connections to Moment Factory’s tools.

Using OpenUSD with Omniverse enables Moment Factory to unify data from their diverse digital content creation (DCC) tools to form a digital twin of a real-world environment. Every member of the team can interact with this digital twin and iterate on their aspect of the project without affecting other elements.

For example, a scenographer can work on a base set and unique scene pieces using the 3D design software Vectorworks. At the same time and in the same scene, an audiovisual (AV) and lighting designer can handle lighting and projectors with X-Agora, Moment Factory’s proprietary live entertainment operating system and virtual projection mapping software.

Simultaneously, artists and designers can render and create eye-catching visuals in the scene using tools like Epic Games Unreal Engine, Blender, and Adobe Photoshop—without affecting layers of the project still in progress.

“USD is unique in that it can be fragmented into smaller pieces that enable people to work on their own unique parts of a project while staying connected,” said Arnaud Grosjean, solution architect and project lead for Moment Factory’s Innovation Team. “Its flexibility and interoperability allows us to create powerful, custom 3D pipelines.”
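
One way to picture this fragmentation is USD's sublayer composition, where a root layer stitches together files owned by different disciplines. The following is a minimal sketch in plain Python that writes such a root layer as USDA text; the file names and the split into three layers are hypothetical and are not taken from Moment Factory's pipeline.

```python
from pathlib import Path

# Hypothetical per-discipline layer files. In USD, entries listed earlier
# in subLayers are stronger than entries listed later.
DISCIPLINE_LAYERS = [
    "./visuals.usda",       # artists and 2D/3D designers
    "./lighting_av.usda",   # AV and lighting designer
    "./scenography.usda",   # scenographer's base set
]


def root_layer_text(sublayers):
    """Return USDA text for a root layer that composes the given sublayers."""
    entries = ",\n".join(f"        @{path}@" for path in sublayers)
    return (
        "#usda 1.0\n"
        "(\n"
        "    subLayers = [\n"
        f"{entries}\n"
        "    ]\n"
        ")\n"
    )


def write_root_layer(directory, sublayers=DISCIPLINE_LAYERS):
    """Write the root layer next to the discipline layers and return its path."""
    path = Path(directory) / "blackbox_root.usda"  # hypothetical file name
    path.write_text(root_layer_text(sublayers), encoding="utf-8")
    return path
```

Because each contributor saves only their own layer file, opening the root layer shows the combined scene while the individual files remain independently editable.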

Digital twins simulate real-world experiences

To simulate immersive events before deploying them in the real world, Moment Factory is developing digital twins of their installations in NVIDIA Omniverse, where the team can visualize and collaborate on them in real time with live connections to DCC tools.

The first digital twin they’ve created is of the Blackbox, an experimentation and prototyping space where the team can preview fragments of immersive experiences before real-world deployment. It is a critical space for nearly every phase of the project lifecycle, from conception and design to integration and operation.

To build the digital twin of the Blackbox, Moment Factory used USD Composer, a fully customizable foundation application built on NVIDIA Omniverse.

The virtual replica of the installation enables the team to run innumerable iterations on the project to test for various factors. They can also better sell concepts for immersive experiences to prospective customers, who can see the show before live production in a virtual environment.

One of the key challenges in building large-scale immersive experiences is reaching a consensus among various stakeholders and managing changes.

“Everyone has their own idea of how a scene should be structured, so we needed a way to align everyone contributing to the project in a unified, dynamic environment,” explained Grosjean. “With the digital twin, potential ideas can be tested and simulated with stakeholders across every core expertise.”

As CAD drafters, AV designers, interactive designers, and others contribute to the digital twin of the Blackbox, artists and 2D/3D designers can render and experiment with beauty shots of the immersive experience in action.

To see the digital twin of the Blackbox in action, join the Omniverse Livestream with Moment Factory on Wednesday, September 13.

Developing Omniverse Connectors and extensions

Moment Factory is continuously building and testing extensions for Omniverse to bring new functionalities and possibilities into their digital twins.

They developed an Omniverse Connector for X-Agora, their proprietary multi-display software for designing, planning, and operating shows. The software now has a working implementation of a Nucleus connection, USD import/export, and an early live mode implementation.

Video projection is a key element of immersive events. The team will often experiment with mapping and projecting visual content onto architectural surfaces, scenic elements, and sometimes even moving objects, transforming static spaces into dynamic and captivating environments.

NDI, which stands for Network Device Interface, is a popular IP video protocol developed by NewTek that allows for efficient live video production and streaming across interconnected devices and systems. In their immersive experiences, Moment Factory typically connects a media system to physical projectors using video cables. With NDI, they can replicate this connection within a virtual venue, effectively simulating the entire experience digitally.

To enable seamless connectivity between the Omniverse RTX Renderer and their creative content, Moment Factory developed an NDI extension for Omniverse. The extension supports more than just video projection and allows the team to simulate LED walls, screens, and pixel fields to mirror their real-world setup in the digital twin.

The extension, which was developed with Omniverse Kit, also enables users to use video feeds as dynamic textures. Developers at Moment Factory used the kit-cv-video-example and kit-dynamic-texture-example to develop the extension.

Anyone can access and use Moment Factory’s Omniverse-NDI-extension on GitHub, and either install it through the Omniverse Launcher or launch it from the command line with:

$ ./link_app.bat --app create

$ ./app/omni.create.bat --/rtx/ecoMode/enabled=false --ext-folder exts --enable mf.ov.ndi

Extensions in Omniverse serve as reusable components or tools that developers can build to accelerate and add new functionalities for 3D workflows. They can be built for simple tasks like randomizing objects or used to enable more complex workflows like visual scripting.

The team also developed an extension called the Omniverse-MPCDI-converter, which converts MPCDI, a VESA standard describing multiprojector rigs, to USD. They are currently testing converter extensions for MVR (My Virtual Rig) and GDTF (General Device Type Format) to import lighting fixtures and rigs into their digital twins.

Even more compelling is a lidar UDP simulator extension, which is being developed to enable sensor simulation in Omniverse and connect synthetic data to lidar-compatible software.
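
To give a sense of what streaming synthetic lidar data over UDP involves, here is a minimal sketch using only the Python standard library. The wire format (a point count followed by x, y, z, and intensity values), the port number, and the function names are all hypothetical; they do not describe Moment Factory's extension or any lidar vendor's protocol.

```python
import socket
import struct

# Hypothetical wire format, not any vendor's protocol:
# a little-endian uint32 point count, then one (x, y, z, intensity)
# group of float32 values per point.
HEADER = struct.Struct("<I")
POINT = struct.Struct("<4f")


def pack_points(points):
    """Serialize (x, y, z, intensity) tuples into one datagram payload."""
    payload = bytearray(HEADER.pack(len(points)))
    for x, y, z, intensity in points:
        payload += POINT.pack(x, y, z, intensity)
    return bytes(payload)


def unpack_points(datagram):
    """Decode a payload produced by pack_points back into tuples."""
    (count,) = HEADER.unpack_from(datagram, 0)
    return [
        POINT.unpack_from(datagram, HEADER.size + i * POINT.size)
        for i in range(count)
    ]


def send_points(points, host="127.0.0.1", port=9000):
    """Send one datagram of synthetic points; host and port are placeholders."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        return sock.sendto(pack_points(points), (host, port))
```

A simulator along these lines would sample points from the virtual scene each frame and call `send_points`, while lidar-compatible software listening on the same port would decode the datagrams according to the format it expects.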

You can use Moment Factory’s NDI and MPCDI extensions today in your workflows. Stay tuned for new extensions coming soon.

To build extensions like Moment Factory’s, get started with all the Omniverse Developer Resources you’ll need, like documentation, tutorials, USD resources, GitHub samples, and more.

Get started with NVIDIA Omniverse by downloading the standard license free, or learn how Omniverse Enterprise can connect your team.

Stay up to date on the platform by subscribing to the newsletter and following NVIDIA Omniverse on Instagram, LinkedIn, Medium, Threads, and Twitter.

For more, check out our forums, Discord server, Twitch, and YouTube channels.