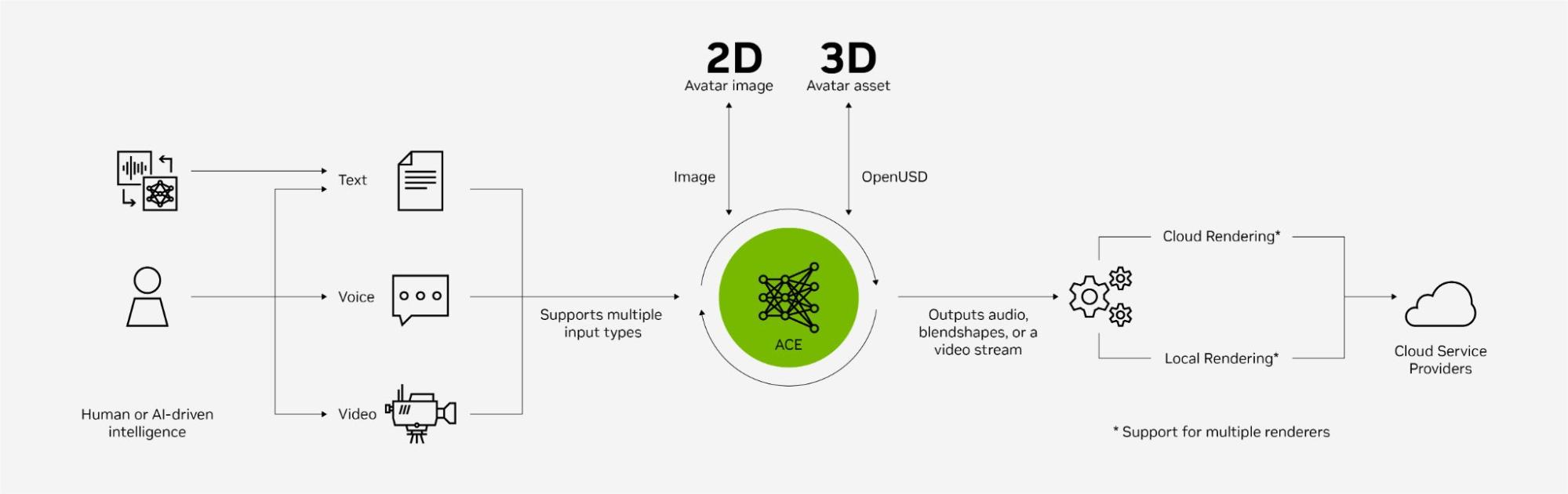

NVIDIA today unveiled major upgrades to the NVIDIA Avatar Cloud Engine (ACE) suite of technologies, bringing enhanced realism and accessibility to AI-powered avatars and digital humans. These latest animation and speech capabilities enable more natural conversations and emotional expressions.

Developers can now easily implement and scale intelligent avatars across applications using new cloud APIs for automatic speech recognition (ASR), text-to-speech (TTS), neural machine translation (NMT), and Audio2Face (A2F).

With these advanced features, available through the early access program, creators can leverage NVIDIA technologies to rapidly build next-generation avatar experiences. It is now easier than ever to build and deploy digital humans anywhere and at scale, using some of the most popular rendering tools such as Unreal Engine 5.

AI-powered animations with emotion

Build more expressive digital humans with the latest ACE AI animation features and microservices, including newly added emotion support in A2F. An Animation Graph microservice for body, head, and eye movements is also now available.

For developers handling rendering production through the cloud or looking to do real-time inference, there is now an easy-to-use microservice. A2F quality improvements, including better lip sync, bring even more realism to digital humans.

Enhanced AI speech capabilities

Languages supported now include Italian, EU Spanish, German, and Mandarin. The overall accuracy of the ASR technology has also been improved. And cloud APIs for ASR, TTS, and NMT simplify access to the latest Speech AI features.

A new Voice Font microservice enables you to customize TTS output, whether you want to apply a custom voice to an intelligent NPC using your own voice or randomize a user’s voice in a video conferencing call. This technology converts a speaker’s distinct pitch and volume into a reference audio while maintaining the same patterns of rhythm and sound.

New tooling and frameworks

ACE Agent is a streamlined dialog manager and system integrator that provides a more seamless end-to-end experience, efficiently orchestrating connections between microservices. Developers also have more control over accurate, adjustable responses through integrations with NVIDIA NeMo Guardrails, NVIDIA SteerLM, and LangChain.

It is now easier to get these tools up and running in your renderer or coding environment of choice. New features include:

- Support for blendshapes within the Avatar configurator to easily integrate with popular renderers, including Unreal Engine.

- A new A2F application for Python users.

- A reference application for developers interested in building virtual assistants for customer service.

Summary

These newly introduced NVIDIA ACE features raise the quality bar for digital human experiences. With enhancements that make building and deployment easier, developers now have the simplified configurations they need to build next-generation digital human applications.

Interested in exploring cutting-edge digital human technologies? Apply for early access.