There is an ongoing demand for servers with the ability to transfer data from the network to a GPU at ever faster speeds. As AI models keep getting bigger, the sheer volume of data needed for training requires techniques such as multinode training to achieve results in a reasonable timeframe. Signal processing for 5G is more sophisticated than in previous generations, and GPUs can help increase the speed at which this happens. Devices such as robots or sensors are also starting to use 5G to communicate with edge servers for AI-based decisions and actions.

Purpose-built AI systems, such as the recently announced NVIDIA DGX H100, are specifically designed from the ground up to support these requirements for data center use cases. Now, another new product can help enterprises that also want faster data transfer and increased edge device performance, but without the need for high-end or custom-built systems.

Announced by NVIDIA CEO Jensen Huang at NVIDIA GTC last week, the NVIDIA H100 CNX is a high-performance package for enterprises. It combines the power of the NVIDIA H100 with the advanced networking capabilities of the NVIDIA ConnectX-7 SmartNIC. Available as a PCIe board, this advanced architecture delivers unprecedented performance for GPU-powered and I/O-intensive workloads for mainstream data center and edge systems.

Design benefits of the H100 CNX

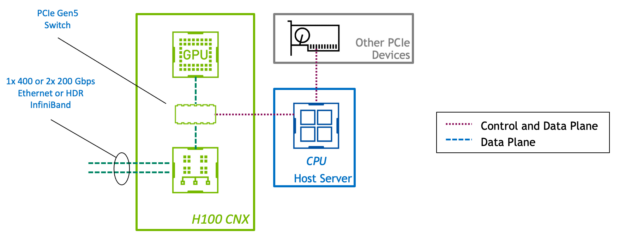

In standard PCIe devices, the control plane and data plane share the same physical connection. However, in the H100 CNX, the GPU and the network adapter connect through a direct PCIe Gen5 channel. This provides a dedicated high-speed path for data transfer between the GPU and the network using GPUDirect RDMA and eliminates bottlenecks of data going through the host.

With the GPU and SmartNIC combined on a single board, customers can leverage servers at PCIe Gen4 or even Gen3. Achieving a level of performance once only possible with high-end or purpose-built systems saves on hardware costs. Having these components on one physical board also improves space and energy efficiency.
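As a rough illustration of why the on-board Gen5 link matters on older hosts, the following sketch compares the approximate one-direction bandwidth of an x16 slot for each PCIe generation with a network port. The per-lane transfer rates are the published PCIe values. The x16 width and the 400 Gb/s port speed are assumptions made for the example, and protocol overhead beyond line encoding is ignored.

```python
# Raw transfer rate per lane in GT/s for each PCIe generation (published spec values).
GT_PER_S = {3: 8.0, 4: 16.0, 5: 32.0}
ENCODING = 128 / 130  # 128b/130b line encoding used by PCIe Gen3, Gen4, and Gen5


def pcie_gbytes_per_s(gen: int, lanes: int = 16) -> float:
    """Approximate one-direction bandwidth in GB/s, ignoring packet overhead."""
    return GT_PER_S[gen] * ENCODING * lanes / 8


def nic_gbytes_per_s(gbits_per_s: float) -> float:
    """Convert a network line rate from Gb/s to GB/s."""
    return gbits_per_s / 8


NIC_GBITS = 400.0  # assumption: a 400 Gb/s network port, not stated in this article

if __name__ == "__main__":
    need = nic_gbytes_per_s(NIC_GBITS)
    for gen in (3, 4, 5):
        have = pcie_gbytes_per_s(gen)
        verdict = "can" if have >= need else "cannot"
        print(f"Gen{gen} x16: {have:.1f} GB/s, {verdict} carry {need:.0f} GB/s")
```

Under these assumptions, an x16 slot offers about 15.8, 31.5, and 63.0 GB/s for Gen3, Gen4, and Gen5. Only the Gen5 figure exceeds the 50 GB/s that a 400 Gb/s port can deliver, which is why keeping network-to-GPU traffic on the card's own Gen5 link avoids the limit of a slower host slot.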

Integrating a GPU and a SmartNIC into a single device creates a balanced architecture by design. In systems with multiple GPUs and NICs, a converged accelerator card enforces a 1:1 ratio of GPU to NIC. This avoids contention on the server’s PCIe bus, so the performance scales linearly with additional devices.

Core acceleration software libraries from NVIDIA such as NCCL and UCX automatically make use of the best-performing path for data transfer to GPUs. Existing accelerated multinode applications can take advantage of the H100 CNX without any modification, so customers can immediately benefit from the high performance and scalability.

H100 CNX use cases

The H100 CNX delivers GPU acceleration along with low-latency and high-speed networking. This is done at lower power, with a smaller footprint and higher performance than two discrete cards. Many use cases can benefit from this combination, but the following are particularly notable.

5G signal processing

5G signal processing with GPUs requires data to move from the network to the GPU as quickly as possible, and having predictable latency is critical too. NVIDIA converged accelerators combined with the NVIDIA Aerial SDK provide the highest-performing platform for running 5G applications. Because data doesn’t go through the host PCIe system, processing latency is greatly reduced. This increased performance is even seen when using commodity servers with slower PCIe systems.

Accelerating edge AI over 5G

NVIDIA AI-on-5G is made up of the NVIDIA EGX enterprise platform, the NVIDIA Aerial SDK for software-defined 5G virtual radio access networks, and enterprise AI frameworks. These frameworks include SDKs such as NVIDIA Isaac and NVIDIA Metropolis. Edge devices such as video cameras, industrial sensors, and robots can use AI and communicate with the server over 5G.

The H100 CNX makes it possible to provide this functionality in a single enterprise server, without deploying costly purpose-built systems. The same accelerator applied to 5G signal processing can be used for edge AI with the NVIDIA Multi-Instance GPU technology. This makes it possible to share a GPU for several different purposes.

Multinode AI training

Multinode training involves data transfer between GPUs on different hosts. In a typical data center network, servers often run into various limits around performance, scale, and density. Most enterprise servers don’t include a PCIe switch, so the CPU becomes a bottleneck for this traffic. Data transfer is bound by the speed of the host PCIe backplane. Although a 1:1 ratio of GPU:NIC is ideal, the number of PCIe lanes and slots in the server can limit the total number of devices.

The design of the H100 CNX alleviates these problems. There is a dedicated path from the network to the GPU for GPUDirect RDMA to operate at near line speeds. The data transfer also occurs at PCIe Gen5 speeds regardless of the host PCIe backplane. Scaling up of GPU power within a host can be done in a balanced manner, since the 1:1 ratio of GPU:NIC is inherently achieved. A server can also be equipped with more acceleration power, since fewer PCIe lanes and device slots are required for converged accelerators than for discrete cards.
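The lane and slot argument can be made concrete with a small back-of-the-envelope sketch. It assumes every card, whether a discrete GPU, a discrete NIC, or a converged accelerator, occupies a full x16 slot, and it uses a hypothetical host with 64 usable PCIe lanes. Neither figure comes from this article, and real servers vary.

```python
LANES_PER_CARD = 16  # assumption: each card occupies a full x16 slot


def lanes_needed(pairs: int, converged: bool) -> int:
    """Host lanes used by `pairs` GPU+NIC pairs at a 1:1 ratio."""
    cards_per_pair = 1 if converged else 2  # one combined card vs. GPU plus NIC
    return pairs * cards_per_pair * LANES_PER_CARD


def max_pairs(lane_budget: int, converged: bool) -> int:
    """Largest number of 1:1 GPU+NIC pairs that fit in the lane budget."""
    cards_per_pair = 1 if converged else 2
    return lane_budget // (cards_per_pair * LANES_PER_CARD)


HOST_LANES = 64  # hypothetical lane budget for the example

if __name__ == "__main__":
    print("discrete pairs: ", max_pairs(HOST_LANES, converged=False))
    print("converged cards:", max_pairs(HOST_LANES, converged=True))
```

With these assumed numbers, the same host fits two discrete GPU and NIC pairs or four converged cards, because each converged card provides both functions from a single slot.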

The NVIDIA H100 CNX is expected to be available for purchase in the second half of this year. If you have a use case that could benefit from this unique and innovative product, contact your favorite system vendor and ask when they plan to offer it with their servers.

Learn more about the NVIDIA H100 CNX.