Editor’s Note: Watch the Analysing Cassandra Data using GPUs workshop.

In the previous post, I talked about our journey to find the best way to load SSTable data onto the GPU for data analytics. We looked at various methods of converting Cassandra data into formats usable by RAPIDS and decided to create sstable-to-arrow, a custom implementation that parses sstables and writes them to arrow format. In this post, we’ll discuss sstable-to-arrow further, its capabilities, limitations, and how to get started with it for your analytics use cases.

Implementation details

Sstable-to-arrow is written in C++17. It uses the Kaitai Struct library to declaratively specify the layout of the SSTable files declaratively. The Kaitai Struct compiler then compiles these declarations into C++ classes, which can be included in the source code to parse the SSTables into data in memory. Then, it takes the data and converts each column in the table into an Arrow Vector. Sstable-to-arrow can then ship the Arrow data to any client, where the data can be turned into a cuDF and be available for GPU analysis.

Current limitations

- SStable-to-arrow can only read one SSTable at a time. To handle multiple SSTables, the user must configure a cuDF for each SSTable and use the GPU to merge them based on last write wins semantics.

- sstable-to-arrowexposes internal cassandra timestamps and tombstone markers so that merging can be done at the cuDF layer.

- Some data, including the names of the partition key and clustering columns, can’t actually be deduced from the SSTable files since they require the schema to be stored in the system tables.

- Cassandra stores data in memtables and commitlogs before flushing to SSTables, so analytics performed using only sstable-to-arrow will potentially be stale / not real time.

- Currently, the parser only supports files written by Cassandra OSS 3.11.

- The system is set up to scan entire SSTables (not read specific partitions). More work will be needed if we ever do predicate pushdown.

- The following cql types are not supported: counter, frozen, and user-defined types.

- varints can only store up to 8 bytes. Attempting to read a table with larger varints will crash.

- The parser can only read tables with up to 64 columns.

- The parser loads each SSTable into memory, so it is currently unable to handle large SSTables that exceed the machine’s memory capacity.

- Decimals are converted into an 8-byte floating point value because neither C++ nor Arrow has native support for arbitrary-precision integers or decimals like the Java BigInteger or BigDecimal classes. This means that operations on decimal columns will use floating point arithmetic, which may be inexact.

- Sets are treated as lists because Arrow has no equivalent of a set.

Roadmap and future developments

The ultimate goal for this project is to have some form of a read_sstable function included in the RAPIDS ecosystem, similar to cudf.read_csv. Performance is also a continuous area of development, and I’m currently looking into ways in which reading the SSTables can be further parallelized to make the most use of the GPU. I’m also working on solving or improving the limitations addressed preceding, especially broadening support for different CQL types and enabling the program to handle large datasets.

How to Use sstable-to-arrow

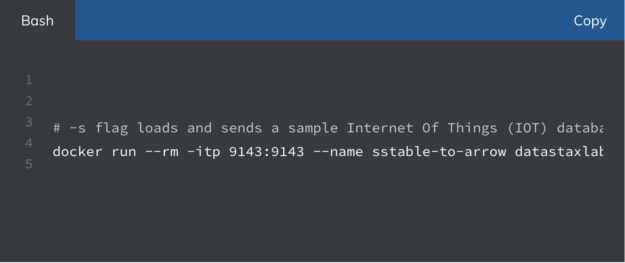

You can run sstable-to-arrow using Docker.

This will listen for a connection on port 9143. It expects the client to send a message first, and then it will send data in the following format:

- The number of Arrow Tables being transferred as an 8-byte big-endian unsigned integer

- For each table:

- Its size in bytes as an 8-byte big-endian unsigned integer.

- The contents of the table in Arrow IPC Stream Format.

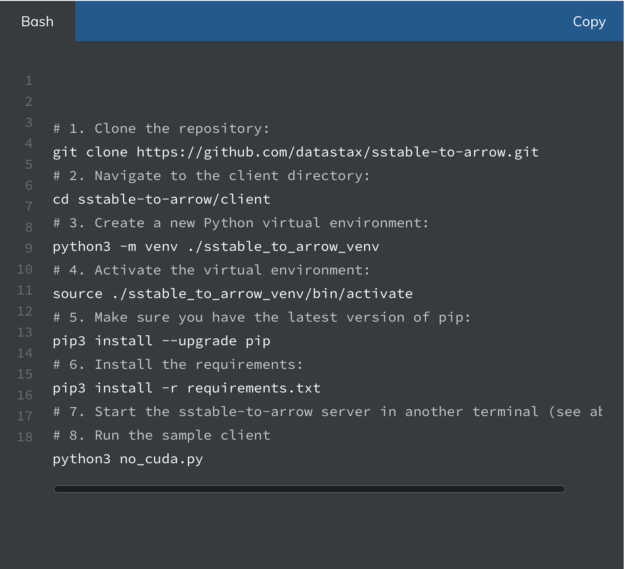

You can then fetch the data from your port using any client. To get started with the sample Python client, follow these steps if your system does not support CUDA:

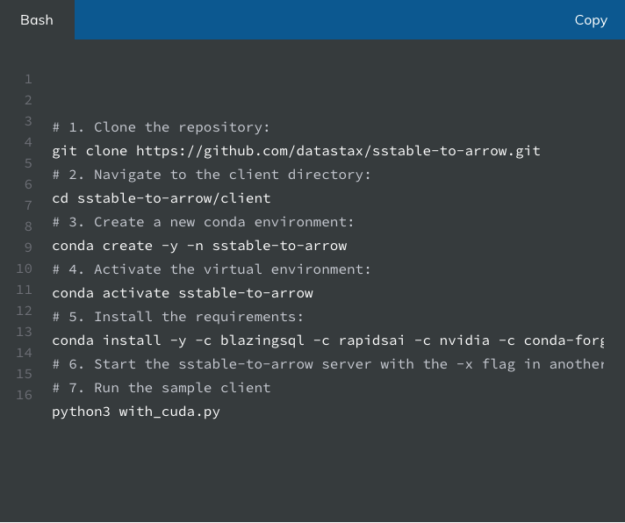

If your system does support CUDA, we recommend creating a conda environment with the following commands. You will also want to pass the -x flag when starting the sstable-to-arrow server preceding to turn all non-cudf-supported types into hex strings.

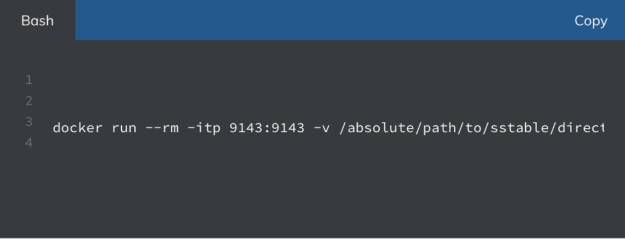

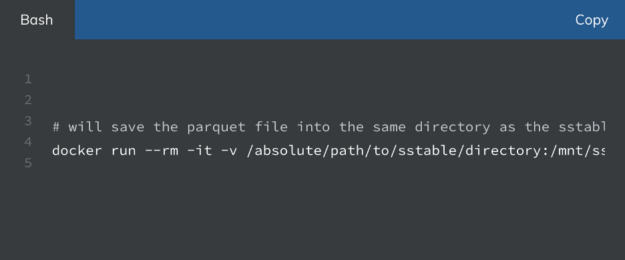

To experiment with other datasets, you will want raw SSTable files on your machine. You can download sample IOT data at this Google Drive folder. You can also generate IOT data using the generate-data script in the repository, or you can manually create a table using CQL and the Cassandra Docker image (see the Cassandra quickstart for more info). Make sure to use Docker volumes to share the SSTable files with the container:

You can also pass the -h flag to get information about other options. If you would like to build the project from the source, follow the steps in the GitHub repository.

SSTable to Parquet

Sstable-to-arrow is also able to save the SSTable data as a Parquet file, a common format for storing columnar data. Again, it does not yet support deduplication, so it will simply output the sstable and all metadata to the given Parquet file.

You can run this by passing the -p flag followed by the path where you would like to store the Parquet file:

Conclusion

Sstable-to-arrow is an early but promising approach for leveraging Cassandra data for GPU-based analytics. The project is available on GitHub and accessible through Docker Hub as an alpha release.

We will be holding a free online workshop, which will go deeper into this project with hands-on examples in mid August! You can sign up here if you’re interested.

If you’re interested in trying out sstable-to-arrow, look at the second blog post in this two-part series and feel free to reach out to seb@datastax.com with any feedback or questions.