Manually assessing damage in the areas most affected by a disaster is challenging and time-consuming. To give rescue workers and aid organizations faster, more accurate data, researchers from Facebook and CrowdAI developed a deep learning-based algorithm that can automatically estimate the level of damage an area has suffered.

“The goal of this research is to allow rescue workers to quickly identify where aid is needed most, without relying on manually annotated, disaster-specific data sets,” the researchers wrote in a blog post. “This new method has the potential to produce more accurate information in far less time than current manual methods.”

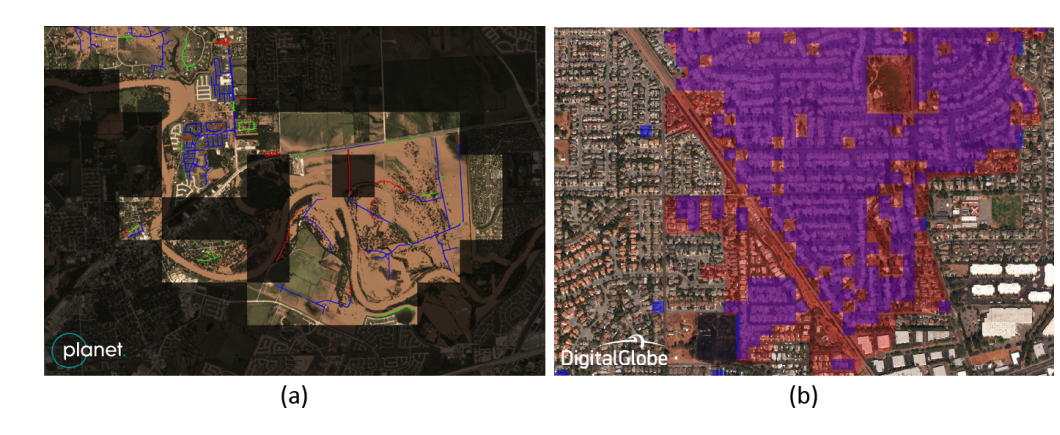

For this work, the team used NVIDIA Tesla P100 GPUs and a cuDNN-accelerated deep learning framework to train a convolutional neural network on open source data from DigitalGlobe and Planet Labs to detect human-made features in satellite imagery. Unlike existing approaches, which apply convolutional neural networks to manually annotated, disaster-specific datasets, this method relies only on readily available datasets of common human-made features in satellite imagery, such as roads and buildings, the researchers explained.

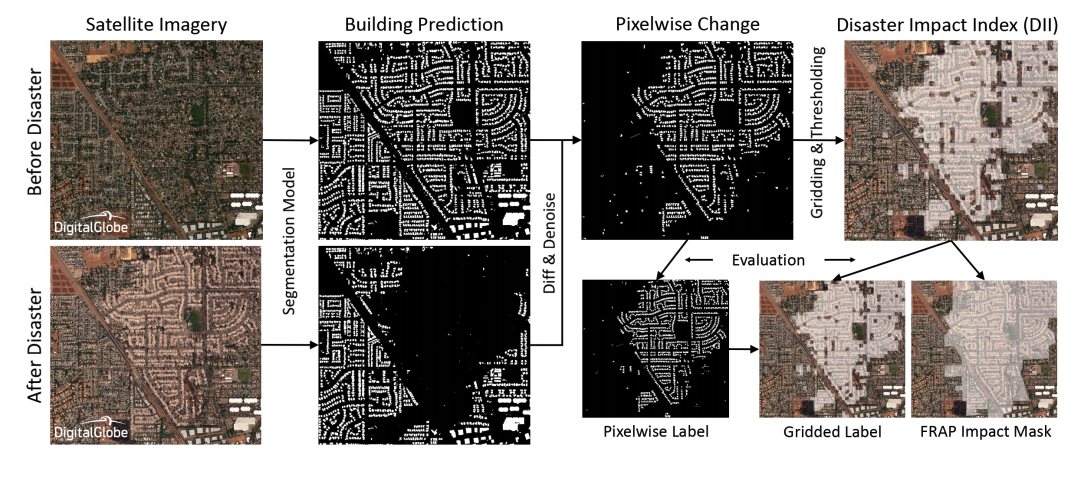

“By computing relative change between multiple snapshots of data captured before and after a disaster, we can identify areas of maximal damage and prioritize disaster response efforts,” the researchers stated in their paper.
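The idea of relative change can be illustrated with a minimal sketch. It is not the researchers' implementation: it assumes the network's output has already been reduced to binary masks (1 for a detected road or building pixel, 0 otherwise), and the function names, the grid-cell size, and the toy data are all hypothetical. For each grid cell, it computes the fraction of features detected before the disaster that are no longer detected afterwards.

```python
from typing import List, Tuple

# Assumption: a binary segmentation mask, 1 = detected feature pixel
# (road or building), 0 = background. Both masks cover the same area.
Mask = List[List[int]]


def relative_change(before: Mask, after: Mask, cell: int) -> List[List[float]]:
    """Per grid cell, the fraction of pre-disaster feature pixels missing afterwards."""
    rows, cols = len(before), len(before[0])
    grid = []
    for r0 in range(0, rows, cell):
        grid_row = []
        for c0 in range(0, cols, cell):
            pre = lost = 0
            for r in range(r0, min(r0 + cell, rows)):
                for c in range(c0, min(c0 + cell, cols)):
                    if before[r][c]:
                        pre += 1
                        if not after[r][c]:
                            lost += 1
            # Cells with no features before the disaster carry no signal.
            grid_row.append(lost / pre if pre else 0.0)
        grid.append(grid_row)
    return grid


def most_damaged_cell(change: List[List[float]]) -> Tuple[int, int]:
    """Return (row, col) of the grid cell with the largest relative change."""
    cells = [(i, j) for i, row in enumerate(change) for j in range(len(row))]
    return max(cells, key=lambda ij: change[ij[0]][ij[1]])


# Toy 4x4 masks: two 2x2 blocks of features before, most of one block gone after.
before = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
after = [
    [1, 1, 0, 0],
    [1, 0, 0, 0],
    [0, 0, 0, 0],
    [0, 0, 0, 1],
]

change = relative_change(before, after, cell=2)
print(change)                     # [[0.25, 0.0], [0.0, 0.75]]
print(most_damaged_cell(change))  # (1, 1)
```

In this toy case the lower-right cell loses three of its four feature pixels, so it would be ranked first for response. A real pipeline would also need to handle image alignment, cloud cover, and detection errors, none of which are modeled here.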

To validate the results of the neural network, the team tested their system on images of two natural disasters: Hurricane Harvey in Texas and the Santa Rosa fire in Northern California.

Another major contribution of the research is a new metric, the Disaster Impact Index, which is designed to help better understand the impact of a disaster.

“Quickly and accurately identifying which areas are most affected allows aid organizations to deliver supplies and aid where they are needed most,” the team wrote in a blog post. “In the future, this could be extended to quantify disaster impact on natural features like farmlands and forests, and to assess damage from other disasters, such as earthquakes.”

A paper highlighting the work was recently presented at the thirty-second Conference on Neural Information Processing Systems (NeurIPS) in Montreal, Canada. It builds on previous work presented by Jigar Doshi at CVPR earlier this year.


AI Helps Detect Disaster Damage From Satellite Imagery

Dec 12, 2018


AI-Generated Summary

- Researchers from Facebook and CrowdAI developed a deep learning-based algorithm to automatically estimate damage levels in disaster-affected areas.

- The algorithm uses a convolutional neural network, trained on NVIDIA Tesla P100 GPUs with open-source data from DigitalGlobe and Planet Labs, to detect human-made features in satellite imagery.

- The team introduced a new metric called the Disaster Impact Index to help understand the impact of a disaster and achieved accuracy rates of 88.8 percent and 81.1 percent in identifying damaged roads and buildings in test cases.
